Towards Dynamic Master Determination in MEMS-based Micro-Scanning LiDAR Systems

Philipp Stelzer¹, Andreas Strasser¹, Christian Steger¹ and Norbert Druml²

Abstract: Automated driving has been expected for decades. The first systems, which at least partially automate the vehicle, have been installed in higher priced vehicles for several years. In the near future, however, many more competencies are to be transferred to the systems and the vehicle will thus be fully automated. Such systems receive their data from various sensor systems such as Light Detection And Ranging (LiDAR). Therefore, it is essential that this information is transmitted correctly and reliably to the environmental perception system. In order to ensure this, redundancy of sensors is a key factor in addition to diversity. For example, multiple, independently controlled MEMS-based LiDAR systems can be operated synchronously. This requires the selection of a Master system which can be reliably followed by all Slave systems. In this publication, an architecture for MEMS-based Micro-Scanning LiDAR systems is proposed to determine the appropriate system as Master. The architecture has been implemented in an FPGA prototyping platform to demonstrate its feasibility and evaluate its performance.

I. INTRODUCTION

Until now, Advanced Driver-Assistance Systems (ADAS) have taken over tasks such as lane keeping, but the driver had to be ready to intervene at any time [1]. For example, in Article 8 of the Vienna Convention on Road Traffic, some countries have agreed that the driver must have a continuous opportunity to gain control of the vehicle [2]. There is also ongoing discussion about how, when, for how long, and where a human can intervene in the systems of automated vehicles. Terken et al. [3], for example, present various use cases in which shared control can occur. Flemisch et al. [4] made similar considerations. However, these approaches are only possible up to SAE level 3, since beyond that the driver is only a passenger without responsibilities. The SAE levels are defined in SAE International Standard J3016 [5]. At the moment, this is still a vision for the future, but in a few years, highly automated vehicles will appear on the roads. Consequently, several projects have been addressing this issue for some time, developing concepts and systems to enable vehicles of SAE levels 4 and 5. In the PRYSTINE project, for instance, camera, robust Radio Detection And Ranging (RADAR), and Light Detection And Ranging (LiDAR) sensors are deployed to achieve Fail-operational Urban Surround perceptION (FUSION) [6]. Figure 1 illustrates PRYSTINE's concept of the intended FUSION. How such fail-operational architectures for environmental perception systems can be designed is also described by Kohn et al. [7]. Fail-operational behaviour is mandatory for highly automated vehicles of SAE levels 4 and 5, as also argued by Vermesan et al. [8] and Macher et al. [9]. In the absence of the driver at SAE levels 4 and 5, the vehicle requires multiple systems responsible for the execution of steering, acceleration/deceleration, and monitoring of the driving environment. In addition, from SAE level 4 onwards, the fall-back performance of the dynamic driving task is no longer provided by the driver but by the system. Such fusion systems combine data from different sensors and thus provide reliable information about the driving environment. Kocić et al. [10] and De Silva et al. [11] discussed the importance of such sensors and data fusion for automated vehicles. Therefore, affordable automotive-qualified RADAR and LiDAR components that can be deployed in large vehicle fleets for environmental detection are important. An automotive-qualified MEMS-based LiDAR has been presented by Yoo et al. [12], which can be a key to affordable LiDAR sensor systems in highly automated vehicles. But to achieve the largest possible Field-of-View (FoV), multiple MEMS-based LiDAR systems must be synchronised. For this purpose, a Master system has to be selected whose MEMS mirror has appropriate physical properties so that all Slave systems can reliably follow it. If the LiDAR systems were operated asynchronously, so-called ghost objects could occur, as described by Baumgart et al. [13]. Therefore, our publication addresses the determination of an appropriate Master system for the synchronisation of multiple independently controlled MEMS mirrors.

¹ Institute of Technical Informatics, Graz University of Technology, 8010 Graz, Austria {stelzer, strasser, steger}@tugraz.at

² ATV Sense and Control, Infineon Technologies Austria AG, 8020 Graz, Austria {norbert.druml}@infineon.com

Fig. 1. PRYSTINE’s concept view of a Fail-operational Urban Surround perceptION (FUSION) [6].

With our paper contribution we:

• achieve a determination of an appropriate Master system,
• prevent system crashes caused by selecting an ineligible Master system, and
• enhance safety by enabling a safe and reliable synchronisation of MEMS-based LiDAR systems.

The remainder of the paper is structured as follows. Section II gives an overview of related work. Section III describes the method in detail, and Section IV provides the results together with a short discussion. Section V summarises the findings and concludes the paper.

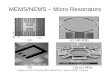

II. RELATED WORK

There are already LiDAR systems on the market that can perceive the driving environment and still have a very large FoV. One example is the HDL-64E system from Velodyne [14], [15]. The major drawbacks of such systems, however, are that they either perform poorly or are bulky, expensive, and energy hungry [16]. Additionally, MEMS-based LiDAR systems are available. A distinction is made between LiDAR systems with 1D MEMS mirrors and those with 2D MEMS mirrors. Wang et al. [16] describe their advantages and disadvantages. It is also evident from their review that 1D scanning MEMS mirrors are the more mature technology and typically have wider scanning angles, larger apertures and higher resonant frequencies. Therefore, for automotive applications in highly automated vehicles, a MEMS-based LiDAR system with a 1D MEMS mirror is arguably the better choice. Accordingly, Druml et al. [17] have introduced an automotive-qualified, low-cost, long-range and robust 1D MEMS Micro-Scanning LiDAR system. However, Druml et al.’s system has either a narrow, long-range FoV or a wide, short-range FoV, so a way has to be found to achieve both.

A. 1D MEMS Micro-Scanning LiDAR

In this Subsection, the MEMS-based LiDAR system approach by Druml et al. is presented. With this approach, a vertical laser beam line is typically projected into the scenery. This laser beam line is then moved through the scenery with the help of an oscillating 1D MEMS mirror. This method is called horizontal scanning and its principle is depicted in Figure 2. What determines the resulting FoV is explained later. First, the architecture of Druml et al., shown in Figure 3, is explained in general. This MEMS-based LiDAR system basically consists of an Emitter path, a Receiver path, and a System Safety Controller (AURIX) [18] that coordinates the Emitter and Receiver paths. As the mirror frequency is indirectly responsible for the size and depth of the FoV, we have focused on the Emitter path, especially the MEMS Driver ASIC, of Druml et al.’s LiDAR system. The MEMS Driver actuates, senses and controls the mirror. A MEMS device can be operated either in open-loop or closed-loop, according to Borovic et al. [19]. The mirror is actuated by switching a High Voltage (HV) on and off. When the HV is switched on, the mirror is pulled towards the zero position. Once the HV is switched off, the mirror oscillates towards its maximum deflection. Stelzer et al. [20] state that the maximum mirror deflection depends on the actuation frequency. A low mirror oscillation frequency results in a narrow but long-range FoV, and a higher mirror oscillation frequency results in a wider but short-range FoV. Furthermore, the mirror has to be operated in a certain frequency range to achieve a corresponding maximum mirror deflection. This is conditioned by the implemented electrostatic comb drive approach [21] and the associated mechanical MEMS mirror characteristics. Figure 4 shows the response curve of the MEMS mirror, which behaves as a nonlinear oscillator. The two threshold frequencies f_jump and f_fb can also differ from sample to sample due to process variations.

Fig. 2. Functional principle of a 1D Micro-Scanning LiDAR [17].

Fig. 3. System concept of a 1D MEMS-based automotive qualified LiDAR system by Druml et al. [17]. (Blocks: System Safety Controller (AURIX); Emitter path with Laser Illumination, MEMS Mirror and MEMS Driver ASIC (actuation, sensing); Receiver path with Photo Diodes and Receiver Circuits; FPGA / Dedicated LiDAR Hardware Accelerators delivering point cloud data.)

Fig. 4. Druml et al.’s MEMS mirror response curve [17].

Fig. 5. Block diagram of a MEMS-based LiDAR environment perception system with an additional Master Management block. (Blocks: Master Management System with Fallback Frequency Register, Fallback Frequency Comparison Block and Master Determination Block; MEMS Systems 1 to n, each with HV On/Off, Sensing and frequency f_1 to f_n, connected via MMSC_1 to MMSC_n and FBF_1 to FBF_n.)
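To make the role of the two threshold frequencies more concrete, the following is a minimal toy model of the hysteresis, not the authors' mirror model. It assumes only what the paper describes in Section III: reducing the actuation frequency to the jump frequency moves the mirror onto the upper resonance curve, and raising it to the fallback frequency drops it back to the lower curve. The class name, method name and threshold values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MirrorBranchModel:
    """Toy hysteresis model: tracks which resonance curve the mirror is on."""

    f_jump_hz: float  # sweeping down to this frequency moves the mirror to the upper curve
    f_fb_hz: float    # sweeping up to this frequency drops it back to the lower curve
    on_upper_curve: bool = False

    def actuate(self, frequency_hz: float) -> bool:
        """Apply an actuation frequency and return True while on the upper curve."""
        if not self.on_upper_curve and frequency_hz <= self.f_jump_hz:
            self.on_upper_curve = True
        elif self.on_upper_curve and frequency_hz >= self.f_fb_hz:
            self.on_upper_curve = False
        return self.on_upper_curve


# Hypothetical thresholds, chosen only for illustration.
mirror = MirrorBranchModel(f_jump_hz=4400.0, f_fb_hz=4900.0)
states = [mirror.actuate(f) for f in (4600.0, 4400.0, 4600.0, 4900.0)]
# The same 4600 Hz input yields different states depending on the sweep history.
print(states)  # [False, True, True, False]
```

The point of the sketch is only that the mirror state depends on the sweep history, which is why both thresholds have to be found by an ordered sweep.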

B. Synchronisation of MEMS-based LiDAR Systems

In order to extend the FoV of an environment perception system and to enhance safety, additional sensors can be integrated into the system. Figure 6 shows symbolically how the field of view is extended by two additional MEMS-based LiDAR systems. However, in the case of MEMS-based LiDAR systems, the individual sensors must be synchronised to avoid possible interference in the measurements. How these independently controlled MEMS mirrors can be synchronised is presented by Stelzer et al. [22] and Strasser et al. [23]. Since each mirror has a different fallback frequency caused by process variations, not every mirror is equally well suited as a Master mirror. The Master system should be selected depending on the characteristics of the different MEMS mirrors. This selection also determines the maximum possible FoV of the entire environment perception system.

Fig. 6. Icon of multiple independently controlled and synchronised MEMS-based LiDAR systems [22].

From this, the following research questions emerge:

• Is it possible that, despite the different characteristics of MEMS mirrors, the system with the most appropriate MEMS mirror is always made the Master?
• What happens if the Master crashes for some reason and becomes unavailable to the environment perception system?
• Is it necessary to check more often whether the most appropriate MEMS mirror is the Master mirror, or is the Master determined only once?

III. DYNAMIC MASTER DETERMINATION IN MEMS-BASED LIDAR SYSTEMS

In this Section, we introduce the novel dynamic Master determination architecture and describe the process of determining the Master system. Figure 5 shows the Master Management System (MMS) in general. It shows separate MEMS-based LiDAR systems that are synchronised together to form the LiDAR environment perception system. In principle, n systems can be synchronised with each other and thus a larger FoV can be obtained. For the synchronisation, however, a Master is needed, which is determined and assigned by the MMS. The MMS is implemented on an external device, for example the System Safety Controller, and communicates with the individual LiDAR systems. The fallback frequencies of the individual MEMS mirrors are communicated to the MMS via the Fallback Frequency (FBF_m) connections, where m ranges from 1 to n. These are stored in a register of the MMS and subsequently compared with each other. The system with the MEMS mirror that has the lowest fallback frequency is then designated as the Master. This ensures that all Slave mirrors can follow the Master mirror. If a Master were chosen that had a higher fallback frequency than at least one Slave, that Slave could be operated too close to its own fallback frequency, and there would be a risk that at least one Slave system would permanently crash. In the worst case, this can have safety-relevant consequences, such as an accident due to a lack of environment perception. Via the Master Management System Communication (MMSC_m) connections, the individual MEMS-based LiDAR systems are informed whether they are the Master or not. Those systems that are Slaves then switch to Slave mode. How the fallback frequency of the individual MEMS mirrors is determined is explained in the following Subsection.

Fig. 7. Concept of a MEMS-based LiDAR system with an integrated fallback frequency detection block. (Blocks: MEMS System m with HV On/Off, Sensing and frequency f_m; Fallback Frequency Detection Block containing a Frequency Adaption Block and a Fallback Frequency Determination Block; signals FBFDT, FBFT, MMSC_m and FBF_m.)
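The selection rule described above can be sketched in a few lines. This is an illustration under stated assumptions rather than the authors' implementation: the function names, the tie-break by lower system id, and the example frequencies are all hypothetical.

```python
def select_master(fallback_hz: dict[int, float]) -> int:
    """Return the id of the system with the lowest fallback frequency."""
    if not fallback_hz:
        raise ValueError("no fallback frequencies reported")
    # Ties are broken by the lower system id (an assumption, not from the paper).
    return min(fallback_hz, key=lambda m: (fallback_hz[m], m))


def slaves_at_risk(master: int, fallback_hz: dict[int, float]) -> list[int]:
    """List the Slaves whose fallback frequency lies below the Master's."""
    limit = fallback_hz[master]
    return sorted(m for m, f in fallback_hz.items() if m != master and f < limit)


# Illustrative values in Hz, not measurements.
reported = {1: 4900.0, 2: 4850.0, 3: 4720.0}
master = select_master(reported)
print(master, slaves_at_risk(master, reported))  # 3 []
print(slaves_at_risk(1, reported))               # [2, 3]
```

With the lowest fallback frequency as Master, no Slave has a fallback frequency below the Master's. Choosing system 1 instead would leave systems 2 and 3 at risk in the sense described in the text.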

A. Fallback Frequency Determination in MEMS-based LiDAR Systems

In this Subsection, it is explained how the fallback frequencies of the individual MEMS-based LiDAR systems are determined. To determine the fallback frequency of an individual MEMS system, an additional module must be integrated into the existing system, as shown in Figure 7. This module contains a frequency adaption block, which first reduces the frequency until the jump frequency is reached and then increases it until the fallback frequency is reached. However, this process only starts once the Fallback Frequency Determination Trigger (FBFDT) is set. This trigger can be set by the System Safety Controller, by the system itself at system start-up, or by another external device on which the MMS is implemented. When the fallback frequency is reached, this value is written into a register via Fallback Frequency Threshold (FBFT) and subsequently transmitted to the MMS. To determine the jump frequency and fallback frequency, an analog-to-digital converter (ADC) value of the Driver is used. By means of this value, the significant changes that occur at these two frequencies can be detected. More about this follows in Section IV.
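The two-phase sweep described above might look roughly like the sketch below. It is written against two hypothetical callbacks, `set_frequency` and `read_dl2`, that stand in for the Driver interface; the step size, sweep limits and detection threshold are assumed parameters. The detection rule (a sharp drop in the ADC value at the jump, a sharp rise at the fallback) is an assumption based on the statement in Section IV that a higher ADC value corresponds to a lower mirror deflection.

```python
from typing import Callable


def find_fallback_frequency(
    set_frequency: Callable[[float], None],  # hypothetical: applies an actuation frequency
    read_dl2: Callable[[], float],           # hypothetical: returns the Driver ADC value
    start_hz: float,
    step_hz: float,
    min_hz: float,
    max_hz: float,
    dl2_threshold: float,
) -> float:
    """Sweep down to the jump, then up to the fallback; return the fallback frequency."""
    frequency = start_hz
    set_frequency(frequency)
    previous = read_dl2()

    # Phase 1: reduce the frequency until the ADC value drops sharply (jump).
    while True:
        frequency -= step_hz
        if frequency < min_hz:
            raise RuntimeError("jump frequency not found within sweep range")
        set_frequency(frequency)
        current = read_dl2()
        if previous - current > dl2_threshold:
            break
        previous = current
    previous = current

    # Phase 2: raise the frequency until the ADC value rises sharply (fallback).
    while True:
        frequency += step_hz
        if frequency > max_hz:
            raise RuntimeError("fallback frequency not found within sweep range")
        set_frequency(frequency)
        current = read_dl2()
        if current - previous > dl2_threshold:
            return frequency
        previous = current
```

The sweep limits are included so that a mirror that never jumps or never falls back ends the search with an error instead of looping indefinitely. In a real Driver, the returned value would presumably be latched into the FBFT register.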

B. Dynamic Master Determination Procedure

Next, the dynamic Master determination procedure is described in detail. The procedure is shown in Figure 8 and consists of the following steps:

1) System Start-Up
During system start-up, the FBFDT trigger is set so that the current fallback frequency of the MEMS system is obtained right at the beginning. During operation, it is not necessary to check the fallback frequency again; the next check takes place at the next system start.

2) Mirror Frequency Reduction
At the beginning, the frequency is reduced step by step in open-loop mode. This is continued until the jump frequency of the mirror is reached. Reaching it is detected by means of an ADC value of the Driver, as explained in Section IV.

3) Mirror Frequency Raise
After that, the frequency is increased step by step until the fallback frequency of the mirror is reached. At this point, the mirror falls back to the lower resonance curve. This is also detected by means of the ADC value of the Driver.

4) Set Fallback Frequency
This value is then written to a register and forwarded to the MMS via MMSC. At the same time, the mirror is brought back to the upper resonance curve and put into closed-loop mode. This puts the mirror into operation mode and makes it ready to become Master or to synchronise to the selected Master.

5) Get Fallback Frequency from MEMS Systems
After receiving the fallback frequencies from the MEMS systems, the MMS writes them to a register. The MMS remains in the waiting state until all connected MEMS systems have transmitted their fallback frequencies.

6) Comparison of Fallback Frequencies
Subsequently, the stored fallback frequencies are compared with each other and sorted by means of a common sorting algorithm. A priority list is created within the MMS, and the Master system is selected based on these priorities. The system with the mirror that has the lowest fallback frequency is given the highest priority. The other priorities are assigned analogously.

7) Set Master System
The priority list is then consulted to decide which system becomes the Master. This is also communicated to the MEMS systems via MMSC. As long as the system with the highest priority is available, it is the Master system. However, if this system is not available for a certain period of time, the system with the next-highest priority automatically takes over as Master.

Fig. 8. Process flow of the Dynamic Master Determination Procedure for MEMS-based LiDAR environment perception systems. (Flow in MEMS System m: system start-up, trigger frequency adaption, reduce mirror frequency until the jump frequency is reached, raise mirror frequency until the fallback frequency is reached, set fallback frequency in register. Flow in the Master Management System: get fallback frequency from MEMS System m, store it in a register, compare fallback frequencies, and set MEMS System m as Master or as Slave.)

Fig. 9. Measurement with the first MEMS mirror sample - fallback frequency at 4899 Hz. (Panels: DL2 average [1] and Frequency [Hz], each over Mirror Half Periods [1], 0 to 2 × 10^4.)

Fig. 10. Measurement with the second MEMS mirror sample - fallback frequency at 4850 Hz. (Panels: DL2 average [1] and Frequency [Hz], each over Mirror Half Periods [1], 0 to 2 × 10^4.)
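The MMS side of the procedure (steps 5 to 7) can be illustrated with a small sketch. It is not the FPGA implementation. The class and method names are hypothetical, Python's built-in `sorted` stands in for the unspecified "common sorting algorithm", and availability is simply passed in by the caller instead of being derived from a timeout.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MasterManagementSystem:
    """Sketch of steps 5 to 7: collect, sort, assign, and fail over."""

    expected_systems: set[int]
    register: dict[int, float] = field(default_factory=dict)

    def report(self, system_id: int, fallback_hz: float) -> None:
        """Step 5: store a fallback frequency received from MEMS system m."""
        if system_id not in self.expected_systems:
            raise KeyError(f"unknown MEMS system {system_id}")
        self.register[system_id] = fallback_hz

    def priority_list(self) -> Optional[list[int]]:
        """Step 6: ids sorted by ascending fallback frequency, or None while waiting."""
        if set(self.register) != self.expected_systems:
            return None  # still waiting for at least one system
        return sorted(self.register, key=lambda m: (self.register[m], m))

    def master(self, available: set[int]) -> Optional[int]:
        """Step 7: first available system in priority order, or None."""
        priorities = self.priority_list()
        if priorities is None:
            return None
        return next((m for m in priorities if m in available), None)


# Illustrative values in Hz, not measurements.
mms = MasterManagementSystem(expected_systems={1, 2, 3})
mms.report(1, 4900.0)
mms.report(2, 4850.0)
print(mms.master(available={1, 2, 3}))  # None: system 3 has not reported yet
mms.report(3, 4720.0)
print(mms.priority_list())              # [3, 2, 1]
print(mms.master(available={1, 2}))     # 2: system 3 is unavailable
```

Keeping the full priority list, not just the single best system, is what allows the takeover in step 7 without repeating the frequency sweep.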

With this new dynamic Master determination procedure, it is now possible to create an enlarged field of view with multiple MEMS-based LiDAR systems and an appropriate Master. This significantly increases the safety and reliability of the entire environment perception system, as there is no longer a risk of selecting an inappropriate Master. It is thus possible to obtain the maximum possible FoV with the given components without risking a system crash.

IV. RESULTS

In this Section, we provide the test results of our novel dynamic Master determination procedure, which has been introduced in Section III. Our architecture was implemented in the FPGA Prototyping Platform by Yoo et al. [12]. This FPGA Prototyping Platform consists of a MEMS Driver Digital Part (FPGA), a MEMS Driver Discrete Analog Part, and the 1D MEMS mirror. For our test setup, we have used four different MEMS mirrors. The procedure was performed for all four MEMS mirror samples (Figures 9 to 12). It can be clearly seen in the measurements at which point in time the jump frequency or fallback frequency of the respective mirror is reached. Each figure contains a plot of the actuation frequency and a plot of the Device Layer 2 (DL2) average value. The frequency plot shows the frequency at which the Driver switches the HV on and off. The DL2 average value is an ADC value of the Driver and reflects the currents at the MEMS mirror combs. The higher the DL2 average value, the lower the mechanical deflection of the mirror. Between the jump to the upper resonance curve and the fallback to the lower resonance curve, the deflection is clearly much larger than on the lower resonance curve. The four MEMS mirrors also clearly have different fallback frequencies. The fallback frequencies of samples 1 and 4 are within a few Hz of each other, as can also be seen in Table I. The fallback frequency of mirror number 2 is approximately 50 Hz lower than the fallback frequencies of mirrors 1 and 4. The fallback frequency of mirror number 3 is roughly 180 Hz lower than those of mirrors 1 and 4. Thus, mirror number 3 would be the Master mirror among these four mirrors. If this mirror failed, mirror number 2 would follow as Master.

Fig. 11. Measurement with the third MEMS mirror sample - fallback frequency at 4719 Hz. (Panels: DL2 average [1] and Frequency [Hz], each over Mirror Half Periods [1], 0 to 2 × 10^4.)

Fig. 12. Measurement with the fourth MEMS mirror sample - fallback frequency at 4902 Hz. (Panels: DL2 average [1] and Frequency [Hz], each over Mirror Half Periods [1], 0 to 2 × 10^4.)

TABLE I
FALLBACK FREQUENCIES OF MEMS MIRROR SAMPLES

| Sample | Fallback Frequency [Hz] | Determined Master Order |
|---|---|---|
| 1 | 4899 | 3rd |
| 2 | 4850 | 2nd |
| 3 | 4719 | 1st |
| 4 | 4902 | 4th |
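As a quick check of the arithmetic, the Master order in Table I can be reproduced by sorting the four measured fallback frequencies in ascending order. The variable names below are illustrative; the values are those reported in Table I.

```python
# Fallback frequencies in Hz, copied from Table I.
fallback_hz = {1: 4899, 2: 4850, 3: 4719, 4: 4902}

# Ascending fallback frequency gives the Master order.
master_order = sorted(fallback_hz, key=fallback_hz.get)
print(master_order)  # [3, 2, 1, 4]

# Gap between each sample and the selected Master (sample 3), in Hz.
master = master_order[0]
gaps = {m: fallback_hz[m] - fallback_hz[master] for m in master_order}
print(gaps)  # {3: 0, 2: 131, 1: 180, 4: 183}
```

The gaps show that sample 2, the first fallback candidate, lies 131 Hz above the Master, while samples 1 and 4 differ from each other by only 3 Hz.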

V. CONCLUSION

In this paper, we have introduced a novel Master determination procedure for synchronised MEMS-based LiDAR systems. With this new procedure, we are able to select an appropriate Master and thus obtain the maximum possible FoV with the given components. We can also answer the first research question, whether it is possible to always select the most appropriate Master despite different mirror characteristics, with yes. Since the mirror with the lowest fallback frequency is always the most appropriate one, we always choose the most appropriate Master. This should also prevent a crash of Slave systems caused by operating their mirrors too close to their fallback frequencies. If the Master system crashes unexpectedly, the Slave systems do not crash as well; instead, the next most suitable Slave becomes the Master. The FoV is then more limited, but perception of the environment is still possible. We can also clearly answer the last research question. It is not necessary to check more often than at system start-up which mirror is most appropriate as Master. At start-up, a check is performed and a priority list is created. If the Master fails, it is known which system will replace it. Changes in fallback frequencies due to damage or ageing are noticed at the next system start-up. Usually, a system start is performed at least once a day, because no vehicle and its components are in continuous operation. Finally, our presented procedure increases the safety and reliability of a synchronised LiDAR system and related applications. It is another step towards highly automated and autonomous vehicles.

ACKNOWLEDGMENT

The authors would like to thank all national funding authorities and the ECSEL Joint Undertaking, which funded the PRYSTINE project under the grant agreement number 783190.

PRYSTINE is funded by the Austrian Federal Ministry of Transport, Innovation and Technology (BMVIT) under the program ICT of the Future between May 2018 and April 2021 (grant number 865310). More information: https://iktderzukunft.at/en/.

REFERENCES

[1] C. Brünglinghaus, “Wie das Recht automatisiertes Fahren hemmt,” ATZ - Automobiltechnische Zeitschrift, vol. 117, no. 4, pp. 8–13, Apr 2015. [Online]. Available: https://doi.org/10.1007/s35148-015-0039-0

[2] United Nations Conference on Road Traffic, “19. Convention on Road Traffic,” retrieved: October, 2019. [Online]. Available: https://treaties.un.org/pages/ViewDetailsIII.aspx?src=TREATY&mtdsg_no=XI-B-19&chapter=11&Temp=mtdsg3&clang=_en

[3] J. Terken and B. Pfleging, “Toward shared control between automated vehicles and users,” Automotive Innovation, vol. 3, no. 1, pp. 53–61, 2020.

[4] F. Flemisch, D. Abbink, M. Itoh, M.-P. Pacaux-Lemoine, and G. Weßel, “Shared control is the sharp end of cooperation: Towards a common framework of joint action, shared control and human machine cooperation,” IFAC-PapersOnLine, vol. 49, no. 19, pp. 72–77, 2016, 13th IFAC Symposium on Analysis, Design, and Evaluation of Human-Machine Systems HMS 2016. [Online]. Available: http://www.sciencedirect.com/science/article/pii/S2405896316320547

[5] SAE, “SAE International Standard J3016 - Taxonomy and Definitions for Terms Related to On-Road Motor Vehicle Automated Driving Systems,” SAE International, Standard, January 2014.

[6] N. Druml, G. Macher, M. Stolz, E. Armengaud, D. Watzenig, C. Steger, T. Herndl, A. Eckel, A. Ryabokon, A. Hoess, S. Kumar, G. Dimitrakopoulos, and H. Roedig, “Prystine - programmable systems for intelligence in automobiles,” in 2018 21st Euromicro Conference on Digital System Design (DSD), Aug 2018, pp. 618–626.

[7] A. Kohn, R. Schneider, A. Vilela, A. Roger, and U. Dannebaum, “Architectural Concepts for Fail-Operational Automotive Systems,” in SAE 2016 World Congress and Exhibition. SAE International, April 2016.

[8] O. Vermesan, R. Bahr, R. John, M. Ottella, R. Gjølstad, O. Buckholm, and H.-E. Sand, “Advancing the Design of Fail-Operational Architectures, Communication Modules, Electronic Components, and Systems for Future Autonomous/Automated Vehicles,” in Intelligent System Solutions for Auto Mobility and Beyond, C. Zachäus and G. Meyer, Eds. Cham: Springer International Publishing, 2021, pp. 53–71.

[9] G. Macher, N. Druml, O. Veledar, and J. Reckenzaun, “Safety and Security Aspects of Fail-Operational Urban Surround perceptION (FUSION),” in Model-Based Safety and Assessment, Y. Papadopoulos, K. Aslansefat, P. Katsaros, and M. Bozzano, Eds. Cham: Springer International Publishing, 2019, pp. 286–300.

[10] J. Kocić, N. Jovičić, and V. Drndarević, “Sensors and Sensor Fusion in Autonomous Vehicles,” in 2018 26th Telecommunications Forum (TELFOR), 2018, pp. 420–425.

[11] V. De Silva, J. Roche, and A. Kondoz, “Robust Fusion of LiDAR and Wide-Angle Camera Data for Autonomous Mobile Robots,” Sensors, vol. 18, no. 8, 2018. [Online]. Available: https://www.mdpi.com/1424-8220/18/8/2730

[12] H. W. Yoo, N. Druml, D. Brunner, C. Schwarzl, T. Thurner, M. Hennecke, and G. Schitter, “MEMS-based lidar for autonomous driving,” e & i Elektrotechnik und Informationstechnik, vol. 135, no. 6, pp. 408–415, Oct 2018.

[13] M. Baumgart, C. Consani, M. Dielacher, and N. Druml, “Optical simulation of Time-of-Flight sensor accuracy in rain,” in The European Conference on Lasers and Electro-Optics. Optical Society of America, 2017, p. CH P 25.

[14] Velodyne LiDAR, “HDL-64E,” 2016.

[15] R. Halterman and M. Bruch, “Velodyne HDL-64E lidar for unmanned surface vehicle obstacle detection,” in Unmanned Systems Technology XII, G. R. Gerhart, D. W. Gage, and C. M. Shoemaker, Eds., vol. 7692, International Society for Optics and Photonics. SPIE, 2010, pp. 123–130. [Online]. Available: https://doi.org/10.1117/12.850611

[16] D. Wang, C. Watkins, and H. Xie, “MEMS Mirrors for LiDAR: A Review,” Micromachines, vol. 11, no. 5, 2020. [Online]. Available: https://www.mdpi.com/2072-666X/11/5/456

[17] N. Druml, I. Maksymova, T. Thurner, D. Van Lierop, M. Hennecke, and A. Foroutan, “1D MEMS Micro-Scanning LiDAR,” in The Ninth International Conference on Sensor Device Technologies and Applications (SENSORDEVICES 2018), 09 2018.

[18] Infineon Technologies AG. (2018) AURIX System Safety Controller. https://iot-automotive.news/aurix-microcontroller-tc3xx-family-infineon-fuels-automated-driving-electromobility.

[19] B. Borovic, A. Q. Liu, D. Popa, H. Cai, and F. L. Lewis, “Open-loop versus closed-loop control of MEMS devices: choices and issues,” Journal of Micromechanics and Microengineering, vol. 15, no. 10, p. 1917, 2005.

[20] P. Stelzer, A. Strasser, C. Steger, A. Garcia, and N. Druml, “Fast Angle Adaption of a MEMS-based LiDAR System,” IFAC-PapersOnLine, vol. 52, no. 15, pp. 55–60, 2019, 8th IFAC Symposium on Mechatronic Systems MECHATRONICS 2019.

[21] P. Deng and W. Ma, “Nonlinearity investigation of the MEMS scanning mirror with electrostatic comb drive,” in The 9th IEEE International Conference on Nano/Micro Engineered and Molecular Systems (NEMS), 2014, pp. 212–215.

[22] P. Stelzer, A. Strasser, C. Steger, H. Plank, and N. Druml, “Towards Synchronisation of Multiple Independent MEMS-based Micro-Scanning LiDAR Systems,” in 2020 IEEE Intelligent Vehicles Symposium (IV), 2020, pp. 1080–1085.

[23] A. Strasser, P. Stelzer, C. Steger, and N. Druml, “Towards Synchronous Mode of Multiple Independently Controlled MEMS Mirrors,” IFAC-PapersOnLine, vol. 52, no. 15, pp. 31–36, 2019, 8th IFAC Symposium on Mechatronic Systems MECHATRONICS 2019.
