MASTER'S THESIS CloudSimDisk Energy-Aware Storage Simulation in CloudSim Baptiste Louis 2015 Master of Science (120 credits) Computer Science and Engineering Luleå University of Technology Department of Computer Science, Electrical and Space Engineering

Welcome message from author

This document is posted to help you gain knowledge. Please leave a comment to let me know what you think about it! Share it to your friends and learn new things together.

Transcript

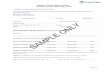

MASTER'S THESIS

CloudSimDisk: Energy-Aware Storage Simulation in CloudSim

Baptiste Louis

2015

Master of Science (120 credits)

Computer Science and Engineering

Luleå University of Technology

Department of Computer Science, Electrical and Space Engineering

Luleå University of Technology

Department of Computer Science, Electrical and Space Engineering

PERCCOM Master Program

Master's Thesis in

PERvasive Computing & COMmunications for sustainable development

Baptiste Louis

CLOUDSIMDISK: ENERGY-AWARE STORAGE SIMULATION IN CLOUDSIM

2015

Supervisors: Professor Christer Åhlund - Luleå University of Technology

Doctor Karan Mitra - Luleå University of Technology

Doctor Saguna Saguna - Luleå University of Technology

Examiners: Assoc. Professor Karl Andersson - Luleå University of Technology

Professor Eric Rondeau - University of Lorraine

Professor Jari Porras - Lappeenranta University of Technology

This thesis is prepared as part of a European Erasmus Mundus program, PERCCOM - Pervasive Computing & COMmunications for sustainable development.

This thesis has been accepted by partner institutions of the consortium (cf. UDL-DAJ, no1524, 2012 PERCCOM agreement).

Successful defense of this thesis is obligatory for graduation with the following national diplomas:

• Master in Complex Systems Engineering (University of Lorraine);

• Master of Science in Technology (Lappeenranta University of Technology);

• Degree of Master of Science (120 credits) - Major: Computer Science and Engineering; Specialisation: Pervasive Computing and Communications for Sustainable Development (Luleå University of Technology).

ABSTRACT

Luleå University of Technology

Department of Computer Science, Electrical and Space Engineering

PERCCOM Master Program

Baptiste Louis

CloudSimDisk: Energy-Aware Storage Simulation in CloudSim

Master's Thesis - 2015.

99 pages, 51 figures, 11 tables, and 4 appendices.

Keywords: Modelling and Simulation, Energy Awareness, CloudSim, Storage, Cloud Computing.

The Cloud Computing paradigm is continually evolving, and with it, the size and the complexity of its infrastructure. Assessing the performance of a Cloud environment is an essential but strenuous task. Modeling and simulation tools have proved their usefulness and power in dealing with this issue. This master's thesis contributes to the development of the widely used cloud simulator CloudSim and proposes CloudSimDisk, a module for modeling and simulation of energy-aware storage in CloudSim. As a starting point, a review of Cloud simulators has been conducted and hard disk drive technology has been studied in detail. Furthermore, CloudSim has been identified as the most popular and sophisticated discrete event Cloud simulator. Thus, the CloudSimDisk module has been developed as an extension of CloudSim v3.0.3. The source code has been published for the research community. The simulation results proved to be in accordance with the analytic models, and the scalability of the module has been presented for further development.

ACKNOWLEDGMENTS

I would like to express my gratitude to my supervisor Professor Christer Åhlund for the confidence that he has placed in me and for his continuous guidance during this research work. It is my honor to accomplish this master's thesis under his supervision.

As well, I would like to thank Doctor Karan Mitra for his support and his valuable knowledge in terms of cloud computing and research work.

Thanks to Doctor Saguna for her advice and her daily dose of joviality.

Thanks to my PERCCOM classmates, especially Rohan Nanda and Khoi Ngo who were with me at Skellefteå.

Thanks to Karl Andersson and Robert Brannstrom for their presence, their accessibility and their assistance during my thesis work.

Thanks to Rodrigo Calheiros (Melbourne University) for his feedback on my implementation.

Thanks to Eric Rondeau, PERCCOM coordinator, and all the PERCCOM team for these two years of Master.

Skellefteå, May 26, 2015

Baptiste Louis

CONTENTS

1 INTRODUCTION

1.1 Context

1.2 Fundamentals

1.2.1 Data Growth

1.2.2 Cloud Computing

1.2.3 Cloud Simulators

1.3 Research Challenges and Objective

1.4 Thesis Contribution

1.5 Thesis Outline

2 BACKGROUND AND RELATED WORK

2.1 Energy Efficient Storage in Cloud Environment

2.2 Cloud Simulators

2.2.1 Overview

2.2.2 CloudSim: a Framework for Modeling and Simulation of Cloud Computing Infrastructures and Services

2.2.3 CloudSim and Storage Modeling

2.3 CloudSim Background

2.3.1 Entities

2.3.2 Life Cycle

2.3.3 Events Passing

2.3.4 Future Queue and Deferred Queue

2.4 Summary

3 CLOUDSIMDISK: ENERGY-AWARE STORAGE SIMULATION IN CLOUDSIM

3.1 Module Requirements

3.2 CloudSimDisk Module

3.2.1 HDD Model

3.2.2 HDD Power Model

3.2.3 Data Cloudlet

3.2.4 Data Center Persistent Storage

3.3 Execution Flow

3.4 Packages Description

3.5 Energy-Awareness

3.6 Scalability

3.6.1 HDD Characteristics

3.6.2 HDD Power Modes

3.6.3 Randomized Characteristics

3.6.4 Data Center Persistent Storage Management

3.6.5 Broker Request Arrival Distribution

3.7 Summary

4 RESULTS

4.1 Inputs and Outputs

4.1.1 Input Parameters

4.1.2 Simulation Outputs

4.2 Results

4.2.1 Request Arrival Distribution

4.2.2 Sequential Processing

4.2.3 Seek Time Randomness

4.2.4 Rotation Latency Randomness

4.2.5 Data Transfer Time Variation

4.2.6 Seek Time, Rotation Latency and Data Transfer Time Compared with Energy Consumption per Transaction

4.2.7 Persistent Storage Energy Consumption

4.2.8 Energy Consumption and File Sizes

4.2.9 Disk Array Management

4.3 Summary

5 CONCLUSIONS AND FUTURE WORK

5.1 Thesis Contribution: CloudSimDisk

5.2 Limitations

5.3 Future Work

REFERENCES

APPENDICES

Appendix 1: CloudSimDisk Source Code

Appendix 2: Run a First Example

Appendix 3: Hard Drive Disk Model

Appendix 4: Hard Drive Disk Power Model

List of Figures

1 The Vostok ice core data [6].

2 Cloud service delivery models: SaaS, PaaS, and IaaS, based on [29].

3 Deployment models of Cloud solutions.

4 Free Cooling in Facebook data center, Luleå (Sweden) [37].

5 Architectural details of the three layers of MDCSim simulator [44].

6 Architecture of the GreenCloud simulation environment [45].

7 Layered CloudSim architecture [43].

8 CloudSim and StorageCloudSim architecture overview [83].

9 Concept of HDD processing element in CloudSimEx [86].

10 CloudSim architecture with the new data Clouds layer [82].

11 CloudSim high level modeling.

12 CloudSim Life Cycle.

13 Example of queue management during three clock ticks.

14 Diagram of model parameters [91].

15 CloudSimDisk Cloudlet constructor.

16 Event passing sequence diagram for "Basic Example 1", 3 Cloudlets.

17 Event passing sequence diagram for "Wikipedia Example", 3 Cloudlets.

18 Process when datacenter receives a CLOUDLET_SUBMIT event.

19 HDD internal process of adding a file.

20 Example of storage management with one HDD: (a) graphic; (b) code.

21 CloudSimDisk: 27 classes organized in 8 packages.

22 Class Diagram of CloudSimDisk extension.

23 Example of idle intervals history management.

24 Example of console information output.

25 Wikipedia workload distribution.

26 Simple example distribution.

27 Sequential processing of requests illustrated.

28 Sequential processing of requests part 1.1.

29 Sequential processing of requests part 1.2.

30 Sequential processing of requests part 2.1.

31 Sequential processing of requests part 2.2.

32 Sequential processing of requests part 2.3.

33 Sequential processing of requests part 2.4.

34 Sequential processing of requests part 3.1.

35 Sequential processing of requests part 3.2.

36 Seek Time distribution.

37 Rotation Latency distribution.

38 Transfer times and file sizes.

39 The transaction time: sum of the seek time, the rotation latency and the transfer time.

40 Energy consumption per transaction compared with: (a) Seek Time; (b) Rotation Latency; (c) Data Transfer Time.

41 "MyExampleWikipedia1", 5000 requests - Final result.

42 Energy consumed per operation Eoperation with file sizes of (a) 1 MB, (b) 10 MB, (c) 100 MB and (d) 1000 MB.

43 Disk array management algorithm: (a) FIRST-FOUND; (b) ROUND-ROBIN.

44 CloudSimDisk simulation with 1 HDD, 2 HDDs and 3 HDDs in the persistent storage using Round Robin algorithm management.

A1.1 CloudSimDisk home page on GitHub.

A1.2 CloudSim core simulation engine on CloudSimDisk GitHub repository.

A2.1 CloudSimDisk console output for "MyExample0".

A3.1 CloudSimDisk HDD model of the Seagate Enterprise NAS 6TB (Ref: ST6000VN0001).

A3.2 The abstract class extended by all Hard Disk Drive models.

A4.1 CloudSimDisk HDD power model of the HGST Ultrastar 900GB (Ref: HUC109090CSS600).

A4.2 The abstract class extended by all Hard Disk Drive power models.

List of Tables

1 Comparison of Cloud Computing Simulators (alphabetic order).

2 Entities IDs assignment.

3 CloudSimDisk HDD characteristics.

4 CloudSimDisk HDD power mode.

5 Trace of HDD values related to Figure 20.

6 "IdleIntervalsHistory" values related to Figure 23.

7 Sets the request arrival distribution in MyPowerDatacenterBroker.

8 Input parameters for CloudSimDisk simulations.

9 Excel values output.

10 Common input parameters for ROUND-ROBIN experiments.

11 Maximum "waiting queue" length for ROUND-ROBIN examples.

ABBREVIATIONS AND SYMBOLS

(Alphabetic order)

BAU Business As Usual

CERN European Organization for Nuclear Research

CUE Carbon Usage Effectiveness

DAS Direct Attached Storage

GeSI Global e-Sustainability Initiative

HDD Hard Disk Drive

IaaS Infrastructure-as-a-Service

IDC International Data Corporation

LHC Large Hadron Collider

LTO Linear Tape-Open

NaaS Network-as-a-Service

NAS Network Attached Storage

NIST National Institute of Standards and Technology

PaaS Platform-as-a-Service

PUE Power Usage Effectiveness

RPM Revolutions Per Minute

SaaS Software-as-a-Service

SAN Storage Area Network

SLA Service Level Agreement

SSD Solid State Drive

StaaS Storage-as-a-Service

UPS Uninterruptible Power Supply

WUE Water Usage Effectiveness

1 INTRODUCTION

This chapter introduces the thesis as a whole. It starts with the global context, then describes the fundamental concepts, and next presents the research challenges, the objective and the thesis contribution. Finally, the thesis outline is given.

1.1 Context

In the past decades, the "Digital Revolution"¹, centered around information, transformed the way we communicate, produce and think, and marked the beginning of the "Information Age", characterized by the development of Information and Communication Technologies (ICTs) [1, 2]. According to [3], these technologies were responsible for more than 8% of global electricity consumption (168 GW) in 2008, and their share is predicted to reach 14% in 2020.

In parallel, serious concern has arisen around global warming. In 1979, the National Academy of Sciences (NAS) [4] estimated an increase in the average global temperature of about 3 degrees Celsius in the coming decades. The Vostok ice core drilling (1998), later confirmed by the European Project for Ice Coring in Antarctica (EPICA, 2004), enabled scientists to correlate the concentration of CO2 with the evolution of the surface temperature over the last several hundred millennia (see Figure 1). Present measurements are unprecedented and indicate a significant human perturbation since the beginning of the Industrial Revolution [5].

Figure 1. The Vostok ice core data [6].

¹ Also known as the "Third Industrial Revolution".

In 2008, the Global e-Sustainability Initiative (GeSI) published the SMART 2020 report [7], questioning the impact of ICTs on the human environment. Two answers have been identified: first, the ICT sector has a clear impact on excessive CO2 emissions, and this impact is expected to increase by a factor of 2.7 by 2020; second, 15% of the total Business As Usual (BAU) emissions predicted for 2020 can be avoided thanks to ICTs, for total savings of 600 billion euros.

Additionally, this report identifies data centers as the "fastest-growing contributor to the ICT sector's carbon footprint", attributable to the "vast amount of data ... stored" and related to the "Information Age".

1.2 Fundamentals

This section gives a summary of the Internet of Things and Big Data paradigms, and the fundamentals of Cloud Computing technologies. Cloud simulation, which is a central part of this work, is introduced as well.

1.2.1 Data Growth

The generation of data is growing at an astonishing rate. According to a Gartner survey [8], data growth is the biggest "data center hardware infrastructure" challenge for large enterprises, and 30% of the respondents plan to build a new data center in the coming year to cope with this growth. An International Data Corporation (IDC) study [9] claims that the "digital universe" will reach an unthinkable 44 zettabytes² by 2020, giving a growth factor of 300³ compared to 2005. Another IDC study [10] states that 75% of the "digital universe" is produced by individuals, while enterprise investments in IT infrastructure increased by 50% between 2005 and 2011 and are predicted to grow by 40% from 2012 to 2020, with a main focus on "storage management", "security", "big data", and "cloud computing".

Several factors drive this multiplication. The switch from analog to digital technologies had its impact in the last decades, as demonstrated by [11], which estimated that 94% of storage technologies were in digital format in 2007 compared to 0.8% in 1986. Subsequently, Atzori et al. [12] observed a continuously decreasing price of digital storage and concluded that once data is generated, it is most likely to be stored indefinitely. Three years later, SINTEF [13], the largest independent research organization in Scandinavia, estimated that 90% of the world's data had been generated over the previous two years, confirming the expected growth.

² 1 zettabyte = 10^15 megabytes

³ Between 2006 and 2011, the growth factor was "only" 9.
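As a rough illustration of the IDC figures quoted above (44 zettabytes in 2020, a growth factor of 300 since 2005), the following sketch derives the 2005 volume and the average yearly growth rate those figures imply. It assumes steady compound growth, which the cited study does not claim, and the function and variable names are purely illustrative.

```python
# Back-of-the-envelope check of the quoted IDC figures.
# Assumption (not from the study): growth is compounded at a constant yearly rate.

ZETTABYTE_IN_MEGABYTES = 10**15  # 1 zettabyte = 10^15 megabytes


def implied_annual_growth(factor: float, years: int) -> float:
    """Return the constant yearly growth rate that yields `factor` after `years`."""
    return factor ** (1.0 / years) - 1.0


volume_2020_zb = 44.0   # predicted size of the "digital universe" in 2020
growth_factor = 300.0   # quoted growth factor relative to 2005

volume_2005_zb = volume_2020_zb / growth_factor
annual_rate = implied_annual_growth(growth_factor, 2020 - 2005)

print(f"Implied 2005 volume: {volume_2005_zb:.3f} ZB "
      f"({volume_2005_zb * ZETTABYTE_IN_MEGABYTES:.2e} MB)")
print(f"Implied average growth: {annual_rate:.1%} per year")
```

Under that assumption, a factor of 300 over 15 years corresponds to the data volume growing by a little under half every year.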

The emerging paradigm called Internet of Things (IoT) has a significant impact on data growth predictions for the next decade. Defined as the fourth major growth spurt of digital data by IDC [14], IoT was approaching 20 billion connected objects in 2014. Further, new business and research opportunities created by IoT, such as real-time information on "mission-critical systems", "environmental monitoring" or "product tracking" along their life cycle, will increase the number of communicating things to 30 billion by 2020.

Data was getting exceedingly big before the IoT paradigm. According to [15], the term "Big Data" appeared in the ACM digital library in October 1997 and referred to a problem where a set of data does not fit in the local disk memory. Today, the definition of this problem has not really changed, but it includes the idea of complexity, such as "resource contention and interference", "heterogeneous data flows" and "uncertain resource needs" [16].

In 2012, Snijders et al. [17] defined Big Data as "a loosely defined term used to describe data sets so large and complex that they become awkward to work with using standard statistical software". More recently, De Mauro et al. [18] conducted similar work to provide a "consensual" and "thorough" definition of Big Data, and proposed the following: "Big Data represents the Information assets characterized by such a High Volume, Velocity and Variety to require specific Technology and Analytical Methods for its transformation into Value".

This last definition retains the 3Vs attributes introduced by Gartner analyst Doug Laney back in 2001 [19]:

• Volume: refers to the amount of data, expressed in bytes and preceded by a specific prefix to represent very large amounts of data.

Examples: petabyte, terabyte, and zettabyte.

• Variety: refers to the different forms of data, mainly due to diverse data sources, which directly affect the complexity of processing this data.

Examples: pure text, photo, audio, video, web, and raw data.

• Velocity: refers to the rate at which data changes hands in a network.

Examples: trading, social network, and real time streams.

Although the "3Vs" model is still widely used today, new "V" dimensions have been introduced [20] like Veracity as an indicator of meaningfulness to the problem being analyzed, or Variability as an indicator of inconsistency of the data at times.

As an example of the rapid growth of unstructured data, more than 500 000 gigabytes of data were uploaded daily to Facebook databases in 2012 [21]. Today, YouTube users upload 300 hours of new video every minute [22], compared to an upload rate of 60 hours in 2012 and 6 hours in 2007 [23]. Concerning the digitization of information, the U.S. Library of Congress was storing no less than 235 terabytes of data in April 2011 [24]. In particle physics, the Large Hadron Collider (LHC), built by the European Organization for Nuclear Research (CERN), produced roughly 30 petabytes of data per year and will produce 110 petabytes a year after some updates [25].

The near future will stretch the three dimensions of Big Data to their extremes. Its "3Vs" require a specific environment with dedicated infrastructure and tools, enabling enterprises and scientists to store and analyze this unwieldy, complex data. As explained by [26, 27], Cloud Computing technology has become a powerful environment capable of dealing with Big Data challenges.

1.2.2 Cloud Computing

The National Institute of Standards and Technology (NIST) has defined Cloud Computing as "a model for enabling ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources [...] that can be rapidly provisioned and released with minimal management effort or service provider interaction", where "computing resources" refers both to the IT infrastructure (servers, storage, and network components) and the abstract application layer (virtualization, interfaces, and software) [28]. The wide range of services provided by Cloud Computing has been divided into three main cloud service delivery models (see Figure 2):

• Software-as-a-Service (SaaS): an application running on the Cloud and accessible by the end user through an interface like a web browser.

• Platform-as-a-Service (PaaS): a configurable platform integrating both hardware and software tools for web application development.

• Infrastructure-as-a-Service (IaaS): a virtualized pool of computing resources physically located in the cloud and manageable over the Internet.

Figure 2. Cloud service delivery models: SaaS, PaaS, and IaaS, based on [29].

Yet, the list is not exhaustive, as many other "XaaS" terms have been coined, such as Storage-as-a-Service (StaaS) and Network-as-a-Service (NaaS) [30].

The Cloud Computing IT infrastructure is amassed in large data centers. It needs to be managed, maintained and upgraded, and it consumes a huge amount of energy every day, leading to major costs for its owner. Therefore, the deployment of a Cloud infrastructure is a strategic move that needs to be evaluated beforehand. Currently, four Cloud infrastructure models have been defined (see Figure 3):

• Private Cloud: the infrastructure is used by a single organization but can be managed and/or owned by a third party.

• Public Cloud: the infrastructure is available to the general public over the Internet, offering limited security and variable performance.

• Community Cloud: the infrastructure is shared by several organizations but commonly managed internally or by a third party.

• Hybrid Cloud: the infrastructure is a combination of Cloud models that remains a unique entity, offering more flexibility at the expense of complexity.

Each model has a different degree of security, complexity and management. Thus, the choice of model is made according to the requirements or needs of the organization.

Figure 3. Deployment models of Cloud solutions.

Regardless of the model, a Cloud Computing solution provides a great trade-off between convenience, cost and flexibility. From the hardware side, [31] identifies three new facets conferred by Cloud Computing:

• appearance of unlimited available resources, procured by the rapid elasticity of the system outward and inward;

• opportunity to scale your infrastructure up or down dynamically and automatically, according to your personal or business needs over time, and consequently to pay for the real usage ("On-demand self-service");

• possibility to access a "ready-to-use" computing environment, eliminating the up-front commitment by Cloud users.

Such a degree of convenience is achieved through virtualization of hardware resources into multiple virtual machines and virtual storage. While virtualization improves the scalability of the cloud system and reduces the amount of physical equipment, it requires powerful resources so as not to affect the performance of the system, since one physical machine might handle multiple virtual ones.

At the storage level, virtualization abstracts specific Direct Attached Storage (DAS) resources into a pool of storage, accessible through an internal network (Network Attached Storage (NAS) and Storage Area Network (SAN)).

Although storage technologies have changed dramatically in the past few years, there is today a "performance gap" [32] between the CPU speed of servers and the related memory or storage subsystem. Because the two have evolved at different paces, storage elements have become a bottleneck in the computing system, affecting performance when data is requested by processor tasks. The Solid State Drive (SSD) eliminates the constraining mechanical parts of Hard Disk Drives (HDDs), thus reducing the overall access time and improving performance. Additionally, this technology is more reliable and consumes less energy, which makes SSD technology "greener". However, its cost-per-bit is still significantly higher than that of HDDs, which makes SSD solutions cost-prohibitive and limits their use to critical I/O applications. Thus, HDD storage resources are still widely present in modern data centers.

Concerning energy consumption and sustainability, Cloud Computing has a significant impact on the environment. According to [33-35], running physical equipment represents about 40% to 55% of the energy bill in a data center. A further 30% to 45% is used to cool the equipment, due to the thermal characteristics of electronic circuitry, and 10% to 15% is consumed by Uninterruptible Power Supplies (UPSs), lighting and other loads. A report from the Natural Resources Defense Council (NRDC) [36] estimated in 2013 that U.S. data centers were using the equivalent of 34 power plants, each generating 500 megawatts of electricity. The resulting CO2 emissions were close to 100 million tons. Recently, large companies like Facebook, Google and Apple decided to build data centers in the northern part of Europe, for reasons including electricity price and social stability but mainly for the suitable climate, which makes it possible to use outside air to cool IT equipment and reduce overall data center energy consumption (see Figure 4).

Figure 4. Free Cooling in Facebook data center, Luleå (Sweden) [37].
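The energy figures quoted above can be combined in a short calculation. The sketch below totals the generating capacity cited from the NRDC report and derives the Power Usage Effectiveness (PUE, total facility energy divided by IT equipment energy) implied by the share of the energy bill spent on running equipment. Treating "running physical equipment" as the IT load is an assumption made for illustration only, and all names are hypothetical.

```python
def implied_pue(it_share: float) -> float:
    """PUE = total facility energy / IT equipment energy = 1 / IT share."""
    if not 0.0 < it_share <= 1.0:
        raise ValueError("it_share must be in (0, 1]")
    return 1.0 / it_share


# Equivalent generating capacity used by U.S. data centers (figures from the text).
power_plants = 34
megawatts_per_plant = 500
total_gigawatts = power_plants * megawatts_per_plant / 1000

# Assumption: the 40% to 55% share for running equipment is the IT load.
pue_high = implied_pue(0.40)  # least efficient end of the quoted range
pue_low = implied_pue(0.55)   # most efficient end of the quoted range

print(f"Equivalent capacity: {total_gigawatts:.0f} GW")
print(f"Implied PUE range: {pue_low:.2f} to {pue_high:.2f}")
```

A lower PUE means less overhead per unit of IT energy, which is why reducing the cooling share, for example with outside air, directly improves this ratio.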

However, even though energy consumption is an important factor in the environmental impact of Cloud Computing, it is not proportional to CO2 emissions. Cloud providers are looking for alternative sources of energy to reduce their carbon dioxide emissions. As a good example, all the equipment inside the Facebook Luleå data center is powered by 100% renewable energy, generated locally by hydroelectric power plants. Taking a different approach, Google is investing in carbon offset projects and buys clean power from specific producers in order to balance emissions from its data centers.

1.2.3 Cloud Simulators

Cloud simulation tools enable researchers, engineers and developers to control all the layers of their Cloud environment in a stable, cost-efficient and scalable way.

They can replicate, repeat and validate scenarios published by the research community. Then, they can apply their own modifications according to their focus area, and results can be efficiently reused by others. Also, simulation is a cost-effective and highly scalable way to run tests on a large-scale infrastructure, since adding resources is basically a matter of changing one variable. Further, simulation tools are often based on discrete time, which enables users to launch many different simulations that compute rapidly with minimal computing resources [16].
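To illustrate why such simulations compute rapidly, here is a minimal sketch of an event-driven simulation loop: the simulated clock jumps directly from one scheduled event to the next instead of advancing in real time. This is a generic illustration and not CloudSim code; the `Simulation` class, its methods and the 0.5 time-unit service delay are all hypothetical.

```python
import heapq
import itertools


class Simulation:
    """Minimal discrete-event engine: events are processed in timestamp order."""

    def __init__(self):
        self.clock = 0.0
        self._queue = []                    # heap of (time, sequence, callback)
        self._counter = itertools.count()   # tie-breaker for equal timestamps

    def schedule(self, delay, callback):
        """Register `callback` to run `delay` time units after the current clock."""
        heapq.heappush(self._queue, (self.clock + delay, next(self._counter), callback))

    def run(self):
        """Pop events until none remain, jumping the clock to each event time."""
        while self._queue:
            self.clock, _, callback = heapq.heappop(self._queue)
            callback(self)


log = []


def request_arrives(sim):
    log.append((sim.clock, "request arrives"))
    # Hypothetical service time of 0.5 time units for every request.
    sim.schedule(0.5, request_done)


def request_done(sim):
    log.append((sim.clock, "request done"))


sim = Simulation()
sim.schedule(2.0, request_arrives)
sim.schedule(1.0, request_arrives)
sim.run()

for timestamp, label in log:
    print(f"t={timestamp:.1f}: {label}")
```

Because no time passes between events, adding simulated resources or lengthening the simulated period costs only as much computation as the extra events require.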

According to [16, 38-42], three popular simulators can be identified: CloudSim, MDCSim and GreenCloud.

CloudSim [43] is an event-based tool, considered the "most popular" [41] and "sophisticated" [42] Cloud simulator available, mainly due to its extensibility and open source license. It enables modeling of hardware resources (data center, host, CPU elements) as well as internal network topology and virtual machines with different scheduling policies.

MDCSim [44] is a commercial discrete event-based simulator developed at the Pennsylvania State University. Its goal is the simulation of multi-tier data center architectures. It includes multiple vendors' hardware resource models and allows estimation of servers' power consumption.

GreenCloud [45] has been built on top of the well-known network simulator ns-2. It is a packet-level simulator focused on energy-aware data center environment modeling, including communication links, switches, gateways, as well as communication protocols, resource allocation and workload scheduling.

1.3 Research Challenges and Objective

Cloud Computing is a recent and continually evolving paradigm, with a growing amount of IT equipment and a rise in complex data structures. Research challenges include management of Service Level Agreements (SLAs), security and privacy, as well as resource monitoring and data management. Further, Cloud Computing is facing global challenges in terms of interoperability and common standardization.

More recently, energy-efficient computing devices, energy-aware resource management and overall data center environment supervision have become predominant topics in the scientific literature, with the aim of reducing ICT energy consumption and the related CO2 emissions. Also, Cloud modeling and simulation are indirectly important challenges, as they provide engineers and researchers with a suitable platform to develop new algorithms, new models and new frameworks for Cloud Computing.

The thesis topic was centered on energy-efficient data storage in data centers, with the objective of identifying and developing a method to study the energy efficiency of data center storage. Hence, this thesis raises questions such as how to study a data center storage system, how to identify key components for studying energy efficiency, and how to provide energy awareness in a large-scale system such as a data center.

1.4 Thesis Contribution

The present master thesis contributes to the area of modeling and simulation of Cloud environments by extending the widely used CloudSim simulator. Due to data growth, storage has become a major part of Cloud Computing systems and of their energy consumption. Unfortunately, the current version of CloudSim does not provide any fully implemented simulation of storage components.

Therefore, this thesis presents CloudSimDisk, a module for energy-aware storage modeling and simulation in the CloudSim simulator. The extension is implemented in accordance with the CloudSim architecture and provides a high degree of scalability for future development. The source code has been published for the research community.

1.5 Thesis Outline

This section gives an overview of the thesis structure with a brief introduction to

the following chapters.

Chapter 2 - Background and Related Work

Chapter two presents a literature study of energy-efficient storage in Cloud environments and of Cloud simulators. Further, the CloudSim architecture and its features are analyzed in detail, and related work on storage modeling is presented. The chapter concludes with background on the operation of the CloudSim discrete event simulator.

Chapter 3 - CloudSimDisk: Energy-Aware Storage Simulation in CloudSim

Chapter three introduces the CloudSimDisk module. First, the module requirements are presented. Then, the different components of CloudSimDisk are described. Further, the package diagram, the execution flow of CloudSimDisk simulations and the implementation of energy awareness are explained. Finally, the scalability of the module is demonstrated.

Chapter 4 - Results

Chapter four presents the results produced by CloudSimDisk. Inputs and outputs

of the simulations are explained in detail. The computation of the energy consump-

tion is analytically described and simulation results demonstrate the validity of the

implementation.

Chapter 5 - Conclusions and Future Work

Chapter five summarizes the thesis contribution and discusses the limitations of the current implementation. The chapter concludes this master thesis with future work for CloudSimDisk.

2 BACKGROUND AND RELATED WORK

This chapter presents the work related to this thesis. The first part discusses energy-efficient storage in Cloud environments, including storage technologies, energy efficiency and data management. The second part discusses Cloud Computing simulation tools: it inventories the main simulators available today and compares them. Then, the well-known CloudSim tool is analyzed in depth and CloudSim storage modeling is discussed. The chapter ends with a short background on CloudSim operation, which is necessary to fully understand the next chapter.

2.1 Energy Efficient Storage in Cloud Environment

Pushed forward by data growth (see 1.2.1), efficient storage in Cloud environments has become a strategic element in terms of cost, performance and energy consumption.

The Hard Disk Drive (HDD) is a well-established technology widely used in data centers. According to [46-48], its average cost per Gigabyte (GB) in 2014 was close to US$0.03, having decreased continually since 1980. The areal density of HDDs averaged 860 Gbits per square inch (or 1333 bits per square micrometer) in 2013 [49], well ahead of LTO Tape (2.1 Gbits/in²), ENT Tape (3.1 Gbits/in²) and optical Blu-ray discs (75 Gbits/in²). The resulting capacity of these drives varies from 250 GB to 4 Terabytes (TB), and up to 10 TB for the most recent ones, which use thinner platters, helium-filled technology and Shingled Magnetic Recording (SMR) [50, 51]. Concerning performance, HDDs have a transfer rate in the order of 100-200 MB/s [52], which varies depending on many factors including the rotation speed of the platters, the location of the file on the platter and the number of sectors per track. As such, HDD technology is a cost-efficient and well-performing solution for storing data in a data center environment.
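As a quick consistency check of the two areal density units quoted above, the conversion can be worked out from the definition 1 in = 25 400 µm alone (a worked example added for illustration):

```latex
\[
1\,\text{in}^2 = (25\,400\,\mu\text{m})^2 \approx 6.45 \times 10^{8}\,\mu\text{m}^2,
\qquad
\frac{860 \times 10^{9}\,\text{bits}}{6.45 \times 10^{8}\,\mu\text{m}^2} \approx 1333\,\text{bits}/\mu\text{m}^2 .
\]
```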

However, I/O-intensive applications require very low latency to access stored data. Solid-State Drives (SSDs) have the advantage of having no mechanical parts, which reduces their latency and improves their performance. The data rate of such drives is around 200-500 MB/s [53] and their areal density was 900 Gbits per square inch in 2013 according to IBM [49]. The main drawback of this technology, which explains its slow adoption, is its cost: the cost per GB of SSD technology is around US$0.40 for the cheapest models [54,55], roughly 13 times higher than that of HDD technology.

To optimize the balance between cost and performance, the current trend is to differentiate application-level requirements and match each of them with a suitable storage resource. SSDs are used for I/O-critical applications, while cheaper HDD technology is used for the remaining storage needs. Note that other technologies such as Blu-ray discs [56] or Linear Tape-Open (LTO) magnetic tape [57] are being studied for the long-term conservation of data that is accessed very rarely, or probably never (backups, data that must be retained for a certain number of years by law). Jay Parikh, Facebook's vice president of infrastructure engineering, stated during the 2014 Open Compute summit that their experimental "Blu-ray system reduces costs by 50 percent and energy use by 80 percent compared with its current cold-storage system" based on HDD technology [58].

To summarize, HDD technology, introduced by IBM in the 1950s and improved in the following decades, is still widely used in today's data centers, mainly due to its low cost and high areal density. Nevertheless, I/O-critical applications require higher-performance technology such as Solid-State Drives to deal with hot data, that is, data that needs to be accessed quickly and frequently. Further, cold data, that is, data that is almost never accessed, can be stored on low-performance, low-cost and low-power technology such as Tape or Blu-ray.

With the rise in data volumes (see 1.2.1), energy efficiency has become an important factor in reducing the cost of the overall storage system in Cloud environments. One drawback of HDD technology is that it requires significant power in idle mode to keep the platters spinning while no operation is being processed. One way to limit this downside is to reduce the HDD spin speed during inactive periods. The Open Compute Project [59] states that "reducing HDD RPM (Rotation Per Minute) by half would save roughly 3-5W per HDD". For tens or even hundreds of thousands of HDDs in one data center, the power saved would be in the order of tens to hundreds of kilowatts. However, the performance of the storage system will drop, since the disks spin more slowly. Another approach is to reduce the number of HDDs in the system without decreasing the total capacity of the data center, by increasing the capacity of each drive. First, the Perpendicular Magnetic Recording (PMR) technique replaced the Longitudinal Magnetic Recording (LMR) technique by storing bits vertically instead of horizontally, which squeezes around 750 Gb per square inch onto a disk platter. Next, the Shingled Magnetic Recording (SMR) technique was introduced. Because the reader width of HDDs is smaller than the writer width, SMR technology overlaps adjacent data tracks and improves the areal density of the drive by 25% [60]. This technology is mainly of interest for long-term storage scenarios with few data modifications and deletions, since write performance is affected by the shingled method.
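The order of magnitude of the spin-speed saving mentioned above can be checked with a short calculation. The symbols N (number of drives) and ΔP_HDD (power saved per drive) are introduced here for illustration only, and N = 100 000 is an assumed fleet size, not a figure from the cited source:

```latex
\[
P_{\text{saved}} = N \cdot \Delta P_{\text{HDD}}, \qquad
100\,000 \times 3\,\text{W} = 300\,\text{kW}
\;\le\; P_{\text{saved}} \;\le\;
100\,000 \times 5\,\text{W} = 500\,\text{kW}.
\]
```

With the same per-drive figures, a smaller fleet of 10 000 drives would save between 30 kW and 50 kW.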

Nishikawa et al. [61] propose a novel energy-efficient storage management system for applications with intensive I/O behavior. The aim is to use application I/O patterns to optimize the I/O behavior of the storage devices. Hence, the intervals during which an HDD is inactive can be optimized, allowing the device to switch to a power-off state and save energy. The results show that the proposed framework performs as well as or better, in terms of energy consumption, than conventional storage power-saving methods (Popular Data Concentration (PDC) and Dynamic Data Reorganization (DDR)). Since the proposed approach relies on the application's I/O behavior, it can also be applied to SSD technology.

Wan et al. [62] develop PERAID, a high-performance, energy-efficient replication storage system that uses a RAID5 or RAID6 cache to preserve the reliability of the system. It buffers many small write requests into fewer large write transactions. Hence, the front buffer part of the system allows the back replica part to optimize its periods of active and standby mode. As a result, the proposed solution improves write performance and saves more energy than previous systems (GRAID and eRAID). However, the PERAID system does not tolerate failure of the primary RAID cache, and neither the performance nor the energy efficiency of the replica has been analyzed yet.

Taal et al. [63] propose a decision process for offloading storage tasks from local systems to remote data centers, based on greenhouse gas emissions. The decision depends on the carbon emissions of the local storage, the remote data center and the network connecting them. First, they analyze the Power Usage Effectiveness (PUE) of both data centers, as well as the energy efficiency of their storage systems and of the intermediary network. Then, the energy consumed by each part is converted into grams of CO2 emitted, according to the energy source used. The results show that the offloading decision is mainly affected by the interconnecting network; that is to say, moving storage tasks to a cleaner remote data center is not systematically greener. Future work plans to take cold storage systems into account. Also, the evaluation of data center energy efficiency should consider new metrics such as Water Usage Effectiveness (WUE) and Carbon Usage Effectiveness (CUE).
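The reasoning behind such an offloading decision can be illustrated with a minimal sketch. It is not the model of Taal et al. [63]: the class, the method names and every numeric value are hypothetical, and emissions are simply taken as IT energy multiplied by PUE and by the carbon intensity of the energy source.

```java
// Illustrative sketch only: not the model of Taal et al. [63].
// All names and numeric values below are hypothetical placeholders.
final class OffloadingSketch {

    /** Emissions in grams of CO2: IT energy (kWh) scaled by PUE and carbon intensity (g/kWh). */
    static double emissionsGrams(double itEnergyKWh, double pue, double gramsCo2PerKWh) {
        return itEnergyKWh * pue * gramsCo2PerKWh;
    }

    /** Offloading is greener only if remote storage plus network emit less than local storage. */
    static boolean shouldOffload(double localGrams, double remoteGrams, double networkGrams) {
        return remoteGrams + networkGrams < localGrams;
    }

    public static void main(String[] args) {
        double local = emissionsGrams(10.0, 2.0, 500.0);         // local site: higher PUE, dirtier energy
        double remote = emissionsGrams(10.0, 1.2, 300.0);        // remote site: lower PUE, cleaner energy
        double lightNetwork = emissionsGrams(2.0, 1.0, 500.0);   // little data moved
        double heavyNetwork = emissionsGrams(14.0, 1.0, 500.0);  // a lot of data moved

        System.out.println("Offload with light network use: " + shouldOffload(local, remote, lightNetwork));
        System.out.println("Offload with heavy network use: " + shouldOffload(local, remote, heavyNetwork));
    }
}
```

In this sketch the remote data center is cleaner in both cases, yet offloading only pays off when the network share stays small, which mirrors the conclusion reported above.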

Shuja et al. [64] present a survey of techniques and architectures for designing energy-efficient data centers. Section III focuses on storage systems and discusses several works related to energy efficiency. Among them, [65] identified two solutions to reduce the energy consumption of the storage part: improving the energy efficiency of the hardware and reducing data redundancy. The second solution, however, requires careful consideration of "data replication, mapping, and consolidation". Also, [66] proposes Hibernator, a disk array energy management system using, among other things, HDDs with spin-down capability. The proposed model saves 29% more energy than previous solutions, while providing comparable performance. The main conclusion of the survey summarizes the advantages of flash storage technology, but underlines its under-representation in the current cloud paradigm, due to its higher cost.

2.2 Cloud Simulators

Cloud Computing systems are complex. They require particular tools to analyze specific quality concerns such as resource provisioning, task scheduling, network configuration, security or virtual machine management. Testing in a real-world environment is one technique for evaluating the performance of a system, but it is costly and time-consuming [44]. Moreover, the user might not have access to all the system components that need to be analyzed. Simulation isolates each quality concern and makes it possible to focus on the desired problem, which can be tested under many different scenarios [67]. Cloud simulators provide a stable, cost-efficient and scalable environment, where tests can be replicated, repeated and validated by the research community. They empower users to control all the layers of the Cloud system, namely the physical resource configuration, the infrastructure topology, the middleware platform code, the cloud application services and the user workload behavior [43].

2.2.1 Overview

In the literature, a few recent papers [39,41,67-69] have reviewed the available tools for modeling and simulation of Cloud Computing environments. A non-exhaustive but illustrative list of 19 simulators has been studied for this thesis, including CloudSim, CloudAnalyst, GreenCloud, MDCSim, iCanCloud, NetworkCloudSim, EMUSIM, GroundSim, MR-CloudSim, DCSim, SimIC, D-Cloud, PreFail, SPECI, OCT, OpenCirrus, CDOSim, TeachCloud and GDCSim. Each of them has its own particularities, depending on its underlying platform, its core simulation, its programming language and its maturity.

Table 1 compares the 19 simulators on six different criteria, namely the base platform (NS-2, SimJava, GridSim, CloudSim, etc.), the availability of the tool (Open Source or Commercial license), the programming language used (Java, C++, XML, etc.), the presence or absence of a Graphical User Interface (GUI), the execution time range (second, minute) and the energy-awareness capability (Yes or No).

Table 1. Comparison of Cloud Computing Simulators (alphabetic order).

SIMULATOR         PLATFORM          AVAILABILITY       CODE       GUI      TIMING  ENERGY AWARE
CDOSim            CloudSim          -                  Java       No       Second  No
CloudAnalyst      CloudSim          Open Source        Java       Yes      Second  Yes
CloudSim          SimJava, GridSim  Open Source        Java       No       Second  Yes
D-Cloud           Eucalyptus        Open Source        -          No       -       No
DCSim             -                 Open Source        Java       No       Minute  No
EMUSIM            CloudSim, AEF     Open Source        Java       No       Second  Yes
GDCSim            BlueTool          Open Source        C++, XML   No       Minute  Yes
GreenCloud        NS-2              Open Source        C++, OTcl  Limited  Minute  Yes
GroundSim         -                 Open Source        Java       Limited  Second  No
iCanCloud         OMNET, MPI        Open Source        C++        Yes      Second  No
MDCSim            CSIM              Commercial         Java, C++  No       Second  Minimal
MR-CloudSim       CloudSim          Not available      Java       No       -       Yes
NetworkCloudSim   CloudSim          Open Source        Java       No       Second  Yes
OpenCloudTestbed  Heterogeneous     Need registration  -          Limited  Minute  No
OpenCirrus        Heterogeneous     Open Source        -          No       -       Yes
PreFail           -                 Open Source        Java       No       Minute  No
SimIC             SimJava           Not available      Java       Yes      Second  Minimal
SPECI             SimKit            Open Source        Java       Limited  Minute  No
TeachCloud        CloudSim          Open Source        Java       Yes      Second  No

For this thesis, the main criteria were the availability of the code and the programming language used for development. Open Source software is free and permits collaborative development, which encourages users to adopt it. The software should be written in a single, general-purpose programming language to facilitate its development and to allow more contributions from the community. Several simulators meet these expectations. Hence, to reduce the list, a more detailed analysis of the literature was carried out. Although Cloud simulators are numerous, the cross-comparison of [16,38-42] revealed three major tools, namely CloudSim [43], MDCSim [44] and GreenCloud [45].

MDCSim [44] was developed in 2009 by Seung-Hwan Lim from Pennsylvania State University (USA). It models large-scale multi-tier data center architectures with a "comprehensive, flexible, and scalable" simulation platform, three reasons for its widespread use. Each layer of its architecture is modeled independently and can be modified without affecting the other layers. Moreover, the simulator makes it possible to estimate and measure the power consumption of a server cluster composed of thousands of nodes. Figure 5 shows the architecture of the simulator. "NIC" stands for Network Interface Card.

Figure 5. Architectural details of the three layers of MDCSim simulator [44].

One critical drawback of this simulator is its availability: MDCSim is a commercial tool, so users need to buy a license in order to use it. Further, it is unclear whether the source code of the tool can be accessed.

GreenCloud [45] is an open source simulator designed to model energy-aware data centers. Its first version was released by the University of Luxembourg in December 2010 as an extension of the well-known ns-2 network simulator [70]. Hence, GreenCloud is a packet-based simulator, focused on communication patterns between data center components. From the energy perspective, GreenCloud provides power models for server components, gateways and links, as well as for the core, access and aggregation switches of a multi-tier architecture. It offers flexible workloads that can be configured and tested on both computational and communicational attributes. Also, new resource allocation, workload scheduling or communication protocols can be implemented and tested. Figure 6 shows the architecture of the simulator.

Figure 6. Architecture of the GreenCloud simulation environment [45].

Compared to MDCSim, GreenCloud can simulate only small data center architectures, since its core packet-based simulation is highly time- and resource-consuming. Further, GreenCloud users have to know both the C++ and OTcl languages to develop and run a simulation, which is a significant obstacle to its wide adoption.

Thus, the previously mentioned drawbacks of the popular GreenCloud and MDCSim simulators are the first reasons to consider CloudSim [43] for this thesis. Additionally, CloudSim has been identified as the "most popular" [41] and "sophisticated" [42] Cloud simulator available today. The Java programming language used for its implementation has been the focus of several projects during my master program. Further, the CloudSim source code is released under an open source license, so it is free and easy to download, which makes its development more convenient and dynamic.

2.2.2 CloudSim: a Framework for Modeling and Simulation of Cloud Computing Infrastructures and Services

CloudSim is a widely used software framework for modeling and simulation of Cloud Computing environments. It was developed in 2010 by the CLOUDS Laboratory at the Computer Science and Software Engineering Department of the University of Melbourne (Australia), and it is used in research by several universities and organizations, including Duke University in North Carolina, HP Labs in Palo Alto and the National Research Center for Intelligent Computer Systems (NCIC) in China. According to [41], published in February 2014, CloudSim "is the most popular simulator tool available for cloud computing environment". It is implemented in Java, an Object Oriented Programming (OOP) language, which results in a highly scalable simulator. In addition to using this widely known programming language, CloudSim is open source software, so any user can download its entire source code and participate in its development. The simulator enables modeling of CPU components, RAM, storage, Virtual Machines (VMs), hosts, Cloud brokers and data centers, as well as VM allocation policies, VM schedulers, dynamic workloads, power-aware simulation and network modeling built from BRITE network topology files [71].

Many important contributions have been built around CloudSim. Garg et al. [42] developed NetworkCloudSim as an extension of the simulator, allowing the simulation of networking protocols and of communication between Cloud applications. The extension was integrated into CloudSim Toolkit 3.0. Beloglazov et al. [72] developed new algorithms related to VM migration and dynamic VM consolidation problems, and chose CloudSim as the testing platform. As a result, a power package was created in CloudSim to enable power-aware simulation, and the package was then integrated into CloudSim Toolkit 2.0. Later, in CloudSim Toolkit 3.0, the power package was expanded with new, accurate power models of servers (HP and IBM) based on data from the SPECpower benchmark [73]. Also, because CloudSim lacks a Graphical User Interface (GUI), Wickremasinghe et al. [74] developed CloudAnalyst, a CloudSim-based visual modeler for analyzing Cloud Computing environments and applications, running on top of the simulator. Other related projects can be found on the official CloudSim website [75].

Recent discussions on the CloudSim community group, on stackoverflow.com and on researchgate.net reveal several ongoing developments of the simulator. Additionally, CloudSim Toolkit 1.0 was released in April 2009, Toolkit 2.0 in May 2010 and Toolkit 3.0 in January 2012. Thus, a new version can be expected to be released soon.

Concerning the architecture of the CloudSim framework (see Figure 7), three layers can be identified as follows (bottom-up description):

1. The Core Simulation Engine: event queue control, clock updates, clock tick execution, resource registration, dynamic environment supervision, simulation termination.

2. The Modeling Libraries: data centers, hosts, VMs, Cloudlets (tasks), files, storage, Processing Elements (PEs), allocation and scheduling policies, network topology, utilization models, power models.

3. The User Configuration Code: scenarios, infrastructure and resource specifications, application and workload configurations, allocation and scheduling policy declarations.

Figure 7. Layered CloudSim architecture [43].

Developing a complete and accurate Cloud simulator is a long-lasting task, and the simulator needs to evolve with business needs and new research findings. While CloudSim is widely used, some major features are still unsatisfactory: modeling and simulation of storage is one of them.

2.2.3 CloudSim and Storage Modeling

A quick analysis of CloudSim reveals a clear lack of storage modeling. The related embedded code is limited to a single HDD model reused from the GridSim simulator [76]. This model is barely scalable due to its implementation, and its algorithm contains mistakes concerning the rotation latency [77] as well as the seek time and transfer time [78]. Also, the class SanStorage.java, present since CloudSim Toolkit 1.0 and implementing the bandwidth and networkLatency parameters, is still "not yet fully functional" [79], since no improvements have been made in the subsequent versions of the simulator.
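For reference, the three quantities named above are usually combined as access time = seek time + rotation latency + transfer time, where the average rotation latency is the duration of half a revolution. The following is a minimal sketch of that textbook model, not CloudSim's or GridSim's code; the class name, method names and drive parameters are assumptions chosen for illustration.

```java
// Minimal sketch of a textbook HDD access-time model; this is not CloudSim's code.
// Class name, method names and the drive parameters in main() are hypothetical.
final class HddAccessTimeSketch {

    /** Average rotation latency in seconds: half a revolution at the given spindle speed. */
    static double averageRotationLatency(double rpm) {
        return 0.5 * 60.0 / rpm;
    }

    /** Transfer time in seconds for a file of the given size (MB) at a sustained rate (MB/s). */
    static double transferTime(double fileSizeMB, double transferRateMBps) {
        return fileSizeMB / transferRateMBps;
    }

    /** Total access time in seconds: seek time + rotation latency + transfer time. */
    static double accessTime(double avgSeekTime, double rpm, double fileSizeMB, double rateMBps) {
        return avgSeekTime + averageRotationLatency(rpm) + transferTime(fileSizeMB, rateMBps);
    }

    public static void main(String[] args) {
        // Hypothetical drive: 7200 RPM, 8.5 ms average seek time, 150 MB/s transfer rate.
        double seconds = accessTime(0.0085, 7200.0, 15.0, 150.0);
        System.out.println("Estimated access time for a 15 MB file: " + seconds + " s");
    }
}
```

At 7200 RPM the average rotation latency is about 4.2 ms, so for small files the mechanical terms dominate, while for large files the transfer time does.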

Some projects related to CloudSim and storage modeling have been undertaken. StorageCloudSim [80] has been released under an open source license, the CloudSimEx project [81] is in continual development on GitHub, and Long et al. [82] worked on cloud data storage but did not share their extension with the community.

StorageCloudSim. In August 2013, Tobias Sturm from Karlsruhe Institute of Technology published a Bachelor Thesis entitled "Implementation of a Simulation Environment for Cloud Object Storage Infrastructures" [83]. His work added modeling and simulation of Storage as a Service (STaaS) Clouds to CloudSim, using the Cloud Data Management Interface (CDMI) standard. The architecture of the simulator is depicted in Figure 8.

In this implementation, a data element is represented by a Binary Large OBject (BLOB) and a storage disk is represented by an Object-Based Storage Device (OBSD). Some new methods have been implemented compared to the CloudSim storage interface, including:

• getMaxReadTransferRate(); // Returns the max possible transfer rate for read operations in byte/ms.

• getMaxWriteTransferRate(); // Returns the max possible transfer rate for write operations in byte/ms.

• getReadLatency(); // Returns the average latency of the drive in ms for read operations. The latency includes the rotational latency, the command processing latency and the settle latency.

• getWriteLatency(); // Returns the average latency of the drive in ms for write operations. The latency includes the rotational latency, the command processing latency and the settle latency.
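To show how these four values could be combined, the sketch below estimates a best-case operation time as latency plus size divided by the maximum transfer rate. Only the four getter names come from the list above; the Drive interface, the FixedDrive class, the helper methods and the numeric values are hypothetical and are not taken from the StorageCloudSim source code.

```java
// Hypothetical sketch: only the four getter names follow the list above. The Drive
// interface, FixedDrive class, helper methods and numeric values are illustrative.
final class DriveTimingSketch {

    interface Drive {
        double getMaxReadTransferRate();   // byte/ms
        double getMaxWriteTransferRate();  // byte/ms
        double getReadLatency();           // ms
        double getWriteLatency();          // ms
    }

    static final class FixedDrive implements Drive {
        private final double readRate;
        private final double writeRate;
        private final double readLatency;
        private final double writeLatency;

        FixedDrive(double readRate, double writeRate, double readLatency, double writeLatency) {
            this.readRate = readRate;
            this.writeRate = writeRate;
            this.readLatency = readLatency;
            this.writeLatency = writeLatency;
        }

        @Override public double getMaxReadTransferRate() { return readRate; }
        @Override public double getMaxWriteTransferRate() { return writeRate; }
        @Override public double getReadLatency() { return readLatency; }
        @Override public double getWriteLatency() { return writeLatency; }
    }

    /** Best-case time in ms to read the given number of bytes: latency plus transfer at max rate. */
    static double minReadTimeMs(Drive drive, long bytes) {
        return drive.getReadLatency() + bytes / drive.getMaxReadTransferRate();
    }

    /** Best-case time in ms to write the given number of bytes: latency plus transfer at max rate. */
    static double minWriteTimeMs(Drive drive, long bytes) {
        return drive.getWriteLatency() + bytes / drive.getMaxWriteTransferRate();
    }

    public static void main(String[] args) {
        // Hypothetical drive: 150 000 byte/ms read, 140 000 byte/ms write, 4.2 ms and 4.7 ms latency.
        Drive drive = new FixedDrive(150_000.0, 140_000.0, 4.2, 4.7);
        System.out.println("Read 1.5 MB:  " + minReadTimeMs(drive, 1_500_000L) + " ms");
        System.out.println("Write 1.4 MB: " + minWriteTimeMs(drive, 1_400_000L) + " ms");
    }
}
```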

However, these pieces of information are rarely provided by disk manufacturers and need to be retrieved using specific benchmarking tools. Also, StorageCloudSim is limited to the STaaS Cloud Computing model (see 1.2.2), with a focus on the Cloud Data Management Interface standard, and does not provide any implementation of energy-aware storage for CloudSim.

Figure 8. CloudSim and StorageCloudSim architecture overview [83].

CloudSimEx. The objective of the CloudSimEx project is to develop a set of CloudSim extensions and to integrate them into the official version of the simulator once they are sufficiently useful, usable and mature [84].

The ongoing CloudSimEx features include web session modeling, better logging utilities, utilities for generating CSV files for statistical analysis, automatic ID generation, utilities for running multiple experiments in parallel, utilities for modeling network latencies, and MapReduce simulation.

In association with the CloudSimEx project, [85] worked on the performance of persistent storage. For that purpose, a new model of disk I/O performance was implemented: an HDD processing element, HddPe, extends the processing element class Pe.java, which models a CPU in CloudSim. Then, Host.java, Vm.java and Cloudlet.java were modified to support the new disk I/O model. Thus, it is not an implementation of disk simulation, but a more abstract way to represent disk I/O operations, independently of the device. The extended Cloudlet (task) has both CPU and disk operations, to be executed by the CPU and HDD processing elements respectively, as shown in Figure 9.

Figure 9. Concept of HDD processing element in CloudSimEx [86].

Data Cloud Layer. Long and Zhao [82] extended CloudSim by adding file striping and data replica features to the simulator. Thus, the extended CloudSim layered architecture includes a new data Cloud layer between the Cloud Services layer and the VM Services layer (see Figure 10). The extension can be used to model different replica management strategies, commonly known as Redundant Array of Independent Disks (RAID). However, the source code has not been shared with the research community and it has not been possible to contact the authors.

Figure 10. CloudSim architecture with the new data Clouds layer [82].

2.3 CloudSim Background

This section presents a detailed description of the CloudSim tool, including its entities, the simulation life cycle, the core event-passing operation and the dynamic characteristic of CloudSim.

2.3.1 Entities

CloudSim is a framework for modeling and simulation of Cloud Computing infrastructures and services. Figure 11 shows the high-level modeling of CloudSim, where components with continuous border lines are called "entities". Entities can send events to and receive events from each other (see 2.3.3), and can also interact with the other components (dashed lines).

One major component of CloudSim is the Datacenter entity, which models a real data center: it houses a list of Hosts (physical server machines) and a list of Storage elements (physical storage devices), both with defined hardware specifications (RAM, Bandwidth, Capacity and CPUs for a Host; Capacity, SeekTime, Latency and maximum Transfer Rate for Storage).

CloudSim supports server virtualization, so each Host runs one or more Virtual Machines (VMs). Each VM is assigned to a Host according to the VM Allocation Policy defined for a particular Datacenter. Further, each Host allocates resources to its VMs according to the VM Scheduler Policy defined for that Host. Then, Cloud application jobs are managed internally by the VMs according to the Cloudlet Scheduler Policy defined for each VM.

A Cloudlet models the Cloud-based application services in CloudSim. It represents a job, a request or a task to be executed. The Datacenter Broker models the intermediary layer between end users and cloud providers, which is responsible for meeting the Quality of Service (QoS) needs of the user application. Thus, this component supervises the cloudlet arrival rate for a specific data center.

Figure 11. CloudSim high level modeling.

Finally, the CloudSim core simulation automatically creates two entities: the CloudInformationService (CIS) entity, responsible for resource registration, indexing and discovery, and the CloudSimShutdown entity, responsible for signaling the end of the simulation to the CIS entity. These entities should not be created by the user, since the core simulation does it.

2.3.2 Life Cycle

In CloudSim, each simulation follows a specific life cycle (see Figure 12), whose steps must be carried out in order for the simulation to work properly.

Figure 12. CloudSim Life Cycle.

Firstly, CloudSim common attributes such as "trace flag", "calendar" and "number of users" are initialized, and the CloudInformationService and CloudSimShutdown entities are created. This has to be done before creating any other entities.

Secondly, the Broker and Datacenter entities are created. As explained in 2.3.1, a Datacenter is composed of Host machines. Thus, a list of Hosts is also created and passed as a parameter to the Datacenter constructor. Similarly, a list of VMs and a list of Cloudlets are created and sent to the Broker entity before the simulation starts.

Thirdly, all the entity threads are switched to the running state and the core simulation of CloudSim is executed (see 2.3.4). The simulation stops if there are no more events in the queue, if the simulation reaches a specific terminationTime, or if an abruptTerminate event occurs due to an unexpected problem.

Fourthly, once the simulation is finished, the final result is printed. Note that the final result can be formatted differently depending on the scenarios executed. Also, intermediary results can be printed during the previous phases of the life cycle.

2.3.3 Events Passing

CloudSim is an event-based simulator, so each step of the simulation is triggered by

an event. Events are the heartbeat of CloudSim simulation environment: if no more

events are generated, it is the end of the simulation.

All communication between the main CloudSim components is accomplished by event passing. Each event can be seen as a message with a source ID and a destination ID: only an entity can send or receive an event. There are four different kinds of entities: CloudSimShutdown, CloudInformationService, Datacenter(s) and the Broker. Their IDs are assigned consistently (Table 2) according to the CloudSim Life Cycle (see 2.3.2).

Table 2. Entities IDs assignment.

ENTITIES                  IDs
CloudInformationService   0
CloudSimShutdown          1
Datacenter(s)             2 ... n
Broker                    n+1

Each event stores a unique Tag number that either corresponds to a specific occurrence (for example VM_DATACENTER_EVENT, END_OF_SIMULATION) or indicates the type of action to be performed by the recipient (for example VM_CREATE, CLOUDLET_SUBMIT). Hence, the recipient of an event has to implement a method that handles this particular tag number. The handling can be a simple "print out" for the user or a more complex sequence of sub-methods which will eventually generate new events. Finally, an event has a data parameter of type Object, used to carry a Cloudlet, datacenter characteristics or any other information that needs to be transferred with the event.
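The tag-based handling described above can be sketched in a few lines of Java. This is a toy illustration under stated assumptions: the Event and Datacenter classes below and the numeric tag values are hypothetical stand-ins, not CloudSim's own classes or constants.

```java
// Toy sketch of tag-based event handling. The nested Event and Datacenter classes and
// the tag values are hypothetical stand-ins, not CloudSim's own classes or constants.
final class EventDispatchSketch {

    static final int VM_CREATE = 1;        // hypothetical tag values
    static final int CLOUDLET_SUBMIT = 2;

    static final class Event {
        final int sourceId;       // ID of the entity that sent the event
        final int destinationId;  // ID of the entity that must handle it
        final int tag;            // what happened, or what the recipient should do
        final Object data;        // optional payload carried with the event

        Event(int sourceId, int destinationId, int tag, Object data) {
            this.sourceId = sourceId;
            this.destinationId = destinationId;
            this.tag = tag;
            this.data = data;
        }
    }

    static final class Datacenter {
        private final int id;

        Datacenter(int id) {
            this.id = id;
        }

        /** Dispatches on the event tag and returns a description of the action taken. */
        String processEvent(Event event) {
            if (event.destinationId != id) {
                return "ignored: event addressed to entity " + event.destinationId;
            }
            switch (event.tag) {
                case VM_CREATE:
                    return "creating VM " + event.data;
                case CLOUDLET_SUBMIT:
                    return "executing cloudlet " + event.data;
                default:
                    return "unknown tag " + event.tag;
            }
        }
    }

    public static void main(String[] args) {
        Datacenter datacenter = new Datacenter(2);
        // An entity with ID 3 asks entity 2 to create a VM, then submits a cloudlet.
        System.out.println(datacenter.processEvent(new Event(3, 2, VM_CREATE, "vm-0")));
        System.out.println(datacenter.processEvent(new Event(3, 2, CLOUDLET_SUBMIT, "cloudlet-0")));
    }
}
```

In a real simulation the handler would typically schedule further events instead of returning a string; the return value is used here only to keep the sketch self-contained.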

2.3.4 Future Queue and Deferred Queue

Unlike GridSim [76], the core simulation of the CloudSim framework is a dynamic environment thanks to two event queues: the Future Queue and the Deferred Queue (see Figure 13). All events generated by entities at runtime are added to the Future Queue and sorted by their time parameter (tX), the "time at which the event should be delivered to its destination entity [for execution]" [43]. As explained in 2.3.3, the execution of an event can result in the creation of new events, with a similar or different "Time" parameter. In other words, event generation numbers (Event X) do not determine the order in the Future Queue. Afterwards, the event at the "top of the queue" in the Future Queue is moved to the Deferred Queue and will be processed at the next clock tick. If the next event in the Future Queue has the same time, it is moved as well, and so on.

Figure 13. Example of queue management during three clock ticks.

In the example shown in Figure 13, Event1 is executed first and generates Event3. Then Event2, which has the same time parameter, is executed too and generates Event4 and Event5. No more events are in the Deferred Queue at this point, so Event4, being at the top of the Future Queue, is moved to the Deferred Queue. It is executed during the next tick and generates Event7. The Deferred Queue is empty again, so Event5, now at the top of the Future Queue, is moved to the Deferred Queue. Event6 has the same time parameter, so it is moved to the Deferred Queue as well. The next step would be to execute Event5, then Event6, which will eventually generate new events.
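The interplay of the two queues can be sketched as follows. This is a simplified illustration written for this section, not the CloudSim implementation: the class TwoQueueSketch, its Event type, and the schedule and tick methods are hypothetical names, and the events in the usage note are not those of Figure 13.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.PriorityQueue;
import java.util.function.Consumer;

/** Illustrative sketch of the two-queue mechanism; not the CloudSim implementation. */
class TwoQueueSketch {

    /** A minimal event: a name, a delivery time and an insertion serial number. */
    static final class Event {
        final String name;
        final double time;
        final long serial;

        Event(String name, double time, long serial) {
            this.name = name;
            this.time = time;
            this.serial = serial;
        }
    }

    // Future Queue: sorted by delivery time; the serial keeps insertion order on ties.
    private final PriorityQueue<Event> futureQueue = new PriorityQueue<>(
            Comparator.comparingDouble((Event e) -> e.time).thenComparingLong(e -> e.serial));
    // Deferred Queue: events to execute at the next clock tick.
    private final List<Event> deferredQueue = new ArrayList<>();
    private long nextSerial = 0;

    /** Adds a new event to the Future Queue. */
    void schedule(String name, double time) {
        futureQueue.add(new Event(name, time, nextSerial++));
    }

    /**
     * Runs one clock tick: executes the deferred events (the handler may schedule
     * new ones), then moves every event sharing the earliest time from the
     * Future Queue to the Deferred Queue.
     * Returns the names of the executed events, in execution order.
     */
    List<String> tick(Consumer<Event> handler) {
        List<String> executed = new ArrayList<>();
        for (Event event : deferredQueue) {
            executed.add(event.name);
            handler.accept(event);
        }
        deferredQueue.clear();
        if (!futureQueue.isEmpty()) {
            double earliest = futureQueue.peek().time;
            while (!futureQueue.isEmpty()
                    && Double.compare(futureQueue.peek().time, earliest) == 0) {
                deferredQueue.add(futureQueue.poll());
            }
        }
        return executed;
    }
}
```

For instance, if Event1 and Event2 are scheduled at time 1.0 and Event3 at time 3.0, and the handler of Event1 schedules Event4 at time 2.0, successive ticks execute nothing, then Event1 and Event2, then Event4, then Event3. Event4 overtakes Event3 although it was generated later, which is the point made above: the time parameter, not the generation number, determines the order.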

2.4 Summary

In this chapter, HDD has been identified as the main storage technology used in today's data centers, mainly due to its low cost and high areal density. From an energy perspective, cold storage has been the topic of most recent research on energy-efficient storage in cloud environments.

Further, Cloud simulation has been identified as a cost-effective solution to perform experiments in a controllable, stable and repeatable way. Numerous Cloud simulators have been compared. Owing to its popularity, availability and extensibility, CloudSim has been chosen for this thesis work. The analysis revealed a lack of storage modeling that has not yet been overcome.

Finally, background on the operation of CloudSim has been presented to prepare the introduction of the CloudSimDisk module in the next chapter.

3 CLOUDSIMDISK: ENERGY-AWARE STORAGE SIMULATION IN CLOUDSIM

This chapter presents CloudSimDisk, a module for energy-aware storage simulation in the CloudSim simulator. The first part explains the objectives and requirements of the module. Next, the main concepts of CloudSimDisk are described, such as the HDD model, the HDD power model, the data cloudlet and the data center persistent storage. Then, the execution flow and the package diagram are explained. Finally, energy awareness and scalability of CloudSimDisk are discussed.

3.1 Module Requirements

CloudSimDisk has been developed according to several requirements, which provide advantages to the module and explain its architectural design choices.

The main requirement was to respect the architecture and the core processing of CloudSim. CloudSimDisk is a module for CloudSim, so it has to operate in the same way as CloudSim. Also, similar design choices reduce the learning curve for CloudSim users who want to adopt CloudSimDisk. Further, they encourage participation in and contributions to the future development of the module.

Another important requirement is the scalability of the module. HDD technology is complex to model due to the electromechanical nature of the devices. Additionally, the technology is evolving rapidly. Hence, CloudSimDisk has to be developed so that more characteristics and features can be implemented later. This capability is an argument in favor of adopting CloudSimDisk.

An additional requirement was to consider at first only the main parameters of HDD technology, and to provide energy consumption results based on this simplified model. This work had to be completed within strict deadlines, so some development decisions such as this one had to be taken.

3.2 CloudSimDisk Module

This section introduces the CloudSimDisk module. First, the Hard Disk Drive (HDD) model and the associated HDD power model are presented. Then, the data cloudlet object, or storage task, is explained in detail. Finally, the data center persistent storage is defined.

3.2.1 HDD Model

As explained in Chapter 1, HDDs are still the most widely used storage technology in Cloud computing environments. Unfortunately, CloudSim provides only one HDD model, reused from the GridSim simulator [76], which is barely scalable and includes some mistakes in its algorithm [78]. To overcome this barrier, the CloudSimDisk module implements a new HDD model.

According to [87] [88] [89], the main characteristics affecting overall HDD performance are mechanical: the combination of the transversal movement of the read/write head and the rotational movement of the platter. Additionally, the internal data transfer rate, often called the sustained rate, has been identified as a bottleneck of the overall data transfer rate of an HDD [90]. More recently, [91] proposed an HDD model based on 23 input parameters which achieves between 91% and 96.5% accuracy. Figure 14 shows a diagram of the model parameters used in their implementation, organized by functional category. Each parameter is described in detail in order of importance: the first parameter is the position time, "the sum of the seek time and the rotational latency", and the second is the transfer time, "the time required to transfer one sector of data to or from the media", namely the Internal Data Transfer Time.

Figure 14. Diagram of model parameters [91].

A new package, namely cloudsimdisk.models.hdd, has been created and contains classes modeling HDD storage components. Each model implements one method, namely getCharacteristic(int key). In this method, the parameter key is an integer corresponding to a specific characteristic of the HDD. To ensure consistency between different HDD models, all the classes extend one common abstract class, which declares the getCharacteristic(int key) method. Thereby, the parameter key corresponds to the same HDD characteristic in each model.

However, it is not convenient for developers or users to work with key numbers. Hence, the getCharacteristic(int key) method has been declared as protected and cannot be called directly by users. Instead, the common abstract class implements a getter for each HDD characteristic (getCapacity(), getAvgSeekTime(), etc.). The getCharacteristic(int key) method is used only internally to retrieve the required characteristic. As a result, the methods accessed by users are semantically understandable. Table 3 lists the methods declared in HDD models to retrieve HDD characteristics.

Table 3. CloudSimDisk HDD characteristics.

| KEY | METHOD | DESCRIPTION |
|---|---|---|
| 0 | getManufacturerName() | The name of the manufacturer (Ex: Seagate Technology, Toshiba, Western Digital). |
| 1 | getModelNumber() | The unique manufacturer reference (Ex: ST4000DM000). |
| 2 | getCapacity() | The capacity of the HDD in megabytes (MB). |
| 3 | getAvgRotationLatency() | The average rotation latency of the disk, defined as half the amount of time it takes for the disk to make one full revolution, in seconds (s). It depends directly on the disk rotation speed in Rotations Per Minute (RPM). |
| 4 | getAvgSeekTime() | The average seek time of the disk, defined as the average time needed to move the read/write head from track x to track y. It also corresponds to one-third of the longest possible seek time, moving from the outermost track to the innermost track, assuming a uniform distribution of requests [92]. |
| 5 | getMaxInternalDataTransferRate() | The maximum internal data transfer rate, defined as the rate at which data is transferred physically from the disk to the internal buffer, also called Sustained Data Rate or Sustained Transfer Rate. |
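The design described in this section, one protected key-based accessor behind named public getters, can be sketched as follows. The getter names and keys follow Table 3; everything else is an assumption made for illustration: the class names HddModelSketch, HddModel and ExampleDrive are hypothetical, the drive values are made up and do not describe a real product, and the unit of the transfer rate is assumed to be MB/s.

```java
/** Illustrative sketch of the key-based HDD model; names and values are assumptions. */
class HddModelSketch {

    /** Common abstract class: one protected key-based accessor, public named getters. */
    abstract static class HddModel {
        /** Returns the characteristic stored under a key; keys follow Table 3. */
        protected abstract Object getCharacteristic(int key);

        public String getManufacturerName() { return (String) getCharacteristic(0); }
        public String getModelNumber() { return (String) getCharacteristic(1); }
        public double getCapacity() { return number(2); }
        public double getAvgRotationLatency() { return number(3); }
        public double getAvgSeekTime() { return number(4); }
        public double getMaxInternalDataTransferRate() { return number(5); }

        // Converts a numeric characteristic to double, whatever its boxed type.
        private double number(int key) {
            return ((Number) getCharacteristic(key)).doubleValue();
        }
    }

    /** A made-up 7200 RPM drive; none of these values describe a real product. */
    static final class ExampleDrive extends HddModel {
        private static final double RPM = 7200.0;

        @Override
        protected Object getCharacteristic(int key) {
            switch (key) {
                case 0: return "Example Manufacturer";
                case 1: return "EX-0001";
                case 2: return 4_000_000.0;       // capacity in MB
                case 3: return 0.5 * 60.0 / RPM;  // half a revolution, in seconds
                case 4: return 0.0085;            // average seek time, in seconds
                case 5: return 150.0;             // transfer rate, unit assumed to be MB/s
                default: throw new IllegalArgumentException("Unknown key: " + key);
            }
        }
    }
}
```

Key 3 illustrates the definition given in Table 3: at 7200 RPM one revolution takes 60/7200 s, so the average rotation latency is half of that, about 4.17 ms. Note that in Java a protected method remains callable from classes in the same package, so in this sketch the restriction only applies to code outside the package.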

3.2.2 HDD Power Model

For version 3.0 of the toolkit, Anton Beloglazov added a power package to CloudSim, based on his publication of the previous year [72]. This implementation provides the algorithms necessary for modeling and simulating energy-aware computational resources, i.e. Hosts and Virtual Machines. However, it does not provide energy awareness for the storage component.

Thus, similarly to 3.2.1, the package cloudsimdisk.power.models.hdd has been created in accordance with the existing power package. Inside, the abstract class PowerModelHdd.java implements semantically understandable getters to retrieve the power data of a specific HDD in a particular operating mode. Table 4 lists the operating power modes declared in HDD power models.

Table 4. CloudSimDisk HDD power mode.

| KEY | MODE | DESCRIPTION |
|---|---|---|
| 0 | Active | The disk is handling a request. |
| 1 | Idle | The disk is spinning but there is no activity on it. |
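A power model following the same pattern could look like the sketch below. Only the class name PowerModelHdd and the two modes of Table 4 come from the text; the method names getPowerData, getPowerActive and getPowerIdle, the ExamplePowerModel class and its wattages are hypothetical. The energy helper simply applies energy = power × time per mode and is an assumption about how such a model can be used, not a description of the CloudSimDisk energy computation.

```java
/** Illustrative sketch of a two-mode HDD power model; names and values are assumptions. */
class HddPowerSketch {

    // Mode keys as listed in Table 4.
    static final int ACTIVE = 0;
    static final int IDLE = 1;

    /** Abstract power model: key-based accessor behind named getters. */
    abstract static class PowerModelHdd {
        /** Returns the power in watts drawn in the given mode. */
        protected abstract double getPowerData(int key);

        public double getPowerActive() { return getPowerData(ACTIVE); }
        public double getPowerIdle() { return getPowerData(IDLE); }
    }

    /** Made-up power figures; they do not describe a real drive. */
    static final class ExamplePowerModel extends PowerModelHdd {
        @Override
        protected double getPowerData(int key) {
            switch (key) {
                case ACTIVE: return 8.0; // watts while handling a request
                case IDLE:   return 5.0; // watts while spinning without activity
                default: throw new IllegalArgumentException("Unknown mode: " + key);
            }
        }
    }

    /** Energy in joules for a period split between active and idle time (in seconds). */
    static double energyJoules(PowerModelHdd model, double activeSeconds, double idleSeconds) {
        return model.getPowerActive() * activeSeconds + model.getPowerIdle() * idleSeconds;
    }
}
```

With these illustrative figures, a disk that is active for 10 s and idle for 20 s consumes 8 × 10 + 5 × 20 = 180 J.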

3.2.3 Data Cloudlet

As explained in 2.3.1, CloudSimDisk models a request with a Cloudlet component. However, the CloudSim implementation of this component interacts mainly with the Host's CPU. No examples of interaction with storage elements are provided and no results are printed out. Thus, CloudSimDisk proposes an extension of the CloudSim Cloudlet. The default Cloudlet constructor with eight parameters has been reused. Additionally, two new parameters have been defined:

• requiredFiles: a list of filenames that need to be retrieved by the cloudlet. These requested files have to be stored on the persistent storage of the Datacenter before the cloudlet is executed.

• dataFiles: a list of files that need to be stored by the cloudlet. These new files will be added to the persistent storage of the Datacenter during the cloudlet processing.

Note that requiredFiles is already implemented in CloudSim v3.0.3, but the constructor parameter that sets this variable is called fileList. However, this list is not a list of File objects, but a list of Strings corresponding to filenames. To make matters even more confusing, the new parameter dataFiles implemented in CloudSimDisk is a list of File. Thus, in order to clarify things, the fileList parameter has not been reused by CloudSimDisk. Instead, requiredFiles and