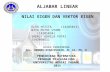

Chapter 6 Eigenvalues and Eigenvectors Po-Ning Chen, Professor Department of Electrical and Computer Engineering National Chiao Tung University Hsin Chu, Taiwan 30010, R.O.C.

-

6.1 Introduction to eigenvalues 6-1

Motivations

The static system problem $Ax = b$ has now been solved, e.g., by the Gauss-Jordan method or Cramer's rule.

However, a dynamic system problem such as

$$Ax = \lambda x$$

cannot be solved by the static system method.

To solve the dynamic system problem, we need to find the static feature of $A$ that is unchanged by the mapping $A$. In other words, $A$ maps $x$ to itself, possibly with some stretching ($\lambda > 1$), shrinking ($0 < \lambda < 1$), or reversal ($\lambda < 0$).

These invariant characteristics of $A$ are the eigenvalues and eigenvectors. $Ax$ maps a vector $x$ to the column space $C(A)$. We are looking for a $v \in C(A)$ such that $Av$ aligns with $v$. The collection of all such vectors is the set of eigenvectors.

-

6.1 Eigenvalues and eigenvectors 6-2

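Concept (Eigenvalues and eigenvectors): The eigenvalue-eigenvector pair $(\lambda_i, v_i)$ of a square matrix $A$ satisfies

$$A v_i = \lambda_i v_i \quad (\text{or equivalently } (A - \lambda_i I) v_i = 0)$$

where $v_i \neq 0$ (but $\lambda_i$ can be zero), and where $v_1, v_2, \ldots$ are linearly independent.

How to derive eigenvalues and eigenvectors?

For a $3 \times 3$ matrix $A$, we can obtain 3 eigenvalues $\lambda_1, \lambda_2, \lambda_3$ by solving

$$\det(A - \lambda I) = -\lambda^3 + c_2 \lambda^2 + c_1 \lambda + c_0 = 0.$$

If $\lambda_1, \lambda_2, \lambda_3$ are all unequal, then we continue to derive:

$$\begin{cases} A v_1 = \lambda_1 v_1 \\ A v_2 = \lambda_2 v_2 \\ A v_3 = \lambda_3 v_3 \end{cases} \iff \begin{cases} (A - \lambda_1 I) v_1 = 0 \\ (A - \lambda_2 I) v_2 = 0 \\ (A - \lambda_3 I) v_3 = 0 \end{cases} \iff \begin{cases} v_1 = \text{the only basis of the nullspace of } (A - \lambda_1 I) \\ v_2 = \text{the only basis of the nullspace of } (A - \lambda_2 I) \\ v_3 = \text{the only basis of the nullspace of } (A - \lambda_3 I) \end{cases}$$

and the resulting $v_1$, $v_2$ and $v_3$ are linearly independent of each other.

If $A$ is symmetric, then $v_1$, $v_2$ and $v_3$ are orthogonal to each other.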

-

6.1 Eigenvalues and eigenvectors 6-3

If, for example, $\lambda_1 = \lambda_2 \neq \lambda_3$, then we derive:

$$\begin{cases} (A - \lambda_1 I) v_1 = 0 \\ (A - \lambda_3 I) v_3 = 0 \end{cases} \iff \begin{cases} \{v_1, v_2\} = \text{the basis of the nullspace of } (A - \lambda_1 I) \\ v_3 = \text{the only basis of the nullspace of } (A - \lambda_3 I) \end{cases}$$

and the resulting $v_1$, $v_2$ and $v_3$ are linearly independent of each other when the nullspace of $(A - \lambda_1 I)$ is two dimensional. If $A$ is symmetric, then $v_1$, $v_2$ and $v_3$ are orthogonal to each other.

Yet, it is possible that the nullspace of $(A - \lambda_1 I)$ is one dimensional; then we can only have $v_1 = v_2$.

In such a case, we say the repeated eigenvalue $\lambda_1$ has only one eigenvector.

In other words, we say $A$ has only two eigenvectors.

If $A$ is symmetric, then $v_1$ and $v_3$ are orthogonal to each other.

-

6.1 Eigenvalues and eigenvectors 6-4

If $\lambda_1 = \lambda_2 = \lambda_3$, then we derive:

$$(A - \lambda_1 I) v_1 = 0 \iff \{v_1, v_2, v_3\} = \text{the basis of the nullspace of } (A - \lambda_1 I)$$

When the nullspace of $(A - \lambda_1 I)$ is three dimensional, $v_1$, $v_2$ and $v_3$ are linearly independent of each other.

When the nullspace of $(A - \lambda_1 I)$ is two dimensional, we say $A$ has only two eigenvectors.

When the nullspace of $(A - \lambda_1 I)$ is one dimensional, we say $A$ has only one eigenvector. In such a case, the matrix-form eigensystem becomes:

$$A \begin{bmatrix} v_1 & v_1 & v_1 \end{bmatrix} = \begin{bmatrix} v_1 & v_1 & v_1 \end{bmatrix} \begin{bmatrix} \lambda_1 & 0 & 0 \\ 0 & \lambda_1 & 0 \\ 0 & 0 & \lambda_1 \end{bmatrix}$$

If $A$ is symmetric, then distinct eigenvectors are orthogonal to each other.

-

6.1 Eigenvalues and eigenvectors 6-5

Invariance of eigenvectors and eigenvalues.

Property 1: The eigenvectors stay the same for every power of $A$. The eigenvalues equal the same power of the respective eigenvalues.

I.e., $A^n v_i = \lambda_i^n v_i$.

$$A v_i = \lambda_i v_i \implies A^2 v_i = A(\lambda_i v_i) = \lambda_i A v_i = \lambda_i^2 v_i$$

Property 2: If the nullspace of $A_{n \times n}$ contains non-zero vectors, then 0 is an eigenvalue of $A$.

Accordingly, there exists a non-zero $v_i$ that satisfies $A v_i = 0 \cdot v_i = 0$.

-

6.1 Eigenvalues and eigenvectors 6-6

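Property 3: Assume with no loss of generality $|\lambda_1| > |\lambda_2| > \cdots > |\lambda_k|$. For any vector $x = a_1 v_1 + a_2 v_2 + \cdots + a_k v_k$ that is a linear combination of all eigenvectors, the normalized mapping $P = \frac{1}{\lambda_1} A$ (namely,

$$P v_1 = \left(\frac{1}{\lambda_1} A\right) v_1 = \frac{1}{\lambda_1} (A v_1) = \frac{1}{\lambda_1} \lambda_1 v_1 = v_1)$$

converges to the eigenvector with the largest absolute eigenvalue (when being applied repeatedly).

I.e., writing $m$ for the number of applications,

$$\lim_{m \to \infty} P^m x = \lim_{m \to \infty} \frac{1}{\lambda_1^m} A^m x = \lim_{m \to \infty} \frac{1}{\lambda_1^m} \left( a_1 A^m v_1 + a_2 A^m v_2 + \cdots + a_k A^m v_k \right)$$

$$= \lim_{m \to \infty} \frac{1}{\lambda_1^m} \Big( \underbrace{a_1 \lambda_1^m v_1}_{\text{steady state}} + \underbrace{a_2 \lambda_2^m v_2 + \cdots + a_k \lambda_k^m v_k}_{\text{transient states}} \Big) = a_1 v_1.$$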
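Property 3 is the idea behind power iteration. The following is a minimal sketch, not part of the original slides: since $\lambda_1$ is usually unknown in advance, it rescales by the largest component after each multiplication instead of dividing by $\lambda_1$. The helper names `mat_vec` and `power_iteration` are illustrative assumptions.

```python
def mat_vec(A, x):
    """Multiply a square matrix (list of rows) by a vector (list)."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def power_iteration(A, x, steps=60):
    """Apply A repeatedly, rescaling so the largest component has magnitude 1."""
    for _ in range(steps):
        x = mat_vec(A, x)
        scale = max(abs(c) for c in x)
        x = [c / scale for c in x]
    # Estimate the dominant eigenvalue by comparing Ax with x
    # at the component of largest magnitude.
    Ax = mat_vec(A, x)
    k = max(range(len(x)), key=lambda i: abs(x[i]))
    return Ax[k] / x[k], x


# A has eigenvalues 3 and -1; the dominant eigenvector is a multiple of (1, 2).
A = [[1.0, 1.0], [4.0, 1.0]]
lam, v = power_iteration(A, [1.0, 0.0])
```

The starting vector must have a non-zero component $a_1$ along $v_1$; otherwise the iteration cannot find the dominant direction.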

-

6.1 How to determine the eigenvalues? 6-7

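We wish to find a non-zero vector $v$ that satisfies $Av = \lambda v$; then

$$(A - \lambda I) v = 0, \text{ where } v \neq 0 \iff \det(A - \lambda I) = 0.$$

So by solving $\det(A - \lambda I) = 0$, we can obtain all the eigenvalues of $A$.

Example. Find the eigenvalues of $A = \begin{bmatrix} 0.5 & 0.5 \\ 0.5 & 0.5 \end{bmatrix}$.

Solution.

$$\det(A - \lambda I) = \det \begin{bmatrix} 0.5 - \lambda & 0.5 \\ 0.5 & 0.5 - \lambda \end{bmatrix} = (0.5 - \lambda)^2 - 0.5^2 = \lambda^2 - \lambda = \lambda(\lambda - 1) = 0 \implies \lambda = 0 \text{ or } 1.$$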

-

6.1 How to determine the eigenvalues? 6-8

Proposition: A projection matrix (defined in Section 4.2) has eigenvalues either 1 or 0.

Proof:

A projection matrix always satisfies $P^2 = P$. So $P^2 v = P v = \lambda v$. By definition of eigenvalues and eigenvectors, we have $P^2 v = \lambda^2 v$. Hence, $\lambda v = \lambda^2 v$ for a non-zero vector $v$, which immediately implies $\lambda = \lambda^2$. Accordingly, $\lambda$ is either 1 or 0.

Proposition: A permutation matrix has eigenvalues satisfying $\lambda^k = 1$ for some integer $k$.

Proof:

A permutation matrix always satisfies $P^{k+1} = P$ for some integer $k$.

Example. $P = \begin{bmatrix} 0 & 0 & 1 \\ 1 & 0 & 0 \\ 0 & 1 & 0 \end{bmatrix}$. Then, $P v = \begin{bmatrix} v_3 \\ v_1 \\ v_2 \end{bmatrix}$ and $P^3 v = v$. Hence, $k = 3$.

Accordingly, $\lambda^{k+1} v = \lambda v$, which gives $\lambda^k = 1$ since the eigenvalue of $P$ cannot be zero.

-

6.1 How to determine the eigenvalues? 6-9

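Proposition: Matrix

$$\alpha_m A^m + \alpha_{m-1} A^{m-1} + \cdots + \alpha_1 A + \alpha_0 I$$

has the same eigenvectors as $A$, but its eigenvalues become

$$\alpha_m \lambda^m + \alpha_{m-1} \lambda^{m-1} + \cdots + \alpha_1 \lambda + \alpha_0,$$

where $\lambda$ is the eigenvalue of $A$.

Proof:

Let $v_i$ and $\lambda_i$ be the eigenvector and eigenvalue of $A$. Then,

$$\left( \alpha_m A^m + \alpha_{m-1} A^{m-1} + \cdots + \alpha_0 I \right) v_i = \left( \alpha_m \lambda_i^m + \alpha_{m-1} \lambda_i^{m-1} + \cdots + \alpha_0 \right) v_i$$

Hence, $v_i$ and $\left( \alpha_m \lambda_i^m + \alpha_{m-1} \lambda_i^{m-1} + \cdots + \alpha_0 \right)$ are the eigenvector and eigenvalue of the polynomial matrix.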

-

6.1 How to determine the eigenvalues? 6-10

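Theorem (Cayley-Hamilton): For a square matrix $A$, define

$$f(\lambda) = \det(A - \lambda I) = (-1)^n \lambda^n + \alpha_{n-1} \lambda^{n-1} + \cdots + \alpha_0.$$

(Suppose $A$ has $n$ linearly independent eigenvectors.) Then

$$f(A) = (-1)^n A^n + \alpha_{n-1} A^{n-1} + \cdots + \alpha_0 I = \text{all-zero } n \times n \text{ matrix}.$$

Proof: The eigenvalues of $f(A)$ are all zeros and the eigenvectors of $f(A)$ remain the same as those of $A$. By definition of the eigen-system, we have

$$f(A) \begin{bmatrix} v_1 & v_2 & \cdots & v_n \end{bmatrix} = \begin{bmatrix} v_1 & v_2 & \cdots & v_n \end{bmatrix} \begin{bmatrix} f(\lambda_1) & 0 & \cdots & 0 \\ 0 & f(\lambda_2) & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & f(\lambda_n) \end{bmatrix}.$$

Since every $f(\lambda_i) = 0$ and the matrix of eigenvectors is invertible, $f(A)$ is the all-zero matrix.

Corollary (Cayley-Hamilton): (Suppose $A$ has $n$ linearly independent eigenvectors.)

$$(\lambda_1 I - A)(\lambda_2 I - A) \cdots (\lambda_n I - A) = \text{all-zero matrix}$$

Proof: $f(\lambda)$ can be re-written as $f(\lambda) = (\lambda_1 - \lambda)(\lambda_2 - \lambda) \cdots (\lambda_n - \lambda)$.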
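For a $2 \times 2$ matrix the characteristic polynomial is $\lambda^2 - \mathrm{trace}(A)\lambda + \det(A)$, so the theorem can be checked numerically. The sketch below is an illustration only; the helper names are assumptions, and the first test matrix is deliberately one with a single eigenvector to show the identity still holds there.

```python
def mat_mul(A, B):
    """Multiply two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def cayley_hamilton_residual(A):
    """Return A^2 - trace(A) A + det(A) I for a 2x2 matrix A."""
    tr = A[0][0] + A[1][1]
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    A2 = mat_mul(A, A)
    identity = [[1, 0], [0, 1]]
    return [[A2[i][j] - tr * A[i][j] + det * identity[i][j] for j in range(2)]
            for i in range(2)]


residual_defective = cayley_hamilton_residual([[1, -1], [1, -1]])
residual_regular = cayley_hamilton_residual([[1, 1], [4, 1]])
```

Both residuals are the zero matrix, computed in exact integer arithmetic.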

-

6.1 How to determine the eigenvalues? 6-11

(Problem 11, Section 6.1) Here is a strange fact about 2 by 2 matrices with eigenvalues $\lambda_1 \neq \lambda_2$: The columns of $A - \lambda_1 I$ are multiples of the eigenvector $x_2$. Any idea why this should be?

Hint: $(\lambda_1 I - A)(\lambda_2 I - A) = \begin{bmatrix} 0 & 0 \\ 0 & 0 \end{bmatrix}$ implies

$$(\lambda_1 I - A) w_1 = 0 \text{ and } (\lambda_1 I - A) w_2 = 0, \text{ where } (\lambda_2 I - A) = \begin{bmatrix} w_1 & w_2 \end{bmatrix}.$$

Hence, the columns of $(\lambda_2 I - A)$ give the eigenvectors of $\lambda_1$ if they are non-zero vectors, and the columns of $(\lambda_1 I - A)$ give the eigenvectors of $\lambda_2$ if they are non-zero vectors.

So, the (non-zero) columns of $A - \lambda_1 I$ are (multiples of) the eigenvector $x_2$.

-

6.1 Why Gauss-Jordan cannot solve Ax = x? 6-12

The forward elimination may change the eigenvalues and eigenvectors?

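Example. Check the eigenvalues and eigenvectors of $A = \begin{bmatrix} 1 & -2 \\ 2 & 5 \end{bmatrix}$.

Solution.

The eigenvalues of $A$ satisfy $\det(A - \lambda I) = (\lambda - 3)^2 = 0$.

$A = LU = \begin{bmatrix} 1 & 0 \\ 2 & 1 \end{bmatrix} \begin{bmatrix} 1 & -2 \\ 0 & 9 \end{bmatrix}$. The eigenvalues of $U$ apparently satisfy $\det(U - \lambda I) = (1 - \lambda)(9 - \lambda) = 0$.

Suppose $u_1$ and $u_2$ are the eigenvectors of $U$, respectively corresponding to eigenvalues 1 and 9. Then, they cannot be the eigenvectors of $A$ since if they were,

$$3 u_1 = A u_1 = L U u_1 = L u_1 = \begin{bmatrix} 1 & 0 \\ 2 & 1 \end{bmatrix} u_1 \implies u_1 = 0$$

$$3 u_2 = A u_2 = L U u_2 = 9 L u_2 = 9 \begin{bmatrix} 1 & 0 \\ 2 & 1 \end{bmatrix} u_2 \implies u_2 = 0.$$

Hence, the eigenvalues have nothing to do with the pivots (except for a triangular $A$).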

-

6.1 How to determine the eigenvalues? (Revisited) 6-13

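Solve $\det(A - \lambda I) = 0$. $\det(A - \lambda I)$ is a polynomial of order $n$.

$$f(\lambda) = \det(A - \lambda I) = \det \begin{bmatrix} a_{1,1} - \lambda & a_{1,2} & a_{1,3} & \cdots & a_{1,n} \\ a_{2,1} & a_{2,2} - \lambda & a_{2,3} & \cdots & a_{2,n} \\ a_{3,1} & a_{3,2} & a_{3,3} - \lambda & \cdots & a_{3,n} \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ a_{n,1} & a_{n,2} & a_{n,3} & \cdots & a_{n,n} - \lambda \end{bmatrix}$$

$$= (a_{1,1} - \lambda)(a_{2,2} - \lambda) \cdots (a_{n,n} - \lambda) + \text{terms of order at most } (n - 2) \quad \text{(by the Leibniz formula)}$$

$$= (\lambda_1 - \lambda)(\lambda_2 - \lambda) \cdots (\lambda_n - \lambda)$$

Observations

The coefficient of $\lambda^n$ is $(-1)^n$, provided $n \geq 1$.

The coefficient of $\lambda^{n-1}$ is $(-1)^{n-1} \sum_{i=1}^n \lambda_i = (-1)^{n-1} \sum_{i=1}^n a_{i,i} = (-1)^{n-1} \, \mathrm{trace}(A)$, provided $n \geq 2$. In particular, $\sum_{i=1}^n \lambda_i = \mathrm{trace}(A)$.

. . .

The coefficient of $\lambda^0$ is $\prod_{i=1}^n \lambda_i = f(0) = \det(A)$.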

-

6.1 How to determine the eigenvalues? (Revisited) 6-14

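These observations make it easy to find the eigenvalues of a $2 \times 2$ matrix.

Example. Find the eigenvalues of $A = \begin{bmatrix} 1 & 1 \\ 4 & 1 \end{bmatrix}$.

Solution.

$$\begin{cases} \lambda_1 + \lambda_2 = 1 + 1 = 2 \\ \lambda_1 \lambda_2 = 1 - 4 = -3 \end{cases} \implies (\lambda_1 - \lambda_2)^2 = (\lambda_1 + \lambda_2)^2 - 4 \lambda_1 \lambda_2 = 16 \implies \lambda_1 - \lambda_2 = 4 \implies \lambda = 3, -1.$$

Example. Find the eigenvalues of $A = \begin{bmatrix} 1 & 1 \\ 2 & 2 \end{bmatrix}$.

Solution.

$$\begin{cases} \lambda_1 + \lambda_2 = 3 \\ \lambda_1 \lambda_2 = 0 \end{cases} \implies \lambda = 3, 0.$$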
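The trace and determinant shortcut for $2 \times 2$ matrices can be sketched in a few lines. This is an illustration under the assumption that the matrix is given as a list of two rows; the function name `eig2x2` is hypothetical, and `cmath.sqrt` is used so that a negative discriminant yields complex eigenvalues instead of an error.

```python
import cmath


def eig2x2(A):
    """Eigenvalues of a 2x2 matrix from its trace and determinant."""
    tr = A[0][0] + A[1][1]                        # lambda1 + lambda2
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]   # lambda1 * lambda2
    diff = cmath.sqrt(tr * tr - 4 * det)          # lambda1 - lambda2
    return (tr + diff) / 2, (tr - diff) / 2


first = eig2x2([[1, 1], [4, 1]])      # sum 2, product -3
second = eig2x2([[1, 1], [2, 2]])     # sum 3, product 0
rotation = eig2x2([[0, 1], [-1, 0]])  # sum 0, product 1
```

The results are returned as Python complex numbers even when the eigenvalues are real.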

-

6.1 Imaginary eigenvalues 6-15

In some cases, we have to allow imaginary eigenvalues.

In order to solve polynomial equations $f(\lambda) = 0$, mathematicians were forced to imagine that there exists a number $x$ satisfying $x^2 = -1$.

By this technique, a polynomial equation of order $n$ has exactly $n$ (possibly complex, not real) solutions.

Example. Solve $\lambda^2 + 1 = 0$. $\implies \lambda = \pm i$.

Based on this, to solve for the eigenvalues, we are forced to accept imaginary eigenvalues.

Example. Find the eigenvalues of $A = \begin{bmatrix} 0 & 1 \\ -1 & 0 \end{bmatrix}$.

Solution. $\det(A - \lambda I) = \lambda^2 + 1 = 0 \implies \lambda = \pm i$.

-

6.1 Imaginary eigenvalues 6-16

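Proposition: The eigenvalues of a symmetric matrix $A$ (with real entries) are real, and the eigenvalues of a skew-symmetric (or antisymmetric) matrix $B$ are pure imaginary.

Proof:

Suppose $Av = \lambda v$. Then, with $\bar{v}$ and $\bar{\lambda}$ denoting complex conjugates,

$$A \bar{v} = \bar{\lambda} \bar{v} \quad (A \text{ real})$$

$$\implies (A \bar{v})^T v = (\bar{\lambda} \bar{v})^T v \quad (\text{equivalently, } v \cdot (A \bar{v}) = v \cdot (\bar{\lambda} \bar{v}))$$

$$\implies \bar{v}^T A^T v = \bar{\lambda} \bar{v}^T v$$

$$\implies \bar{v}^T A v = \bar{\lambda} \bar{v}^T v \quad (\text{symmetry means } A^T = A)$$

$$\implies \bar{v}^T \lambda v = \bar{\lambda} \bar{v}^T v \quad (Av = \lambda v)$$

$$\implies \lambda \|v\|^2 = \bar{\lambda} \|v\|^2 \quad (\text{an eigenvector must be non-zero, i.e., } \|v\|^2 \neq 0)$$

$$\implies \lambda = \bar{\lambda} \implies \lambda \text{ real}$$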

-

6.1 Imaginary eigenvalues 6-17

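Suppose $Bv = \lambda v$. Then,

$$B \bar{v} = \bar{\lambda} \bar{v} \quad (B \text{ real})$$

$$\implies (B \bar{v})^T v = (\bar{\lambda} \bar{v})^T v$$

$$\implies \bar{v}^T B^T v = \bar{\lambda} \bar{v}^T v$$

$$\implies \bar{v}^T (-B) v = \bar{\lambda} \bar{v}^T v \quad (\text{skew-symmetry means } B^T = -B)$$

$$\implies \bar{v}^T (-\lambda) v = \bar{\lambda} \bar{v}^T v \quad (Bv = \lambda v)$$

$$\implies (-\lambda) \|v\|^2 = \bar{\lambda} \|v\|^2 \quad (\text{an eigenvector must be non-zero, i.e., } \|v\|^2 \neq 0)$$

$$\implies -\lambda = \bar{\lambda} \implies \lambda \text{ pure imaginary}$$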

-

6.1 Eigenvalues/eigenvectors of inverse 6-18

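For an invertible $A$, the relation between the eigenvalues and eigenvectors of $A$ and $A^{-1}$ can be well determined.

Proposition: The eigenvalues and eigenvectors of $A^{-1}$ are

$$\left( \frac{1}{\lambda_1}, v_1 \right), \left( \frac{1}{\lambda_2}, v_2 \right), \ldots, \left( \frac{1}{\lambda_n}, v_n \right),$$

where $\{(\lambda_i, v_i)\}_{i=1}^n$ are the eigenvalues and eigenvectors of $A$.

Proof:

The eigenvalues of an invertible $A$ must be non-zero because $\det(A) = \prod_{i=1}^n \lambda_i \neq 0$.

Suppose $Av = \lambda v$, where $v \neq 0$ and $\lambda \neq 0$. (I.e., $\lambda$ and $v$ are an eigenvalue and eigenvector of $A$.)

So, $Av = \lambda v \implies A^{-1}(Av) = A^{-1}(\lambda v) \implies v = \lambda A^{-1} v \implies \frac{1}{\lambda} v = A^{-1} v$.

Note: The eigenvalues of $A^k$ and $A^{-1}$ are respectively $\lambda^k$ and $\lambda^{-1}$ with the same eigenvectors as $A$, where $\lambda$ is the eigenvalue of $A$.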

-

6.1 Eigenvalues/eigenvectors of inverse 6-19

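Proposition: The eigenvalues of $A^T$ are the same as the eigenvalues of $A$. (But they may have different eigenvectors.)

Proof: $\det(A - \lambda I) = \det\left( (A - \lambda I)^T \right) = \det(A^T - \lambda I^T) = \det(A^T - \lambda I)$.

Corollary: The eigenvalues of an invertible (real-valued) matrix $A$ satisfying $A^{-1} = A^T$ are on the unit circle of the complex plane.

Proof: Suppose $Av = \lambda v$. Then,

$$A \bar{v} = \bar{\lambda} \bar{v} \quad (A \text{ real})$$

$$\implies (A \bar{v})^T v = (\bar{\lambda} \bar{v})^T v$$

$$\implies \bar{v}^T A^T v = \bar{\lambda} \bar{v}^T v$$

$$\implies \bar{v}^T A^{-1} v = \bar{\lambda} \bar{v}^T v \quad (A^T = A^{-1})$$

$$\implies \bar{v}^T \frac{1}{\lambda} v = \bar{\lambda} \bar{v}^T v \quad (A^{-1} v = \tfrac{1}{\lambda} v)$$

$$\implies \frac{1}{\lambda} \|v\|^2 = \bar{\lambda} \|v\|^2 \quad (\text{an eigenvector must be non-zero, i.e., } \|v\|^2 \neq 0)$$

$$\implies \lambda \bar{\lambda} = |\lambda|^2 = 1$$

Example. Find the eigenvalues of $A = \begin{bmatrix} 0 & 1 \\ -1 & 0 \end{bmatrix}$, which satisfies $A^T = A^{-1}$.

Solution. $\det(A - \lambda I) = \lambda^2 + 1 = 0 \implies \lambda = \pm i$.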

-

6.1 Determination of eigenvectors 6-20

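After the identification of the eigenvalues via $\det(A - \lambda I) = 0$, we can determine the respective eigenvectors using the nullspace technique.

Recall (from Slide 3-42) how we completely solve

$$B_{n \times n} v_{n \times 1} = (A_{n \times n} - \lambda I_{n \times n}) v_{n \times 1} = 0_{n \times 1}.$$

Answer:

$$R = \mathrm{rref}(B) = \begin{bmatrix} I_{r \times r} & F_{r \times (n-r)} \\ 0_{(n-r) \times r} & 0_{(n-r) \times (n-r)} \end{bmatrix} \text{ (with no row exchange)} \implies N_{n \times (n-r)} = \begin{bmatrix} -F_{r \times (n-r)} \\ I_{(n-r) \times (n-r)} \end{bmatrix}$$

Then, every solution $v$ of $Bv = 0$ is of the form

$$v^{(\text{null})}_{n \times 1} = N_{n \times (n-r)} w_{(n-r) \times 1} \text{ for any } w \in \mathbb{R}^{n-r}.$$

Here, we usually take $(n - r)$ orthonormal basis vectors of the null space as the representative eigenvectors; these are exactly the $(n - r)$ columns of $N_{n \times (n-r)}$ (with proper normalization and orthogonalization).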

-

6.1 Determination of eigenvectors 6-21

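(Problem 12, Section 6.1) Find three eigenvectors for this matrix $P$ (projection matrices have $\lambda = 1$ and 0):

$$\text{Projection matrix } P = \begin{bmatrix} 0.2 & 0.4 & 0 \\ 0.4 & 0.8 & 0 \\ 0 & 0 & 1 \end{bmatrix}.$$

If two eigenvectors share the same $\lambda$, so do all their linear combinations. Find an eigenvector of $P$ with no zero components.

Solution.

$\det(P - \lambda I) = 0$ gives $\lambda (1 - \lambda)^2 = 0$; so $\lambda = 0, 1, 1$.

$\lambda = 1$: $\mathrm{rref}(P - I) = \begin{bmatrix} 1 & -\frac{1}{2} & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 0 \end{bmatrix}$; so $F_{1 \times 2} = \begin{bmatrix} -\frac{1}{2} & 0 \end{bmatrix}$, and $N_{3 \times 2} = \begin{bmatrix} \frac{1}{2} & 0 \\ 1 & 0 \\ 0 & 1 \end{bmatrix}$, which implies eigenvectors $\begin{bmatrix} \frac{1}{2} \\ 1 \\ 0 \end{bmatrix}$ and $\begin{bmatrix} 0 \\ 0 \\ 1 \end{bmatrix}$.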

-

6.1 Determination of eigenvectors 6-22

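$\lambda = 0$: $\mathrm{rref}(P) = \begin{bmatrix} 1 & 2 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 1 \end{bmatrix}$ (with no row exchange); so $F = \begin{bmatrix} 2 \\ 0 \end{bmatrix}$, and $N_{3 \times 1} = \begin{bmatrix} -2 \\ 1 \\ 0 \end{bmatrix}$, which implies eigenvector $\begin{bmatrix} -2 \\ 1 \\ 0 \end{bmatrix}$.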
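As a sanity check on Problem 12, the sketch below verifies $Pv = \lambda v$ directly for the eigenvectors found by the nullspace technique. It also checks the sum of the two $\lambda = 1$ eigenvectors, $(\tfrac{1}{2}, 1, 1)$, which is one possible answer for an eigenvector with no zero components. The helper names are illustrative assumptions.

```python
def mat_vec(A, x):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def is_eigenpair(A, lam, v, tol=1e-12):
    """Return True when A v equals lam * v componentwise, within tol."""
    return all(abs(a - lam * b) <= tol for a, b in zip(mat_vec(A, v), v))


P = [[0.2, 0.4, 0.0],
     [0.4, 0.8, 0.0],
     [0.0, 0.0, 1.0]]

checks = [
    is_eigenpair(P, 1, [0.5, 1.0, 0.0]),   # lambda = 1
    is_eigenpair(P, 1, [0.0, 0.0, 1.0]),   # lambda = 1
    is_eigenpair(P, 0, [-2.0, 1.0, 0.0]),  # lambda = 0
    is_eigenpair(P, 1, [0.5, 1.0, 1.0]),   # combination, no zero components
]
```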

-

6.1 MATLAB revisited 6-23

In MATLAB:

We can find the eigenvalues and eigenvectors of a matrix $A$ by:

`[V, D] = eig(A); % Find the eigenvalues/vectors of A`

The columns of V are the eigenvectors. The diagonal entries of D are the eigenvalues.

Proposition:

The eigenvectors corresponding to non-zero eigenvalues are in $C(A)$. The eigenvectors corresponding to zero eigenvalues are in $N(A)$.

Proof: The first one can be seen from $Av = \lambda v$ and the second one can be proved by $Av = 0$.

-

6.1 Some discussions on problems 6-24

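(Problem 25, Section 6.1) Suppose $A$ and $B$ have the same eigenvalues $\lambda_1, \ldots, \lambda_n$ with the same independent eigenvectors $x_1, \ldots, x_n$. Then $A = B$. Reason: Any vector $x$ is a combination $c_1 x_1 + \cdots + c_n x_n$. What is $Ax$? What is $Bx$?

Thinking over Problem 25: Suppose $A$ and $B$ have the same eigenvalues and eigenvectors (not necessarily independent). Can we claim that $A = B$?

Answer to the thinking: Not necessarily.

As a counterexample, both $A = \begin{bmatrix} 1 & -1 \\ 1 & -1 \end{bmatrix}$ and $B = \begin{bmatrix} 2 & -2 \\ 2 & -2 \end{bmatrix}$ have eigenvalues 0, 0 and the single eigenvector $\begin{bmatrix} 1 \\ 1 \end{bmatrix}$, but they are not equal.

If however the eigenvectors span the $n$-dimensional space (for instance, when there are $n$ of them and they are linearly independent), then $A = B$.

Hint for Problem 25:

$$A \begin{bmatrix} x_1 & \cdots & x_n \end{bmatrix} = \begin{bmatrix} x_1 & \cdots & x_n \end{bmatrix} \begin{bmatrix} \lambda_1 & 0 & \cdots & 0 \\ 0 & \lambda_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \lambda_n \end{bmatrix}.$$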

This important fact will be re-emphasized in Section 6.2.

-

6.1 Some discussions on problems 6-25

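(Problem 26, Section 6.1) The block $B$ has eigenvalues 1, 2 and $C$ has eigenvalues 3, 4 and $D$ has eigenvalues 5, 7. Find the eigenvalues of the 4 by 4 matrix $A$:

$$A = \begin{bmatrix} B & C \\ 0 & D \end{bmatrix} = \begin{bmatrix} 0 & 1 & 3 & 0 \\ -2 & 3 & 0 & 4 \\ 0 & 0 & 6 & 1 \\ 0 & 0 & 1 & 6 \end{bmatrix}.$$

Thinking over Problem 26: The eigenvalues of $\begin{bmatrix} B & C \\ 0 & D \end{bmatrix}$ are the eigenvalues of $B$ and $D$ because we can show

$$\det \begin{bmatrix} B & C \\ 0 & D \end{bmatrix} = \det(B) \det(D).$$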

(See Problems 23 and 25 in Section 5.2.)

-

6.1 Some discussions on problems 6-26

(Problem 23, Section 5.2) With 2 by 2 blocks in 4 by 4 matrices, you cannot always use block determinants:

$$\begin{vmatrix} A & B \\ 0 & D \end{vmatrix} = |A| \, |D| \quad \text{but} \quad \begin{vmatrix} A & B \\ C & D \end{vmatrix} \neq |A| \, |D| - |C| \, |B|.$$

(a) Why is the first statement true? Somehow $B$ doesn't enter.

Hint: Leibniz formula.

(b) Show by example that equality fails (as shown) when $C$ enters.

(c) Show by example that the answer $\det(AD - CB)$ is also wrong.

-

6.1 Some discussions on problems 6-27

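(Problem 25, Section 5.2) Block elimination subtracts $CA^{-1}$ times the first row $\begin{bmatrix} A & B \end{bmatrix}$ from the second row $\begin{bmatrix} C & D \end{bmatrix}$. This leaves the Schur complement $D - CA^{-1}B$ in the corner:

$$\begin{bmatrix} I & 0 \\ -CA^{-1} & I \end{bmatrix} \begin{bmatrix} A & B \\ C & D \end{bmatrix} = \begin{bmatrix} A & B \\ 0 & D - CA^{-1}B \end{bmatrix}.$$

Take determinants of these block matrices to prove correct rules if $A^{-1}$ exists:

$$\begin{vmatrix} A & B \\ C & D \end{vmatrix} = |A| \, |D - CA^{-1}B| = |AD - CB| \text{ provided } AC = CA.$$

Hint: $\det(A) \det(D - CA^{-1}B) = \det\left( A(D - CA^{-1}B) \right)$.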

-

6.1 Some discussions on problems 6-28

(Problem 37, Section 6.1)

(a) Find the eigenvalues and eigenvectors of $A$. They depend on $c$:

$$A = \begin{bmatrix} .4 & 1 - c \\ .6 & c \end{bmatrix}.$$

(b) Show that $A$ has just one line of eigenvectors when $c = 1.6$.

(c) This is a Markov matrix when $c = .8$. Then $A^n$ will approach what matrix $A^\infty$?

Definition (Markov matrix): A Markov matrix is a matrix with positive entries, for which every column adds to one.

Note that some researchers define the Markov matrix by replacing "positive" with "non-negative"; thus, they will use the term "positive Markov matrix". The observations below hold for Markov matrices with non-negative entries.

-

6.1 Some discussions on problems 6-29

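1 must be one of the eigenvalues of a Markov matrix.

Proof: $A$ and $A^T$ have the same eigenvalues, and $A^T \begin{bmatrix} 1 \\ \vdots \\ 1 \end{bmatrix} = \begin{bmatrix} 1 \\ \vdots \\ 1 \end{bmatrix}$.

The eigenvalues of a Markov matrix satisfy $|\lambda| \leq 1$.

Proof: $A^T v = \lambda v$ implies $\sum_{i=1}^n a_{i,j} v_i = \lambda v_j$; hence, by letting $v_k$ satisfy $|v_k| = \max_{1 \leq i \leq n} |v_i|$, we have

$$|\lambda v_k| = \left| \sum_{i=1}^n a_{i,k} v_i \right| \leq \sum_{i=1}^n a_{i,k} |v_i| \leq \sum_{i=1}^n a_{i,k} |v_k| = |v_k|$$

which implies the desired result.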
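For the Markov matrix of Problem 37 with $c = .8$, the eigenvalues are 1 and 0.2, so the powers $A^n$ settle quickly. The following sketch is illustrative only, with assumed helper names `mat_mul` and `mat_pow`; it multiplies the matrix by itself repeatedly and compares the result with the matrix whose columns are both the $\lambda = 1$ eigenvector $(0.25, 0.75)$, normalized to sum to one.

```python
def mat_mul(A, B):
    """Multiply two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def mat_pow(A, n):
    """Compute A^n for n >= 1 by repeated multiplication."""
    result = A
    for _ in range(n - 1):
        result = mat_mul(result, A)
    return result


A = [[0.4, 0.2],
     [0.6, 0.8]]           # every column adds to one
limit = mat_pow(A, 60)     # the 0.2^60 transient is negligible
steady = [[0.25, 0.25],
          [0.75, 0.75]]
```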

-

6.1 Some discussions on problems 6-30

(Problem 31, Section 6.1) If we exchange rows 1 and 2 and columns 1 and 2, the eigenvalues don't change. Find eigenvectors of $A$ and $B$ for $\lambda_1 = 11$. Rank one gives $\lambda_2 = \lambda_3 = 0$.

$$A = \begin{bmatrix} 1 & 2 & 1 \\ 3 & 6 & 3 \\ 4 & 8 & 4 \end{bmatrix} \text{ and } B = PAP^T = \begin{bmatrix} 6 & 3 & 3 \\ 2 & 1 & 1 \\ 8 & 4 & 4 \end{bmatrix}.$$

Thinking over Problem 31: This is sometimes useful in determining the eigenvalues and eigenvectors.

If $Av = \lambda v$ and $B = PAP^{-1}$ (for any invertible $P$), then $\lambda$ and $u = Pv$ are the eigenvalue and eigenvector of $B$.

Proof: $Bu = PAP^{-1}u = PAv = P(\lambda v) = \lambda Pv = \lambda u$.

For example, $P = \begin{bmatrix} 0 & 1 & 0 \\ 1 & 0 & 0 \\ 0 & 0 & 1 \end{bmatrix}$. In such a case, $P^{-1} = P = P^T$.

-

6.1 Some discussions on problems 6-31

With the fact above, we can further claim that

Proposition. The eigenvalues of $AB$ and $BA$ are equal if one of $A$ and $B$ is invertible.

Proof: This can be proved by $AB = A(BA)A^{-1}$ (or $AB = B^{-1}(BA)B$). Note that $AB$ has eigenvector $Av$ (or $B^{-1}v$) if $v$ is the eigenvector of $BA$.

-

6.1 Some discussions on problems 6-32

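The eigenvectors of a diagonal matrix $\Lambda = \begin{bmatrix} \lambda_1 & 0 & \cdots & 0 \\ 0 & \lambda_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \lambda_n \end{bmatrix}$ are apparently

$$e_i = \begin{bmatrix} 0 & \cdots & 0 & 1 & 0 & \cdots & 0 \end{bmatrix}^T \text{ (with the 1 at position } i\text{)}, \quad i = 1, 2, \ldots, n.$$

Hence, $A = S \Lambda S^{-1}$ has the same eigenvalues as $\Lambda$ and has eigenvectors $v_i = S e_i$. This implies that

$$S = \begin{bmatrix} v_1 & v_2 & \cdots & v_n \end{bmatrix}$$

where $\{v_i\}$ are the eigenvectors of $A$.

What if $S$ is not invertible? Then, we have the next theorem.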

-

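6.2 Diagonalizing a matrix 6-33

A convenience for the eigen-system analysis is that we can diagonalize a matrix.

Theorem. A matrix $A$ can be written as

$$A \underbrace{\begin{bmatrix} v_1 & v_2 & \cdots & v_n \end{bmatrix}}_{= S} = \begin{bmatrix} v_1 & v_2 & \cdots & v_n \end{bmatrix} \underbrace{\begin{bmatrix} \lambda_1 & 0 & \cdots & 0 \\ 0 & \lambda_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \lambda_n \end{bmatrix}}_{= \Lambda}$$

where $\{(\lambda_i, v_i)\}_{i=1}^n$ are the eigenvalue-eigenvector pairs of $A$.

Proof: The theorem holds by definition of eigenvalues and eigenvectors.

Corollary. If $S$ is invertible, then

$$S^{-1} A S = \Lambda, \text{ equivalently } A = S \Lambda S^{-1}.$$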

-

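6.2 Diagonalizing a matrix 6-34

This makes it easy to compute a polynomial with argument $A$.

Proposition (recall Slide 6-9): Matrix

$$\alpha_m A^m + \alpha_{m-1} A^{m-1} + \cdots + \alpha_0 I$$

has the same eigenvectors as $A$, but its eigenvalues become

$$\alpha_m \lambda^m + \alpha_{m-1} \lambda^{m-1} + \cdots + \alpha_0,$$

where $\lambda$ is the eigenvalue of $A$.

Proposition:

$$\alpha_m A^m + \alpha_{m-1} A^{m-1} + \cdots + \alpha_0 I = S \left( \alpha_m \Lambda^m + \alpha_{m-1} \Lambda^{m-1} + \cdots + \alpha_0 I \right) S^{-1}$$

Proposition:

$$A^m = S \Lambda^m S^{-1}$$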

-

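6.2 Diagonalizing a matrix 6-35

Exception

It is possible that an $n \times n$ matrix does not have $n$ eigenvectors.

Example. $A = \begin{bmatrix} 1 & -1 \\ 1 & -1 \end{bmatrix}$.

Solution.

$\lambda_1 = 0$ is apparently an eigenvalue for a non-invertible matrix.

The second eigenvalue is $\lambda_2 = \mathrm{trace}(A) - \lambda_1 = [1 + (-1)] - \lambda_1 = 0$.

This matrix however has only one eigenvector $\begin{bmatrix} 1 \\ 1 \end{bmatrix}$ (or some researchers may say "two repeated eigenvectors").

In such a case, we still have

$$\underbrace{\begin{bmatrix} 1 & -1 \\ 1 & -1 \end{bmatrix}}_{A} \underbrace{\begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix}}_{S} = \underbrace{\begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix}}_{S} \underbrace{\begin{bmatrix} 0 & 0 \\ 0 & 0 \end{bmatrix}}_{\Lambda}$$

but $S$ has no inverse.

In such a case, we cannot perform $S^{-1} A S$; so we say $A$ cannot be diagonalized.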

-

6.2 Diagonalizing a matrix 6-36

When will $S$ be guaranteed to be invertible?

One easy answer: When all eigenvalues are distinct (and there are $n$ eigenvalues).

Proof: Suppose that for a matrix $A$, the first $k$ eigenvectors $v_1, \ldots, v_k$ are linearly independent, but the $(k+1)$th eigenvector is dependent on the previous $k$ eigenvectors. Then, for some unique $a_1, \ldots, a_k$,

$$v_{k+1} = a_1 v_1 + \cdots + a_k v_k,$$

which implies

$$\begin{cases} \lambda_{k+1} v_{k+1} = A v_{k+1} = a_1 A v_1 + \cdots + a_k A v_k = a_1 \lambda_1 v_1 + \cdots + a_k \lambda_k v_k \\ \lambda_{k+1} v_{k+1} = a_1 \lambda_{k+1} v_1 + \cdots + a_k \lambda_{k+1} v_k \end{cases}$$

Accordingly, $\lambda_{k+1} = \lambda_i$ for every $1 \leq i \leq k$ with $a_i \neq 0$; i.e., $v_{k+1}$ can be linearly dependent on $v_1, \ldots, v_k$ only when they have the same eigenvalues. (The proof is not yet completed here! See the discussion and Example below.)

The above proof says that as long as we have the $(k+1)$th eigenvector, it is linearly dependent on the previous $k$ eigenvectors only when the eigenvalues are equal! But sometimes, we are not guaranteed to have the $(k+1)$th eigenvector.

-

6.2 Diagonalizing a matrix 6-37

Example. $A = \begin{bmatrix} 5 & 4 & 2 & 1 \\ 0 & 1 & -1 & -1 \\ -1 & -1 & 3 & 0 \\ 1 & 1 & -1 & 2 \end{bmatrix}$.

Solution.

We have four eigenvalues 1, 2, 4, 4. But we can only have three eigenvectors for 1, 2 and 4. From the proof on the previous page, the eigenvectors for 1, 2 and 4 are linearly independent because 1, 2 and 4 are not equal.

Proof (Continued): One condition that guarantees $n$ eigenvectors is that we have $n$ distinct eigenvalues, which now completes the proof.

Definition (GM and AM): The number of linearly independent eigenvectors for an eigenvalue $\lambda$ is called its geometric multiplicity; the number of times it appears as a root of $\det(A - \lambda I) = 0$ is called its algebraic multiplicity.

Example. In the above example, GM = 1 and AM = 2 for $\lambda = 4$.

-

6.2 Fibonacci series 6-38

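Eigen-decomposition is useful in solving the Fibonacci series.

Problem: Suppose $F_{k+2} = F_{k+1} + F_k$ with initially $F_1 = 1$ and $F_0 = 0$. Find $F_{100}$.

Answer:

$$\begin{bmatrix} F_{k+2} \\ F_{k+1} \end{bmatrix} = \begin{bmatrix} 1 & 1 \\ 1 & 0 \end{bmatrix} \begin{bmatrix} F_{k+1} \\ F_k \end{bmatrix} \implies \begin{bmatrix} F_{k+2} \\ F_{k+1} \end{bmatrix} = \begin{bmatrix} 1 & 1 \\ 1 & 0 \end{bmatrix}^{k+1} \begin{bmatrix} F_1 \\ F_0 \end{bmatrix}$$

So, writing $\lambda_1 = \frac{1 + \sqrt{5}}{2}$ and $\lambda_2 = \frac{1 - \sqrt{5}}{2}$ for the two eigenvalues,

$$\begin{bmatrix} F_{100} \\ F_{99} \end{bmatrix} = \begin{bmatrix} 1 & 1 \\ 1 & 0 \end{bmatrix}^{99} \begin{bmatrix} 1 \\ 0 \end{bmatrix} = S \Lambda^{99} S^{-1} \begin{bmatrix} 1 \\ 0 \end{bmatrix}$$

$$= \begin{bmatrix} \lambda_1 & \lambda_2 \\ 1 & 1 \end{bmatrix} \begin{bmatrix} \lambda_1^{99} & 0 \\ 0 & \lambda_2^{99} \end{bmatrix} \begin{bmatrix} \lambda_1 & \lambda_2 \\ 1 & 1 \end{bmatrix}^{-1} \begin{bmatrix} 1 \\ 0 \end{bmatrix}$$

$$= \begin{bmatrix} \lambda_1 & \lambda_2 \\ 1 & 1 \end{bmatrix} \begin{bmatrix} \lambda_1^{99} & 0 \\ 0 & \lambda_2^{99} \end{bmatrix} \frac{1}{\lambda_1 - \lambda_2} \begin{bmatrix} 1 & -\lambda_2 \\ -1 & \lambda_1 \end{bmatrix} \begin{bmatrix} 1 \\ 0 \end{bmatrix}$$

-

6.2 Fibonacci series 6-39

$$= \begin{bmatrix} \lambda_1^{100} & \lambda_2^{100} \\ \lambda_1^{99} & \lambda_2^{99} \end{bmatrix} \frac{1}{\lambda_1 - \lambda_2} \begin{bmatrix} 1 \\ -1 \end{bmatrix}$$

$$= \frac{1}{\lambda_1 - \lambda_2} \begin{bmatrix} \lambda_1^{100} - \lambda_2^{100} \\ \lambda_1^{99} - \lambda_2^{99} \end{bmatrix}, \quad \lambda_1 = \frac{1 + \sqrt{5}}{2}, \ \lambda_2 = \frac{1 - \sqrt{5}}{2}.$$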
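The matrix-power form of the Fibonacci recurrence can be evaluated exactly with integer arithmetic, which avoids the rounding that a floating-point evaluation of the $\sqrt{5}$ formula would incur. This is a minimal sketch, not the slides' method; it uses repeated squaring and the fact that $\begin{bmatrix} 1 & 1 \\ 1 & 0 \end{bmatrix}^k = \begin{bmatrix} F_{k+1} & F_k \\ F_k & F_{k-1} \end{bmatrix}$. The function names are illustrative.

```python
def mat_mul(A, B):
    """Multiply two 2x2 integer matrices given as lists of rows."""
    return [[A[i][0] * B[0][j] + A[i][1] * B[1][j] for j in range(2)]
            for i in range(2)]


def mat_pow(A, n):
    """Compute A^n for n >= 0 by repeated squaring."""
    result = [[1, 0], [0, 1]]
    while n > 0:
        if n & 1:
            result = mat_mul(result, A)
        A = mat_mul(A, A)
        n >>= 1
    return result


def fib(k):
    """Return F_k with F_0 = 0 and F_1 = 1."""
    return mat_pow([[1, 1], [1, 0]], k)[0][1]


f100 = fib(100)
```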

-

6.2 Generalization to uk = Aku0 6-40

A generalization of the solution to Fibonacci series is as follows.

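Suppose $u_0 = a_1 v_1 + a_2 v_2 + \cdots + a_n v_n$. Then

$$u_k = A^k u_0 = a_1 A^k v_1 + a_2 A^k v_2 + \cdots + a_n A^k v_n = a_1 \lambda_1^k v_1 + a_2 \lambda_2^k v_2 + \cdots + a_n \lambda_n^k v_n.$$

Examination:

$$u_k = \begin{bmatrix} F_{k+1} \\ F_k \end{bmatrix} = \begin{bmatrix} 1 & 1 \\ 1 & 0 \end{bmatrix}^k \begin{bmatrix} F_1 \\ F_0 \end{bmatrix} = A^k u_0$$

and, with $\lambda_1 = \frac{1 + \sqrt{5}}{2}$ and $\lambda_2 = \frac{1 - \sqrt{5}}{2}$,

$$u_0 = \begin{bmatrix} 1 \\ 0 \end{bmatrix} = \underbrace{\frac{1}{\lambda_1 - \lambda_2}}_{a_1} \begin{bmatrix} \lambda_1 \\ 1 \end{bmatrix} \underbrace{- \frac{1}{\lambda_1 - \lambda_2}}_{a_2} \begin{bmatrix} \lambda_2 \\ 1 \end{bmatrix}$$

So

$$u_{99} = \begin{bmatrix} F_{100} \\ F_{99} \end{bmatrix} = \frac{\lambda_1^{99}}{\lambda_1 - \lambda_2} \begin{bmatrix} \lambda_1 \\ 1 \end{bmatrix} - \frac{\lambda_2^{99}}{\lambda_1 - \lambda_2} \begin{bmatrix} \lambda_2 \\ 1 \end{bmatrix}.$$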

-

6.2 More applications of eigen-decomposition 6-41

(Problem 30, Section 6.2) Suppose the same $S$ diagonalizes both $A$ and $B$. They have the same eigenvectors in $A = S \Lambda_1 S^{-1}$ and $B = S \Lambda_2 S^{-1}$. Prove that $AB = BA$.

Proposition: Suppose both $A$ and $B$ can be diagonalized, and one of $A$ and $B$ has distinct eigenvalues. Then, $A$ and $B$ have the same eigenvectors if, and only if, $AB = BA$.

Proof:

(See Problem 30 above) If $A$ and $B$ have the same eigenvectors, then

$$AB = (S \Lambda_A S^{-1})(S \Lambda_B S^{-1}) = S \Lambda_A \Lambda_B S^{-1} = S \Lambda_B \Lambda_A S^{-1} = (S \Lambda_B S^{-1})(S \Lambda_A S^{-1}) = BA.$$

Suppose without loss of generality that the eigenvalues of $A$ are all distinct. Then, if $AB = BA$, we have for given $Av = \lambda v$ and $u = Bv$,

$$Au = A(Bv) = (AB)v = (BA)v = B(Av) = B(\lambda v) = \lambda (Bv) = \lambda u.$$

Hence, $u = Bv$ and $v$ are both eigenvectors of $A$ corresponding to the same eigenvalue $\lambda$.

-

6.2 More applications of eigen-decomposition 6-42

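Let $u = \sum_{i=1}^n a_i v_i$, where $\{v_i\}_{i=1}^n$ are linearly independent eigenvectors of $A$.

$$\begin{cases} Au = \sum_{i=1}^n a_i A v_i = \sum_{i=1}^n a_i \lambda_i v_i \\ Au = \lambda u = \sum_{i=1}^n a_i \lambda v_i \end{cases} \implies a_i (\lambda_i - \lambda) = 0 \text{ for } 1 \leq i \leq n.$$

This implies

$$u = \sum_{i : \lambda_i = \lambda} a_i v_i = a v = Bv.$$

Thus, $v$ is an eigenvector of $B$.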

-

6.2 Problem discussions 6-43

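(Problem 32, Section 6.2) Substitute $A = S \Lambda S^{-1}$ into the product $(A - \lambda_1 I)(A - \lambda_2 I) \cdots (A - \lambda_n I)$ and explain why this produces the zero matrix. We are substituting the matrix $A$ for the number $\lambda$ in the polynomial $p(\lambda) = \det(A - \lambda I)$. The Cayley-Hamilton Theorem says that this product is always $p(A) = $ zero matrix, even if $A$ is not diagonalizable.

Thinking over Problem 32: The Cayley-Hamilton Theorem can be easily proved if $A$ is diagonalizable.

Corollary (Cayley-Hamilton): Suppose $A$ has $n$ linearly independent eigenvectors.

$$(\lambda_1 I - A)(\lambda_2 I - A) \cdots (\lambda_n I - A) = \text{all-zero matrix}$$

Proof:

$$(\lambda_1 I - A)(\lambda_2 I - A) \cdots (\lambda_n I - A) = S(\lambda_1 I - \Lambda)S^{-1} \, S(\lambda_2 I - \Lambda)S^{-1} \cdots S(\lambda_n I - \Lambda)S^{-1}$$

$$= S \underbrace{(\lambda_1 I - \Lambda)}_{(1,1)\text{th entry} = 0} \underbrace{(\lambda_2 I - \Lambda)}_{(2,2)\text{th entry} = 0} \cdots \underbrace{(\lambda_n I - \Lambda)}_{(n,n)\text{th entry} = 0} S^{-1}$$

$$= S \begin{bmatrix} \text{all-zero entries} \end{bmatrix} S^{-1} = \begin{bmatrix} \text{all-zero entries} \end{bmatrix}$$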

-

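6.3 Applications to differential equations 6-44

We can use eigen-decomposition to solve

$$\frac{d}{dt} \begin{bmatrix} u_1(t) \\ u_2(t) \\ \vdots \\ u_n(t) \end{bmatrix} = \frac{du}{dt} = Au = \begin{bmatrix} a_{1,1} u_1(t) + a_{1,2} u_2(t) + \cdots + a_{1,n} u_n(t) \\ a_{2,1} u_1(t) + a_{2,2} u_2(t) + \cdots + a_{2,n} u_n(t) \\ \vdots \\ a_{n,1} u_1(t) + a_{n,2} u_2(t) + \cdots + a_{n,n} u_n(t) \end{bmatrix}$$

where $A$ is called the companion matrix.

By differential equation techniques, we know that the solution is of the form

$$u = e^{\lambda t} v$$

for some constant $\lambda$ and constant vector $v$.

Question: What are all $\lambda$ and $v$ that satisfy $\frac{du}{dt} = Au$?

Solution: Substitute $u = e^{\lambda t} v$ into the equation:

$$\frac{du}{dt} \left( = \lambda e^{\lambda t} v \right) = Au \left( = A e^{\lambda t} v \right) \implies \lambda v = A v.$$

The answers are all the eigenvalues and eigenvectors.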

-

6.3 Applications to differential equations 6-45

Since $\frac{du}{dt} = Au$ is a linear system, a linear combination of solutions is still a solution.

Hence, if there are $n$ eigenvectors, the complete solution can be represented as

$$c_1 e^{\lambda_1 t} v_1 + c_2 e^{\lambda_2 t} v_2 + \cdots + c_n e^{\lambda_n t} v_n$$

where $c_1, c_2, \ldots, c_n$ are determined by the initial conditions.

Here, for convenience of discussion at this moment, we assume that $A$ gives us exactly $n$ eigenvectors.

-

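6.3 Applications to differential equations 6-46

It is sometimes convenient to re-express the solution of $\frac{du}{dt} = Au$ for a given initial condition $u(0)$ as $e^{At} u(0)$, i.e.,

$$c_1 e^{\lambda_1 t} v_1 + c_2 e^{\lambda_2 t} v_2 + \cdots + c_n e^{\lambda_n t} v_n = \underbrace{\begin{bmatrix} v_1 & v_2 & \cdots & v_n \end{bmatrix}}_{S} \begin{bmatrix} e^{\lambda_1 t} & 0 & \cdots & 0 \\ 0 & e^{\lambda_2 t} & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & e^{\lambda_n t} \end{bmatrix} \begin{bmatrix} c_1 \\ c_2 \\ \vdots \\ c_n \end{bmatrix}$$

$$= \underbrace{S e^{\Lambda t} S^{-1}}_{e^{At}} \underbrace{S c}_{u(0)} = e^{At} u(0)$$

where we define

$$e^{At} \triangleq \begin{cases} S e^{\Lambda t} S^{-1} = S \begin{bmatrix} e^{\lambda_1 t} & 0 & \cdots & 0 \\ 0 & e^{\lambda_2 t} & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & e^{\lambda_n t} \end{bmatrix} S^{-1}, & \text{if } S^{-1} \text{ exists} \\ \sum_{k=0}^{\infty} \frac{1}{k!} (At)^k, & \text{holds no matter whether } S^{-1} \text{ exists or not} \end{cases}$$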

-

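6.3 Applications to differential equations 6-47

and hence $u(0) = Sc$. Note that

$$\sum_{k=0}^{\infty} \frac{1}{k!} (At)^k = \sum_{k=0}^{\infty} \frac{t^k}{k!} A^k = \sum_{k=0}^{\infty} \frac{t^k}{k!} (S \Lambda^k S^{-1}) = S \left( \sum_{k=0}^{\infty} \frac{t^k}{k!} \Lambda^k \right) S^{-1} = S e^{\Lambda t} S^{-1}.$$

Key to remember: Again, we define by convention that

$$f(A) = S \begin{bmatrix} f(\lambda_1) & 0 & \cdots & 0 \\ 0 & f(\lambda_2) & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & f(\lambda_n) \end{bmatrix} S^{-1}.$$

So, $e^{At} = S \begin{bmatrix} e^{\lambda_1 t} & 0 & \cdots & 0 \\ 0 & e^{\lambda_2 t} & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & e^{\lambda_n t} \end{bmatrix} S^{-1}$.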

-

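6.3 Applications to differential equations 6-48

We can solve the second-order equation in the same manner.

Example. Solve $m y'' + b y' + k y = 0$.

Answer:

Let $z = y'$. Then, the problem is reduced to

$$\begin{cases} y' = z \\ z' = -\frac{k}{m} y - \frac{b}{m} z \end{cases} \implies \frac{du}{dt} = Au$$

with $u = \begin{bmatrix} y \\ z \end{bmatrix}$ and $A = \begin{bmatrix} 0 & 1 \\ -\frac{k}{m} & -\frac{b}{m} \end{bmatrix}$.

The complete solution for $u$ is therefore

$$c_1 e^{\lambda_1 t} v_1 + c_2 e^{\lambda_2 t} v_2.$$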

-

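6.3 Applications to differential equations 6-49

Example. Solve $y'' + y = 0$ with initially $y(0) = 1$ and $y'(0) = 0$.

Solution.

Let $z = y'$. Then, the problem is reduced to

$$\begin{cases} y' = z \\ z' = -y \end{cases} \implies \frac{du}{dt} = Au$$

with $u = \begin{bmatrix} y \\ z \end{bmatrix}$ and $A = \begin{bmatrix} 0 & 1 \\ -1 & 0 \end{bmatrix}$.

The eigenvalues and eigenvectors of $A$ are $\left( i, \begin{bmatrix} -i \\ 1 \end{bmatrix} \right)$ and $\left( -i, \begin{bmatrix} i \\ 1 \end{bmatrix} \right)$.

The complete solution for $u$ is therefore

$$\begin{bmatrix} y \\ y' \end{bmatrix} = c_1 e^{it} \begin{bmatrix} -i \\ 1 \end{bmatrix} + c_2 e^{-it} \begin{bmatrix} i \\ 1 \end{bmatrix} \text{ with initially } \begin{bmatrix} 1 \\ 0 \end{bmatrix} = c_1 \begin{bmatrix} -i \\ 1 \end{bmatrix} + c_2 \begin{bmatrix} i \\ 1 \end{bmatrix}.$$

So, $c_1 = \frac{i}{2}$ and $c_2 = -\frac{i}{2}$. Thus,

$$y(t) = \frac{i}{2} e^{it} (-i) - \frac{i}{2} e^{-it} \, i = \frac{1}{2} e^{it} + \frac{1}{2} e^{-it} = \cos(t).$$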

-

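6.3 Applications to differential equations 6-50

In practice, we will use a discrete approximation to approximate a continuous function. There are however three different discrete approximations.

For example, how do we approximate $y''(t) = -y(t)$ by a discrete system?

$$\frac{Y_{n+1} - 2Y_n + Y_{n-1}}{(\Delta t)^2} = \frac{\frac{Y_{n+1} - Y_n}{\Delta t} - \frac{Y_n - Y_{n-1}}{\Delta t}}{\Delta t} = \begin{cases} -Y_{n-1} & \text{Forward approximation} \\ -Y_n & \text{Centered approximation} \\ -Y_{n+1} & \text{Backward approximation} \end{cases}$$

Let's take the forward approximation as an example, i.e.,

$$\frac{\overbrace{\frac{Y_{n+1} - Y_n}{\Delta t}}^{Z_n} - \overbrace{\frac{Y_n - Y_{n-1}}{\Delta t}}^{Z_{n-1}}}{\Delta t} = -Y_{n-1}.$$

Thus,

$$\begin{cases} y'(t) = z(t) \\ z'(t) = -y(t) \end{cases} \approx \begin{cases} \frac{Y_{n+1} - Y_n}{\Delta t} = Z_n \\ \frac{Z_{n+1} - Z_n}{\Delta t} = -Y_n \end{cases} \iff \underbrace{\begin{bmatrix} Y_{n+1} \\ Z_{n+1} \end{bmatrix}}_{u_{n+1}} = \underbrace{\begin{bmatrix} 1 & \Delta t \\ -\Delta t & 1 \end{bmatrix}}_{A} \underbrace{\begin{bmatrix} Y_n \\ Z_n \end{bmatrix}}_{u_n}$$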

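6.3 Applications to differential equations 6-51

Then, we obtain

$$u_n = A^n u_0 = \begin{bmatrix} -i & i \\ 1 & 1 \end{bmatrix} \begin{bmatrix} (1 + i \Delta t)^n & 0 \\ 0 & (1 - i \Delta t)^n \end{bmatrix} \begin{bmatrix} \frac{i}{2} & \frac{1}{2} \\ -\frac{i}{2} & \frac{1}{2} \end{bmatrix} \begin{bmatrix} 1 \\ 0 \end{bmatrix}$$

and

$$Y_n = \frac{(1 + i \Delta t)^n + (1 - i \Delta t)^n}{2} = \left[ 1 + (\Delta t)^2 \right]^{n/2} \cos(n \theta)$$

where $\theta = \tan^{-1}(\Delta t)$.

Problem: $|Y_n| \to \infty$ as $n$ becomes large.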
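The growth of the forward approximation can be observed numerically. In the sketch below (illustrative only; the function name and the step size $\Delta t = 0.1$ are assumptions), each step multiplies $Y_n^2 + Z_n^2$ by exactly $1 + (\Delta t)^2$, so the computed point spirals outward instead of staying on the unit circle traced by the exact solution $(\cos t, -\sin t)$.

```python
import math


def forward_euler(dt, steps, y=1.0, z=0.0):
    """Iterate Y_{n+1} = Y_n + dt * Z_n and Z_{n+1} = Z_n - dt * Y_n."""
    for _ in range(steps):
        # Both updates use the old values (Y_n, Z_n).
        y, z = y + dt * z, z - dt * y
    return y, z


dt, steps = 0.1, 100
y, z = forward_euler(dt, steps)
radius_squared = y * y + z * z            # grows with every step
predicted = (1 + dt * dt) ** steps        # [1 + (dt)^2]^n
```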

-

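6.3 Applications to differential equations 6-52

The backward approximation will lead to $Y_n \to 0$ as $n$ becomes large.

$$\begin{cases} y'(t) = z(t) \\ z'(t) = -y(t) \end{cases} \approx \begin{cases} \frac{Y_{n+1} - Y_n}{\Delta t} = Z_{n+1} \\ \frac{Z_{n+1} - Z_n}{\Delta t} = -Y_{n+1} \end{cases} \iff \underbrace{\begin{bmatrix} 1 & -\Delta t \\ \Delta t & 1 \end{bmatrix}}_{A^{-1}} \underbrace{\begin{bmatrix} Y_{n+1} \\ Z_{n+1} \end{bmatrix}}_{u_{n+1}} = \underbrace{\begin{bmatrix} Y_n \\ Z_n \end{bmatrix}}_{u_n}$$

A solution to the problem: Interleave the forward approximation with the backward approximation.

$$\begin{cases} y'(t) = z(t) \\ z'(t) = -y(t) \end{cases} \approx \begin{cases} \frac{Y_{n+1} - Y_n}{\Delta t} = Z_n \\ \frac{Z_{n+1} - Z_n}{\Delta t} = -Y_{n+1} \end{cases} \iff \begin{bmatrix} 1 & 0 \\ \Delta t & 1 \end{bmatrix} \underbrace{\begin{bmatrix} Y_{n+1} \\ Z_{n+1} \end{bmatrix}}_{u_{n+1}} = \begin{bmatrix} 1 & \Delta t \\ 0 & 1 \end{bmatrix} \underbrace{\begin{bmatrix} Y_n \\ Z_n \end{bmatrix}}_{u_n}$$

We then perform this so-called leapfrog method. (See Problems 28 and 29.)

$$\underbrace{\begin{bmatrix} Y_{n+1} \\ Z_{n+1} \end{bmatrix}}_{u_{n+1}} = \begin{bmatrix} 1 & 0 \\ \Delta t & 1 \end{bmatrix}^{-1} \begin{bmatrix} 1 & \Delta t \\ 0 & 1 \end{bmatrix} \underbrace{\begin{bmatrix} Y_n \\ Z_n \end{bmatrix}}_{u_n} = \underbrace{\begin{bmatrix} 1 & \Delta t \\ -\Delta t & 1 - (\Delta t)^2 \end{bmatrix}}_{|\text{eigenvalues}| = 1 \text{ if } \Delta t \leq 2} \underbrace{\begin{bmatrix} Y_n \\ Z_n \end{bmatrix}}_{u_n}$$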

-

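6.3 Applications to differential equations 6-53

(Problem 28, Section 6.3) Centering $y'' = -y$ in Example 3 will produce $Y_{n+1} - 2Y_n + Y_{n-1} = -(\Delta t)^2 Y_n$. This can be written as a one-step difference equation for $U = (Y, Z)$:

$$\begin{aligned} Y_{n+1} &= Y_n + \Delta t \, Z_n \\ Z_{n+1} &= Z_n - \Delta t \, Y_{n+1} \end{aligned} \qquad \begin{bmatrix} 1 & 0 \\ \Delta t & 1 \end{bmatrix} \begin{bmatrix} Y_{n+1} \\ Z_{n+1} \end{bmatrix} = \begin{bmatrix} 1 & \Delta t \\ 0 & 1 \end{bmatrix} \begin{bmatrix} Y_n \\ Z_n \end{bmatrix}.$$

Invert the matrix on the left side to write this as $U_{n+1} = A U_n$. Show that $\det A = 1$. Choose the large time step $\Delta t = 1$ and find the eigenvalues $\lambda_1$ and $\lambda_2 = \bar{\lambda}_1$ of $A$:

$$A = \begin{bmatrix} 1 & 1 \\ -1 & 0 \end{bmatrix} \text{ has } |\lambda_1| = |\lambda_2| = 1. \text{ Show that } A^6 \text{ is exactly } I.$$

After 6 steps to $t = 6$, $U_6$ equals $U_0$. The exact $y = \cos(t)$ returns to 1 at $t = 2\pi$.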
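The claim that $A^6 = I$ for the leapfrog matrix with $\Delta t = 1$ can be confirmed with exact integer arithmetic. This small sketch uses an assumed helper `mat_mul`; it also records the intermediate fact that $A^3 = -I$.

```python
def mat_mul(A, B):
    """Multiply two 2x2 integer matrices given as lists of rows."""
    return [[A[i][0] * B[0][j] + A[i][1] * B[1][j] for j in range(2)]
            for i in range(2)]


A = [[1, 1], [-1, 0]]      # leapfrog step matrix for time step 1

power = [[1, 0], [0, 1]]
powers = []
for _ in range(6):
    power = mat_mul(power, A)
    powers.append(power)   # powers[k - 1] holds A^k

A3, A6 = powers[2], powers[5]
```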

-

6.3 Applications to differential equations 6-54

(Problem 29, Section 6.3) That centered choice (leapfrog method) in Problem 28 is very successful for small time steps $\Delta t$. But find the eigenvalues of $A$ for $\Delta t = \sqrt{2}$ and 2:

$$A = \begin{bmatrix} 1 & \sqrt{2} \\ -\sqrt{2} & -1 \end{bmatrix} \text{ and } A = \begin{bmatrix} 1 & 2 \\ -2 & -3 \end{bmatrix}.$$

Both matrices have $|\lambda| = 1$. Compute $A^4$ in both cases and find the eigenvectors of $A$. That value $\Delta t = 2$ is at the border of instability. Time steps $\Delta t > 2$ will lead to $|\lambda| > 1$, and the powers in $U_n = A^n U_0$ will explode.

Note: You might say that nobody would compute with $\Delta t > 2$. But if an atom vibrates with $y'' = -1000000 y$, then $\Delta t > .0002$ will give instability. Leapfrog has a very strict stability limit. $Y_{n+1} = Y_n + 3 Z_n$ and $Z_{n+1} = Z_n - 3 Y_{n+1}$ will explode because $\Delta t = 3$ is too large.

-

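6.3 Applications to differential equations 6-55

A better solution to the problem: Mix the forward approximation with the backward approximation.

$$\begin{cases} y'(t) = z(t) \\ z'(t) = -y(t) \end{cases} \approx \begin{cases} \frac{Y_{n+1} - Y_n}{\Delta t} = \frac{Z_{n+1} + Z_n}{2} \\ \frac{Z_{n+1} - Z_n}{\Delta t} = -\frac{Y_{n+1} + Y_n}{2} \end{cases} \iff \begin{bmatrix} 1 & -\frac{\Delta t}{2} \\ \frac{\Delta t}{2} & 1 \end{bmatrix} \underbrace{\begin{bmatrix} Y_{n+1} \\ Z_{n+1} \end{bmatrix}}_{u_{n+1}} = \begin{bmatrix} 1 & \frac{\Delta t}{2} \\ -\frac{\Delta t}{2} & 1 \end{bmatrix} \underbrace{\begin{bmatrix} Y_n \\ Z_n \end{bmatrix}}_{u_n}$$

We then perform this so-called trapezoidal method. (See Problem 30.)

$$\underbrace{\begin{bmatrix} Y_{n+1} \\ Z_{n+1} \end{bmatrix}}_{u_{n+1}} = \begin{bmatrix} 1 & -\frac{\Delta t}{2} \\ \frac{\Delta t}{2} & 1 \end{bmatrix}^{-1} \begin{bmatrix} 1 & \frac{\Delta t}{2} \\ -\frac{\Delta t}{2} & 1 \end{bmatrix} \underbrace{\begin{bmatrix} Y_n \\ Z_n \end{bmatrix}}_{u_n} = \underbrace{\frac{1}{1 + \left( \frac{\Delta t}{2} \right)^2} \begin{bmatrix} 1 - \left( \frac{\Delta t}{2} \right)^2 & \Delta t \\ -\Delta t & 1 - \left( \frac{\Delta t}{2} \right)^2 \end{bmatrix}}_{|\text{eigenvalues}| = 1 \text{ for all } \Delta t > 0} \underbrace{\begin{bmatrix} Y_n \\ Z_n \end{bmatrix}}_{u_n}$$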

-

6.3 Applications to differential equations 6-56

(Problem 30, Section 6.3) Another good idea for $y'' = -y$ is the trapezoidal method (half forward/half back): This may be the best way to keep $(Y_n, Z_n)$ exactly on a circle.

$$\text{Trapezoidal} \quad \begin{bmatrix} 1 & -\Delta t / 2 \\ \Delta t / 2 & 1 \end{bmatrix} \begin{bmatrix} Y_{n+1} \\ Z_{n+1} \end{bmatrix} = \begin{bmatrix} 1 & \Delta t / 2 \\ -\Delta t / 2 & 1 \end{bmatrix} \begin{bmatrix} Y_n \\ Z_n \end{bmatrix}.$$

(a) Invert the left matrix to write this equation as $U_{n+1} = A U_n$. Show that $A$ is an orthogonal matrix: $A^T A = I$. These points $U_n$ never leave the circle.

$A = (I - B)^{-1}(I + B)$ is always an orthogonal matrix if $B^T = -B$ (see the proof on the next page).

(b) (Optional MATLAB) Take 32 steps from $U_0 = (1, 0)$ to $U_{32}$ with $\Delta t = 2\pi / 32$. Is $U_{32} = U_0$? I think there is a small error.
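Part (b) is posed for MATLAB; the sketch below carries out the same experiment in Python as an illustration. It applies the explicit trapezoidal update matrix $\frac{1}{1 + h^2} \begin{bmatrix} 1 - h^2 & \Delta t \\ -\Delta t & 1 - h^2 \end{bmatrix}$ with $h = \Delta t / 2$, then measures how far $U_{32}$ is from the unit circle and from $U_0$. The function name is an assumption.

```python
import math


def trapezoidal_step(y, z, dt):
    """One trapezoidal step for y' = z, z' = -y, using the inverted system."""
    h = dt / 2
    d = 1 + h * h
    return (((1 - h * h) * y + dt * z) / d,
            (-dt * y + (1 - h * h) * z) / d)


dt = 2 * math.pi / 32
y, z = 1.0, 0.0
for _ in range(32):
    y, z = trapezoidal_step(y, z, dt)

radius = math.hypot(y, z)          # stays on the unit circle
error = math.hypot(y - 1.0, z)     # distance between U_32 and U_0
```

The radius is preserved up to rounding, while `error` is small but clearly non-zero: the method rotates by $2 \tan^{-1}(\Delta t / 2)$ per step rather than by $\Delta t$, so the phase lags slightly.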

-

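6.3 Applications to differential equations 6-57

Proof:

$$A = (I - B)^{-1}(I + B) \implies (I - B) A = (I + B)$$

$$\implies A^T (I - B)^T = (I + B)^T$$

$$\implies A^T (I - B^T) = (I + B^T)$$

$$\implies A^T (I + B) = (I - B)$$

$$\implies A^T = (I - B)(I + B)^{-1}$$

$$\implies A^T A = (I - B)(I + B)^{-1}(I - B)^{-1}(I + B)$$

$$= (I - B)\left[ (I - B)(I + B) \right]^{-1}(I + B)$$

$$= (I - B)\left[ (I + B)(I - B) \right]^{-1}(I + B)$$

$$= (I - B)(I - B)^{-1}(I + B)^{-1}(I + B) = I$$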

-

6.3 Applications to differential equations 6-58

Question: What if the number of eigenvectors is smaller than $n$?

Recall that it is convenient to say that the solution of $du/dt = Au$ is $e^{At} u(0)$ for some constant vector $u(0)$.

Conveniently, we can presume that $e^{At} u(0)$ is the solution of $du/dt = Au$ even if $A$ does not have $n$ eigenvectors.

Example. Solve $y'' - 2y' + y = 0$ with initially $y(0) = 1$ and $y'(0) = 0$.

Answer.

Let $z = y'$. Then, the problem is reduced to

$$\begin{cases} y' = z \\ z' = -y + 2z \end{cases} \implies \frac{du}{dt} = \begin{bmatrix} y' \\ z' \end{bmatrix} = \underbrace{\begin{bmatrix} 0 & 1 \\ -1 & 2 \end{bmatrix}}_{A} \begin{bmatrix} y \\ z \end{bmatrix} = Au$$

The solution is still $e^{At} u(0)$ but

$$e^{At} \neq S e^{\Lambda t} S^{-1}$$

because there is only one eigenvector for $A$ (it doesn't matter whether we regard this case as "$S$ does not exist" or as "$S$ exists but has no inverse").

-

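6.3 Applications to differential equations 6-59

$$\underbrace{\begin{bmatrix} 0 & 1 \\ -1 & 2 \end{bmatrix}}_{A} \underbrace{\begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix}}_{S} = \underbrace{\begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix}}_{S} \underbrace{\begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}}_{\Lambda}$$

and

$$e^{At} = e^{It + (A - I)t} \overset{?}{=} e^{It} e^{(A - I)t} \quad (\text{since } (It)((A - I)t) = ((A - I)t)(It); \text{ see the note at the end})$$

$$= \left( \sum_{k=0}^{\infty} \frac{1}{k!} I^k t^k \right) \left( \sum_{k=0}^{\infty} \frac{1}{k!} (A - I)^k t^k \right)$$

$$= \left( \left( \sum_{k=0}^{\infty} \frac{1}{k!} t^k \right) I \right) \left( \sum_{k=0}^{1} \frac{1}{k!} (A - I)^k t^k \right)$$

where we know $I^k = I$ for every $k \geq 0$ and $(A - I)^k = $ all-zero matrix for $k \geq 2$. (Can we use $e^{At} = \sum_{k=0}^{\infty} \frac{1}{k!} A^k t^k$ here? Yes: the series definition holds whether or not $S^{-1}$ exists.) This gives

$$e^{At} = \left( e^t I \right) \left( I + (A - I)t \right) = e^t \left( I + (A - I)t \right)$$

and

$$u(t) = e^{At} u(0) = e^{At} \begin{bmatrix} 1 \\ 0 \end{bmatrix} = e^t \left( I + (A - I)t \right) \begin{bmatrix} 1 \\ 0 \end{bmatrix} = e^t \begin{bmatrix} 1 - t \\ -t \end{bmatrix}$$

Note that $e^A e^B$, $e^B e^A$ and $e^{A+B}$ may not be equal unless $AB = BA$!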
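The closed form $e^{At} = e^t (I + (A - I)t)$ for this non-diagonalizable $A$ can be compared against a truncated power series $\sum_k \frac{1}{k!} (At)^k$. The sketch below is an illustration only: the function names, the truncation at 40 terms, and the sample time $t = 1$ are all assumptions, and a plain truncated series is not a robust way to compute matrix exponentials in general.

```python
import math


def mat_mul(A, B):
    """Multiply two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def expm_series(A, t, terms=40):
    """Approximate e^{At} by summing the first `terms` terms of the series."""
    n = len(A)
    result = [[float(i == j) for j in range(n)] for i in range(n)]  # k = 0 term
    term = [row[:] for row in result]
    for k in range(1, terms):
        # term becomes (At)^k / k! from (At)^(k-1) / (k-1)!
        term = [[entry * t / k for entry in row] for row in mat_mul(term, A)]
        result = [[result[i][j] + term[i][j] for j in range(n)] for i in range(n)]
    return result


def closed_form(t):
    """e^t (I + (A - I) t) for A = [[0, 1], [-1, 2]]."""
    e = math.exp(t)
    return [[e * (1 - t), e * t], [-e * t, e * (1 + t)]]


A = [[0.0, 1.0], [-1.0, 2.0]]
series = expm_series(A, 1.0)
exact = closed_form(1.0)
```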

-

6.3 Applications to differential equations 6-60

Properties of $e^{At}$

The eigenvalues of $e^{At}$ are $e^{\lambda t}$ for all eigenvalues $\lambda$ of $A$. For example, $e^{At} = e^t\,(I + (A-I)t)$ has repeated eigenvalues $e^t, e^t$.

The eigenvectors of $e^{At}$ remain the same as those of $A$. For example, $e^{At} = e^t\,(I + (A-I)t)$ has only one eigenvector $\begin{bmatrix} 1 \\ 1 \end{bmatrix}$.

The inverse of $e^{At}$ always exists (hint: $e^{\lambda t} \neq 0$), and is equal to $e^{-At}$. For example, $e^{-At} = e^{-It}e^{(I-A)t} = e^{-t}\,(I - (A-I)t)$.

The transpose of $e^{At}$ is

$$\big(e^{At}\big)^T = \Big(\sum_{k=0}^{\infty}\frac{1}{k!}A^kt^k\Big)^T = \sum_{k=0}^{\infty}\frac{1}{k!}(A^T)^kt^k = e^{A^Tt}.$$

Hence, if $A^T = -A$ (skew-symmetric), then $(e^{At})^Te^{At} = e^{A^Tt}e^{At} = e^{-At}e^{At} = I$; so $e^{At}$ is an orthogonal matrix (recall that a matrix $Q$ is orthogonal if $Q^TQ = I$).

-

6.3 Quick tip 6-61

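For a $2 \times 2$ matrix $A = \begin{bmatrix} a_{1,1} & a_{1,2} \\ a_{2,1} & a_{2,2} \end{bmatrix}$, the eigenvector corresponding to the eigenvalue $\lambda_1$ is $\begin{bmatrix} a_{1,2} \\ \lambda_1 - a_{1,1} \end{bmatrix}$.

$$(A - \lambda_1I)\begin{bmatrix} a_{1,2} \\ \lambda_1 - a_{1,1} \end{bmatrix}
= \begin{bmatrix} a_{1,1} - \lambda_1 & a_{1,2} \\ a_{2,1} & a_{2,2} - \lambda_1 \end{bmatrix}\begin{bmatrix} a_{1,2} \\ \lambda_1 - a_{1,1} \end{bmatrix}
= \begin{bmatrix} 0 \\ ? \end{bmatrix}$$

where $? = 0$ because the two row vectors of $(A - \lambda_1I)$ are parallel.

This is especially useful when solving the differential equation because $a_{1,1} = 0$ and $a_{1,2} = 1$; hence, the eigenvector $v_1$ corresponding to the eigenvalue $\lambda_1$ is

$$v_1 = \begin{bmatrix} 1 \\ \lambda_1 \end{bmatrix}.$$

Solve $y'' - 2y' + y = 0$. Let $z = y'$. Then the problem is reduced to

$$\begin{cases} y' = z \\ z' = -y + 2z \end{cases}
\;\Rightarrow\;
\frac{du}{dt} = \frac{d}{dt}\begin{bmatrix} y \\ z \end{bmatrix}
= \underbrace{\begin{bmatrix} 0 & 1 \\ -1 & 2 \end{bmatrix}}_{A}\begin{bmatrix} y \\ z \end{bmatrix} = Au
\;\Rightarrow\;
v_1 = \begin{bmatrix} 1 \\ 1 \end{bmatrix}$$

(here $\lambda_1 = 1$).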
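The tip is easy to try out. The following sketch (function names `eigvec_2x2` and `residual` are hypothetical) builds the candidate eigenvector $(a_{1,2},\ \lambda_1 - a_{1,1})$ and evaluates $(A - \lambda_1I)v$ to confirm that both entries vanish. Note the assumption that the candidate is non-zero: if $a_{1,2} = 0$ and $\lambda_1 = a_{1,1}$ the formula returns the zero vector and another eigenvector must be found.

```python
def eigvec_2x2(A, lam):
    """Candidate eigenvector (a12, lam - a11) for eigenvalue lam of a 2x2 matrix A."""
    return [A[0][1], lam - A[0][0]]

def residual(A, lam, v):
    """Entries of (A - lam I) v; both vanish when v is an eigenvector for lam."""
    return [(A[0][0] - lam) * v[0] + A[0][1] * v[1],
            A[1][0] * v[0] + (A[1][1] - lam) * v[1]]

A = [[0, 1], [-1, 2]]     # companion matrix of y'' - 2y' + y = 0, eigenvalue 1
v1 = eigvec_2x2(A, 1)

S = [[5, 4], [4, 5]]      # a symmetric example with eigenvalue 9
w = eigvec_2x2(S, 9)

print(v1, residual(A, 1, v1))
print(w, residual(S, 9, w))
```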

-

6.3 Problem discussions 6-62

Problem 6.3B and Problem 31: A convenient way to solve $d^2u/dt^2 = -A^2u$.

(Problem 31, Section 6.3) The cosine of a matrix is defined like $e^A$, by copying the series for $\cos t$:

$$\cos t = 1 - \frac{1}{2!}t^2 + \frac{1}{4!}t^4 - \cdots \qquad \cos A = I - \frac{1}{2!}A^2 + \frac{1}{4!}A^4 - \cdots$$

(a) If $Ax = \lambda x$, multiply each term times $x$ to find the eigenvalue of $\cos A$.

(b) Find the eigenvalues of $A = \begin{bmatrix} \pi & \pi \\ \pi & \pi \end{bmatrix}$ with eigenvectors $(1, 1)$ and $(1, -1)$. From the eigenvalues and eigenvectors of $\cos A$, find that matrix $C = \cos A$.

(c) The second derivative of $\cos(At)$ is $-A^2\cos(At)$.

$u(t) = \cos(At)u(0)$ solves $\dfrac{d^2u}{dt^2} = -A^2u$ starting from $u'(0) = 0$.

Construct $u(t) = \cos(At)u(0)$ by the usual three steps for that specific $A$:

1. Expand $u(0) = (4, 2) = c_1x_1 + c_2x_2$ in the eigenvectors.

2. Multiply those eigenvectors by ____ and ____ (instead of $e^{\lambda t}$).

3. Add up the solution $u(t) = c_1$ ____ $x_1 + c_2$ ____ $x_2$. (Hint: See Slide 6-48.)

-

6.3 Problem discussions 6-63

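(Problem 6.3B, Section 6.3) Find the eigenvalues and eigenvectors of $A$ and write $u(0) = (0, 2\sqrt{2}, 0)$ as a combination of the eigenvectors. Solve both equations $u' = Au$ and $u'' = Au$:

$$\frac{du}{dt} = \begin{bmatrix} -2 & 1 & 0 \\ 1 & -2 & 1 \\ 0 & 1 & -2 \end{bmatrix}u
\quad\text{and}\quad
\frac{d^2u}{dt^2} = \begin{bmatrix} -2 & 1 & 0 \\ 1 & -2 & 1 \\ 0 & 1 & -2 \end{bmatrix}u \ \text{ with } \frac{du}{dt}(0) = 0.$$

The $1, -2, 1$ diagonals make $A$ into a second difference matrix (like a second derivative).

$u' = Au$ is like the heat equation $\partial u/\partial t = \partial^2u/\partial x^2$.

Its solution $u(t)$ will decay (negative eigenvalues).

$u'' = Au$ is like the wave equation $\partial^2u/\partial t^2 = \partial^2u/\partial x^2$.

Its solution will oscillate (imaginary eigenvalues).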

-

6.3 Problem discussions 6-64

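Thinking over Problem 6.3B and Problem 31: How about solving $\dfrac{d^2u}{dt^2} = Au$?

Solution. The answer should be $e^{A^{1/2}t}u(0)$. Note that $A^{1/2}$ has the same eigenvectors as $A$, but its eigenvalues are the square roots of the eigenvalues of $A$. (This $u(t)$ satisfies $u'' = Au$ with $u'(0) = A^{1/2}u(0)$.)

$$e^{A^{1/2}t} = S\begin{bmatrix}
e^{\lambda_1^{1/2}t} & 0 & \cdots & 0 \\
0 & e^{\lambda_2^{1/2}t} & \cdots & 0 \\
\vdots & \vdots & \ddots & \vdots \\
0 & 0 & \cdots & e^{\lambda_n^{1/2}t}
\end{bmatrix}S^{-1}.$$

As an example from Problem 6.3B, $A = \begin{bmatrix} -2 & 1 & 0 \\ 1 & -2 & 1 \\ 0 & 1 & -2 \end{bmatrix}$.

The eigenvalues of $A$ are $-2$ and $-2 \pm \sqrt{2}$. So,

$$e^{A^{1/2}t} = S\begin{bmatrix}
e^{\sqrt{-2}\,t} & 0 & 0 \\
0 & e^{\sqrt{-2-\sqrt{2}}\,t} & 0 \\
0 & 0 & e^{\sqrt{-2+\sqrt{2}}\,t}
\end{bmatrix}S^{-1}.$$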

-

6.3 Problem discussions 6-65

Final reminder on the approach delivered in the textbook

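In solving $\dfrac{du(t)}{dt} = Au(t)$ with initial $u(0)$, it is convenient to find the solution as

$$\begin{cases}
u(0) = Sc = c_1v_1 + \cdots + c_nv_n \\
u(t) = Se^{\Lambda t}c = c_1e^{\lambda_1t}v_1 + \cdots + c_ne^{\lambda_nt}v_n
\end{cases}$$

This is what the textbook always does in Problems.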
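The two-step recipe can be sketched in a few lines. The example below is an illustration only: it takes the second difference matrix with $1, -2, 1$ diagonals and $u(0) = (0, 2\sqrt{2}, 0)$, uses eigenpairs that were worked out by hand (each can be verified by substituting into $Av = \lambda v$), and checks the resulting $u(t)$ against $du/dt = Au$ with a finite difference. The names `pairs`, `coeffs`, `u` and `Au` are hypothetical.

```python
import math

A = [[-2, 1, 0], [1, -2, 1], [0, 1, -2]]
r2 = math.sqrt(2)
# Hand-computed eigenpairs (lambda_i, v_i) of A.
pairs = [(-2.0, [1.0, 0.0, -1.0]),
         (-2.0 - r2, [1.0, -r2, 1.0]),
         (-2.0 + r2, [1.0, r2, 1.0])]
u0 = [0.0, 2 * r2, 0.0]

# Step 1: expand u(0) = c_1 v_1 + ... + c_n v_n.
# The eigenvectors of a symmetric matrix are orthogonal, so c_i = (v_i . u0) / (v_i . v_i).
coeffs = [sum(v[k] * u0[k] for k in range(3)) / sum(x * x for x in v) for _, v in pairs]

def u(t):
    """Step 2: u(t) = sum_i c_i exp(lambda_i t) v_i."""
    return [sum(c * math.exp(lam * t) * v[k] for c, (lam, v) in zip(coeffs, pairs))
            for k in range(3)]

def Au(x):
    return [sum(A[i][k] * x[k] for k in range(3)) for i in range(3)]

# Compare a central-difference estimate of du/dt with A u at a sample time.
t, h = 0.3, 1e-5
derivative = [(a - b) / (2 * h) for a, b in zip(u(t + h), u(t - h))]
print(coeffs, derivative, Au(u(t)))
```

Because every $\lambda_i$ is negative, each term decays as $t$ grows.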

-

6.3 Problem discussions 6-66

You should practice these problems by yourself!

Problem 15: How to solve $\dfrac{du}{dt} = Au - b$ (for invertible $A$)?

(Problem 15, Section 6.3) A particular solution to $du/dt = Au - b$ is $u_p = A^{-1}b$, if $A$ is invertible. The usual solutions to $du/dt = Au$ give $u_n$. Find the complete solution $u = u_p + u_n$:

$$\text{(a)}\ \frac{du}{dt} = u - 4 \qquad \text{(b)}\ \frac{du}{dt} = \begin{bmatrix} 1 & 0 \\ 1 & 1 \end{bmatrix}u - \begin{bmatrix} 4 \\ 6 \end{bmatrix}.$$

Problem 16: How to solve $\dfrac{du}{dt} = Au - e^{ct}b$ (for non-eigenvalue $c$ of $A$)?

(Problem 16, Section 6.3) If $c$ is not an eigenvalue of $A$, substitute $u = e^{ct}v$ and find a particular solution to $du/dt = Au - e^{ct}b$. How does it break down when $c$ is an eigenvalue of $A$? The "nullspace" of $du/dt = Au$ contains the usual solutions $e^{\lambda_it}x_i$.

Hint: The particular solution $v_p$ satisfies $(A - cI)v_p = b$.

-

6.3 Problem discussions 6-67

Problem 23: $e^Ae^B$, $e^Be^A$ and $e^{A+B}$ are not necessarily equal. (They are equal when $AB = BA$.)

(Problem 23, Section 6.3) Generally $e^Ae^B$ is different from $e^Be^A$. They are both different from $e^{A+B}$. Check this using Problems 21-2 and 19. (If $AB = BA$, all these are the same.)

$$A = \begin{bmatrix} 1 & 4 \\ 0 & 0 \end{bmatrix} \qquad B = \begin{bmatrix} 0 & -4 \\ 0 & 0 \end{bmatrix} \qquad A + B = \begin{bmatrix} 1 & 0 \\ 0 & 0 \end{bmatrix}$$

Problem 27: An interesting brain-storming problem!

(Problem 27, Section 6.3) Find a solution $x(t), y(t)$ that gets large as $t \to \infty$. To avoid this instability a scientist exchanges the two equations:

$$\begin{aligned} dx/dt &= 0x - 4y \\ dy/dt &= -2x + 2y \end{aligned}
\quad\text{becomes}\quad
\begin{aligned} dy/dt &= -2x + 2y \\ dx/dt &= 0x - 4y \end{aligned}$$

Now the matrix $\begin{bmatrix} -2 & 2 \\ 0 & -4 \end{bmatrix}$ is stable. It has negative eigenvalues. How can this be?

Hint: The matrix is not the right one to be used to describe the transformed linear equations.

-

6.4 Symmetric matrices 6-68

Definition (Symmetric matrix): A matrix A is symmetric if A = AT.

When $A$ is symmetric, its eigenvalues are all real; it has $n$ orthogonal eigenvectors (so it can be diagonalized). We can then normalize these orthogonal eigenvectors to obtain an orthonormal basis.

Definition (Symmetric diagonalization): A symmetric matrix $A$ can be written as

$$A = Q\Lambda Q^{-1} = Q\Lambda Q^T \quad\text{with } Q^{-1} = Q^T$$

where $\Lambda$ is a diagonal matrix with the real eigenvalues on the diagonal.

-

6.4 Symmetric matrices 6-69

Theorem (Spectral theorem or principal axis theorem) A symmetric matrix $A$ with distinct eigenvalues can be written as

$$A = Q\Lambda Q^{-1} = Q\Lambda Q^T \quad\text{with } Q^{-1} = Q^T$$

where $\Lambda$ is a diagonal matrix with the real eigenvalues on the diagonal.

Proof:

We have proved on Slide 6-16 that a symmetric matrix has real eigenvalues and a skew-symmetric matrix has pure imaginary eigenvalues.

Next, we prove that eigenvectors are orthogonal, i.e., $v_1^Tv_2 = 0$, for unequal eigenvalues $\lambda_1 \neq \lambda_2$.

$$Av_1 = \lambda_1v_1 \text{ and } Av_2 = \lambda_2v_2
\;\Rightarrow\;
(\lambda_1v_1)^Tv_2 = (Av_1)^Tv_2 = v_1^TA^Tv_2 = v_1^TAv_2 = v_1^T\lambda_2v_2$$

which implies

$$(\lambda_1 - \lambda_2)\,v_1^Tv_2 = 0.$$

Therefore, $v_1^Tv_2 = 0$ because $\lambda_1 \neq \lambda_2$.

-

6.4 Symmetric matrices 6-70

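$A = Q\Lambda Q^T$ implies that

$$A = \lambda_1q_1q_1^T + \cdots + \lambda_nq_nq_n^T = \lambda_1P_1 + \cdots + \lambda_nP_n$$

Recall from Slide 4-37 that the projection matrix $P_i$ onto a unit vector $q_i$ is

$$P_i = q_i(q_i^Tq_i)^{-1}q_i^T = q_iq_i^T$$

So, $Ax$ is the sum of the projections of the vector $x$ onto each eigenspace, each weighted by its eigenvalue:

$$Ax = \lambda_1P_1x + \cdots + \lambda_nP_nx.$$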
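A small numerical illustration of the decomposition into projections, assuming the example matrix $A = \begin{bmatrix} 5 & 4 \\ 4 & 5 \end{bmatrix}$ with eigenvalues $9, 1$ and unit eigenvectors $(1, 1)/\sqrt{2}$ and $(1, -1)/\sqrt{2}$ (the test vector `x` and the helper `outer` are arbitrary choices made for this sketch):

```python
import math

s = 1 / math.sqrt(2)
lams = [9.0, 1.0]
qs = [[s, s], [s, -s]]   # orthonormal eigenvectors of A = [[5, 4], [4, 5]]

def outer(q):
    """Projection matrix q q^T onto the unit vector q."""
    return [[qi * qj for qj in q] for qi in q]

P = [outer(q) for q in qs]
# Rebuild A as lambda_1 P_1 + lambda_2 P_2.
A = [[sum(lam * Pk[i][j] for lam, Pk in zip(lams, P)) for j in range(2)]
     for i in range(2)]

x = [3.0, -1.0]
Ax_direct = [5 * x[0] + 4 * x[1], 4 * x[0] + 5 * x[1]]
# Same product computed as a weighted sum of projections of x.
Ax_proj = [sum(lam * sum(Pk[i][j] * x[j] for j in range(2)) for lam, Pk in zip(lams, P))
           for i in range(2)]
print(A, Ax_direct, Ax_proj)
```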

The spectral theorem can be extended to a symmetric matrix with repeated

eigenvalues.

To prove this, we need to introduce the famous Schur's theorem.

-

6.4 Symmetric matrices 6-71

Theorem (Schur's Theorem): Every square matrix $A$ can be factorized into

$$A = QTQ^{-1} \quad\text{with $T$ upper triangular and } Q^{\dagger} = Q^{-1},$$

where $\dagger$ denotes the Hermitian transpose operation (and $Q$ and $T$ can generally have complex entries!)

Further, if $A$ is real and has real eigenvalues (and hence has real eigenvectors), then $Q$ and $T$ can be chosen real.

Proof: The existence of $Q$ and $T$ such that $A = QTQ^{\dagger}$ and $Q^{\dagger} = Q^{-1}$ can be proved by induction.

The theorem trivially holds when $A$ is a $1 \times 1$ matrix.

Suppose that Schur's Theorem is valid for all $(n-1) \times (n-1)$ matrices. Then, we claim that Schur's Theorem will hold true for all $n \times n$ matrices.

This is because we can take $t_{1,1}$ and $q_1$ to be an eigenvalue and unit eigenvector of $A_{n \times n}$ (as there must exist at least one pair of eigenvalue and eigenvector for $A_{n \times n}$). Then, choose orthonormal vectors $p_2, \ldots, p_n$, each orthogonal to $q_1$, such that they together with $q_1$ span the $n$-dimensional space, and

$$P = \begin{bmatrix} q_1 & p_2 & \cdots & p_n \end{bmatrix}$$

is a (unitary) orthonormal matrix, satisfying $P^{\dagger} = P^{-1}$.

-

6.4 Symmetric matrices 6-72

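We derive

$$\begin{aligned}
P^{\dagger}AP &= \begin{bmatrix} q_1^{\dagger} \\ p_2^{\dagger} \\ \vdots \\ p_n^{\dagger} \end{bmatrix}\begin{bmatrix} Aq_1 & Ap_2 & \cdots & Ap_n \end{bmatrix} \\
&= \begin{bmatrix} q_1^{\dagger} \\ p_2^{\dagger} \\ \vdots \\ p_n^{\dagger} \end{bmatrix}\begin{bmatrix} t_{1,1}q_1 & Ap_2 & \cdots & Ap_n \end{bmatrix}
\quad (\text{since } A\underbrace{q_1}_{\text{eigenvector}} = \underbrace{t_{1,1}}_{\text{eigenvalue}}q_1) \\
&= \begin{bmatrix} t_{1,1} & t_{1,2} \ \cdots \ t_{1,n} \\ \mathbf{0} & A_{(n-1)\times(n-1)} \end{bmatrix}
\end{aligned}$$

where $t_{1,j} = q_1^{\dagger}Ap_j$ and

$$A_{(n-1)\times(n-1)} = \begin{bmatrix}
p_2^{\dagger}Ap_2 & \cdots & p_2^{\dagger}Ap_n \\
\vdots & \ddots & \vdots \\
p_n^{\dagger}Ap_2 & \cdots & p_n^{\dagger}Ap_n
\end{bmatrix}.$$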

-

6.4 Symmetric matrices 6-73

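Since Schur's Theorem is true for any $(n-1) \times (n-1)$ matrix, we can find $Q_{(n-1)\times(n-1)}$ and $T_{(n-1)\times(n-1)}$ such that

$$A_{(n-1)\times(n-1)} = Q_{(n-1)\times(n-1)}\,T_{(n-1)\times(n-1)}\,Q^{\dagger}_{(n-1)\times(n-1)}$$

and

$$Q^{\dagger}_{(n-1)\times(n-1)} = Q^{-1}_{(n-1)\times(n-1)}.$$

Finally, define

$$Q_{n\times n} = P\begin{bmatrix} 1 & \mathbf{0}^T \\ \mathbf{0} & Q_{(n-1)\times(n-1)} \end{bmatrix}$$

and

$$T_{n\times n} = \begin{bmatrix} t_{1,1} & [\,t_{1,2} \ \cdots \ t_{1,n}\,]\,Q_{(n-1)\times(n-1)} \\ \mathbf{0} & T_{(n-1)\times(n-1)} \end{bmatrix}.$$

They satisfy

$$Q^{\dagger}_{n\times n}Q_{n\times n} = \begin{bmatrix} 1 & \mathbf{0}^T \\ \mathbf{0} & Q^{\dagger}_{(n-1)\times(n-1)} \end{bmatrix}P^{\dagger}P\begin{bmatrix} 1 & \mathbf{0}^T \\ \mathbf{0} & Q_{(n-1)\times(n-1)} \end{bmatrix} = I_{n\times n}$$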

-

6.4 Symmetric matrices 6-74

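and

$$\begin{aligned}
Q_{n\times n}T_{n\times n}
&= P_{n\times n}\begin{bmatrix} 1 & \mathbf{0}^T \\ \mathbf{0} & Q_{(n-1)\times(n-1)} \end{bmatrix}\begin{bmatrix} t_{1,1} & [\,t_{1,2} \ \cdots \ t_{1,n}\,]\,Q_{(n-1)\times(n-1)} \\ \mathbf{0} & T_{(n-1)\times(n-1)} \end{bmatrix} \\
&= P_{n\times n}\begin{bmatrix} t_{1,1} & [\,t_{1,2} \ \cdots \ t_{1,n}\,]\,Q_{(n-1)\times(n-1)} \\ \mathbf{0} & Q_{(n-1)\times(n-1)}T_{(n-1)\times(n-1)} \end{bmatrix} \\
&= P_{n\times n}\begin{bmatrix} t_{1,1} & [\,t_{1,2} \ \cdots \ t_{1,n}\,]\,Q_{(n-1)\times(n-1)} \\ \mathbf{0} & A_{(n-1)\times(n-1)}Q_{(n-1)\times(n-1)} \end{bmatrix} \\
&= P_{n\times n}\begin{bmatrix} t_{1,1} & t_{1,2} \ \cdots \ t_{1,n} \\ \mathbf{0} & A_{(n-1)\times(n-1)} \end{bmatrix}\begin{bmatrix} 1 & \mathbf{0}^T \\ \mathbf{0} & Q_{(n-1)\times(n-1)} \end{bmatrix} \\
&= P_{n\times n}\big(P^{\dagger}_{n\times n}A_{n\times n}P_{n\times n}\big)\begin{bmatrix} 1 & \mathbf{0}^T \\ \mathbf{0} & Q_{(n-1)\times(n-1)} \end{bmatrix} = A_{n\times n}Q_{n\times n}
\end{aligned}$$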

-

6.4 Symmetric matrices 6-75

It remains to prove that if $A$ is real and has real eigenvalues, then $Q$ and $T$ can be chosen real, which can be similarly proved by induction.

Suppose the statement "if $A$ has real eigenvalues, then $Q$ and $T$ can be chosen real" is true for all $(n-1) \times (n-1)$ matrices. Then, the claim should be true for all $n \times n$ matrices.

For a given $A_{n\times n}$ with real eigenvalues (and real eigenvectors), we can certainly have real $t_{1,1}$ and $q_1$, and so are $p_2, \ldots, p_n$. This makes the resultant $A_{(n-1)\times(n-1)}$ and $t_{1,2}, \ldots, t_{1,n}$ real.

The eigenvector associated with a real eigenvalue can be chosen real. For complex $v$, and real $\lambda$ and $A$, by its definition,

$$Av = \lambda v$$

is equivalent to

$$A\,\mathrm{Re}\{v\} = \lambda\,\mathrm{Re}\{v\} \quad\text{and}\quad A\,\mathrm{Im}\{v\} = \lambda\,\mathrm{Im}\{v\}.$$

-

6.4 Symmetric matrices 6-76

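The proof is completed by noting that the eigenvalues of $A_{(n-1)\times(n-1)}$, satisfying

$$P^{\dagger}AP = \begin{bmatrix} t_{1,1} & t_{1,2} \ \cdots \ t_{1,n} \\ \mathbf{0} & A_{(n-1)\times(n-1)} \end{bmatrix},$$

are also eigenvalues of $A_{n\times n}$; hence, they are all real. So, by the validity of the claimed statement for $(n-1) \times (n-1)$ matrices, the existence of real $Q_{(n-1)\times(n-1)}$ and real $T_{(n-1)\times(n-1)}$ satisfying

$$A_{(n-1)\times(n-1)} = Q_{(n-1)\times(n-1)}\,T_{(n-1)\times(n-1)}\,Q^{\dagger}_{(n-1)\times(n-1)}$$

and

$$Q^{\dagger}_{(n-1)\times(n-1)} = Q^{-1}_{(n-1)\times(n-1)}$$

is confirmed.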

-

6.4 Symmetric matrices 6-77

Two important facts:

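$P^{\dagger}AP$ and $A$ have the same eigenvalues but possibly different eigenvectors. A simple proof is that for $v' = P^{\dagger}v$,

$$(P^{\dagger}AP)v' = P^{\dagger}Av = P^{\dagger}(\lambda v) = \lambda P^{\dagger}v = \lambda v'.$$

(Section 6.1: Problem 26 or see Slide 6-25)

$$\det(A) = \det\left(\begin{bmatrix} B & C \\ 0 & D \end{bmatrix}\right) = \det(B)\det(D)$$

Thus,

$$P^{\dagger}AP = \begin{bmatrix} t_{1,1} & t_{1,2} \ \cdots \ t_{1,n} \\ \mathbf{0} & A_{(n-1)\times(n-1)} \end{bmatrix}$$

implies that the eigenvalues of $A_{(n-1)\times(n-1)}$ should be eigenvalues of $P^{\dagger}A_{n\times n}P$.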

-

6.4 Symmetric matrices 6-78

Theorem (Spectral theorem or principal axis theorem) A symmetric matrix $A$ (not necessarily with distinct eigenvalues) can be written as

$$A = Q\Lambda Q^{-1} = Q\Lambda Q^T \quad\text{with } Q^{-1} = Q^T$$

where $\Lambda$ is a diagonal matrix with the real eigenvalues on the diagonal.

Proof:

A symmetric matrix certainly satisfies Schur's Theorem: $A = QTQ^{\dagger}$ with $T$ upper triangular and $Q^{\dagger} = Q^{-1}$.

$A$ has real eigenvalues. So, both $T$ and $Q$ are real. By $A^T = A$, we have

$$A^T = QT^TQ^T = QTQ^T = A.$$

This immediately gives

$$T^T = T$$

which implies the off-diagonal entries are zero.

-

6.4 Symmetric matrices 6-79

By $AQ = QT$, equivalently,

$$\begin{aligned} Aq_1 &= t_{1,1}q_1 \\ Aq_2 &= t_{2,2}q_2 \\ &\ \,\vdots \\ Aq_n &= t_{n,n}q_n \end{aligned}$$

we know that $Q$ is the matrix of eigenvectors (and there are $n$ of them) and $T$ is the matrix of eigenvalues.

This result immediately indicates that a symmetric $A$ can always be diagonalized.

Summary

A symmetric matrix has real eigenvalues and $n$ real orthogonal eigenvectors.

-

6.4 Problem discussions 6-80

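(Problem 21, Section 6.4) (Recommended) This matrix $M$ is skew-symmetric and also ____. Then all its eigenvalues are pure imaginary and they also have $|\lambda| = 1$. ($\|Mx\| = \|x\|$ for every $x$ so $\|\lambda x\| = \|x\|$ for eigenvectors.) Find all four eigenvalues from the trace of $M$:

$$M = \frac{1}{\sqrt{3}}\begin{bmatrix} 0 & 1 & 1 & 1 \\ -1 & 0 & -1 & 1 \\ -1 & 1 & 0 & -1 \\ -1 & -1 & 1 & 0 \end{bmatrix} \text{ can only have eigenvalues } i \text{ or } -i.$$

Thinking over Problem 21: The eigenvalues of an orthogonal matrix satisfy $|\lambda| = 1$.

Proof:

$$\|Qv\|^2 = (Qv)^{\dagger}Qv = v^{\dagger}Q^{\dagger}Qv = v^{\dagger}v = \|v\|^2$$

implies

$$\|\lambda v\| = \|v\|.$$

$|\lambda| = 1$ and pure imaginary implies $\lambda = \pm i$.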

-

6.4 Problem discussions 6-81

(Problem 15, Section 6.4) Show that $A$ (symmetric but complex) has only one line of eigenvectors:

$$A = \begin{bmatrix} i & 1 \\ 1 & -i \end{bmatrix} \text{ is not even diagonalizable: eigenvalues } \lambda = 0, 0.$$

$A^T = A$ is not such a special property for complex matrices. The good property is $\bar{A}^T = A$ (Section 10.2). Then all $\lambda$'s are real and eigenvectors are orthogonal.

Thinking over Problem 15: That a symmetric matrix $A$ satisfying $A^T = A$ has real eigenvalues and $n$ orthogonal eigenvectors is only true for real symmetric matrices.

For a complex matrix $A$, we need to rephrase it as "A Hermitian symmetric matrix $A$ satisfying $A^{\dagger} = A$ has real eigenvalues and $n$ orthogonal eigenvectors."

Inner product and norm for complex vectors:

$$v \cdot w = w^{\dagger}v \quad\text{and}\quad \|v\|^2 = v^{\dagger}v$$

-

6.4 Problem discussions 6-82

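Proof of the claim on the previous slide (a Hermitian matrix has real eigenvalues): Suppose $Av = \lambda v$. Then,

$$\begin{aligned}
Av = \lambda v
&\;\Rightarrow\; (Av)^{\dagger}v = (\lambda v)^{\dagger}v \quad (\text{i.e., } (\bar{A}\bar{v})^Tv = (\bar{\lambda}\bar{v})^Tv) \\
&\;\Rightarrow\; v^{\dagger}A^{\dagger}v = \bar{\lambda}\,v^{\dagger}v \\
&\;\Rightarrow\; v^{\dagger}Av = \bar{\lambda}\,v^{\dagger}v \\
&\;\Rightarrow\; v^{\dagger}\lambda v = \bar{\lambda}\,v^{\dagger}v \\
&\;\Rightarrow\; \lambda\|v\|^2 = \bar{\lambda}\|v\|^2 \\
&\;\Rightarrow\; \lambda = \bar{\lambda}
\end{aligned}$$

(Problem 28, Section 6.4) For complex matrices, the symmetry $A^T = A$ that produces real eigenvalues changes to $\bar{A}^T = A$. From $\det(A - \lambda I) = 0$, find the eigenvalues of the 2 by 2 "Hermitian" matrix $A = \begin{bmatrix} 4 & 2+i \\ 2-i & 0 \end{bmatrix} = \bar{A}^T$. To see why eigenvalues are real when $\bar{A}^T = A$, adjust equation (1) of the text to $\bar{A}\bar{x} = \bar{\lambda}\bar{x}$. (See the proof above.)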

-

6.4 Problem discussions 6-83

(Problem 27, Section 6.4) (MATLAB) Take two symmetric matrices with different eigenvectors, say $A = \begin{bmatrix} 1 & 0 \\ 0 & 2 \end{bmatrix}$ and $B = \begin{bmatrix} 8 & 1 \\ 1 & 0 \end{bmatrix}$. Graph the eigenvalues $\lambda_1(A + tB)$ and $\lambda_2(A + tB)$ for $-8 < t < 8$. Peter Lax says on page 113 of Linear Algebra that $\lambda_1$ and $\lambda_2$ appear to be on a collision course at certain values of $t$. "Yet at the last minute they turn aside." How close do they come?

Correction for Problem 27: The problem should be "... Graph the eigenvalues $\lambda_1$ and $\lambda_2$ of $A + tB$ for $-8 < t < 8$. ..."

Hint: Draw the pictures of $\lambda_1(t)$ and $\lambda_2(t)$ with respect to $t$ and check $\lambda_1(t) - \lambda_2(t)$.
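The hint can be followed numerically without MATLAB. The sketch below is one possible approach, not the textbook's: it uses the closed-form eigenvalues of a symmetric $2 \times 2$ matrix and scans a grid of $t$ values for the smallest gap $\lambda_1(t) - \lambda_2(t)$. The function names and the grid resolution are arbitrary choices.

```python
import math

def eigs_sym_2x2(a, b, d):
    """Eigenvalues (smaller, larger) of the symmetric matrix [[a, b], [b, d]]."""
    mean = (a + d) / 2.0
    radius = math.hypot((a - d) / 2.0, b)
    return mean - radius, mean + radius

def gap(t):
    # A + tB = [[1 + 8t, t], [t, 2]] for A = diag(1, 2) and B = [[8, 1], [1, 0]]
    lo, hi = eigs_sym_2x2(1 + 8 * t, t, 2.0)
    return hi - lo

# Scan -8 <= t <= 8 on a grid with spacing 1e-4 and keep the closest approach.
ts = [-8 + 16 * k / 160000 for k in range(160001)]
t_min = min(ts, key=gap)
closest = gap(t_min)
print(t_min, closest)
```

Since the gap equals $\sqrt{(8t-1)^2 + 4t^2}$, the scan should land near $t = 2/17$ with a gap close to $1/\sqrt{17}$: the two curves approach each other but never meet.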

-

6.4 Problem discussions 6-84

(Problem 29, Section 6.4) Normal matrices have $\bar{A}^TA = A\bar{A}^T$. For real matrices, $A^TA = AA^T$ includes symmetric, skew-symmetric, and orthogonal matrices. Those have real $\lambda$, imaginary $\lambda$ and $|\lambda| = 1$. Other normal matrices can have any complex eigenvalues $\lambda$.

Key point: Normal matrices have $n$ orthonormal eigenvectors. Those vectors $x_i$ probably will have complex components. In that complex case orthogonality means $\bar{x}_i^Tx_j = 0$ as Chapter 10 explains. Inner products (dot products) become $\bar{x}^Ty$.

The test for $n$ orthonormal columns in $Q$ becomes $\bar{Q}^TQ = I$ instead of $Q^TQ = I$.

$A$ has $n$ orthonormal eigenvectors ($A = Q\Lambda\bar{Q}^T$) if and only if $A$ is normal.

(a) Start from $A = Q\Lambda\bar{Q}^T$ with $\bar{Q}^TQ = I$. Show that $\bar{A}^TA = A\bar{A}^T$.

(b) Now start from $\bar{A}^TA = A\bar{A}^T$. Schur found $A = QT\bar{Q}^T$ for every matrix $A$, with a triangular $T$. For normal matrices we must show (in 3 steps) that this $T$ will actually be diagonal. Then $T = \Lambda$.

Step 1. Put $A = QT\bar{Q}^T$ into $\bar{A}^TA = A\bar{A}^T$ to find $\bar{T}^TT = T\bar{T}^T$.

Step 2. Suppose $T = \begin{bmatrix} a & b \\ 0 & d \end{bmatrix}$ has $\bar{T}^TT = T\bar{T}^T$. Prove that $b = 0$.

Step 3. Extend Step 2 to size $n$. A normal triangular $T$ must be diagonal.

-

6.4 Problem discussions 6-85

Important conclusion from Problem 29: A matrix $A$ has $n$ orthogonal eigenvectors if, and only if, $A$ is normal.

Definition (Normal matrix): A matrix $A$ is normal if $A^{\dagger}A = AA^{\dagger}$.

Proof:

Schur's theorem: $A = QTQ^{\dagger}$ with $T$ upper triangular and $Q^{\dagger} = Q^{-1}$.

$A^{\dagger}A = AA^{\dagger} \;\Rightarrow\; T^{\dagger}T = TT^{\dagger} \;\Rightarrow\; T$ diagonal $\;\Rightarrow\; Q$ is the matrix of eigenvectors by $AQ = QT$.

-

6.5 Positive definite matrices 6-86

Definition (Positive definite): A symmetric matrix $A$ is positive definite if its eigenvalues are all positive.

The above definition only applies to a symmetric matrix because a non-symmetric matrix may have complex eigenvalues (which cannot be compared with zero)!

Properties of positive definite (symmetric) matrices

Equivalent Definition: (symmetric) $A$ is positive definite if, and only if, $x^TAx > 0$ for all non-zero $x$.

$x^TAx$ is usually referred to as the quadratic form!

The proofs can be found in Problems 18 and 19.

(Problem 18, Section 6.5) If $Ax = \lambda x$ then $x^TAx =$ ____. If $x^TAx > 0$, prove that $\lambda > 0$.

-

6.5 Positive definite matrices 6-87

(Problem 19, Section 6.5) Reverse Problem 18 to show that if all $\lambda > 0$ then $x^TAx > 0$. We must do this for every nonzero $x$, not just the eigenvectors. So write $x$ as a combination of the eigenvectors and explain why all "cross terms" are $x_i^Tx_j = 0$. Then $x^TAx$ is

$$(c_1x_1 + \cdots + c_nx_n)^T(c_1\lambda_1x_1 + \cdots + c_n\lambda_nx_n) = c_1^2\lambda_1x_1^Tx_1 + \cdots + c_n^2\lambda_nx_n^Tx_n > 0.$$

Proof (Problems 18 and 19):

(only if part: Problem 19) $Av_i = \lambda_iv_i$ implies $v_i^TAv_i = \lambda_iv_i^Tv_i > 0$ for all eigenvalues $\{\lambda_i\}_{i=1}^n$ and eigenvectors $\{v_i\}_{i=1}^n$. The proof is completed by noting that with $x = \sum_{i=1}^n c_iv_i$ and $\{v_i\}_{i=1}^n$ orthogonal,

$$x^T(Ax) = \Big(\sum_{i=1}^n c_iv_i^T\Big)\Big(\sum_{j=1}^n c_j\lambda_jv_j\Big) = \sum_{i=1}^n c_i^2\lambda_i\|v_i\|^2 > 0.$$

(if part: Problem 18) Taking $x = v_i$, we obtain that $v_i^T(Av_i) = v_i^T(\lambda_iv_i) = \lambda_i\|v_i\|^2 > 0$, which implies $\lambda_i > 0$.

-

6.5 Positive definite matrices 6-88

Based on the above proposition, we can conclude, similarly to two positive scalars (if $a$ and $b$ are both positive, so is $a + b$), that

Proposition: If $A$ and $B$ are both positive definite, so is $A + B$.

The next property provides an easy way to construct positive definite matrices.

Proposition: If $A_{n\times n} = R^T_{n\times m}R_{m\times n}$ and $R_{m\times n}$ has linearly independent columns, then $A$ is positive definite.

Proof:

$x$ non-zero $\;\Rightarrow\; Rx$ non-zero. Then, $x^T(R^TR)x = (Rx)^T(Rx) = \|Rx\|^2 > 0$.

-

6.5 Positive definite matrices 6-89

Since a (symmetric) positive definite $A = Q\Lambda Q^T$, we can choose $R = Q\Lambda^{1/2}Q^T$, in which case $R$ is a square matrix. This observation gives another equivalent definition of positive definite matrices.

Equivalent Definition: $A_{n\times n}$ is positive definite if, and only if, there exists $R_{m\times n}$ with independent columns such that $A = R^TR$.

-

6.5 Positive definite matrices 6-90

Equivalent Definition: $A_{n\times n}$ is positive definite if, and only if, all pivots are positive.

Proof:

(By LDU decomposition,) $A = LDL^T$, where $D$ is a diagonal matrix with the pivots on the diagonal, and $L$ is a lower triangular matrix with 1's on the diagonal. Then,

$$\begin{aligned}
x^TAx &= x^T(LDL^T)x = (L^Tx)^TD(L^Tx) \\
&= y^TDy \quad\text{where } y = L^Tx = \begin{bmatrix} l_1^Tx \\ \vdots \\ l_n^Tx \end{bmatrix} \ (l_i \text{ is the } i\text{-th column of } L) \\
&= d_1y_1^2 + \cdots + d_ny_n^2 \\
&= d_1\big(l_1^Tx\big)^2 + \cdots + d_n\big(l_n^Tx\big)^2.
\end{aligned}$$

So, if all pivots are positive, $x^TAx > 0$ for all non-zero $x$. Conversely, if $x^TAx > 0$ for all non-zero $x$, which in turn implies $y^TDy > 0$ for all non-zero $y$ (as $L$ is invertible), then pivot $d_i$ must be positive, by the choice of $y_j = 0$ except for $j = i$.
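The pivot test translates directly into a short routine. The sketch below is a minimal illustration (the function names are hypothetical): it runs Gaussian elimination without row exchanges on a symmetric matrix, collects the pivots, and reports positive definiteness when all $n$ pivots are positive. It stops as soon as a zero pivot appears, since elimination cannot continue without a row exchange.

```python
def pivots(A):
    """Pivots from Gaussian elimination without row exchanges."""
    U = [[float(x) for x in row] for row in A]
    n = len(U)
    d = []
    for k in range(n):
        d.append(U[k][k])
        if U[k][k] == 0:
            break  # elimination cannot continue without a row exchange
        for i in range(k + 1, n):
            m = U[i][k] / U[k][k]
            for j in range(k, n):
                U[i][j] -= m * U[k][j]
    return d

def is_positive_definite(A):
    """Pivot test for a symmetric matrix A: all n pivots must be positive."""
    d = pivots(A)
    return len(d) == len(A) and all(p > 0 for p in d)

K = [[2, -1, 0], [-1, 2, -1], [0, -1, 2]]   # pivots 2, 3/2, 4/3
M = [[1, 2], [2, 1]]                        # pivots 1, -3
Z = [[4, 1, 1], [1, 0, 2], [1, 2, 5]]       # zero on the diagonal

print(pivots(K), is_positive_definite(K))
print(pivots(M), is_positive_definite(M))
print(is_positive_definite(Z))
```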

-

6.5 Positive definite matrices 6-91

An extension of the previous proposition is:

Suppose $A = BCB^T$ with $B$ invertible and $C$ diagonal. Then, $A$ is positive definite if, and only if, the diagonal entries of $C$ are all positive! See Problem 35.

(Problem 35, Section 6.5) Suppose $C$ is positive definite (so $y^TCy > 0$ whenever $y \neq 0$) and $A$ has independent columns (so $Ax \neq 0$ whenever $x \neq 0$). Apply the energy test to $x^TA^TCAx$ to show that $A^TCA$ is positive definite: the crucial matrix in engineering.

-

6.5 Positive definite matrices 6-92

Equivalent Definition: $A_{n\times n}$ is positive definite if, and only if, the $n$ upper left determinants (i.e., the $(n-1)$ leading principal minors and $\det(A)$) are all positive.

Definition (Minors, principal minors and leading principal minors):

A minor of a matrix $A$ is the determinant of some smaller square matrix, obtained by removing one or more of its rows or columns.

The first-order (respectively, second-order, etc.) minor is a minor obtained by removing $(n-1)$ (respectively, $(n-2)$, etc.) rows and columns.

A principal minor of a matrix is a minor obtained by removing the same rows and columns.

A leading principal minor of a matrix is a minor obtained by removing the last few rows and columns.

-

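6.5 Positive definite matrices 6-93

The example below gives three upper left determinants (or two leading principal minors and $\det(A)$): $\det(A_{1\times1})$, $\det(A_{2\times2})$ and $\det(A_{3\times3})$, where $A_{k\times k}$ denotes the upper left $k \times k$ block of

$$\begin{bmatrix} a_{1,1} & a_{1,2} & a_{1,3} \\ a_{2,1} & a_{2,2} & a_{2,3} \\ a_{3,1} & a_{3,2} & a_{3,3} \end{bmatrix}$$

Proof of the equivalent definition: This can be proved based on Slide 5-18. By LU decomposition,

$$A = \begin{bmatrix}
1 & 0 & 0 & \cdots & 0 \\
l_{2,1} & 1 & 0 & \cdots & 0 \\
l_{3,1} & l_{3,2} & 1 & \cdots & 0 \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
l_{n,1} & l_{n,2} & l_{n,3} & \cdots & 1
\end{bmatrix}\begin{bmatrix}
d_1 & u_{1,2} & u_{1,3} & \cdots & u_{1,n} \\
0 & d_2 & u_{2,3} & \cdots & u_{2,n} \\
0 & 0 & d_3 & \cdots & u_{3,n} \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
0 & 0 & 0 & \cdots & d_n
\end{bmatrix}
\;\Rightarrow\; d_k = \frac{\det(A_{k\times k})}{\det(A_{(k-1)\times(k-1)})}$$

(because $\det(A_{k\times k}) = d_1d_2\cdots d_k$).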

-

6.5 Positive definite matrices 6-94

Equivalent Definition: $A_{n\times n}$ is positive definite if, and only if, all $(2^n - 2)$ principal minors and $\det(A)$ are positive.

Based on the above equivalent definitions, a positive definite matrix cannot have either a zero or a negative value on its main diagonal. See Problem 16.

(Problem 16, Section 6.5) A positive definite matrix cannot have a zero (or even worse, a negative number) on its diagonal. Show that this matrix fails to have $x^TAx > 0$:

$$\begin{bmatrix} x_1 & x_2 & x_3 \end{bmatrix}\begin{bmatrix} 4 & 1 & 1 \\ 1 & 0 & 2 \\ 1 & 2 & 5 \end{bmatrix}\begin{bmatrix} x_1 \\ x_2 \\ x_3 \end{bmatrix} \text{ is not positive when } (x_1, x_2, x_3) = (\ \ ,\ \ ,\ \ ).$$

With the above discussion of positive definite matrices, we can proceed to define similar notions like negative definite, positive semidefinite, and negative semidefinite matrices.

-

6.5 Positive semidefinite (or nonnegative definite) 6-95

Definition (Positive semidefinite): A symmetric matrix $A$ is positive semidefinite if its eigenvalues are all nonnegative.

Equivalent Definition: $A$ is positive semidefinite if, and only if, $x^TAx \ge 0$ for all non-zero $x$.

Equivalent Definition: $A_{n\times n}$ is positive semidefinite if, and only if, there exists $R_{m\times n}$ (perhaps with dependent columns) such that $A = R^TR$.

Equivalent Definition: $A_{n\times n}$ is positive semidefinite if, and only if, all pivots are nonnegative.

Equivalent Definition: $A_{n\times n}$ is positive semidefinite if, and only if, all $(2^n - 2)$ principal minors and $\det(A)$ are non-negative.

Example. The matrix $A = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & -1 \end{bmatrix}$, which is not positive semidefinite, has $(2^3 - 2)$ principal minors:

$$\det\left(\begin{bmatrix} 1 & 0 \\ 0 & 0 \end{bmatrix}\right),\ \det\left(\begin{bmatrix} 1 & 0 \\ 0 & -1 \end{bmatrix}\right),\ \det\left(\begin{bmatrix} 0 & 0 \\ 0 & -1 \end{bmatrix}\right),\ \det([1]),\ \det([0]),\ \det([-1]).$$

Note: It is not sufficient to define positive semidefiniteness based on the non-negativity of the leading principal minors and $\det(A)$. Check the above example.
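The note can be verified by enumerating every principal minor of the example matrix. The sketch below uses a simple cofactor-expansion determinant, adequate only for tiny matrices; the helper names are hypothetical.

```python
from itertools import combinations

def det(M):
    """Determinant by cofactor expansion along the first row (fine for tiny matrices)."""
    if len(M) == 1:
        return M[0][0]
    return sum((-1) ** j * M[0][j] * det([row[:j] + row[j + 1:] for row in M[1:]])
               for j in range(len(M)))

def principal_minors(A):
    """Map each non-empty index set S (0-based) to det(A[S, S])."""
    n = len(A)
    return {S: det([[A[i][j] for j in S] for i in S])
            for k in range(1, n + 1) for S in combinations(range(n), k)}

A = [[1, 0, 0], [0, 0, 0], [0, 0, -1]]
minors = principal_minors(A)              # 2^3 - 2 proper minors plus det(A)
leading = [minors[tuple(range(k))] for k in range(1, 4)]

print(leading)   # upper left determinants
print(minors)    # all principal minors, keyed by the kept indices
```

The upper left determinants are all non-negative here, yet the minors keyed by `(2,)` and `(0, 2)` are negative, so the leading minors alone do not detect that $A$ fails to be positive semidefinite.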

-

6.5 Negative definite, negative semidefinite, indefinite 6-96

We can similarly define negative definite.

Definition (Negative definite): A symmetric matrix $A$ is negative definite if its eigenvalues are all negative.

Equivalent Definition: $A$ is negative definite if, and only if, $x^TAx < 0$ for all non-zero $x$.

-

6.5 Negative definite, negative semidefinite, indefinite 6-97

We can also similarly define negative semidefinite.

Definition (Negative semidefinite): A symmetric matrix $A$ is negative semidefinite if its eigenvalues are all non-positive.

Equivalent Definition: $A$ is negative semidefinite if, and only if, $x^TAx \le 0$ for all non-zero $x$.

Equivalent Definition: $A_{n\times n}$ is negative semidefinite if, and only if, there exists $R_{m\times n}$ (possibly with dependent columns) such that $A = -R^TR$.

Equivalent Definition: $A_{n\times n}$ is negative semidefinite if, and only if, all pivots are non-positive.

Equivalent Definition: $A_{n\times n}$ is negative semidefinite if, and only if, all odd-order principal minors are non-positive and all even-order principal minors are non-negative (with $\det(A)$ counted as the principal minor of order $n$).

Note: It is not sufficient to define negative semidefiniteness based on the signs of the leading principal minors and $\det(A)$ alone.

Finally, if some of the eigenvalues of a symmetric matrix are positive, and some are negative, the matrix will be referred to as indefinite.

-

6.5 Quadratic form 6-98

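For a positive definite matrix $A_{2\times2}$,

$$\begin{aligned}
x^TAx &= x^T(Q\Lambda Q^T)x = (Q^Tx)^T\Lambda(Q^Tx) \\
&= y^T\Lambda y \quad\text{where } y = Q^Tx = \begin{bmatrix} q_1^Tx \\ q_2^Tx \end{bmatrix} \\
&= \lambda_1\big(q_1^Tx\big)^2 + \lambda_2\big(q_2^Tx\big)^2
\end{aligned}$$

So, $x^TAx = c$ gives an ellipse if $c > 0$.

Example. $A = \begin{bmatrix} 5 & 4 \\ 4 & 5 \end{bmatrix}$

$\lambda_1 = 9$ and $\lambda_2 = 1$, and $q_1 = \dfrac{1}{\sqrt{2}}\begin{bmatrix} 1 \\ 1 \end{bmatrix}$ and $q_2 = \dfrac{1}{\sqrt{2}}\begin{bmatrix} 1 \\ -1 \end{bmatrix}$.

$$x^TAx = \lambda_1\big(q_1^Tx\big)^2 + \lambda_2\big(q_2^Tx\big)^2 = 9\Big(\frac{x_1 + x_2}{\sqrt{2}}\Big)^2 + \Big(\frac{x_1 - x_2}{\sqrt{2}}\Big)^2$$

$q_1^Tx = 0$ is an axis perpendicular to $q_1$. (Not along $q_1$ as the textbook said.)

$q_2^Tx = 0$ is an axis perpendicular to $q_2$.

Tip: $A = Q\Lambda Q^T$ is called the principal axis theorem (cf. Slide 6-69) because $x^TAx = y^T\Lambda y = c$ is an ellipse with axes along the eigenvectors.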

-

6.5 Problem discussions 6-99

(Problem 13, Section 6.5) Find a matrix with $a > 0$ and $c > 0$ and $a + c > 2b$ that has a negative eigenvalue.

Missing point in Problem 13: The matrix to be determined is of the shape $\begin{bmatrix} a & b \\ b & c \end{bmatrix}$.

-

6.6 Similar matrices 6-100

Definition (Similarity): Two matrices $A$ and $B$ are similar if $B = M^{-1}AM$ for some invertible $M$.

Theorem: $A$ and $M^{-1}AM$ have the same eigenvalues.

Proof: For eigenvalue $\lambda$ and eigenvector $v$ of $A$, define $v' = M^{-1}v$. We then derive

$$Bv' = (M^{-1}AM)v' = M^{-1}AM(M^{-1}v) = M^{-1}Av = M^{-1}\lambda v = \lambda M^{-1}v = \lambda v'$$

So, $\lambda$ is also an eigenvalue of $M^{-1}AM$ (associated with eigenvector $v' = M^{-1}v$).

Notes:

The LU decomposition of a symmetric matrix $A$ gives $A = LDL^T$, but $A$ and $D$ (pivot matrix) apparently may have different eigenvalues. Why? Because $(L^T)^{-1} \neq L$. Similarity is defined based on $M^{-1}$, not $M^T$.

The converse to the above theorem is wrong! In other words, we cannot say that two matrices with the same eigenvalues are similar.

Example. $A = \begin{bmatrix} 0 & 1 \\ 0 & 0 \end{bmatrix}$ and $B = \begin{bmatrix} 0 & 0 \\ 0 & 0 \end{bmatrix}$ have the same eigenvalues $0, 0$, but they are not similar.

-

6.6 Jordan form 6-101

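Why introduce matrix similarity?

Answer: We can then extend the diagonalization of a matrix with fewer than $n$ eigenvectors to the Jordan form.

For a non-diagonalizable matrix $A$, we can find an invertible $M$ such that

$$A = MJM^{-1},$$

where

$$J = \begin{bmatrix} J_1 & 0 & \cdots & 0 \\ 0 & J_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & J_s \end{bmatrix}$$

and $s$ is the number of independent eigenvectors of $A$ (one block per eigenvector; several blocks may share the same eigenvalue, and the sizes of the blocks belonging to an eigenvalue add up to its multiplicity), and $J_i$ is of the form

$$J_i = \begin{bmatrix}
\lambda_i & 1 & 0 & \cdots & 0 \\
0 & \lambda_i & 1 & \cdots & 0 \\
0 & 0 & \lambda_i & \ddots & \vdots \\
\vdots & \vdots & \vdots & \ddots & 1 \\
0 & 0 & 0 & \cdots & \lambda_i
\end{bmatrix}$$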

-

6.6 Jordan form 6-102

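The idea behind the Jordan form is that $A$ is similar to $J$.

Based on this, we can now compute

$$A^{100} = MJ^{100}M^{-1} = M\begin{bmatrix} J_1^{100} & 0 & \cdots & 0 \\ 0 & J_2^{100} & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & J_s^{100} \end{bmatrix}M^{-1}.$$

How to find $M_i$ corresponding to $J_i$?

Answer: By $AM = MJ$ with $M = \begin{bmatrix} M_1 & M_2 & \cdots & M_s \end{bmatrix}$, we know

$$AM_i = M_iJ_i \quad\text{for } 1 \le i \le s.$$

Specifically, assume that the block $J_i$ is of size two. Then,

$$A\begin{bmatrix} v_i^{(1)} & v_i^{(2)} \end{bmatrix} = \begin{bmatrix} v_i^{(1)} & v_i^{(2)} \end{bmatrix}\begin{bmatrix} \lambda_i & 1 \\ 0 & \lambda_i \end{bmatrix}$$

which is equivalent to

$$\begin{cases} Av_i^{(1)} = \lambda_iv_i^{(1)} \\ Av_i^{(2)} = v_i^{(1)} + \lambda_iv_i^{(2)} \end{cases}
\;\Rightarrow\;
\begin{cases} (A - \lambda_iI)v_i^{(1)} = 0 \\ (A - \lambda_iI)v_i^{(2)} = v_i^{(1)} \end{cases}$$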
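A tiny worked instance of the chain equations, using the matrix $A = \begin{bmatrix} 0 & 1 \\ -1 & 2 \end{bmatrix}$ (repeated eigenvalue 1, only one eigenvector). The generalized eigenvector `v2` below is one of many valid choices and was found by hand; the sketch only checks that $AM = MJ$.

```python
def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

A = [[0, 1], [-1, 2]]   # repeated eigenvalue 1, one eigenvector
lam = 1
v1 = [1, 1]             # solves (A - I) v1 = 0
v2 = [0, 1]             # solves (A - I) v2 = v1 (any v2 + c * v1 also works)

# (A - lam I) applied to v2 should reproduce v1.
chain = [(A[0][0] - lam) * v2[0] + A[0][1] * v2[1],
         A[1][0] * v2[0] + (A[1][1] - lam) * v2[1]]

M = [[v1[0], v2[0]], [v1[1], v2[1]]]   # columns v1, v2
J = [[lam, 1], [0, lam]]
AM = matmul(A, M)
MJ = matmul(M, J)
print(chain, AM, MJ)
```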

-

6.6 Problem discussions 6-103

(Problem 14, Section 6.6) Prove that $A^T$ is always similar to $A$ (we know the $\lambda$'s are the same):

1. For one Jordan block $J_i$: Find $M_i$ so that $M_i^{-1}J_iM_i = J_i^T$.

2. For any $J$ with blocks $J_i$: Build $M_0$ from blocks so that $M_0^{-1}JM_0 = J^T$.

3. For any $A = MJM^{-1}$: Show that $A^T$ is similar to $J^T$ and so to $J$ and to $A$.

-

6.6 Problem discussions 6-104

Thinking over Problem 14: AT and A are always similar.

Answer:

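It can be easily checked that

$$\begin{bmatrix}
u_{1,1} & u_{1,2} & \cdots & u_{1,n} \\
u_{2,1} & u_{2,2} & \cdots & u_{2,n} \\
\vdots & \vdots & \ddots & \vdots \\
u_{n,1} & u_{n,2} & \cdots & u_{n,n}
\end{bmatrix}
= V\begin{bmatrix}
u_{n,n} & \cdots & u_{n,2} & u_{n,1} \\
\vdots & \ddots & \vdots & \vdots \\
u_{2,n} & \cdots & u_{2,2} & u_{2,1} \\
u_{1,n} & \cdots & u_{1,2} & u_{1,1}
\end{bmatrix}V
= V^{-1}\begin{bmatrix}
u_{n,n} & \cdots & u_{n,2} & u_{n,1} \\
\vdots & \ddots & \vdots & \vdots \\
u_{2,n} & \cdots & u_{2,2} & u_{2,1} \\
u_{1,n} & \cdots & u_{1,2} & u_{1,1}
\end{bmatrix}V$$

where

$$V = \begin{bmatrix} 0 & \cdots & 0 & 1 \\ \vdots & & 1 & 0 \\ 0 & \cdot^{\,\cdot^{\,\cdot}} & & \vdots \\ 1 & 0 & \cdots & 0 \end{bmatrix}$$

represents a matrix with zero entries except $v_{i,n+1-i} = 1$ for $1 \le i \le n$. We note that $V^{-1} = V^T = V$. Reversing the order of both the rows and the columns of a Jordan block $J_i$ moves its 1's from above the diagonal to below it, which gives $J_i^T$.

So, we can use $M_i = V$ of the proper size to obtain

$$J_i^T = M_i^{-1}J_iM_i.$$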

-

6.6 Problem discussions 6-105

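Define

$$M = \begin{bmatrix} M_1 & 0 & \cdots & 0 \\ 0 & M_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & M_s \end{bmatrix}$$

We have

$$J^T = MJM^{-1},$$

where

$$J = \begin{bmatrix} J_1 & 0 & \cdots & 0 \\ 0 & J_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & J_s \end{bmatrix}$$

and $s$ is the number of Jordan blocks (one per independent eigenvector), and $J_i$ is of the form

$$J_i = \begin{bmatrix}
\lambda_i & 1 & 0 & \cdots & 0 \\
0 & \lambda_i & 1 & \cdots & 0 \\
0 & 0 & \lambda_i & \ddots & \vdots \\
\vdots & \vdots & \vdots & \ddots & 1 \\
0 & 0 & 0 & \cdots & \lambda_i
\end{bmatrix}$$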

-

6.6 Problem discussions 6-106

Finally, we know

A is similar to J ;

AT is similar to JT;

JT is similar to J .

So, AT is similar to A.

(Problem 19, Section 6.6) If $A$ is 6 by 4 and $B$ is 4 by 6, $AB$ and $BA$ have different sizes. But with blocks,

$$M^{-1}FM = \begin{bmatrix} I & -A \\ 0 & I \end{bmatrix}\begin{bmatrix} AB & 0 \\ B & 0 \end{bmatrix}\begin{bmatrix} I & A \\ 0 & I \end{bmatrix} = \begin{bmatrix} 0 & 0 \\ B & BA \end{bmatrix} = G.$$

(a) What sizes are the four blocks (the same four sizes in each matrix)?

(b) This equation is $M^{-1}FM = G$, so $F$ and $G$ have the same eigenvalues. $F$ has the 6 eigenvalues of $AB$ plus 4 zeros; $G$ has the 4 eigenvalues of $BA$ plus 6 zeros. $AB$ has the same eigenvalues as $BA$ plus ____ zeros.

-

6.6 Problem discussions 6-107

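Thinking over Problem 19: $A_{m\times n}B_{n\times m}$ and $B_{n\times m}A_{m\times n}$ have the same eigenvalues except for additional $(m - n)$ zeros.

Solution: The example shows the usefulness of similarity.

$$\begin{bmatrix} I_{m\times m} & -A_{m\times n} \\ 0_{n\times m} & I_{n\times n} \end{bmatrix}\begin{bmatrix} A_{m\times n}B_{n\times m} & 0_{m\times n} \\ B_{n\times m} & 0_{n\times n} \end{bmatrix}\begin{bmatrix} I_{m\times m} & A_{m\times n} \\ 0_{n\times m} & I_{n\times n} \end{bmatrix} = \begin{bmatrix} 0_{m\times m} & 0_{m\times n} \\ B_{n\times m} & B_{n\times m}A_{m\times n} \end{bmatrix}$$

Hence, $\begin{bmatrix} A_{m\times n}B_{n\times m} & 0_{m\times n} \\ B_{n\times m} & 0_{n\times n} \end{bmatrix}$ and $\begin{bmatrix} 0_{m\times m} & 0_{m\times n} \\ B_{n\times m} & B_{n\times m}A_{m\times n} \end{bmatrix}$ are similar and have the same eigenvalues.

From Problem 26 in Section 6.1 (see Slide 6-25), the desired claim (stated at the top of this slide) is proved.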

-

6.6 Problem discussions 6-108

(Problem 21, Section 6.6) If $J$ is the 5 by 5 Jordan block with $\lambda = 0$, find $J^2$ and count its eigenvectors (are these the eigenvectors?) and find its Jordan form (there will be two blocks).

Problem 21: Find the Jordan form of $A = J^2$, where

$$J = \begin{bmatrix} 0 & 1 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 0 & 1 \\ 0 & 0 & 0 & 0 & 0 \end{bmatrix}.$$

Solution.

$$A = J^2 = \begin{bmatrix} 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 0 & 1 \\ 0 & 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 \end{bmatrix},$$

and the eigenvalues of $A$ are $0, 0, 0, 0, 0$.

-

6.6 Problem discussions 6-109

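$$Av_1 = A\begin{bmatrix} v_{1,1} \\ v_{2,1} \\ v_{3,1} \\ v_{4,1} \\ v_{5,1} \end{bmatrix} = \begin{bmatrix} v_{3,1} \\ v_{4,1} \\ v_{5,1} \\ 0 \\ 0 \end{bmatrix} = 0
\;\Rightarrow\; v_{3,1} = v_{4,1} = v_{5,1} = 0
\;\Rightarrow\; v_1 = \begin{bmatrix} v_{1,1} \\ v_{2,1} \\ 0 \\ 0 \\ 0 \end{bmatrix}.$$

$$Av_2 = A\begin{bmatrix} v_{1,2} \\ v_{2,2} \\ v_{3,2} \\ v_{4,2} \\ v_{5,2} \end{bmatrix} = \begin{bmatrix} v_{3,2} \\ v_{4,2} \\ v_{5,2} \\ 0 \\ 0 \end{bmatrix} = v_1
\;\Rightarrow\; \begin{cases} v_{3,2} = v_{1,1} \\ v_{4,2} = v_{2,1} \\ v_{5,2} = 0 \end{cases}
\;\Rightarrow\; v_2 = \begin{bmatrix} v_{1,2} \\ v_{2,2} \\ v_{1,1} \\ v_{2,1} \\ 0 \end{bmatrix}$$

$$Av_3 = A\begin{bmatrix} v_{1,3} \\ v_{2,3} \\ v_{3,3} \\ v_{4,3} \\ v_{5,3} \end{bmatrix} = \begin{bmatrix} v_{3,3} \\ v_{4,3} \\ v_{5,3} \\ 0 \\ 0 \end{bmatrix} = v_2
\;\Rightarrow\; \begin{cases} v_{3,3} = v_{1,2} \\ v_{4,3} = v_{2,2} \\ v_{5,3} = v_{1,1} \\ v_{2,1} = 0 \end{cases}
\;\Rightarrow\; v_3 = \begin{bmatrix} v_{1,3} \\ v_{2,3} \\ v_{1,2} \\ v_{2,2} \\ v_{1,1} \end{bmatrix}$$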

-

6.6 Problem discussions 6-110

To summarize,

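$$v_1 = \begin{bmatrix} v_{1,1} \\ v_{2,1} \\ 0 \\ 0 \\ 0 \end{bmatrix}
\;\Rightarrow\; v_2 = \begin{bmatrix} v_{1,2} \\ v_{2,2} \\ v_{1,1} \\ v_{2,1} \\ 0 \end{bmatrix}
\;\Rightarrow\; \text{if } v_{2,1} = 0, \text{ then } v_3 = \begin{bmatrix} v_{1,3} \\ v_{2,3} \\ v_{1,2} \\ v_{2,2} \\ v_{1,1} \end{bmatrix}$$

First chain (with $v_{2,1} = 0$):

$$v_1^{(1)} = \begin{bmatrix} 1 \\ 0 \\ 0 \\ 0 \\ 0 \end{bmatrix}
\;\Rightarrow\; v_2^{(1)} = \begin{bmatrix} v_{1,2}^{(1)} \\ v_{2,2}^{(1)} \\ 1 \\ 0 \\ 0 \end{bmatrix}
\;\Rightarrow\; v_3^{(1)} = \begin{bmatrix} v_{1,3}^{(1)} \\ v_{2,3}^{(1)} \\ v_{1,2}^{(1)} \\ v_{2,2}^{(1)} \\ 1 \end{bmatrix}$$

Second chain:

$$v_1^{(2)} = \begin{bmatrix} 0 \\ 1 \\ 0 \\ 0 \\ 0 \end{bmatrix}
\;\Rightarrow\; v_2^{(2)} = \begin{bmatrix} v_{1,2}^{(2)} \\ v_{2,2}^{(2)} \\ 0 \\ 1 \\ 0 \end{bmatrix}$$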

-

6.6 Problem discussions 6-111

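Since we wish to choose each of $v_2^{(1)}$ and $v_2^{(2)}$ to be orthogonal to both $v_1^{(1)}$ and $v_1^{(2)}$,

$$v_{1,2}^{(1)} = v_{2,2}^{(1)} = v_{1,2}^{(2)} = v_{2,2}^{(2)} = 0,$$

i.e.,

$$v_1^{(1)} = \begin{bmatrix} 1 \\ 0 \\ 0 \\ 0 \\ 0 \end{bmatrix}
\;\Rightarrow\; v_2^{(1)} = \begin{bmatrix} 0 \\ 0 \\ 1 \\ 0 \\ 0 \end{bmatrix}
\;\Rightarrow\; v_3^{(1)} = \begin{bmatrix} v_{1,3}^{(1)} \\ v_{2,3}^{(1)} \\ 0 \\ 0 \\ 1 \end{bmatrix}
\qquad
v_1^{(2)} = \begin{bmatrix} 0 \\ 1 \\ 0 \\ 0 \\ 0 \end{bmatrix}
\;\Rightarrow\; v_2^{(2)} = \begin{bmatrix} 0 \\ 0 \\ 0 \\ 1 \\ 0 \end{bmatrix}$$

Since we wish to choose $v_3^{(1)}$ to be orthogonal to all of $v_1^{(1)}$, $v_1^{(2)}$, $v_2^{(1)}$ and $v_2^{(2)}$,

$$v_{1,3}^{(1)} = v_{2,3}^{(1)} = 0.$$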

-

6.6 Problem discussions 6-112

As a result,

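$$A\begin{bmatrix} v_1^{(1)} & v_2^{(1)} & v_3^{(1)} & v_1^{(2)} & v_2^{(2)} \end{bmatrix}
= \begin{bmatrix} v_1^{(1)} & v_2^{(1)} & v_3^{(1)} & v_1^{(2)} & v_2^{(2)} \end{bmatrix}
\begin{bmatrix} 0 & 1 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 & 1 \\ 0 & 0 & 0 & 0 & 0 \end{bmatrix}$$

Are all of $v_1^{(1)}, v_2^{(1)}, v_3^{(1)}, v_1^{(2)}$ and $v_2^{(2)}$ eigenvectors of $A$ (satisfying $Av = \lambda v$)?

Hint: $A$ does not have 5 eigenvectors. More specifically, $A$ only has 2 eigenvectors. Which two? Think of it.

Hence, the problem statement may not be accurate, as I mark with "(are these the eigenvectors?)".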

-

6.7 Singular value decomposition (SVD) 6-113

Now we can represent every square matrix in the Jordan form: $A = MJM^{-1}$.

What if the matrix $A_{m\times n}$ is not a square one?

Problem: $Av = \lambda v$ is not possible! Specifically, $v$ cannot have two different dimensionalities.

$A_{m\times n}v_{n\times1} = \lambda v_{n\times1}$ is infeasible if $n \neq m$. So, we can only have

$$A_{m\times n}v_{n\times1} = \sigma u_{m\times1}.$$

-

6.7 Singular value decomposition (SVD) 6-114

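If we can find enough orthogonal $u$ and $v$ such that

$$A_{m\times n}\underbrace{\begin{bmatrix} v_1 & v_2 & \cdots & v_n \end{bmatrix}_{n\times n}}_{V}
= \underbrace{\begin{bmatrix} u_1 & u_2 & \cdots & u_m \end{bmatrix}_{m\times m}}_{U}
\underbrace{\begin{bmatrix}
\sigma_1 & \cdots & 0 & 0 & \cdots & 0 \\
\vdots & \ddots & \vdots & \vdots & \ddots & \vdots \\
0 & \cdots & \sigma_r & 0 & \cdots & 0 \\
0 & \cdots & 0 & 0 & \cdots & 0 \\
\vdots & \ddots & \vdots & \vdots & \ddots & \vdots \\
0 & \cdots & 0 & 0 & \cdots & 0
\end{bmatrix}_{m\times n}}_{\Sigma}$$

where $r$ is the rank of $A$, then we can perform the so-called singular value decomposition (SVD)

$$A = U\Sigma V^{-1}.$$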

Note again that the required enough number of orthogonal u and v may be

impossible when A has repeated eigenvalues.

-

6.7 Singular value decomposition (SVD) 6-115

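If $V$ is chosen to be an orthogonal matrix satisfying $V^{-1} = V^T$, then we have the so-called reduced SVD

$$A = U\Sigma V^T = \sum_{i=1}^{r}\sigma_iu_iv_i^T
= \begin{bmatrix} u_1 & \cdots & u_r \end{bmatrix}_{m\times r}
\begin{bmatrix} \sigma_1 & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & \sigma_r \end{bmatrix}_{r\times r}
\begin{bmatrix} v_1^T \\ \vdots \\ v_r^T \end{bmatrix}_{r\times n}$$

Usually, we prefer to choose an orthogonal $V$ (as well as an orthogonal $U$).

In the sequel, we will assume that the $U$ and $V$ found are orthogonal matrices in the first place; later, we will confirm that orthogonal $U$ and $V$ can always be found.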

-

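6.7 How to determine $U$, $V$ and $\{\sigma_i\}$? 6-116

$$\begin{aligned}
A_{m\times n} = U_{m\times m}\Sigma_{m\times n}V^T_{n\times n}
\;\Rightarrow\; A^T_{n\times m}A_{m\times n}
&= \big(U_{m\times m}\Sigma_{m\times n}V^T_{n\times n}\big)^T\big(U_{m\times m}\Sigma_{m\times n}V^T_{n\times n}\big) \\
&= V_{n\times n}\Sigma^T_{n\times m}U^T_{m\times m}U_{m\times m}\Sigma_{m\times n}V^T_{n\times n}
= V_{n\times n}\Sigma^2_{n\times n}V^T_{n\times n}.
\end{aligned}$$

So, $V$ is the (orthogonal) matrix of $n$ eigenvectors of the symmetric $A^TA$.

$$\begin{aligned}
A_{m\times n} = U_{m\times m}\Sigma_{m\times n}V^T_{n\times n}
\;\Rightarrow\; A_{m\times n}A^T_{n\times m}
&= \big(U_{m\times m}\Sigma_{m\times n}V^T_{n\times n}\big)\big(U_{m\times m}\Sigma_{m\times n}V^T_{n\times n}\big)^T \\
&= U_{m\times m}\Sigma_{m\times n}V^T_{n\times n}V_{n\times n}\Sigma^T_{n\times m}U^T_{m\times m}
= U_{m\times m}\Sigma^2_{m\times m}U^T_{m\times m}.
\end{aligned}$$

So, $U$ is the (orthogonal) matrix of $m$ eigenvectors of the symmetric $AA^T$.

Remember that $A^TA$ and $AA^T$ have the same eigenvalues except for additional $(m - n)$ zeros.

Section 6.6, Problem 19 (see Slide 6-107): $A_{m\times n}B_{n\times m}$ and $B_{n\times m}A_{m\times n}$ have the same eigenvalues except for additional $(m - n)$ zeros.

In fact, there are only $r$ non-zero eigenvalues for $A^TA$ and $AA^T$, which satisfy

$$\lambda_i = \sigma_i^2, \quad\text{where } 1 \le i \le r.$$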

-

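6.7 How to determine $U$, $V$ and $\{\sigma_i\}$? 6-117

Example (Problem 21 in Section 6.6): Find the SVD of $A = J^2$, where

$$J = \begin{bmatrix} 0 & 1 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 0 & 1 \\ 0 & 0 & 0 & 0 & 0 \end{bmatrix}.$$

Solution.

$$A^TA = \begin{bmatrix} 0 & 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 0 & 1 \end{bmatrix}
\quad\text{and}\quad
AA^T = \begin{bmatrix} 1 & 0 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 \end{bmatrix}.$$

So

$$V = \begin{bmatrix} 0 & 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 0 & 1 \\ 1 & 0 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 \end{bmatrix}
\quad\text{and}\quad
U = \begin{bmatrix} 1 & 0 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 0 & 1 \end{bmatrix}
\quad\text{for}\quad
\Lambda = \Sigma^2 = \begin{bmatrix} 1 & 0 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 \end{bmatrix}.$$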

-

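6.7 How to determine $U$, $V$ and $\{\sigma_i\}$? 6-118

Hence,

$$
\underbrace{\begin{bmatrix}
0&0&1&0&0\\
0&0&0&1&0\\
0&0&0&0&1\\
0&0&0&0&0\\
0&0&0&0&0
\end{bmatrix}}_{A}
=\underbrace{\begin{bmatrix}
1&0&0&0&0\\
0&1&0&0&0\\
0&0&1&0&0\\
0&0&0&1&0\\
0&0&0&0&1
\end{bmatrix}}_{U}
\underbrace{\begin{bmatrix}
1&0&0&0&0\\
0&1&0&0&0\\
0&0&1&0&0\\
0&0&0&0&0\\
0&0&0&0&0
\end{bmatrix}}_{\Sigma}
\underbrace{\begin{bmatrix}
0&0&1&0&0\\
0&0&0&1&0\\
0&0&0&0&1\\
1&0&0&0&0\\
0&1&0&0&0
\end{bmatrix}}_{V^T}
$$

We may compare this with the Jordan form.

$$
\underbrace{\begin{bmatrix}
0&0&1&0&0\\
0&0&0&1&0\\
0&0&0&0&1\\
0&0&0&0&0\\
0&0&0&0&0
\end{bmatrix}}_{A}
=\underbrace{\begin{bmatrix}
1&0&0&0&0\\
0&0&0&1&0\\
0&1&0&0&0\\
0&0&0&0&1\\
0&0&1&0&0
\end{bmatrix}}_{M}
\underbrace{\begin{bmatrix}
0&1&0&0&0\\
0&0&1&0&0\\
0&0&0&0&0\\
0&0&0&0&1\\
0&0&0&0&0
\end{bmatrix}}_{J}
\underbrace{\begin{bmatrix}
1&0&0&0&0\\
0&0&1&0&0\\
0&0&0&0&1\\
0&1&0&0&0\\
0&0&0&1&0
\end{bmatrix}}_{M^{-1}}
$$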
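Both factorizations of $A = J^2$ in this example can be multiplied out numerically. The sketch below assumes NumPy; the names `J_shift` (the shift matrix that defines $A$) and `J_form` (the Jordan form in $A = MJM^{-1}$) are introduced here only to keep the two uses of the letter $J$ apart.

```python
import numpy as np

J_shift = np.diag(np.ones(4), k=1)     # 5x5 matrix with ones on the superdiagonal
A = J_shift @ J_shift                  # ones at positions (1,3), (2,4), (3,5)

# SVD factors from the example.
U = np.eye(5)
Sigma = np.diag([1.0, 1.0, 1.0, 0.0, 0.0])
V = np.eye(5)[:, [2, 3, 4, 0, 1]]      # columns e3, e4, e5, e1, e2

# Jordan-form factors from the example.
M = np.eye(5)[:, [0, 2, 4, 1, 3]]      # columns e1, e3, e5, e2, e4
J_form = np.zeros((5, 5))
J_form[0, 1] = J_form[1, 2] = J_form[3, 4] = 1.0   # one 3x3 block and one 2x2 block

print(np.allclose(U @ Sigma @ V.T, A))                  # A = U Sigma V^T
print(np.allclose(M @ J_form @ np.linalg.inv(M), A))    # A = M J M^{-1}
```

Here $M$ is a permutation matrix, so $M^{-1} = M^T$; `np.linalg.inv` is used only to mirror the formula on the slide.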

-

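6.7 How to determine $U$, $V$ and $\{\sigma_i\}$? 6-119

Remarks on the previous example

In the above example, we only know

$$
\Lambda=\Sigma^2=\begin{bmatrix}
1&0&0&0&0\\
0&1&0&0&0\\
0&0&1&0&0\\
0&0&0&0&0\\
0&0&0&0&0
\end{bmatrix}.
$$

Hence,

$$
\Sigma=\begin{bmatrix}
\pm 1&0&0&0&0\\
0&\pm 1&0&0&0\\
0&0&\pm 1&0&0\\
0&0&0&0&0\\
0&0&0&0&0
\end{bmatrix}.
$$

However, by $A\mathbf{v}_i = \sigma_i\mathbf{u}_i = (-\sigma_i)(-\mathbf{u}_i)$, we can always choose positive $\sigma_i$ by adjusting the sign of $\mathbf{u}_i$.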
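The sign freedom can be seen on the same $A = J^2$ example. This is a minimal sketch assuming NumPy: it flips the sign of $\sigma_1$ together with the sign of $\mathbf{u}_1$ and confirms that the product is unchanged, which is why the positive choice loses nothing.

```python
import numpy as np

A = np.diag(np.ones(3), k=2)           # 5x5 matrix equal to J^2 from the example

U = np.eye(5)
Sigma = np.diag([1.0, 1.0, 1.0, 0.0, 0.0])
V = np.eye(5)[:, [2, 3, 4, 0, 1]]      # columns e3, e4, e5, e1, e2

# Alternative factorization: negate sigma_1 and u_1 together.
Sigma_alt = Sigma.copy()
Sigma_alt[0, 0] = -1.0
U_alt = U.copy()
U_alt[:, 0] *= -1.0

print(np.allclose(U @ Sigma @ V.T, A))            # positive sigma_1
print(np.allclose(U_alt @ Sigma_alt @ V.T, A))    # negative sigma_1, flipped u_1
```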

-

6.6 SVD 6-120

Important notes:

In terminology, $\sigma_i$, where $1 \le i \le r$, is called a singular value. Hence the name singular value decomposition.

The singular values are always non-zero (even though the eigenvalues of $A$ can be zero).

Example.

$$
A=\begin{bmatrix}
0&0&1&0&0\\
0&0&0&1&0\\
0&0&0&0&1\\
0&0&0&0&0\\
0&0&0&0&0
\end{bmatrix}
\quad\text{but}\quad
\Sigma=\begin{bmatrix}
1&0&0&0&0\\
0&1&0&0&0\\
0&0&1&0&0\\
0&0&0&0&0\\
0&0&0&0&0
\end{bmatrix}.
$$

It is good to know that:

The first $r$ columns of $V$ lie in the row space $R(A)$ and form a basis of $R(A)$.

The last $(n - r)$ columns of $V$ lie in the null space $N(A)$ and form a basis of $N(A)$.

The first $r$ columns of $U$ lie in the column space $C(A)$ and form a basis of $C(A)$.

The last $(m - r)$ columns of $U$ lie in the left null space $N(A^T)$ and form a basis of $N(A^T)$.

How useful the above facts are can be seen from the next example.
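The four subspace facts can be checked numerically. The sketch below assumes NumPy and uses a hypothetical random $4\times 3$ matrix of rank 2: null-space vectors are tested by multiplying with $A$ or $A^T$, and the row-space and column-space bases are tested by checking that projecting $A$ onto them leaves $A$ unchanged.

```python
import numpy as np

rng = np.random.default_rng(2)
A = rng.standard_normal((4, 2)) @ rng.standard_normal((2, 3))   # rank 2
m, n = A.shape

U, s, Vt = np.linalg.svd(A)
V = Vt.T
r = int(np.sum(s > 1e-10))             # numerical rank

row_basis = V[:, :r]                   # first r columns of V
null_basis = V[:, r:]                  # last n - r columns of V
col_basis = U[:, :r]                   # first r columns of U
left_null_basis = U[:, r:]             # last m - r columns of U

print(np.allclose(A @ null_basis, 0.0))                # A v = 0 on N(A)
print(np.allclose(A.T @ left_null_basis, 0.0))         # A^T u = 0 on N(A^T)
print(np.allclose(col_basis @ col_basis.T @ A, A))     # columns of A lie in the span of col_basis
print(np.allclose(A @ row_basis @ row_basis.T, A))     # rows of A lie in the span of row_basis
```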

-

6.6 SVD 6-121

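Example. Find the SVD of $A_{4\times 3} = \mathbf{x}_{4\times 1}\mathbf{y}^T_{1\times 3}$.

Solution.

$A$ is a rank-1 matrix. So, $r = 1$ and

$$
\Sigma=\begin{bmatrix}
\sigma_1&0&0\\
0&0&0\\
0&0&0\\
0&0&0
\end{bmatrix},
\quad\text{where } \sigma_1 \neq 0.
$$

(Actually, $\sigma_1 = \|\mathbf{x}\|\,\|\mathbf{y}\|$, which equals 1 when $\mathbf{x}$ and $\mathbf{y}$ are unit vectors.)

A basis of the row space is $\mathbf{v}_1 = \dfrac{\mathbf{y}}{\|\mathbf{y}\|}$; pick perpendicular $\mathbf{v}_2$ and $\mathbf{v}_3$ that span the null space.

A basis of the column space is $\mathbf{u}_1 = \dfrac{\mathbf{x}}{\|\mathbf{x}\|}$; pick perpendicular $\mathbf{u}_2, \mathbf{u}_3, \mathbf{u}_4$ that span the left null space.

The SVD is then

$$
A_{4\times 3}=
\begin{bmatrix}
\underbrace{\dfrac{\mathbf{x}}{\|\mathbf{x}\|}}_{\text{column space}} &
\underbrace{\mathbf{u}_2\ \ \mathbf{u}_3\ \ \mathbf{u}_4}_{\text{left null space}}
\end{bmatrix}_{4\times 4}
\Sigma_{4\times 3}
\begin{bmatrix}
\dfrac{\mathbf{y}^T}{\|\mathbf{y}\|}\\
\mathbf{v}_2^T\\
\mathbf{v}_3^T
\end{bmatrix}_{3\times 3}
\begin{matrix}
\leftarrow\ \text{row space}\\
\big\}\ \text{null space}
\end{matrix}
$$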
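The construction in this example can be carried out numerically. The sketch below assumes NumPy; the vectors `x` and `y` are hypothetical values picked so the norms are whole numbers, and the helper `complete_basis` is a name introduced here. It uses a QR factorization as one convenient way to pick the perpendicular vectors; any other orthonormal completion would serve equally well.

```python
import numpy as np

x = np.array([1.0, 2.0, 2.0, 4.0])     # hypothetical x, with ||x|| = 5
y = np.array([2.0, 1.0, 2.0])          # hypothetical y, with ||y|| = 3
A = np.outer(x, y)                     # rank-1 matrix x y^T, shape 4x3

def complete_basis(w):
    """Orthogonal matrix whose first column is w / ||w|| (built with QR)."""
    k = len(w)
    Q, _ = np.linalg.qr(np.column_stack([w, np.eye(k)]))
    if Q[:, 0] @ w < 0:                # QR fixes the first column only up to sign
        Q[:, 0] *= -1.0
    return Q

U = complete_basis(x)                  # [x/||x||, u2, u3, u4]
V = complete_basis(y)                  # [y/||y||, v2, v3]

Sigma = np.zeros((4, 3))
Sigma[0, 0] = np.linalg.norm(x) * np.linalg.norm(y)   # sigma_1 = ||x|| ||y||

print(np.allclose(U @ Sigma @ V.T, A))
print(Sigma[0, 0], np.linalg.svd(A, compute_uv=False)[0])
```

Only the first columns of `U` and `V` are determined by $\mathbf{x}$ and $\mathbf{y}$; the remaining columns depend on how the basis is completed, so they generally differ from what `np.linalg.svd` returns.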
