Procedure Cloning and Integration for Converting Parallelism from Coarse to Fine Grain

Won So & Alex Dean
Center for Embedded Systems Research
Department of Electrical and Computer Engineering
NC State University


Dec 27, 2015



2

Overview

• I. Introduction

• II. Integration Methods

• III. Overview of the Experiment

• IV. Experimental Results

• V. Conclusions and Future Work

3

I. Introduction

• Motivation
  – Multimedia applications are pervasive and require a higher level of performance than previous workloads.
  – Digital signal processors are adopting ILP architectures such as VLIW/EPIC.
    • Examples: Philips TriMedia TM100, TI VelociTI architecture, BOPS ManArray, and StarCore SC120.
  – Typical utilization is low, from 1/8 to 1/2.
    • Not enough independent instructions within a limited instruction window.
    • A single instruction stream has limited ILP.
  – Exploit thread-level parallelism (TLP) together with ILP.
    • Find far more distant independent instructions (coarse-grain parallelism), which exist at various levels (e.g. loop level, procedure level).

4

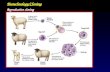

I. Introduction (cont.)

• Software Thread Integration (STI)
  – Software technique which interleaves multiple threads at the machine instruction level.
  – Previous work focused on Hardware-to-Software Migration (HSM) for low-end embedded processors.

• STI for high-end embedded processors
  – Integration can produce better performance.
    • Increases the number of independent instructions.
    • Compiler generates a more efficient instruction schedule.
  – Fuses (jams) multiple procedure calls into one.
  – Converts procedure-level parallelism to ILP.

• Goal: Help programmers make multithreaded programs run faster on a uniprocessor.

[Figure: Issue-slot schedules over run time. Left: original scheduled execution of Thread1 and Thread2, with idle issue slots. Right: scheduled execution of the integrated thread, with the shorter run time marked as the performance enhancement.]

5

I. Introduction (cont.)

• Previous work I: Multithreaded architectures
  – SMT, Multiscalar
  – SM (Speculative Multithreading), DMT, XIMD
  – Welding, Superthreading
  – In contrast, STI achieves multithreading on uniprocessors with no architectural support.

• Previous work II: Software techniques
  – Loop jamming or fusion: STI fuses entire procedures, removing the loop-boundary constraints.
  – Procedure cloning: STI makes procedure clones which do the work of multiple procedures/calls concurrently.

6

II. Integration Methods

1. Identify the candidate procedures

2. Examine parallelism

3. Perform integration

4. Select an execution model to invoke the best clone

7

II-1. Identify the Candidate Procedures

• Profile the application.
• In multimedia applications, the candidate procedures are typically DSP kernels: filter operations (FIR/IIR) and frequency-time transformations (FFT, DCT).
• Example: JPEG (profiled with gprof).

[Figure: Execution time of CJPEG and DJPEG and function breakdown. Y-axis: CPU cycles (0.0E+00 to 1.4E+08). Bars: CJPEG, DJPEG. Categories: FDCT/IDCT, Encode/Decode, Pre/Post Process, Etc.]

8

II-2. Examine Parallelism

• Integration requires concurrent execution.
• If not already identified, find purely independent procedure-level data parallelism:
  1) Each procedure call handles its own data set, input and output.
  2) Those data sets are independent of each other.
  – Abundant because multimedia applications typically process their large data by separating it into blocks (e.g. FDCT/IDCT).
  – More details in [So02].

Goal: Help programmers make multithreaded programs run faster on a uniprocessor

[Figure: Original function calls versus modified function calls when integrating 2 threads. Original: Call 1 to Call 4, each taking its own input (i1 to i4) and producing its own output (o1 to o4). Modified: Integrated Function Call 1 + Call 2 takes i1, i2 and produces o1, o2; Integrated Function Call 3 + Call 4 takes i3, i4 and produces o3, o4.]
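The change from one call per block to one integrated call per pair of blocks can be sketched in C. This is a minimal sketch under stated assumptions, not the authors' code: `kernel` is a hypothetical stand-in for a block-based DSP kernel such as FDCT, and `kernel_sti2`, `process_blocks` and `process_blocks_sti2` are illustrative names that do not come from the slides.

```c
#include <assert.h>
#include <stddef.h>

#define BLOCK_SIZE 64  /* one 8x8 block, as processed by JPEG */

/* Hypothetical stand-in kernel: each call reads only its own input block
 * and writes only its own output block. */
void kernel(const int *in, int *out)
{
    for (size_t i = 0; i < BLOCK_SIZE; i++)
        out[i] = 2 * in[i] + 1;
}

/* Hypothetical 2-thread clone: does the work of two kernel() calls.
 * The two statements in the loop body are independent of each other,
 * which gives the compiler more instructions to schedule together. */
void kernel_sti2(const int *in_a, int *out_a, const int *in_b, int *out_b)
{
    assert(out_a != out_b);  /* the two data sets must be independent */
    for (size_t i = 0; i < BLOCK_SIZE; i++) {
        out_a[i] = 2 * in_a[i] + 1;
        out_b[i] = 2 * in_b[i] + 1;
    }
}

/* Original caller: one call per block. */
void process_blocks(const int *in, int *out, size_t nblocks)
{
    for (size_t b = 0; b < nblocks; b++)
        kernel(in + b * BLOCK_SIZE, out + b * BLOCK_SIZE);
}

/* Modified caller: one integrated call per pair of blocks. */
void process_blocks_sti2(const int *in, int *out, size_t nblocks)
{
    size_t b = 0;
    for (; b + 1 < nblocks; b += 2)
        kernel_sti2(in + b * BLOCK_SIZE, out + b * BLOCK_SIZE,
                    in + (b + 1) * BLOCK_SIZE, out + (b + 1) * BLOCK_SIZE);
    if (b < nblocks)  /* odd block left over: use the discrete version */
        kernel(in + b * BLOCK_SIZE, out + b * BLOCK_SIZE);
}
```

Both callers should produce identical output; the integrated one halves the number of calls and falls back to the discrete kernel when the block count is odd.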

9

II-3. Perform Integration

• Design the control structure of the integrated procedure.
  – Use the Control Dependence Graph (CDG).
  – Take care with data-dependent predicates.
  – Techniques can be applied repeatedly and hierarchically.

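– Case a: Function with a loop (P1 is data-independent)

[Figure, case a: CFG of the function (block 1, loop block 2 with predicate P1, block 3) and control dependence graphs for Thread1 (1, 2, 3 with loop L1 under P1), Thread2 (1', 2', 3' with loop L1 under P1) and the integrated thread (1+1', 2+2', 3+3' with a single loop L1 under P1). Key: code, predicate, loop; Thread1, Thread2, integrated thread.]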
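Case a can be illustrated at the C source level. The sketch below is an assumption-laden example rather than code from the slides: `mean_sq` is a hypothetical function shaped like the CFG (block 1, loop block 2, block 3), and `mean_sq_sti2` is its 2-thread clone. Because the loop predicate depends only on the constant `N`, both threads run the same number of iterations and can share one loop.

```c
#include <assert.h>

#define N 8  /* fixed trip count: the loop predicate P1 is data-independent */

/* Hypothetical original procedure with the shape 1, loop(2), 3. */
void mean_sq(const int *x, long *out)
{
    long acc = 0;                     /* block 1 */
    for (int i = 0; i < N; i++)       /* loop L1, predicate P1: i < N */
        acc += (long)x[i] * x[i];     /* block 2 */
    *out = acc / N;                   /* block 3 */
}

/* Hypothetical integrated clone: 1+1', loop(2+2'), 3+3'.
 * Locals and parameters of the second thread are renamed with a 'p' suffix. */
void mean_sq_sti2(const int *x, long *out, const int *xp, long *outp)
{
    assert(out != outp);              /* outputs must be independent */
    long acc = 0;                     /* block 1  */
    long accp = 0;                    /* block 1' */
    for (int i = 0; i < N; i++) {     /* one shared L1 and P1 */
        acc  += (long)x[i] * x[i];    /* block 2  */
        accp += (long)xp[i] * xp[i];  /* block 2' */
    }
    *out  = acc / N;                  /* block 3  */
    *outp = accp / N;                 /* block 3' */
}
```

The two accumulations in the fused loop body have no dependence on each other, so an ILP-oriented compiler is free to schedule them into the same issue groups.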

10

II-3. Perform Integration (cont.)

– Case b: Loop with a conditional (P1 is data-dependent and P2 is not)

– Case c: Loop with different iterations (P1 is data-dependent)

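[Figure, case b: CFG (blocks 1, 2, 3, 4 with predicates P1 and P2) and control dependence graphs for Thread1, Thread2 and the integrated thread. In the integrated thread, loop L1 under P2 holds the fused blocks 1+1' and 4+4', and predicates P1 and P1' (true/false) select one of the four fused bodies 2+2', 2+3', 3+2', 3+3'.]

[Figure, case c: CFG (blocks 1, 2 with predicate P1) and control dependence graphs for Thread1, Thread2 and the integrated thread. The integrated thread has a fused loop with blocks 1+1' and 2+2' (predicate labeled P1&P2 in the figure), followed by separate loops for the remaining iterations of each thread (1, 2, P1 and 1', 2', P1').]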
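Cases b and c can be sketched in C as follows. These are hypothetical examples written for illustration, not code from the slides: `rectify` has a data-dependent conditional inside a fixed-count loop (case b), and `sum_until_zero` has a data-dependent loop predicate (case c). All function names and the arithmetic inside the blocks are assumptions.

```c
#include <assert.h>

#define N 8

/* Case b, original: P2 (i < N) is data-independent, P1 (t < 0) is not. */
void rectify(const int *x, int *y)
{
    for (int i = 0; i < N; i++) {
        int t = x[i] - 4;            /* block 1 */
        if (t < 0) t = -t;           /* block 2 (P1 true)  */
        else       t = 2 * t;        /* block 3 (P1 false) */
        y[i] = t + 1;                /* block 4 */
    }
}

/* Case b, integrated: the loop is shared; P1 and P1' select one of the
 * four fused bodies 2+2', 2+3', 3+2', 3+3'. */
void rectify_sti2(const int *x, int *y, const int *xp, int *yp)
{
    assert(y != yp);
    for (int i = 0; i < N; i++) {
        int t = x[i] - 4;                             /* 1+1' */
        int tp = xp[i] - 4;
        if (t < 0) {
            if (tp < 0) { t = -t; tp = -tp; }         /* 2+2' */
            else        { t = -t; tp = 2 * tp; }      /* 2+3' */
        } else {
            if (tp < 0) { t = 2 * t; tp = -tp; }      /* 3+2' */
            else        { t = 2 * t; tp = 2 * tp; }   /* 3+3' */
        }
        y[i] = t + 1;                                 /* 4+4' */
        yp[i] = tp + 1;
    }
}

/* Case c, original: the trip count depends on the data (zero-terminated). */
int sum_until_zero(const int *x)
{
    int s = 0, i = 0;
    while (x[i] != 0) {   /* P1 is data-dependent */
        s += x[i];        /* block 1 */
        i++;              /* block 2 */
    }
    return s;
}

/* Case c, integrated: a fused loop runs while both predicates hold,
 * then each thread finishes its remaining iterations on its own. */
void sum_until_zero_sti2(const int *x, int *s_out, const int *xp, int *sp_out)
{
    int s = 0, i = 0, sp = 0, ip = 0;
    while (x[i] != 0 && xp[ip] != 0) {   /* fused: 1+1', 2+2' */
        s += x[i];  sp += xp[ip];
        i++;        ip++;
    }
    while (x[i] != 0)   { s += x[i];    i++;  }   /* rest of thread 1 */
    while (xp[ip] != 0) { sp += xp[ip]; ip++; }   /* rest of thread 2 */
    *s_out = s;
    *sp_out = sp;
}
```

Case b shows why code size grows quickly: every data-dependent predicate doubles the number of fused bodies. In case c, at most one of the two clean-up loops executes any iterations.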

11

II-3. Perform Integration (cont.)

• Two levels of integration: assembly and HLL
  – Assembly: Better control, but requires a scheduler.
  – HLL: Use compilers to schedule instructions.
  – Which is better depends on the capabilities of the tools and compilers.

• Code transform: Fusing (or jamming) two blocks
  – Duplicate and interleave the code.
  – Rename and allocate new local variables and parameters.

• Superset of loop jamming
  – Jams not only the loops but also the rest of the function.
  – Allows a larger variety of threads to be jammed together.

• Two side effects with a negative impact on performance
  – Code size increase: hurts if the code exceeds the I-cache size.
  – Register pressure: hurts if it exceeds the number of physical registers.

12

II-4. Select an Execution Model

• Two approaches: ‘Direct call’ and ‘Smart RTOS’

1) Direct call: Statically bind at compile time
  • Modify the caller to invoke a specific version of the procedure every time (e.g. 2-threaded clone).
  • Simple and appropriate for a simple system.
  • The same approach is used in procedure cloning.
  • If multiple procedures have been cloned, each may have a different optimal number of threads.

2) Call via RTOS: Dynamically bind at run time
  • The RTOS selects a version at run time based on expected performance.
  • Adaptive and appropriate for a more complex system.

[Figure: 1) Direct Call: Application, Int. Proc. Clones, Other Procedures. 2) Call via RTOS: Application, Int. Proc. Clones, Other Procedures, RTOS.]

13

II-4. Select an Execution Model (cont.)

• Smart RTOS model: 3 levels of execution
  – Applications: Issue thread-forking requests for kernel procedures.
  – Thread library: Contains discrete and integrated versions.
  – Smart RTOS: Chooses an efficient version of the thread.

[Figure: Smart RTOS model. Applications TA, TB, TC send fork requests to the scheduler in the Smart RTOS, which keeps a queue for pending requests. The thread library holds discrete threads T1, T2, T3 (low ILP, slow) and integrated threads T1_2, T2_3, T1_3 (high ILP, fast).]
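One way such a scheduler could choose between discrete and integrated versions is sketched below. The slides do not give a selection algorithm, so everything here is an assumption: the `version_t` type, the `library` table, the `pick_next` function, and the policy itself (a fixed preference for integrated versions whenever both of their thread kinds are pending, instead of a model of expected performance).

```c
#include <assert.h>
#include <stddef.h>

enum { T1, T2, T3, NUM_KINDS };

/* Hypothetical thread-library entry: a version that serves one thread
 * kind (discrete) or two thread kinds at once (integrated). */
typedef struct {
    const char *name;
    int kind_a;
    int kind_b;   /* -1 for a discrete version */
} version_t;

/* Integrated versions come first so that they are preferred. */
static const version_t library[] = {
    { "T1_2", T1, T2 }, { "T2_3", T2, T3 }, { "T1_3", T1, T3 },
    { "T1",   T1, -1 }, { "T2",   T2, -1 }, { "T3",   T3, -1 },
};

/* pending[k] is the number of queued fork requests of kind k.
 * Returns the version to run next and removes the requests it serves,
 * or NULL when no request is pending. */
const version_t *pick_next(int pending[NUM_KINDS])
{
    assert(pending != NULL);
    for (size_t v = 0; v < sizeof library / sizeof library[0]; v++) {
        const version_t *ver = &library[v];
        if (pending[ver->kind_a] == 0)
            continue;
        if (ver->kind_b >= 0) {
            if (pending[ver->kind_b] == 0)
                continue;
            pending[ver->kind_b]--;
        }
        pending[ver->kind_a]--;
        return ver;
    }
    return NULL;
}
```

With one pending request each for T1, T2 and T3, this policy would run the integrated T1_2 first and then the discrete T3. A scheduler closer to the slides' description would rank the candidate versions by expected performance rather than by table order.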

14

III. Overview of the Experiment

• Objective
  – Build up the general approach to perform STI.
  – Examine performance benefits and bottlenecks of STI.
  – Did not focus on a Smart RTOS model.

• Sample application: JPEG
  – Standard image compression algorithm.
  – Obtained from MediaBench.
  – Input: 512x512x24-bit lena.ppm
  – 2 applications: compress (CJPEG) and decompress (DJPEG) JPEG

Algorithm: CJPEG
lena.ppm → [Preprocess] Read image, image preparation, block preparation → [FDCT] Forward DCT, quantize → [Encoding] Huffman/differential encoding → [Postprocess] Frame build, write image → encoded image lena.jpg

Algorithm: DJPEG
Encoded image lena.jpg → [Preprocess] Read image, frame decode → [Decoding] Huffman/differential decoding → [IDCT] Dequantize, inverse DCT → [Postprocess] Image build, write image → decoded image lena.ppm

15

III. Overview of the Experiment (cont.)

• Integration method
  – Integrated procedures
    • FDCT (Forward DCT) in CJPEG
    • Encode (Huffman Encoding) in CJPEG
    • IDCT (Inverse DCT) in DJPEG
  – Methods
    • Manually integrate threads at the C source level.
    • Build 2 integrated versions: integrating 2 and 3 threads.
    • Execute them with a ‘direct call’ model.

• Experiment
  – Compile with various compilers: GCC, SGI Pro64, ORC (Open Research Compiler) and Intel C++ Compiler.
  – Run on an EPIC machine: Itanium™ running Linux for IA-64.
  – Evaluate the performance with the PMU (Performance Monitoring Unit) in Itanium™, using the software tool pfmon.

16

III. Overview of the Experiment (cont.)

[Figure: Experimental flow]
• Applications: CJPEG, DJPEG
• Threads: FDCT_NOSTI, FDCT_STI2, FDCT_STI3; Encode_NOSTI, Encode_STI2, Encode_STI3; IDCT_NOSTI, IDCT_STI2, IDCT_STI3
• Compile (compilers and optimizations): GCC-O2; Pro64-O2; ORCC-O2 / -O3; Intel-O2 / -O3 / -O2u0
• Run (platform): Itanium™ processor, Linux for IA-64
• Measure (results): performance / IPC, cycle breakdown

Key: GCC: GNU C Compiler; Pro64: SGI Pro64 Compiler; ORCC: Open Research Compiler; Intel: Intel C++ Compiler; -O2: level 2 optimization; -O3: level 3 optimization; -O2u0: -O2 without loop unrolling

17

IV. Experimental Results

• Measured and plotted data
  – CPU cycles (execution time), speedup by STI, and IPC.
    • Normalized performance: compared with NOSTI/GCC-O2.
    • Speedup by STI: compared with NOSTI compiled with each compiler.
    • IPC = number of instructions retired / CPU cycles.
  – Cycle and speedup breakdown.
    • Cycle breakdown
      – 2 categories of cycle: inherent execution and stall
      – 7 sources of stall: instruction access, data access, RSE, dependencies, issue limit, branch resteer, taken branches
    • Speedup breakdown: sources of speedup and slowdown
  – Code size
    • Code size of the procedure
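The plotted metrics can be written as small C helpers. Only the IPC definition is stated on the slide. The other two formulas are assumptions: normalized performance is taken as the baseline cycle count (NOSTI compiled with GCC-O2) divided by the measured cycle count, and speedup is taken as the fraction of NOSTI cycles saved, a form chosen here because it splits additively across cycle categories. The function names are illustrative.

```c
#include <assert.h>

/* IPC as defined on the slide: instructions retired / CPU cycles. */
double ipc(double instructions_retired, double cpu_cycles)
{
    assert(cpu_cycles > 0.0);
    return instructions_retired / cpu_cycles;
}

/* ASSUMED formula: performance relative to the NOSTI/GCC-O2 baseline,
 * so values above 1.0 mean fewer cycles than the baseline. */
double normalized_performance(double baseline_cycles, double cycles)
{
    assert(cycles > 0.0);
    return baseline_cycles / cycles;
}

/* ASSUMED formula: percentage of NOSTI cycles saved by the STI version
 * built with the same compiler. Negative values mean a slowdown. */
double speedup_percent(double cycles_nosti, double cycles_sti)
{
    assert(cycles_nosti > 0.0);
    return 100.0 * (cycles_nosti - cycles_sti) / cycles_nosti;
}
```

Under these assumed definitions, an STI version that needs 150 cycles where NOSTI needs 200 has a 25% speedup, and one that needs three times as many cycles as NOSTI has a speedup of -200%.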

18

IV. Experimental Results – FDCT in CJPEG

[Figure: Four charts for FDCT in CJPEG, each over GCC-O2, Pro64-O2, ORCC-O2, ORCC-O3, Intel-O2, Intel-O3, Intel-O2-u0 with series NOSTI, STI2, STI3: code size (bytes, with the 16K I-cache marked), speedup by STI (%), IPC variations (instructions/cycle), and normalized performance.]

• STI speeds up the best compiler (Intel-O2-u0) by 17%.
• Sweet spot varies between one, two and three threads.
• Code expansion for the function is 75% to 255%.
• IPC does NOT correlate well with performance.

19

IV. Cycle Breakdown – FDCT in CJPEG

• I-cache misses are crucial with the Intel compiler (large code size caused by loop unrolling).
• I-cache misses are reduced significantly after disabling loop unrolling.
• Sources of speedup: Inh.Exe, DataAcc, Dep, IssueLim
• Sources of slowdown: InstAcc, BrRes

[Figure: CPU cycle breakdown for FDCT in CJPEG. Bars: NOSTI, STI2, STI3 for each of GCC-O2, Pro64-O2, ORCC-O2, ORCC-O3, Intel-O2, Intel-O3, Intel-O2-u0. Y-axis: cycles. Categories: Inh.Exe, Inst.Acc, DataAcc, RSE, Dep., IssueLim., Br.Res., TakenBr.]

[Figure: Speedup breakdown for FDCT in CJPEG. X-axis: cycle category (Inh.Exe, Inst.Acc, DataAcc, RSE, Dep., IssueLim., Br.Res., TakenBr). Y-axis: % speedup. Series: STI2 and STI3 for each of GCC-O2, Pro64-O2, ORCC-O2, ORCC-O3, Intel-O2, Intel-O3, Intel-O2-u0.]

20

IV. Experimental Results – EOB in CJPEG

[Figure: Two charts for EOB in CJPEG over compilers GCC-O2, Pro64-O2, ORCC-O2, ORCC-O3, Intel-O2, Intel-O3 with series NOSTI, STI2, STI3: normalized performance and speedup by STI (%).]

• NOSTI: best with Intel-O3.
• Speedup by STI: 0.69% to 17.38%.
• 13.61% speedup over the best compiler.

21

IV. Cycle Breakdown – EOB in CJPEG

• I-cache misses are not crucial, though they tend to increase after integration.
• Sources of speedup: Inh.Exe, DataAcc, IssueLim
• Sources of slowdown: InstAcc, Dep

[Figure: CPU cycle breakdown for EOB in CJPEG. Bars: NOSTI, STI2, STI3 for each of GCC-O2, Pro64-O2, ORCC-O2, ORCC-O3, Intel-O2, Intel-O3. Categories: Inh.Exe, Inst.Acc, DataAcc, RSE, Dep., IssueLim., Br.Res., TakenBr.]

[Figure: Speedup breakdown for EOB in CJPEG. X-axis: cycle category (Inh.Exe, Inst.Acc, DataAcc, RSE, Dep., IssueLim., Br.Res., TakenBr). Y-axis: % speedup. Series: STI2 and STI3 for each of GCC-O2, Pro64-O2, ORCC-O2, ORCC-O3, Intel-O2, Intel-O3.]

22

IV. Experimental Results – IDCT in DJPEG

[Figure: Two charts for IDCT in DJPEG over compilers GCC-O2, Pro64-O2, ORCC-O2, ORCC-O3, Intel-O2, Intel-O3, Intel-O2-u0 with series NOSTI, STI2, STI3: speedup by STI (%, axis from -200% to 100%) and normalized performance.]

• Wide performance variation for code from different compilers.
• Wide variation in STI impact too…

23

IV. Cycle Breakdown – IDCT in DJPEG

• I-cache misses are crucial with both ORCC and Intel.
• I-cache misses are reduced significantly after disabling loop unrolling.

[Figure: CPU cycle breakdown for IDCT in DJPEG. Bars: NOSTI, STI2, STI3 for each of GCC-O2, Pro64-O2, ORCC-O2, ORCC-O3, Intel-O2, Intel-O3, Intel-O2-u0. Y-axis: cycles. Categories: Inh.Exe, Inst.Acc, DataAcc, RSE, Dep., IssueLim., Br.Res., TakenBr.]

[Figure: Speedup breakdown for IDCT in DJPEG. X-axis: cycle category (Inh.Exe, Inst.Acc, DataAcc, RSE, Dep., IssueLim., Br.Res., TakenBr). Y-axis: % speedup. Series: GCC-O2 STI2, GCC-O2 STI3, Pro64-O2 STI2, Pro64-O2 STI3, ORCC-O2 STI2, ORCC-O2 STI3, ORCC-O3 STI2, ORCC-O3 STI3, Intel-O2 STI2, Intel-O3 STI2, Intel-O2-u0 STI2, Intel-O2-u0 STI3.]

26

IV. Experimental Results (cont.)

• Speedup by STI
  – Procedure speedup up to 18%.
  – Application speedup up to 11%.
  – STI does not always improve the performance.
  – Limited Itanium I-cache is a major bottleneck.

• Compiler variations
  – ‘Good’ compilers (compilers other than GCC) have many optimization features (e.g. speculation, predication).
  – They generate more instructions than GCC.
  – Their absolute performance and speedup by STI are bigger.
  – But they are more susceptible to the code size limitation.
  – Optimizations like loop unrolling must be applied carefully.

27

V. Conclusions and Future Work

• Summary
  – Developed the STI technique for converting abundant TLP to ILP on VLIW/EPIC architectures.
  – Introduced static and dynamic execution models.
  – Demonstrated the potential for significant performance improvement by STI.
  – Relevant to high-end embedded processors with ILP support running multimedia applications.

• Future Work
  – Extend the proposed methodology to various threads.
  – Examine the performance with other realistic workloads.
  – Develop a tool to automate the integration process at the appropriate level.
  – Build a detailed model and algorithm for a dynamic approach.

www.cesr.ncsu.edu/agdean
