
TERENA Networking Conference, Lyngby, 21-24 May 2007, R. Hughes-Jones Manchester

The Performance of High Throughput Data Flows for e-VLBI in Europe

Multiple vlbi_udp Flows, Constant Bit-Rate over TCP & Multi-Gigabit over GÉANT2

Richard Hughes-Jones, The University of Manchester

www.hep.man.ac.uk/~rich/ then “Talks”


What is VLBI?

[Figure: the VLBI signal wave front reaches each telescope; the data wave front is sent over the network to the Correlator]

Resolution: improves with the baseline

Sensitivity (rms noise): $\sigma \propto 1/\sqrt{B\tau}$

Bandwidth B is as important as time τ: can use as many Gigabits as we can get!
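Why bandwidth matters as much as observing time can be checked numerically. A minimal sketch, assuming the standard radiometer form of the sensitivity relation, rms noise ∝ 1/√(Bτ), with all constant factors dropped; the function name and the reference observation are hypothetical.

```python
import math

def relative_noise(bandwidth_hz: float, integration_s: float) -> float:
    """Relative rms noise of an observation: 1/sqrt(B * tau), constant factors dropped."""
    return 1.0 / math.sqrt(bandwidth_hz * integration_s)

# Hypothetical reference observation: 128 MHz of bandwidth integrated for one hour.
ref = relative_noise(128e6, 3600.0)

# Doubling the bandwidth lowers the noise by exactly the same factor as doubling the time.
more_bandwidth = relative_noise(256e6, 3600.0) / ref
more_time = relative_noise(128e6, 7200.0) / ref
print(f"{more_bandwidth:.4f} {more_time:.4f}")  # 0.7071 0.7071
```

Because only the product Bτ enters, extra Gigabits from each telescope buy the same sensitivity gain as extra hours on the sky.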


[Figure: European e-VLBI Test Topology. Sites: Onsala, Sweden; Chalmers University of Technology, Gothenburg; Jodrell Bank, UK; Dwingeloo, Netherlands; Medicina, Italy; Torun, Poland; Metsähovi, Finland. Links shown: dedicated DWDM link, Gbit links, 2 × 1 Gbit links]


iGrid2002 monolithic code Convert to use pthreads

control Data input Data output

Work done on vlbi_recv: Output thread polled for data in the ring buffer – burned CPU Input thread signals output thread when there is work to do – else wait on

semaphore – had packet loss at high rate, variable throughput Output thread uses sched_yield() when no work to do

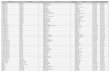

Multi-flow Network performance – set up in Dec06 3 Sites to JIVE: Manc UKLight; Manc production; Bologna GEANT PoP Measure: throughput, packet loss, re-ordering, 1-way delay

vlbi_udp: UDP on the WAN
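The input/output thread hand-off described above is a classic single-producer, single-consumer ring buffer. A minimal sketch in Python, not the vlbi_recv code (which is C with pthreads); `RingBuffer` and `run` are hypothetical names, and the integers stand in for received UDP packets. Two counting semaphores let the output thread sleep instead of polling. The slides report that this variant still lost packets at high rate and that the final version had the output thread call sched_yield() instead; Python exposes that call as `os.sched_yield()` on Unix.

```python
import threading

class RingBuffer:
    """Fixed-size ring shared by one input (producer) and one output (consumer) thread."""

    def __init__(self, nslots: int):
        self.slots = [None] * nslots
        self.head = 0                            # next slot the input thread writes
        self.tail = 0                            # next slot the output thread reads
        self.free = threading.Semaphore(nslots)  # counts empty slots
        self.filled = threading.Semaphore(0)     # counts slots holding data

    def put(self, item):
        self.free.acquire()                      # wait for an empty slot
        self.slots[self.head] = item
        self.head = (self.head + 1) % len(self.slots)
        self.filled.release()                    # signal: work for the output thread

    def get(self):
        self.filled.acquire()                    # sleep (no CPU burned) until data arrives
        item = self.slots[self.tail]
        self.tail = (self.tail + 1) % len(self.slots)
        self.free.release()
        return item

def run(npackets: int, nslots: int = 8) -> list:
    ring = RingBuffer(nslots)
    received = []

    def input_thread():                          # stands in for the UDP receive loop
        for seq in range(npackets):
            ring.put(seq)
        ring.put(None)                           # end-of-data marker

    def output_thread():                         # stands in for writing the data out
        while (item := ring.get()) is not None:
            received.append(item)

    threads = [threading.Thread(target=input_thread), threading.Thread(target=output_thread)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return received

print(run(100)[:5])   # [0, 1, 2, 3, 4]
```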


vlbi_udp: Some of the Problems

JIVE made Huygens, mark524 (.54) and mark620 (.59) available. Within minutes of Arpad leaving, the Alteon NIC of mark524 lost the data network! OK, used mark623 (.62) instead: faster CPU.

Firewalls needed to allow the vlbi_udp ports. Aarrgg (!!!) Huygens is SUSE Linux.

Routing: well, this ALWAYS needs to be fixed!!!

AMD Opteron did not like sched_getaffinity() / sched_setaffinity(): comment out this bit.

udpmon flows Onsala to JIVE look good; udpmon flows JIVE mark623 to Onsala & Manc UKL don't work:
Firewall down: stops after 77 udpmon loops
Firewall up: udpmon can't communicate with Onsala

CPU load issues on the MarkV systems: they don't seem to be able to keep up with receiving the UDP flow AND emptying the ring buffer.

Torun PC / link lost as the test started.


[Figure vlbi_udp_3flows_6Dec06: wire rate (Mbit/s) and % packet loss vs time during the transfer (s)]

Multiple vlbi_udp Flows

Gig7 to Huygens, UKLight: 15 µs spacing, 816 Mbit/s, sigma <1 Mbit/s, step 1 Mbit/s; zero packet loss, zero re-ordering

Gig8 to mark623, Academic Internet: 20 µs spacing, 612 Mbit/s; 0.6% falling to 0.05% packet loss; 0.02% re-ordering

Bologna to mark620, Academic Internet: 30 µs spacing, 396 Mbit/s; 0.02% packet loss; 0% re-ordering
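Loss and re-ordering figures like these come from sequence numbers carried in each packet. A minimal sketch; the slides do not give the exact definitions vlbi_udp or udpmon use, so this assumes a packet counts as re-ordered when it arrives after one with a higher sequence number, and both percentages are taken relative to packets sent. `loss_and_reordering` is a hypothetical helper.

```python
def loss_and_reordering(n_sent: int, received_seq: list[int]) -> tuple[float, float]:
    """Return (% packets lost, % packets re-ordered) from received sequence numbers."""
    lost = n_sent - len(set(received_seq))
    reordered = 0
    highest = -1
    for seq in received_seq:
        if seq < highest:      # overtaken by a later packet
            reordered += 1
        else:
            highest = seq
    return 100.0 * lost / n_sent, 100.0 * reordered / n_sent

# 10 packets sent; packet 4 is lost and packet 7 is overtaken by packet 8.
print(loss_and_reordering(10, [0, 1, 2, 3, 5, 6, 8, 7, 9]))  # (10.0, 10.0)
```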



The Impact of Multiple vlbi_udp Flows

Gig7 to Huygens, UKLight: 15 µs spacing, 800 Mbit/s
Gig8 to mark623, Academic Internet: 20 µs spacing, 600 Mbit/s
Bologna to mark620, Academic Internet: 30 µs spacing, 400 Mbit/s

[Figure panels: SURFnet access link; SJ5 access link; GARR access link]


e-VLBI: Driven by Science

Microquasar GRS1915+105 (11 kpc) on 21 April 2006 at 5 GHz using 6 EVN telescopes, during a weak flare (11 mJy), just resolved in the jet direction (PA 140 deg). (Rushton et al.)

Microquasar Cygnus X-3 (10 kpc) on 20 April (a) and 18 May 2006 (b). The source was in a semi-quiescent state in (a) and in a flaring state in (b). The core of the source is probably ~20 mas to the N of knot A. (Tudose et al.)

128 Mbit/s from each telescope; 4 TBytes of raw sample data over 12 hours; 2.8 GBytes of correlated data
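The raw data volume follows from the per-telescope rate. A short check, assuming 6 telescopes (the number used for the GRS1915+105 observation) and decimal units (1 TB = 10^12 bytes):

```python
RATE_BPS = 128e6   # 128 Mbit/s from each telescope (from the slide)
HOURS = 12         # observing time (from the slide)
TELESCOPES = 6     # assumption: the 6 EVN telescopes of the GRS1915+105 run

bytes_per_telescope = RATE_BPS * HOURS * 3600 / 8
total_bytes = bytes_per_telescope * TELESCOPES
print(f"{bytes_per_telescope / 1e9:.0f} GB per telescope, {total_bytes / 1e12:.2f} TB total")
```

This gives about 691 GB per telescope and 4.15 TB in total, consistent with the ~4 TBytes quoted, which the correlator reduces to 2.8 GBytes.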


RR001: The First Rapid Response Experiment (Rushton, Spencer)

The experiment was planned as follows:

1. Operate 6 EVN telescopes in real time on 29th Jan 2007

2. Correlate and Analyse results in double quick time

3. Select sources for follow up observations

4. Observe selected sources 1 Feb 2007

The experiment worked: we successfully observed and analysed 16 sources (weak microquasars), ready for the follow-up run, but we found that none of the sources were suitably active at that time: a perverse universe!


Constant Bit-Rate Data over TCP/IP


CBR Test Setup


Moving CBR over TCP

[Figure, two panels: Effect of loss rate on message arrival time. Time (s) vs message number (×10^4) for drop 1 in 5k, 1 in 10k, 1 in 20k, 1 in 40k and no loss; with loss, the data are delayed]

Timely arrival of data

Effect of loss rate on message arrival time:

TCP buffer 1.8 MB (BDP), RTT 27 ms

When there is packet loss, TCP decreases the rate.
TCP buffer 0.9 MB (BDP), RTT 15.2 ms

Can TCP deliver the data on time?


[Figure: message number vs time, showing the expected arrival time at CBR, the actual arrival time, a packet loss causing a delay in the stream, slope = 1/throughput, and resynchronisation]
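The delay-then-resynchronise behaviour can be reproduced with a toy model. A sketch under stated assumptions, not the author's analysis: time advances in whole round trips; the application writes one bandwidth-delay product (rate × RTT) per round; the window is capped by the TCP buffer; a loss halves cwnd; cwnd grows by one MSS per RTT only while data is left waiting. Slow start, retransmission and fast recovery are ignored, and `cbr_over_tcp_delay` is a hypothetical name. With the buffer equal to the BDP the sender can never exceed the CBR rate, so the delay never clears; with a large buffer it can, so the stream resynchronises.

```python
def cbr_over_tcp_delay(rate_bps, rtt_s, buffer_bytes, loss_rounds, n_rounds, mss=1448):
    """Extra delay (s) of the CBR data still queued at the sender after each RTT."""
    per_rtt = int(rate_bps * rtt_s / 8)   # bytes written by the application each RTT
    cwnd = per_rtt                        # start exactly at the bandwidth-delay product
    backlog = 0
    delays = []
    for rnd in range(n_rounds):
        backlog += per_rtt
        sent = min(cwnd, buffer_bytes, backlog)
        backlog -= sent
        if rnd in loss_rounds:
            cwnd = max(cwnd // 2, mss)    # multiplicative decrease on loss
        elif backlog > 0:
            cwnd += mss                   # additive increase while data is waiting
        delays.append(backlog * 8 / rate_bps)
    return delays

# Rate and RTT from the slides (525 Mbit/s, 15.2 ms); one loss at round 10.
bdp = int(525e6 * 0.0152 / 8)
small = cbr_over_tcp_delay(525e6, 0.0152, bdp, {10}, 2000)
large = cbr_over_tcp_delay(525e6, 0.0152, 160_000_000, {10}, 2000)
print(f"buffer = BDP:    delay after 2000 RTTs {small[-1]:.2f} s")
print(f"buffer = 160 MB: peak delay {max(large):.2f} s, final delay {large[-1]:.2f} s")
```

The toy model has a single loss, so its numbers are not expected to match the measured ~2.5 s peak delay with one drop in 1.12 million packets.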


CBR over TCP – Large TCP Buffer

Message size: 1448 Bytes; Data Rate: 525 Mbit/s
Route: Manchester - JIVE, RTT 15.2 ms
TCP buffer 160 MB; Drop 1 in 1.12 million packets

Throughput increases: peak throughput ~734 Mbit/s, min. throughput ~252 Mbit/s

[Figure, four panels vs time (s), 0 to 120 s: CurCwnd (×10^6 bytes); throughput (Mbit/s); PktsRetrans; DupAcksIn]


[Figure: one-way delay (ms) vs message number, messages 2,000,000 to 5,000,000]

Message size: 1448 Bytes; Data Rate: 525 Mbit/s
Route: Manchester - JIVE, RTT 15.2 ms
TCP buffer 160 MB; Drop 1 in 1.12 million packets

OK you can recover BUT: Peak Delay ~2.5s TCP buffer RTT4

CBR over TCP – Message Delay


Multi-gigabit tests over GÉANT

But will 10 Gigabit Ethernet work on a PC?


High-end Server PCs for 10 Gigabit

Boston/Supermicro X7DBE
Two Dual Core Intel Xeon Woodcrest 5130, 2 GHz
Independent 1.33 GHz front-side buses
530 MHz FB-DIMM memory (serial), parallel access to 4 banks
Chipsets: Intel 5000P MCH (PCIe & memory); ESB2 (PCI-X, GE etc.)
PCI: 3 × 8-lane PCIe buses, 3 × 133 MHz PCI-X
2 Gigabit Ethernet, SATA


10 GigE Back2Back: UDP Latency

Motherboard: Supermicro X7DBE; Chipset: Intel 5000P MCH
CPU: 2 Dual Intel Xeon 5130, 2 GHz with 4096k L2 cache
Mem bus: 2 independent 1.33 GHz
PCI-e 8 lane
Linux Kernel 2.6.20-web100_pktd-plus
Myricom NIC 10G-PCIE-8A-R Fibre, myri10ge v1.2.0 + firmware v1.4.10
rx-usecs=0 Coalescence OFF, MSI=1, Checksums ON, tx_boundary=4096
MTU 9000 bytes

Latency 22 µs & very well behaved
Latency slope 0.0028 µs/byte
B2B expect: 0.00268 µs/byte = Mem 0.0004 + PCI-e 0.00054 + 10GigE 0.0008 + PCI-e 0.00054 + Mem 0.0004

[Figure gig6-5_Myri10GE_rxcoal=0: latency (µs) vs message length (bytes), fit y = 0.0028x + 21.937; latency histograms N(t) vs latency (µs) for 64, 3000 and 8900 byte messages (gig6-5)]

Histogram FWHM ~1-2 us
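The expected back-to-back slope is the sum of the per-byte costs of each stage a message crosses. A short worked check using the stage values from the slide (the dictionary labels and `fitted_latency_us` are illustrative names); the 10GigE entry is just 8 bits at 10 Gbit/s, i.e. 0.8 ns per byte.

```python
# Per-byte cost (µs/byte) of each stage crossed back-to-back (values from the slide).
stages = {
    "memory (send)": 0.0004,
    "PCI-e (send)": 0.00054,
    "10GigE wire": 0.0008,      # 8 bits / 10 Gbit/s = 0.8 ns per byte
    "PCI-e (recv)": 0.00054,
    "memory (recv)": 0.0004,
}
expected_slope = sum(stages.values())
print(f"expected slope {expected_slope:.5f} us/byte vs measured 0.0028")

def fitted_latency_us(nbytes: int) -> float:
    """Latency predicted by the straight-line fit on the slide: y = 0.0028x + 21.937."""
    return 0.0028 * nbytes + 21.937

print(f"{fitted_latency_us(64):.1f} us for 64 bytes, {fitted_latency_us(8900):.1f} us for 8900 bytes")
```

The measured slope is within about 5% of the sum of the component costs.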


10 GigE Back2Back: UDP Throughput

Kernel 2.6.20-web100_pktd-plus
Myricom 10G-PCIE-8A-R Fibre
rx-usecs=25 Coalescence ON
MTU 9000 bytes

Max throughput 9.4 Gbit/s
Notice the rate for 8972 byte packets
~0.002% packet loss in 10M packets, in the receiving host

Sending host: 3 CPUs idle; for packet spacing <8 µs, 1 CPU is >90% in kernel mode, inc. ~10% soft int
Receiving host: 3 CPUs idle; for packet spacing <8 µs, 1 CPU is 70-80% in kernel mode, inc. ~15% soft int

[Figure gig6-5_myri10GE, three panels vs spacing between frames (µs), one curve per user data size from 1000 to 8972 bytes: receive wire rate (Mbit/s); % CPU1 in kernel mode, sending host; % CPU1 in kernel mode, receiving host]
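The throughput curves follow from the frame spacing and the line rate. A minimal sketch, assuming standard UDP/IPv4 headers and Ethernet wire overheads (the slides do not say which overheads udpmon's "wire rate" includes); `wire_bytes` and `user_rate_mbps` are hypothetical helpers. It returns the user-data rate for a given spacing, capped by the 10 Gbit/s line rate.

```python
# Assumed per-frame overheads (standard values, not from the slides).
UDP_IP_HDR = 8 + 20                  # UDP + IPv4 headers
ETH_WIRE_OVERHEAD = 14 + 4 + 8 + 12  # Ethernet header, FCS, preamble, inter-frame gap

def wire_bytes(user_bytes: int) -> int:
    """Bytes occupied on the wire by one UDP packet carrying user_bytes of data."""
    return user_bytes + UDP_IP_HDR + ETH_WIRE_OVERHEAD

def user_rate_mbps(user_bytes: int, spacing_us: float, line_rate_gbps: float = 10.0) -> float:
    """User-data rate (Mbit/s) when frames are sent every spacing_us, limited by the line rate."""
    min_spacing_us = wire_bytes(user_bytes) * 8 / (line_rate_gbps * 1e3)  # Gbit/s -> bits/µs
    return user_bytes * 8 / max(spacing_us, min_spacing_us)

print(wire_bytes(8972))                 # 9038: a 9000-byte IP packet plus Ethernet overheads
print(round(user_rate_mbps(8972, 0)))   # line-rate limit for 8972-byte user data
print(round(user_rate_mbps(8972, 10)))  # frames sent every 10 µs
```

Under these assumptions 8972-byte packets reach the 10 Gbit/s line rate at a spacing of about 7.2 µs, close to the 8 µs below which the slide reports the highest CPU load.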


10 GigE UDP Throughput vs packet size

Motherboard: Supermicro X7DBE
Linux Kernel 2.6.20-web100_pktd-plus
Myricom NIC 10G-PCIE-8A-R Fibre, myri10ge v1.2.0 + firmware v1.4.10
rx-usecs=0 Coalescence ON, MSI=1, Checksums ON, tx_boundary=4096

Steps at 4060 and 8160 bytes, within 36 bytes of 2^n boundaries

Model data transfer time as t = C + m × Bytes, where C includes the time to set up transfers
Fit reasonable: C = 1.67 µs, m = 5.4e-4 µs/byte
Steps consistent with C increasing by 0.6 µs

The Myricom driver segments the transfers, limiting the DMA to 4096 bytes – PCI-e chipset dependent!
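The step model can be written out directly. A sketch using the fitted C, m and 0.6 µs step from the slide; the 36-byte overhead added to the user data before segmentation is a hypothetical value chosen so the first step lands after 4060 bytes, and `transfer_time_us` is an illustrative name.

```python
import math

C_US = 1.67             # set-up time per transfer (fit from the slide)
M_US_PER_BYTE = 5.4e-4  # per-byte transfer time (fit from the slide)
STEP_US = 0.6           # extra set-up time per additional DMA segment (from the slide)
DMA_MAX = 4096          # driver limits each DMA to 4096 bytes (from the slide)
OVERHEAD = 36           # hypothetical bytes added to user data, placing the first step at 4060

def transfer_time_us(user_bytes: int) -> float:
    """t = C + m * bytes, with C growing by STEP_US for each extra 4096-byte DMA segment."""
    segments = math.ceil((user_bytes + OVERHEAD) / DMA_MAX)
    return C_US + STEP_US * (segments - 1) + M_US_PER_BYTE * user_bytes

for n in (4060, 4061, 8000):
    print(n, round(transfer_time_us(n), 3))
```

In this model each extra DMA segment costs as much time as moving roughly 0.6 / 5.4e-4 ≈ 1100 more bytes, which is why the throughput curve dips at the boundaries.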

[Figure gig6-5_myri_udpscan: receive wire rate (Mbit/s) vs size of user data in packet (bytes)]


10 GigE X7DBE to X7DBE: TCP iperf

No packet loss; MTU 9000; TCP buffer 256k; BDP = ~330k

Cwnd: slow start, then slow growth. Limited by sender!

Duplicate ACKs: one event of 3 DupACKs

Packets re-transmitted

Iperf TCP throughput 7.77 Gbit/s

Web100 plots of TCP parameters
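When the TCP buffer is smaller than the bandwidth-delay product, at most one window can be delivered per RTT, which bounds the throughput. A short worked check with the slide's numbers; `window_limited_gbps` is a hypothetical helper, and the result is only an upper bound, not a diagnosis of this run.

```python
def window_limited_gbps(window_bytes: float, bdp_bytes: float, line_rate_gbps: float) -> float:
    """Upper bound on TCP throughput: one window per RTT, where RTT = BDP / line rate."""
    return line_rate_gbps * min(1.0, window_bytes / bdp_bytes)

# Values from the slide: 256k TCP buffer, BDP ~330k, 10 Gigabit Ethernet.
print(f"{window_limited_gbps(256e3, 330e3, 10.0):.2f} Gbit/s")
```

The bound comes out at about 7.76 Gbit/s, very close to the 7.77 Gbit/s iperf measured. That is consistent with the 256k buffer at the sender being the limit, although the slide does not state this explicitly.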


OK so it works !!!


ESLEA-FABRIC: 4 Gbit flows over GÉANT2

Set up a 4 Gigabit lightpath between GÉANT2 PoPs
Collaboration with DANTE: GÉANT2 Testbed London – Prague – London
PCs in the DANTE London PoP with 10 Gigabit NICs

VLBI Tests:
UDP performance: throughput, jitter, packet loss, 1-way delay, stability
Continuous (days) data flows: VLBI_UDP and udpmon
Multi-Gigabit TCP performance with current kernels
Multi-Gigabit CBR over TCP/IP
Experience for FPGA Ethernet packet systems

DANTE Interests:
Multi-Gigabit TCP performance
The effect of (Alcatel 1678 MCC 10GE port) buffer size on bursty TCP using BW limited lightpaths


The GÉANT2 Testbed

10 Gigabit SDH backbone
Alcatel 1678 MCCs
GE and 10GE client interfaces
Node locations: London, Amsterdam, Paris, Prague, Frankfurt

Can do lightpath routing, so can make paths of different RTT

Locate the PCs in London


Provisioning the lightpath on ALCATEL MCCs

Some jiggery-pokery needed with the NMS to force a “looped back” lightpath London-Prague-London

Manual XCs (using the element manager) possible but hard work: 196 needed, plus other operations!

Instead used the RM to create two parallel VC-4-28v (single-ended) Ethernet private line (EPL) paths, constrained to transit DE

Then manually joined the paths in CZ: only 28 manually created XCs required
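The capacity of the "4 Gigabit" lightpath follows from the 28 concatenated VC-4s. A short worked check, assuming the standard SDH C-4 payload of 149.76 Mbit/s per VC-4 (a value from the SDH standard, not the slides) and ignoring the overhead of mapping Ethernet into SDH:

```python
VC4_PAYLOAD_MBPS = 149.76  # C-4 payload capacity of one VC-4 (SDH standard value)
N_VC4 = 28                 # VC-4-28v: 28 virtually concatenated VC-4s (from the slide)

capacity_mbps = VC4_PAYLOAD_MBPS * N_VC4
print(f"VC-4-{N_VC4}v payload: {capacity_mbps:.1f} Mbit/s")
```

About 4.19 Gbit/s, in line with the 4.199 Gbit/s maximum UDP throughput measured over the path.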


Provisioning the lightpath on ALCATEL MCCs

Paths come up; (transient) alarms clear

Result: provisioned a path of 28 virtually concatenated VC-4s, UK-NL-DE-NL-UK

Optical path ~4150 km; with dispersion compensation ~4900 km
RTT 46.7 ms

YES!!! YES!!!
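The measured RTT can be compared with the fibre propagation delay. A sketch assuming a typical group index of 1.468 for single-mode fibre (not from the slides) and that ~4900 km is the length of one pass round the loop; since both PCs sit in London on a looped-back path, a round trip crosses the loop twice. `fibre_delay_ms` is a hypothetical helper.

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum
N_FIBRE = 1.468           # assumed group index of standard single-mode fibre

def fibre_delay_ms(length_km: float) -> float:
    """One-way propagation delay through length_km of fibre."""
    return length_km * N_FIBRE / C_KM_PER_S * 1e3

one_way = fibre_delay_ms(4900)  # loop length including dispersion compensation
print(f"one pass of the loop: {one_way:.1f} ms, twice: {2 * one_way:.1f} ms (measured RTT 46.7 ms)")
```

Propagation alone accounts for about 24 ms per pass, within a few percent of the measured values.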


Photos at the PoP

[Photos, labelled: 10 GE; test-bed SDH; production SDH; optical transport; production router]


[Figure exp2-1_prag_15May07, two panels vs spacing between frames (µs), one curve per user data size from 1000 to 8972 bytes: % CPU1 in kernel mode, sending host; receive wire rate (Mbit/s)]

4 Gig Flows on GÉANT: UDP Throughput

Kernel 2.6.20-web100_pktd-plus
Myricom 10G-PCIE-8A-R Fibre
rx-usecs=25 Coalescence ON
MTU 9000 bytes

Max throughput 4.199 Gbit/s

Sending host: 3 CPUs idle; for packet spacing <8 µs, 1 CPU is >90% in kernel mode, inc. ~10% soft int
Receiving host: 3 CPUs idle; for packet spacing <8 µs, 1 CPU is ~37% in kernel mode, inc. ~9% soft int

[Figure exp2-1_prag_15May07: % CPU1 in kernel mode, receiving host, vs spacing between frames (µs), user data sizes from 1000 to 8972 bytes]


4 Gig Flows on GÉANT: 1-way delay

Kernel 2.6.20-web100_pktd-plus
Myricom 10G-PCIE-8A-R Fibre
Coalescence OFF

1-way delay stable at 23.435 ms
Peak separation 86 µs, ~40 µs extra delay

[Figure W18 exp1-2_prag_rxcoal0_16May07: 1-way delay (µs, 23430 to 23490) vs packet number; histogram N(t) of the 1-way delay (µs)]

Lab tests: peak separation 86 µs, ~40 µs extra delay

Lightpath adds no unwanted effects

[Figure W20 gig6-g5Cu_myri_MSI_30Mar07 (lab): 1-way delay (µs) vs packet number]


4 Gig Flows on GÉANT: Jitter histograms

Kernel 2.6.20-web100_pktd-plus
Myricom 10G-PCIE-8A-R Fibre
Coalescence OFF

Peak separation ~36 µs
Factor 100 smaller

[Figure exp1-2_rxcoal0_16May07, 8900-byte packets: histograms N(t) (log scale) vs latency (µs) for packet separation 100 µs (w=100) and 300 µs (w=300)]

[Figure gig6-5_Lab_30Mar07, 8900-byte packets: the same histograms measured in the lab, w=100 and w=300]

Lab Tests: Lightpath adds no effects
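The histogram peaks sit at the 100 µs and 300 µs packet separations, which suggests they count the gaps between consecutive arrivals; that reading is an assumption here. A minimal sketch of such a histogram with made-up arrival times (`interarrival_histogram` is a hypothetical name): a single packet delayed by 36 µs turns one regular gap into a long gap and a short gap placed symmetrically about the nominal spacing.

```python
import math
from collections import Counter

def interarrival_histogram(arrival_us, bin_us=1.0):
    """Count the gaps between consecutive packet arrival times, in bins of bin_us µs."""
    gaps = (later - earlier for earlier, later in zip(arrival_us, arrival_us[1:]))
    return Counter(math.floor(gap / bin_us) * bin_us for gap in gaps)

# Hypothetical arrivals at a 100 µs spacing, with the fourth packet delayed by 36 µs.
arrivals = [0, 100, 200, 336, 400, 500]
hist = interarrival_histogram(arrivals)
print(sorted(hist.items()))  # [(64.0, 1), (100.0, 3), (136.0, 1)]
```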


4 Gig Flows on GÉANT: UDP Flow Stability

Kernel 2.6.20-web100_pktd-plus
Myricom 10G-PCIE-8A-R Fibre, Coalescence OFF

MTU 9000 bytes; packet spacing 18 µs
Trials send 10 M packets; ran for 26 hours

Throughput very stable: 3.9795 Gbit/s

Occasional trials have packet loss, ~40 in 10M: investigating

[Figure exp2-1_w18_i500_udpmon_21May: wire rate (Mbit/s, 3979.5 to 3980.5) vs time during the transfer (s, 0 to 100000)]

Our thanks go to all our collaborators. DANTE really provided “Bandwidth on Demand”: a record 6 hours! That included driving to the PoP, installing the PCs and provisioning the light-path.


Any Questions?


Provisioning the lightpath on ALCATEL MCCs

Create a virtual network element for a planned (non-existing) port in Prague: VNE2

Define end points: out port 3 in UK & VNE2 CZ; in port 4 in UK & VNE2 CZ

Add constraint: to go via DE (or OSPF does it)

Set capacity (28 VC-4s)

Alcatel Resource Manager allocates routing of the EXPReS_out VC-4 trails

Repeat for EXPReS_ret

Same time slots used in CZ for the EXPReS_out & EXPReS_ret paths
