Shannon Inequalities in Distributed Storage - Part I

Birenjith Sasidharan and P. Vijay Kumar (joint work with Myna Vajha and Kaushik Senthoor)

Department of Electrical Communication Engineering, Indian Institute of Science, Bangalore

Workshop on Advanced Information Theory Commemorating the 100th Birthday of Claude Shannon

Organized by the IISc-IEEE ComSoc Student Chapter (in association with IEEE Bangalore Section & ECE Department, IISc)

Indian Institute of Science, April 30, 2016


Outline

1 Basic Definitions

2 Sets of Random Variables

3 Entropic Vectors

4 Backup Slides

References

Raymond Yeung, “Facets of Entropy,” http://www.inc.cuhk.edu.hk/EII2013/entropy.pdf

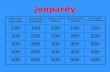


Entropy of a Random Variable

Let $X$ be a discrete random variable taking on values¹ from an alphabet $\mathcal{X}$. Then

$$H(X) := -\sum_{x \in \mathcal{X}} p(x) \log p(x) = -\sum_{x} p(x) \log p(x),$$

where the second form omits $\mathcal{X}$ for simplicity.

Clearly $H(X) \ge 0$, since $0 \le p(x) \le 1$ makes every term $-p(x)\log p(x)$ nonnegative.

¹ All random variables encountered here will take on values from a finite alphabet.
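As a quick numerical illustration of the definition, here is a minimal Python sketch. It assumes a pmf is given as a dictionary from outcomes to probabilities and measures entropy in bits (base-2 logarithm); neither choice is fixed by the slides, and the function name `entropy` is illustrative.

```python
import math


def entropy(pmf):
    """Return H(X) = -sum_x p(x) log2 p(x) in bits.

    `pmf` maps each outcome x to p(x). Terms with p(x) = 0 are skipped,
    which implements the usual convention 0 log 0 = 0.
    """
    return sum(-p * math.log2(p) for p in pmf.values() if p > 0)


print(entropy({"H": 0.5, "T": 0.5}))       # fair coin: 1.0
print(entropy({"a": 1.0}))                 # constant: 0.0
print(entropy({k: 0.25 for k in "abcd"}))  # uniform on 4 symbols: 2.0
```

Each summand is nonnegative, which is exactly why $H(X) \ge 0$.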

Joint and Conditional Entropy of 2 Random Variables

If $X, Y$ are a pair of discrete random variables, we define their joint entropy via

$$H(X,Y) := -\sum_{x,y} p(x,y) \log p(x,y).$$

We define the conditional entropy of $X$ given $Y$ via

$$H(X/Y) := \sum_{y} p(y) \Big\{ -\sum_{x} p(x/y) \log p(x/y) \Big\} = -\sum_{x,y} p(x,y) \log p(x/y).$$

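Clearly

$$H(X,Y) \ge 0, \qquad H(X/Y) \ge 0,$$

the latter because $H(X/Y)$ is an average, with weights $p(y)$, of the entropies of the conditional distributions $p(\cdot/y)$.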

Joint and Conditional Entropy of 2 RV (continued)

Moreover, since $p(x,y) = p(y)\,p(x/y)$,

$$\begin{aligned} H(X,Y) &:= -\sum_{x,y} p(x,y) \log p(x,y) \\ &= -\sum_{x,y} p(x,y) \log p(y) - \sum_{x,y} p(x,y) \log p(x/y) \\ &= H(Y) + H(X/Y). \end{aligned}$$
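The chain rule $H(X,Y) = H(Y) + H(X/Y)$ can be spot-checked numerically. The sketch below is a minimal Python illustration, assuming a joint pmf stored as a dictionary `{(x, y): p(x, y)}` and entropies in bits; the example pmf and the helper names are hypothetical.

```python
import math
from collections import defaultdict


def entropy(probs):
    """Entropy in bits of an iterable of probabilities (0 log 0 = 0)."""
    return sum(-p * math.log2(p) for p in probs if p > 0)


def marginal_y(pxy):
    """p(y) = sum_x p(x, y) for a joint pmf {(x, y): p(x, y)}."""
    py = defaultdict(float)
    for (_, y), p in pxy.items():
        py[y] += p
    return py


def cond_entropy(pxy):
    """H(X/Y) = -sum_{x,y} p(x, y) log2 p(x/y), using p(x/y) = p(x, y) / p(y)."""
    py = marginal_y(pxy)
    return sum(-p * math.log2(p / py[y]) for (_, y), p in pxy.items() if p > 0)


# Hypothetical joint pmf of two correlated binary random variables.
pxy = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.3}

h_xy = entropy(pxy.values())             # H(X, Y)
h_y = entropy(marginal_y(pxy).values())  # H(Y)
h_x_given_y = cond_entropy(pxy)          # H(X/Y)

# Chain rule: H(X, Y) = H(Y) + H(X/Y), up to floating-point rounding.
print(abs(h_xy - (h_y + h_x_given_y)) < 1e-12)  # True
```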

Mutual Information

The mutual information between $X$ and $Y$ is given by

$$\begin{aligned} I(X;Y) &:= H(X) - H(X/Y) \\ &= -\sum_{x,y} p(x,y) \log \frac{p(x)}{p(x/y)} \\ &= -\sum_{x,y} p(x,y) \log \frac{p(x)\,p(y)}{p(x,y)} \\ &= H(X) + H(Y) - H(X,Y). \end{aligned}$$
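To see that the last two expressions agree, here is a minimal Python sketch computing $I(X;Y)$ both from the log-ratio sum and from $H(X) + H(Y) - H(X,Y)$. It assumes a joint pmf stored as `{(x, y): p}` and logarithms in base 2; the pmfs and function names are hypothetical examples.

```python
import math
from collections import defaultdict


def entropy(probs):
    """Entropy in bits of an iterable of probabilities (0 log 0 = 0)."""
    return sum(-p * math.log2(p) for p in probs if p > 0)


def marginals(pxy):
    """Return (p(x), p(y)) from a joint pmf {(x, y): p(x, y)}."""
    px, py = defaultdict(float), defaultdict(float)
    for (x, y), p in pxy.items():
        px[x] += p
        py[y] += p
    return px, py


def mi_direct(pxy):
    """I(X;Y) = -sum_{x,y} p(x, y) log2 [p(x) p(y) / p(x, y)]."""
    px, py = marginals(pxy)
    return sum(-p * math.log2(px[x] * py[y] / p)
               for (x, y), p in pxy.items() if p > 0)


def mi_from_entropies(pxy):
    """I(X;Y) = H(X) + H(Y) - H(X, Y)."""
    px, py = marginals(pxy)
    return entropy(px.values()) + entropy(py.values()) - entropy(pxy.values())


pxy = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.3}  # hypothetical pmf
independent = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}

print(mi_direct(pxy), mi_from_entropies(pxy))  # agree up to rounding
print(mi_direct(independent))                  # 0.0 for independent X, Y
```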

Mutual Information is Non-Negative

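Since the function $-\log(\cdot)$ is convex, Jensen's inequality gives

$$\begin{aligned} I(X;Y) &:= H(X) - H(X/Y) \\ &= \sum_{x,y} p(x,y) \Big\{ -\log \frac{p(x)\,p(y)}{p(x,y)} \Big\} \\ &\ge -\log \Big( \sum_{x,y} p(x,y)\, \frac{p(x)\,p(y)}{p(x,y)} \Big) \\ &= -\log \Big( \sum_{x,y} p(x)\,p(y) \Big) \ge -\log 1 = 0, \end{aligned}$$

where all sums run over pairs $(x,y)$ with $p(x,y) > 0$; the last step uses the fact that this restricted sum of $p(x)\,p(y)$ is at most 1.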
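The Jensen step can be checked numerically. The following minimal Python sketch returns both $I(X;Y)$ and the bound $-\log \sum p(x)p(y)$ (sum over the support), assuming a joint pmf stored as `{(x, y): p}`; the random sampling scheme is an arbitrary choice for testing, and all names are hypothetical.

```python
import math
import random
from collections import defaultdict


def mi_and_jensen_bound(pxy):
    """Return (I(X;Y), -log2 sum_{supp} p(x) p(y)) for a joint pmf {(x, y): p}.

    Sums run only over the support {p(x, y) > 0}. Jensen's inequality for the
    convex function -log gives I(X;Y) >= bound, and bound >= 0 because the
    restricted sum of p(x) p(y) is at most 1.
    """
    px, py = defaultdict(float), defaultdict(float)
    for (x, y), p in pxy.items():
        px[x] += p
        py[y] += p
    support = [(x, y, p) for (x, y), p in pxy.items() if p > 0]
    mi = sum(-p * math.log2(px[x] * py[y] / p) for x, y, p in support)
    bound = -math.log2(sum(px[x] * py[y] for x, y, _ in support))
    return mi, bound


def random_pmf(nx, ny, rng, zero_prob=0.3):
    """Random joint pmf on an nx-by-ny grid, with some cells set to zero.

    This sampling scheme is an arbitrary choice made only for testing.
    """
    w = {(x, y): (0.0 if rng.random() < zero_prob else rng.random())
         for x in range(nx) for y in range(ny)}
    w[0, 0] += 1.0  # guarantee a nonempty support
    total = sum(w.values())
    return {k: v / total for k, v in w.items()}


rng = random.Random(0)
results = [mi_and_jensen_bound(random_pmf(3, 4, rng)) for _ in range(1000)]
# Allow a tiny tolerance for floating-point rounding.
print(all(mi >= bound - 1e-12 and bound >= -1e-12 for mi, bound in results))  # True
```

For $X = Y$ a fair bit, the restricted sum of $p(x)p(y)$ is $1/2$, so the bound equals 1 bit and coincides with $I(X;Y)$; summing over all pairs instead would only give the weaker bound 0.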

Conditional Mutual Information

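The conditional mutual information between $X$ and $Y$, conditioned on $Z$, is given by

$$\begin{aligned} I(X;Y/Z) &:= H(X/Z) - H(X/Y,Z) \\ &= -\sum_{z} p(z) \Big\{ \sum_{x,y} p(x,y/z) \log \frac{p(x/z)}{p(x/y,z)} \Big\} \\ &= -\sum_{z} p(z) \Big\{ \sum_{x,y} p(x,y/z) \log \frac{p(x/z)\,p(y/z)}{p(x,y/z)} \Big\} \\ &= -\sum_{x,y,z} p(x,y,z) \log \frac{p(x/z)\,p(y/z)}{p(x,y/z)}. \end{aligned}$$

Clearly $I(X;Y/Z) \ge 0$: for each $z$, the negated inner sum $-\sum_{x,y} p(x,y/z) \log \frac{p(x/z)\,p(y/z)}{p(x,y/z)}$ is the mutual information between $X$ and $Y$ under the conditional distribution $p(\cdot,\cdot/z)$, hence nonnegative, and these terms are averaged with nonnegative weights $p(z)$.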
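Here is a minimal Python sketch of the last expression, assuming a joint pmf stored as `{(x, y, z): p}` and logarithms in base 2. The two example distributions are hypothetical illustrations: for the XOR construction, conditioning on $Z$ creates one full bit of dependence between otherwise independent $X$ and $Y$.

```python
import math
from collections import defaultdict


def cmi(pxyz):
    """I(X;Y/Z) = -sum_{x,y,z} p(x,y,z) log2 [p(x/z) p(y/z) / p(x,y/z)].

    Writing each conditional pmf as a ratio with p(z), the argument of the
    log simplifies to p(x,z) p(y,z) / (p(z) p(x,y,z)).
    """
    pz, pxz, pyz = defaultdict(float), defaultdict(float), defaultdict(float)
    for (x, y, z), p in pxyz.items():
        pz[z] += p
        pxz[x, z] += p
        pyz[y, z] += p
    return sum(-p * math.log2(pxz[x, z] * pyz[y, z] / (pz[z] * p))
               for (x, y, z), p in pxyz.items() if p > 0)


# Hypothetical example: X, Y independent fair bits and Z = X XOR Y.
xor = {(x, y, x ^ y): 0.25 for x in (0, 1) for y in (0, 1)}
# Hypothetical example: X = Y = Z, a single fair bit.
copies = {(b, b, b): 0.5 for b in (0, 1)}

print(cmi(xor))     # 1.0
print(cmi(copies))  # 0.0
```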


Sets of Random Variables

Let

$$[n] = \{1, 2, \ldots, n\}, \qquad \mathcal{X} = \{X_1, X_2, \ldots, X_n\}.$$

Then if

$$A = \{i_1, i_2, \ldots, i_\ell\} \subseteq [n],$$

we set $X_A = \{X_{i_1}, X_{i_2}, \ldots, X_{i_\ell}\} \subseteq \mathcal{X}$.
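Every quantity below is built from the joint entropies $H(X_A)$. A minimal Python sketch of how one might compute them: it assumes the joint pmf of $(X_1, \ldots, X_n)$ is stored as a dictionary from $n$-tuples to probabilities and that $A$ uses 1-based indices as in $[n]$; the helper name and the XOR example are hypothetical.

```python
import math
from collections import defaultdict


def subset_entropy(pmf, A):
    """H(X_A) in bits, for a joint pmf {(x_1, ..., x_n): p} and A ⊆ [n] (1-based).

    X_A is obtained by marginalizing the joint pmf onto the coordinates in A;
    for A = ∅ this returns 0, matching a constant random variable.
    """
    idx = sorted(A)
    marg = defaultdict(float)
    for outcome, p in pmf.items():
        marg[tuple(outcome[i - 1] for i in idx)] += p
    return sum(-p * math.log2(p) for p in marg.values() if p > 0)


# Hypothetical example with n = 3: X1, X2 independent fair bits, X3 = X1 XOR X2.
pmf = {(x1, x2, x1 ^ x2): 0.25 for x1 in (0, 1) for x2 in (0, 1)}
print(subset_entropy(pmf, {3}))        # 1.0
print(subset_entropy(pmf, {1, 2}))     # 2.0
print(subset_entropy(pmf, {1, 2, 3}))  # 2.0
```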

Basic Inequalities

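One can show, just as above, that

$$\begin{aligned} H(X_A) &\ge 0 \\ H(X_A/X_B) &\ge 0 \\ I(X_A;X_B) &\ge 0 \\ I(X_A;X_B/X_C) &\ge 0. \end{aligned}$$

Note that

$$H(X_A/X_B) = H(X_{A\cap B}, X_{A\setminus B}/X_B) = H(X_{A\setminus B}/X_B),$$

since $X_{A\cap B}$ is already determined by $X_B$; the other quantities simplify similarly.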

Polymatroidal Axioms

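These state the following:

$$\begin{aligned} &H(X_A) \ge 0 && \text{(nonnegativity)} \\ &H(X_A) \le H(X_B) \text{ if } A \subseteq B && \text{(monotonicity)} \\ &H(X_A) + H(X_B) \ge H(X_{A\cup B}) + H(X_{A\cap B}) && \text{(submodularity).} \end{aligned}$$

We have already established the first statement.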

Proof of Monotonicity

To see that $H(X_A) \le H(X_B)$ if $A \subseteq B$, note that

$$\begin{aligned} H(X_B) - H(X_A) &= H(X_{B\setminus A}, X_A) - H(X_A) \\ &= H(X_{B\setminus A}/X_A) \\ &\ge 0. \end{aligned}$$

Proof of Submodularity

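To see that

$$H(X_A) + H(X_B) \ge H(X_{A\cup B}) + H(X_{A\cap B}),$$

note that

$$\begin{aligned} & H(X_A) + H(X_B) - H(X_{A\cup B}) - H(X_{A\cap B}) \ge 0 \\ \Leftrightarrow\ & \big(H(X_A) - H(X_{A\cap B})\big) - \big(H(X_{A\cup B}) - H(X_B)\big) \ge 0 \\ \Leftrightarrow\ & H(X_{A\setminus B}/X_{A\cap B}) - H(X_{A\setminus B}/X_B) \ge 0 \\ \Leftrightarrow\ & I(X_{A\setminus B}; X_{B\setminus A}/X_{A\cap B}) \ge 0, \end{aligned}$$

which we know to be true. It turns out that the basic inequalities and the polymatroidal axioms can be derived from each other.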
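The three polymatroidal axioms can be spot-checked on random distributions. This minimal Python sketch assumes binary random variables with a randomly drawn joint pmf (an arbitrary testing choice) and a small tolerance for rounding; passing such a check illustrates the axioms but of course proves nothing. All names are hypothetical.

```python
import math
import random
from collections import defaultdict
from itertools import combinations, product


def subset_entropy(pmf, A):
    """H(X_A) in bits, marginalizing a joint pmf {(x_1, ..., x_n): p} onto A ⊆ [n]."""
    idx = sorted(A)
    marg = defaultdict(float)
    for outcome, p in pmf.items():
        marg[tuple(outcome[i - 1] for i in idx)] += p
    return sum(-p * math.log2(p) for p in marg.values() if p > 0)


def random_pmf(n, rng):
    """Random pmf on {0,1}^n; the sampling scheme is arbitrary, for testing only."""
    outcomes = list(product((0, 1), repeat=n))
    weights = [rng.random() for _ in outcomes]
    total = sum(weights)
    return {o: w / total for o, w in zip(outcomes, weights)}


def is_polymatroid(pmf, n, tol=1e-9):
    """Check the three polymatroidal axioms for the set function A -> H(X_A)."""
    subsets = [frozenset(c) for r in range(n + 1)
               for c in combinations(range(1, n + 1), r)]
    h = {A: subset_entropy(pmf, A) for A in subsets}
    for A in subsets:
        if h[A] < -tol:                                   # nonnegativity
            return False
        for B in subsets:
            if A <= B and h[A] > h[B] + tol:              # monotonicity
                return False
            if h[A] + h[B] < h[A | B] + h[A & B] - tol:   # submodularity
                return False
    return True


rng = random.Random(1)
print(all(is_polymatroid(random_pmf(3, rng), 3) for _ in range(200)))  # True
```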


Entropic Vectors

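Let $n = 3$. Then

$$\mathcal{X} = \{X_1, X_2, X_3\}.$$

The vector

$$h(\mathcal{X}) := \big(H(X_1), H(X_2), H(X_3), H(X_1,X_2), H(X_1,X_3), H(X_2,X_3), H(X_1,X_2,X_3)\big)$$

is called the entropy vector associated to $\mathcal{X}$. Note that in general

$$h(\cdot) : \mathcal{X} \to \mathbb{R}^{2^n - 1},$$

with one coordinate for each nonempty subset of $[n]$.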
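A minimal Python sketch of the entropy vector, assuming a joint pmf stored as a dictionary from $n$-tuples to probabilities. The coordinate ordering (by subset size, then lexicographic) is chosen to reproduce the $n = 3$ ordering above; the XOR distribution is a hypothetical example.

```python
import math
from collections import defaultdict
from itertools import combinations


def subset_entropy(pmf, A):
    """H(X_A) in bits, marginalizing a joint pmf {(x_1, ..., x_n): p} onto A ⊆ [n]."""
    idx = sorted(A)
    marg = defaultdict(float)
    for outcome, p in pmf.items():
        marg[tuple(outcome[i - 1] for i in idx)] += p
    return sum(-p * math.log2(p) for p in marg.values() if p > 0)


def entropy_vector(pmf, n):
    """h(X) as a list of H(X_A) over the 2^n - 1 nonempty A ⊆ [n].

    Subsets are ordered by size and then lexicographically, which for n = 3
    reproduces the ordering (H(X1), H(X2), H(X3), H(X1,X2), ..., H(X1,X2,X3)).
    """
    return [subset_entropy(pmf, A)
            for r in range(1, n + 1)
            for A in combinations(range(1, n + 1), r)]


# Hypothetical example: X1, X2 independent fair bits and X3 = X1 XOR X2.
xor = {(x1, x2, x1 ^ x2): 0.25 for x1 in (0, 1) for x2 in (0, 1)}
h = entropy_vector(xor, 3)
print(len(h))  # 7 = 2**3 - 1
print(h)       # [1.0, 1.0, 1.0, 2.0, 2.0, 2.0, 2.0]
```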

The Entropic Vector Region

A vector is called entropic if it is the entropy vector associated to some set of random variables $\mathcal{X}$.

The entropic vector region $\Gamma^*_n$ is precisely the set of all entropic vectors $h(\mathcal{X})$, taken over all possible $\mathcal{X}$.

The basic inequalities (or, equivalently, the polymatroidal axioms) can be re-expressed in terms of joint entropies.

Thus every entropic vector must satisfy the basic inequalities.

Does an entropic vector necessarily have to satisfy any other inequalities?

Aside: Inequalities that “Always Hold”

$f(h) \ge 0$ always holds

[Figure: the region $\Gamma^*_n$ lies entirely inside the half-space $\{h : f(h) \ge 0\}$.]

Figure taken from:

Raymond Yeung, “Facets of Entropy,” http://www.inc.cuhk.edu.hk/EII2013/entropy.pdf

Aside: Inequalities that do not “Always Hold”

$f(h) \ge 0$ does not always hold

[Figure: a point $h_0 \in \Gamma^*_n$ lies outside the half-space $\{h : f(h) \ge 0\}$.]

Figure taken from:

Raymond Yeung, “Facets of Entropy,” http://www.inc.cuhk.edu.hk/EII2013/entropy.pdf
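A single entropic point $h_0$ with $f(h_0) < 0$ is enough to show that $f(h) \ge 0$ does not always hold. The minimal Python sketch below uses a hypothetical choice, $f(h) = I(X_1;X_2) - I(X_1;X_2/X_3)$, which is linear in $h$ via $I(X_A;X_B/X_C) = H(X_{A\cup C}) + H(X_{B\cup C}) - H(X_{A\cup B\cup C}) - H(X_C)$, and takes $h_0$ from the XOR construction. The helper names are illustrative.

```python
import math
from collections import defaultdict


def subset_entropy(pmf, A):
    """H(X_A) in bits, marginalizing a joint pmf {(x_1, ..., x_n): p} onto A ⊆ [n]."""
    idx = sorted(A)
    marg = defaultdict(float)
    for outcome, p in pmf.items():
        marg[tuple(outcome[i - 1] for i in idx)] += p
    return sum(-p * math.log2(p) for p in marg.values() if p > 0)


def cond_mi(pmf, A, B, C=()):
    """I(X_A; X_B / X_C) = H(X_{A∪C}) + H(X_{B∪C}) - H(X_{A∪B∪C}) - H(X_C)."""
    A, B, C = set(A), set(B), set(C)
    return (subset_entropy(pmf, A | C) + subset_entropy(pmf, B | C)
            - subset_entropy(pmf, A | B | C) - subset_entropy(pmf, C))


def f(pmf):
    """Hypothetical linear function of h: f(h) = I(X1;X2) - I(X1;X2/X3)."""
    return cond_mi(pmf, {1}, {2}) - cond_mi(pmf, {1}, {2}, {3})


# h0: X1, X2 independent fair bits and X3 = X1 XOR X2.
xor = {(x1, x2, x1 ^ x2): 0.25 for x1 in (0, 1) for x2 in (0, 1)}
print(f(xor))  # -1.0, so f(h) >= 0 does not always hold
```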

The Region Γn

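Let $\Gamma_n$ denote the region in $\mathbb{R}^{2^n - 1}$ of vectors that satisfy the basic inequalities. The big question is:

$$\Gamma^*_n \overset{?}{=} \Gamma_n.$$

It turns out that

$$\Gamma^*_2 = \Gamma_2, \qquad \Gamma^*_3 \ne \Gamma_3, \quad \text{but} \quad \overline{\Gamma^*_3} = \Gamma_3,$$

where $\overline{\Gamma^*_3}$ denotes the closure of $\Gamma^*_3$. In general,

$$\Gamma^*_n \ne \Gamma_n.$$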

The Zhang-Yeung Counter Example when n = 4

It turns out² that

$$2I(X_3;X_4) \le I(X_1;X_2) + I(X_1;X_3,X_4) + 3I(X_3;X_4/X_1) + I(X_3;X_4/X_2)$$

is an example of an inequality that is satisfied by all vectors in $\Gamma^*_4$ but cannot be derived from the inequalities defining $\Gamma_4$.

² Zhang and Yeung, “On characterization of entropy function via information inequalities,” IEEE Transactions on Information Theory, 1998.
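Because ZY98 holds on all of $\Gamma^*_4$, the gap RHS minus LHS must be nonnegative for every joint distribution of four random variables. The minimal Python sketch below spot-checks this on randomly drawn distributions of four binary variables; the sampling scheme and helper names are hypothetical, and such random testing only illustrates the inequality. It cannot reveal that ZY98 is non-Shannon-type, since that concerns vectors in $\Gamma_4$ that are not entropic.

```python
import math
import random
from collections import defaultdict
from itertools import product


def subset_entropy(pmf, A):
    """H(X_A) in bits, marginalizing a joint pmf {(x_1, ..., x_n): p} onto A ⊆ [n]."""
    idx = sorted(A)
    marg = defaultdict(float)
    for outcome, p in pmf.items():
        marg[tuple(outcome[i - 1] for i in idx)] += p
    return sum(-p * math.log2(p) for p in marg.values() if p > 0)


def cond_mi(pmf, A, B, C=()):
    """I(X_A; X_B / X_C) = H(X_{A∪C}) + H(X_{B∪C}) - H(X_{A∪B∪C}) - H(X_C)."""
    A, B, C = set(A), set(B), set(C)
    return (subset_entropy(pmf, A | C) + subset_entropy(pmf, B | C)
            - subset_entropy(pmf, A | B | C) - subset_entropy(pmf, C))


def zy98_gap(pmf):
    """RHS - LHS of the Zhang-Yeung inequality for a pmf on (X1, X2, X3, X4)."""
    lhs = 2 * cond_mi(pmf, {3}, {4})
    rhs = (cond_mi(pmf, {1}, {2}) + cond_mi(pmf, {1}, {3, 4})
           + 3 * cond_mi(pmf, {3}, {4}, {1}) + cond_mi(pmf, {3}, {4}, {2}))
    return rhs - lhs


def random_pmf(n, rng):
    """Random pmf on {0,1}^n; an arbitrary sampling scheme used only for testing."""
    outcomes = list(product((0, 1), repeat=n))
    weights = [rng.random() ** 4 for _ in outcomes]  # concentrates mass on few outcomes
    total = sum(weights)
    return {o: w / total for o, w in zip(outcomes, weights)}


rng = random.Random(2)
print(min(zy98_gap(random_pmf(4, rng)) for _ in range(500)) >= -1e-9)  # True
```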

The Consequent Picture for n = 4

[Figure: the regions $\Gamma_4$ and $\Gamma^*_4$, together with the half-space defined by the ZY98 inequality.]

An Illustration of ZY98

Figure taken from:

Raymond Yeung, “Facets of Entropy,” http://www.inc.cuhk.edu.hk/EII2013/entropy.pdf

Pippenger (1986)

Raymond Yeung

Zhen Zhang and Others

Zhen Zhang, University of Southern California

Terence Chan, University of South Australia

Imre Csiszár, Hungarian Academy of Sciences


Polyhedra

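a closed half space:

$$H^+ = \{x \in V \mid \lambda(x) + a_0 \ge 0\},$$

where $V$ is a finite-dimensional real vector space, $\lambda$ is a linear functional on $V$, and $a_0 \in \mathbb{R}$.

a subset $P \subseteq V$ is called a polyhedron if it is the intersection of finitely many closed half spaces.

a polytope is a bounded polyhedron.

a subset $P \subseteq V$ is called a (polyhedral) cone if it is the intersection of finitely many linear closed half spaces (i.e., half spaces with $a_0 = 0$).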
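A minimal Python sketch of membership in a polyhedron, assuming $V = \mathbb{R}^d$ with each linear functional $\lambda$ represented by its coefficient vector, so that a half space is a pair `(coeffs, a0)`. As an illustration of a cone, it encodes $\Gamma_2$ in coordinates $h = (H(X_1), H(X_2), H(X_1,X_2))$ by $H(X_1/X_2) \ge 0$, $H(X_2/X_1) \ge 0$ and $I(X_1;X_2) \ge 0$; the remaining basic inequalities for $n = 2$ follow from these three (e.g. $H(X_1) = H(X_1/X_2) + I(X_1;X_2)$). The function name is hypothetical.

```python
def in_polyhedron(x, halfspaces, tol=0.0):
    """True if x lies in every closed half space {x : lambda(x) + a0 >= 0}.

    Assumes V = R^d, with each linear functional lambda represented by its
    coefficient vector, so a half space is a pair (coeffs, a0).
    """
    return all(sum(c * xi for c, xi in zip(coeffs, x)) + a0 >= -tol
               for coeffs, a0 in halfspaces)


# A polytope: the unit square [0,1]^2 (bounded).
square = [((1, 0), 0), ((-1, 0), 1), ((0, 1), 0), ((0, -1), 1)]

# A cone (every a0 = 0): Gamma_2 in coordinates h = (H(X1), H(X2), H(X1,X2)),
# cut out by H(X1/X2) >= 0, H(X2/X1) >= 0 and I(X1;X2) >= 0.
gamma2 = [((0, -1, 1), 0), ((-1, 0, 1), 0), ((1, 1, -1), 0)]

print(in_polyhedron((0.5, 0.5), square))  # True
print(in_polyhedron((2, 0), square))      # False
print(in_polyhedron((1, 1, 2), gamma2))   # True: independent fair bits
print(in_polyhedron((1, 1, 3), gamma2))   # False: violates I(X1;X2) >= 0
```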

Other Results

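If $h_X, h_Y \in \Gamma^*_n$, then $h_Z = h_X + h_Y \in \Gamma^*_n$; for the proof, use $Z_i = (X_i, Y_i)$ with the collections $X$ and $Y$ independent.

Hence, for any positive integer $k$, $kh \in \Gamma^*_n$ whenever $h \in \Gamma^*_n$.

$0 \in \Gamma^*_n$ (use constant random variables).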

The closure $\overline{\Gamma^*_n}$ is convex, by an appropriate construction of random variables.
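The additivity $h_Z = h_X + h_Y$ can be checked directly. This minimal Python sketch assumes joint pmfs stored as dictionaries from $n$-tuples to probabilities and builds $Z_i = (X_i, Y_i)$ with $X$ and $Y$ independent; the two $n = 2$ example distributions and all helper names are hypothetical.

```python
import math
from collections import defaultdict
from itertools import combinations


def subset_entropy(pmf, A):
    """H(X_A) in bits, marginalizing a joint pmf {(x_1, ..., x_n): p} onto A ⊆ [n]."""
    idx = sorted(A)
    marg = defaultdict(float)
    for outcome, p in pmf.items():
        marg[tuple(outcome[i - 1] for i in idx)] += p
    return sum(-p * math.log2(p) for p in marg.values() if p > 0)


def entropy_vector(pmf, n):
    """H(X_A) over nonempty A ⊆ [n], ordered by size, then lexicographically."""
    return [subset_entropy(pmf, A)
            for r in range(1, n + 1)
            for A in combinations(range(1, n + 1), r)]


def pair_independently(pmf_x, pmf_y):
    """Joint pmf of Z with Z_i = (X_i, Y_i), where X and Y are independent."""
    return {tuple(zip(x, y)): px * py
            for x, px in pmf_x.items() for y, py in pmf_y.items()}


# Hypothetical examples with n = 2.
pmf_x = {(b, b): 0.5 for b in (0, 1)}                   # X1 = X2, a fair bit
pmf_y = {(a, b): 0.25 for a in (0, 1) for b in (0, 1)}  # independent fair bits

h_x = entropy_vector(pmf_x, 2)  # [1.0, 1.0, 1.0]
h_y = entropy_vector(pmf_y, 2)  # [1.0, 1.0, 2.0]
h_z = entropy_vector(pair_independently(pmf_x, pmf_y), 2)
print(h_z)  # [2.0, 2.0, 3.0], the coordinatewise sum h_x + h_y
```

Repeating the construction $k$ times with independent copies gives $kh$, which is the integer-scaling statement above.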
