

GRAND DIGITAL PIANO: MULTI-MODAL TRANSFER OF LEARNING

SANCHES SHAYDA ERNESTO EVGENIY (ESANCHES), ILKYU LEE (LQLEE)

INTRODUCTION

Reproduction of the touch and sound of real instruments has been a challenging problem throughout the years. For example, while current digital pianos attempt to achieve this task via simplified, finite sample-based methods, there is still much room for improvement in mimicking an acoustic piano.

In this study, we aim to improve upon such methods by utilizing machine learning to train a physically-based model of an acoustic piano, allowing for the generation of novel, more realistic sounds.

OUR APPROACH

We utilize a multi-modal transfer of learning method, wherein digital piano data regarding touch can ultimately generate realistic sound of an acoustic piano:

1. A laser sensor is used on the digital piano to gather touch data via key velocity, and this data is used to train a model to predict intermediary modalities, such as MIDI.

2. The model is then applied to the acoustic piano: touch data is input to predict the intermediary modalities that are not naturally available on acoustic pianos.

3. With these intermediary modalities as input, another model is trained to predict the sound of the acoustic piano.

4. Finally, the previous model is executed on the digital piano to generate realistic sound from sensor data only available on the digital piano.
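The four steps above can be sketched as a small pipeline. This is a minimal illustration under loud assumptions, not the poster's implementation: ordinary least-squares lines stand in for the learned models, a single loudness number stands in for sound, and every data value is synthetic. The names `fit_line`, `touch_to_midi`, `midi_to_sound`, and `digital_touch_to_acoustic_sound` are hypothetical.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = a*x + b; a toy stand-in for a learned model."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return lambda x: a * x + b


# Step 1: on the digital piano, touch (laser key velocity) and MIDI velocity
# are both observable, so a touch -> MIDI model can be trained.
# ASSUMPTION: synthetic data with a made-up linear relation.
digital_touch = [0.2, 0.5, 1.0, 1.5, 2.0]
digital_midi = [60.0 * v + 5.0 for v in digital_touch]
touch_to_midi = fit_line(digital_touch, digital_midi)

# Step 2: the acoustic piano has no MIDI output, so the step-1 model
# predicts the missing intermediary modality from acoustic touch data.
acoustic_touch = [0.3, 0.8, 1.2, 1.8]
acoustic_midi = [touch_to_midi(v) for v in acoustic_touch]

# Step 3: train MIDI -> sound on the acoustic piano.
# ASSUMPTION: "sound" is reduced to one synthetic loudness value per note.
acoustic_loudness = [0.01 * (60.0 * v + 5.0) for v in acoustic_touch]
midi_to_sound = fit_line(acoustic_midi, acoustic_loudness)


# Step 4: chain both models on the digital piano.
def digital_touch_to_acoustic_sound(touch):
    return midi_to_sound(touch_to_midi(touch))


print(digital_touch_to_acoustic_sound(1.0))
```

The point of the sketch is the data flow: the intermediary modality is the only quantity shared by both instruments, so it is what lets a model trained on one piano be reused on the other.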

IMPLEMENTATION

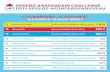

Figure 1: Full model architecture

EXPERIMENTAL RESULTS

Figure 2: RNN architecture for Laser → MIDI training (Left). RNN training result (Right).

Figure 3: Using a Kalman filter for the Laser → MIDI task. The right panel is a zoomed-in view.
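The poster does not specify how the Kalman filter is configured. One plausible use, sketched below purely as an assumption, is a constant-velocity filter that turns laser distance readings into a smoothed key position and key velocity. The function name `kalman_track`, the noise parameters `meas_var` and `accel_var`, and the initial velocity variance are all hypothetical choices, and the mapping from key velocity to MIDI velocity is left out because the source does not give it.

```python
def kalman_track(readings, dt, meas_var=1e-4, accel_var=1.0):
    """Constant-velocity Kalman filter over scalar position readings.

    State is [position, velocity]; only position is measured.
    Returns a list of (position, velocity) estimates, one per reading.
    All noise settings are illustrative assumptions.
    """
    pos, vel = readings[0], 0.0
    # Covariance entries: start unsure about velocity (assumed value 100.0).
    p00, p01, p10, p11 = meas_var, 0.0, 0.0, 100.0
    # Process noise for a white-noise acceleration model.
    q00 = accel_var * dt ** 4 / 4.0
    q01 = accel_var * dt ** 3 / 2.0
    q11 = accel_var * dt ** 2

    estimates = [(pos, vel)]
    for z in readings[1:]:
        # Predict: x = F x, P = F P F^T + Q, with F = [[1, dt], [0, 1]].
        pos = pos + dt * vel
        a00 = p00 + dt * (p01 + p10) + dt * dt * p11 + q00
        a01 = p01 + dt * p11 + q01
        a10 = p10 + dt * p11 + q01
        a11 = p11 + q11

        # Update with measurement z of position (H = [1, 0]).
        s = a00 + meas_var          # innovation variance
        k0, k1 = a00 / s, a10 / s   # Kalman gain
        residual = z - pos
        pos += k0 * residual
        vel += k1 * residual
        p00, p01 = (1.0 - k0) * a00, (1.0 - k0) * a01
        p10, p11 = a10 - k1 * a00, a11 - k1 * a01

        estimates.append((pos, vel))
    return estimates


# Usage on a synthetic, noise-free key press moving at 2.0 units per second.
dt = 0.01
readings = [2.0 * dt * i for i in range(200)]
final_pos, final_vel = kalman_track(readings, dt)[-1]
print(final_pos, final_vel)
```

On real laser data, `meas_var` and `accel_var` would need tuning against the sensor noise and the key's actual dynamics.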

CONCLUSIONS

We have utilized a multi-modal approach to the problem of predicting intermediary modalities such as MIDI velocity from touch data using a binary-output RNN, with greater success when using a Kalman filter.

While the touch → MIDI training showed signs of success, more work must be done on the sound generation aspect, with conditioning on pitch and MIDI velocity. WaveNet provides a good starting point; however, more research and modifications are necessary for full completion of the entire model.
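As a rough illustration of what conditioning on pitch and MIDI velocity could involve, the sketch below prepares two inputs for a WaveNet-style model: audio samples quantized with 8-bit mu-law companding, as in the original WaveNet, and a per-note conditioning vector. The vector layout (an 88-key one-hot pitch plus a normalized velocity) and the names `mu_law_encode` and `conditioning_vector` are assumptions for illustration only; the poster does not describe its conditioning scheme.

```python
import math

MU = 255            # 8-bit mu-law, giving 256 discrete sample classes
LOWEST_NOTE = 21    # MIDI note number of A0, the lowest key on an 88-key piano
NUM_KEYS = 88


def mu_law_encode(sample):
    """Map a sample in [-1, 1] to an integer class in [0, 255]."""
    sample = max(-1.0, min(1.0, sample))
    magnitude = math.log1p(MU * abs(sample)) / math.log1p(MU)
    companded = math.copysign(magnitude, sample)
    return int((companded + 1.0) / 2.0 * MU + 0.5)


def conditioning_vector(midi_note, midi_velocity):
    """Hypothetical layout: one-hot pitch (88 entries) + velocity scaled to [0, 1]."""
    if not LOWEST_NOTE <= midi_note < LOWEST_NOTE + NUM_KEYS:
        raise ValueError("note outside the 88-key piano range")
    if not 0 <= midi_velocity <= 127:
        raise ValueError("MIDI velocity must be in 0..127")
    vec = [0.0] * NUM_KEYS
    vec[midi_note - LOWEST_NOTE] = 1.0
    vec.append(midi_velocity / 127.0)
    return vec


# Usage: middle C (MIDI note 60) played at velocity 64.
cond = conditioning_vector(60, 64)
targets = [mu_law_encode(s) for s in (-1.0, 0.0, 0.25, 1.0)]
print(len(cond), targets)
```

In a full model, a vector like `cond` would be fed to every layer as global conditioning while the network predicts the next mu-law class; that network is beyond the scope of this sketch.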

FUTURE WORK

- Use a larger dataset for sound generation training, and explore parallelization and other tools for time efficiency.

- Work on real-time conversion of the process from key press to sound generation.

- Consider other advanced models, such as conditional generative adversarial networks, for sound generation.

ACKNOWLEDGMENTS

We would like to thank the CS229 Course Staff and Anand Avati for helpful suggestions.
