Mar 13, 2018

VO Sandpit, November 2009

Open All The Things! Open Data and the Scholarly Literature

Sarah Callaghan*

[email protected] @sorcha_ni

Meeting Place Open Access, Malmo, Sweden, 14th April 2015

* and a lot of others, including, but not limited to: the NERC data citation and publication project team, the PREPARDE project team and the CEDA team

http://knowyourmeme.com/memes/x-all-the-y

http://hyperboleandahalf.blogspot.co.uk/2010/06/this-is-why-ill-never-be-adult.html

The Scientific Method

http://www.mrsaverettsclassroom.com/bio2-scientific-method.php

This is often the only part of the process that anyone other than the originating scientist sees. We want to change this.

A key part of the scientific method is that it should be reproducible – other people doing the same experiments in the same way should get the same results. Unfortunately observational data is not reproducible (unless you have a time machine!)

The way data is organised and archived is crucial to the reproducibility of science and our ability to test conclusions.

Why make data open?

http://www.evidencebased-management.com/blog/2011/11/04/new-evidence-on-big-bonuses/

• Pressure from government to make data from publicly funded research available for free.

• Scientists want attribution and credit for their work

• Public want to know what the scientists are doing

• Good for the economy if new industries can be built on scientific data/research

• Research funders want reassurance that they’re getting value for money

• Relies on peer-review of science publications (well established) and data (starting to be done!)

• Allows the wider research community and industry to find and use datasets, and understand the quality of the data

Need reward structures and incentives for researchers to encourage them to make their data open – data citation and publication

It’s not just data!

• Experimental protocols

• Workflows

• Software code

• Metadata

• Things that went wrong!

• …

Who are we and why do we care about data?

The UK’s Natural Environment Research Council (NERC) funds six data centres which between them have responsibility for the long-term management of NERC's environmental data holdings.

We deal with a variety of environmental measurements, along with the results of model simulations in:

• Atmospheric science

• Earth sciences

• Earth observation

• Marine Science

• Polar Science

• Terrestrial & freshwater science, Hydrology and Bioinformatics

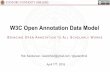

What is a Dataset?

DataCite’s definition (http://www.datacite.org/sites/default/files/Business_Models_Principles_v1.0.pdf):

Dataset: "Recorded information, regardless of the form or medium on which it may be recorded including writings, films, sound recordings, pictorial reproductions, drawings, designs, or other graphic representations, procedural manuals, forms, diagrams, work flow, charts, equipment descriptions, data files, data processing or computer programs (software), statistical records, and other research data."

(from the U.S. National Institutes of Health (NIH) Grants Policy Statement via DataCite's Best Practice Guide for Data Citation).

In my opinion a dataset is something that is:

• The result of a defined process

• Scientifically meaningful

• Well-defined (i.e. clear definition of what is in the dataset and what isn’t)

Some examples of data (just from the Earth Sciences)

1. Time series, some still being updated e.g. meteorological measurements

2. Large 4D synthesised datasets, e.g. Climate, Oceanographic, Hydrological and Numerical Weather Prediction model data generated on a supercomputer

3. 2D scans e.g. satellite data, weather radar data

4. 2D snapshots, e.g. cloud camera

5. Traces through a changing medium, e.g. radiosonde launches, aircraft flights, ocean salinity and temperature

6. Datasets consisting of data from multiple instruments as part of the same measurement campaign

7. Physical samples, e.g. fossils

The Understandability Challenge: Data

What the data set looks like on disk

What the raw data files look like.

I could make these files open easily, but no one would have a clue how to use them!

Creating a dataset is hard work!

"Piled Higher and Deeper" by Jorge Cham www.phdcomics.com

Documenting a dataset so that it is usable and understandable by others is extra work!

“I’m all for the free sharing of information, provided it’s them sharing their information with us.”

http://discworld.wikia.com/wiki/Mustrum_Ridcully

Mustrum Ridcully, D.Thau., D.M., D.S., D.Mn., D.G., D.D., D.C.L., D.M. Phil., D.M.S., D.C.M., D.W., B.El.L, Archchancellor, Unseen University, Ankh-Morpork, Discworld - As quoted in “Unseen Academicals”, by Terry Pratchett

Getting scooped http://www.phdcomics.com/comics/archive.php?comicid=795

It happened to me! I shared my data with another research group. They published the first results using that data. I wasn’t a co-author. I didn’t get an acknowledgement.

Knowledge is power!

Data may mean the difference between getting a grant and not.

There is (currently) no universally accepted mechanism for data creators to obtain academic credit for their dataset creation efforts.

Creators (understandably) prefer to hold the data until they have extracted all the possible publication value they can.

This behaviour comes at a cost for the wider scientific community.

But if we publish the data, precedence is established and credit is given!

http://www.naa.gov.au/records-management/capability-development/keep-the-knowledge/index.aspx

Citing Data

• We already have a working method for linking between publications which is:

– commonly used

– understood by the research community

– used to create metrics to show how much of an impact something has (citation counts)

– applied to digital objects (digital versions of journal articles)

• We can extend citation to other things like:

– data

– code

– multimedia

And the best bit is, researchers don’t need to learn a new method of linking – they cite like they normally would!

Open/Closed/Published/unpublished

[Figure with axes Openness and Quality; labelled items: CD, Webpage, OA journal, Subs journal, Data repository, Shared work space]

We want to encourage researchers to make their data:

• Open

• Persistent

• Quality assured:

– through scientific peer review

– or repository-managed processes

Unless there’s a very good reason not to!

Publishing = making something public after some formal process which adds value for the consumer (e.g. peer review) and provides commitment to persistence

Open is not enough!

“When required to make the data available by my program manager, my collaborators, and ultimately by law, I will grudgingly do so by placing the raw data on an FTP site, named with UUIDs like 4e283d36-61c4-11df-9a26-edddf420622d. I will under no circumstances make any attempt to provide analysis source code, documentation for formats, or any metadata with the raw data. When requested (and ONLY when requested), I will provide an Excel spreadsheet linking the names to data sets with published results. This spreadsheet will likely be wrong -- but since no one will be able to analyze the data, that won't matter.” - http://ivory.idyll.org/blog/data-management.html https://flic.kr/p/awnCQu

It’s ok, I’ll just put it out there and if it’s important other people will figure it out

These documents have been preserved for thousands of years! But they’ve both been translated many times, with different meanings each time.

We need Metadata to preserve Information!

Phaistos Disk, 1700BC

Metadata

It is generally agreed that we need methods to:

• define and document datasets of importance.

• augment and/or annotate data

• amalgamate, reprocess and reuse data

To do this, we need metadata – data about data

http://www.kcoyle.net/meta_purpose.html

For example: Longitude and latitude are metadata about the planet.

• They are artificial

• They allow us to communicate about places on a sphere

• They were principally designed by those who needed to navigate the oceans, which are lacking in visible features!

Metadata can often act as a surrogate for the real thing, in this case the planet.

Data needs metadata!

Dataset

Metadata

http://biteofcinnamon.com/another-sponge-cake-recipe/

https://gollygoshgirl.wordpress.com/2013/06/05/a-little-twist-on-the-classic-victoria-sponge/

Good and Bad Metadata

(By Sweet Bakes)

Usability, trust, metadata

http://trollcats.com/2009/11/im-your-friend-and-i-only-want-whats-best-for-you-trollcat/

When you read a journal paper, it’s easy to skim it and get a quick understanding of its quality. You don’t want to be downloading many GB of dataset just to open it and see if it’s any use to you. We need to use proxies for quality:

• Do you know the data source/repository? Can you trust it?

• Is there enough metadata so that you can understand and/or use the data?

In the same way that not all journal publishers are created equal, not all data repositories are created equal

Example metadata from a published dataset: “rain.csv contains rainfall in mm for each month at Marysville, Victoria from January 1995 to February 2009”
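The one-sentence description above already carries several distinct facts: file name, variable, unit, time step, place and period. A minimal sketch of how they might be held as a structured record, so that a prospective user (or a repository) can check the description before downloading anything. The class name, the field names and the list of required fields are hypothetical choices for illustration, not taken from any metadata standard.

```python
from dataclasses import dataclass, asdict


@dataclass
class DatasetDescription:
    """Illustrative record; field names are assumptions, not a standard."""
    filename: str
    variable: str
    unit: str
    time_resolution: str
    location: str
    start: str  # year-month, e.g. "1995-01"
    end: str    # year-month, e.g. "2009-02"


# Hypothetical minimum a user needs before deciding to download.
REQUIRED = ("filename", "variable", "unit", "location", "start", "end")


def missing_fields(record: DatasetDescription) -> list[str]:
    """Return the names of required fields that were left empty."""
    values = asdict(record)
    return [name for name in REQUIRED if not values[name].strip()]


# Values taken from the example sentence on the slide.
rain = DatasetDescription(
    filename="rain.csv",
    variable="rainfall",
    unit="mm",
    time_resolution="monthly",
    location="Marysville, Victoria",
    start="1995-01",
    end="2009-02",
)

print(missing_fields(rain))  # → []
```

A record with an empty `unit` would be reported as `["unit"]`, which is the kind of gap that makes a dataset open but not usable.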

Making data open – how not to do it!

Part of the Italsat data archive – on CDs on a shelf in my office

How to publish data/make data open

• Stick it up on a webpage somewhere

– Issues with stability, persistence, discoverability…

– Maintenance of the website

• Put it in the cloud

– Issues with stability, persistence, discoverability…

• Attach it to a journal paper and store it as supplementary materials

– Journals not too keen on archiving lots of supplementary data, especially if it’s large volume.

• Put it in a disciplinary/institutional repository

• Write a data article about it and publish it in a data journal

By David Fletcher http://www.cloudtweaks.com/2011/05/the-lighter-side-of-the-cloud-data-transfer/

Why should I bother putting my data into a repository?

"Piled Higher and Deeper" by Jorge Cham www.phdcomics.com

Repositories and Libraries

Domain specific repositories can:

– Pick and choose what data to keep

– Ask for (and get) more detailed metadata

– Provide specific tools and services (visualisations, server-side processing,…)

– Deal with Big Data!

Libraries will need to:

– Pick up and manage/archive the long-tail data where there isn’t a domain repository

– Have generalised, widely applicable systems that can cope with subjects from astronomy to zoology

– Be prepared to cope with anything!

Journals have always published data…

Suber cells and mimosa leaves. Robert Hooke, Micrographia, 1665

The Scientific Papers of William Parsons, Third Earl of Rosse 1800-1867

…but datasets have gotten so big, it’s not useful to publish them in hard copy anymore

Why bother linking the data to the publication? Surely the important stuff is in the journal paper?

If you can’t see/use the data, then you can’t test the conclusions or reproduce the results! It’s not science!

The traditional online journal model

1) Author prepares the paper using word processing software.

2) Author submits the paper as a PDF/Word file.

3) Reviewer reviews the PDF file against the journal’s acceptance criteria.

Overlay journal model for publishing data

1) Author prepares the data paper using word processing software and the dataset using appropriate tools.

2a) Author submits the data paper to the journal.

2b) Author submits the dataset to a repository.

3) Reviewer reviews the data paper and the dataset it points to against the journal’s acceptance criteria.

[Diagram labels: Word processing software with journal template; A Journal (any online journal system) holding PDFs; Data Journal (Geoscience Data Journal) holding html pages; data held at BADC and BODC]

What is a data article?

A data article describes a dataset, giving details of its collection, processing, software, file formats, etc., without the requirement of novel analyses or groundbreaking conclusions.

• the when, how and why data was collected and what the data-product is.

Many data journals already exist – see a list (in no particular order) at: http://proj.badc.rl.ac.uk/preparde/blog/DataJournalsList

Why bother publishing the dataset in a data journal? Why not just publish a normal journal paper citing the data?

Data Journals:

• Peer-review the data

• Publish negative results

• Make it quicker to publish the data as they don’t require analysis or novelty – the dataset is published “as-is”

• Provide attribution and credit for the data collectors who might not be involved with the analysis

• Make it easier to find datasets, understand them and be sure of their quality and provenance.

Peer review, data and data journals

• Peer-review of a scientific publication is generally only applied to analysis, interpretation and conclusions, and not the underlying data.

• But for the conclusions to be valid, the data must be of good quality.

• We need quality assurance of the data underlying research publications – either through peer-review or data repository checking.

• Researchers need credit for creating, managing and opening their data.

• Data journals provide that credit in an environment where academic status is solely based on publication record.

http://libguides.luc.edu/content.php?pid=5464&sid=164619

Citeable does not equal Open!

Just like you can cite a paper that is behind a paywall, you can cite a dataset that isn’t open.

Making something citeable means that:

• You know it exists

• You know who’s responsible for it

• You know where to find it

• You know a little bit about it (title, abstract,…)

Even if you can’t download/read the thing yourself.

Citation gives benefits that encourage researchers to make their data open
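The four things a citation tells you map directly onto a handful of fields: who is responsible (creators, publisher), where to find it (identifier) and a little bit about it (title, year). A minimal sketch of assembling a citation string from them, assuming the "Creator (PublicationYear): Title. Publisher. Identifier" layout that DataCite has recommended for data citations. The class, the function and every value in the example, including the DOI, are hypothetical placeholders and do not refer to a real dataset.

```python
from dataclasses import dataclass


@dataclass
class DataCitation:
    """Illustrative container; not an official schema."""
    creators: list[str]
    year: int
    title: str
    publisher: str
    identifier: str


def format_citation(c: DataCitation) -> str:
    """Build 'Creator (Year): Title. Publisher. Identifier'."""
    authors = "; ".join(c.creators)
    return f"{authors} ({c.year}): {c.title}. {c.publisher}. {c.identifier}"


# Placeholder values for illustration only; the DOI is made up.
example = DataCitation(
    creators=["Researcher, A.", "Researcher, B."],
    year=2015,
    title="Example rainfall measurements",
    publisher="Example Data Repository",
    identifier="https://doi.org/10.5555/example-dataset",
)

print(format_citation(example))
```

Nothing in the record says whether the dataset can be downloaded, which is the point of the slide: the citation works the same way for open and closed data.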

Should ALL data be open?

Most data produced through publicly funded research should be open.

But!

• Confidentiality issues (e.g. named persons’ health records)

• Conservation issues (e.g. maps of locations of rare animals at risk from poachers)

• Security issues (e.g. data and methodologies for building biological weapons)

There should be a very good reason for publicly funded data to not be open.

How to quantify impact?

The impact of a dataset can only be determined by time!

• Would an 18th century ship’s captain have realised how important their logs of meteorological measurements would be to climate scientists in the 21st century?

But we can know that if a dataset isn’t usable now, it’s going to be no use in the future.

Impact needs usability!

Summary and maybe conclusions?

• We need to open the products of research

– to encourage innovation and collaboration

– to give credit to the people who’ve created them

– to be transparent and trustworthy

• Openness does come at a cost!

• It’s not enough for data to be open

– it needs to be usable and understandable too

• Data citation and publication are ways of encouraging researchers to make their data open

– or at least tell the world that their data exists!

• We need a culture change – but it’s already happening!

http://www.keepcalm-o-matic.co.uk/default.aspx#createposter

Thanks!

Any questions?

@sorcha_ni

http://citingbytes.blogspot.co.uk/

Image credit: Borepatch http://borepatch.blogspot.com/2010/06/its-not-what-you-dont-know-that-hurts.html

“Publishing research without data is simply advertising, not science” - Graham Steel

http://blog.okfn.org/2013/09/03/publishing-research-without-data-is-simply-advertising-not-science/
