Matrix Completion: Fundamental Limits and Efficient Algorithms

Sewoong Oh

PhD Defense, Stanford University

July 23, 2010



Matrix completion

Find the missing entries in a huge data matrix


Example 1. Recommendation systems

[Figure: partially observed ratings matrix with $5 \cdot 10^5$ users and $2 \cdot 10^4$ movies; observed entries are ratings between 1 and 5, and "?" marks the $10^6$ queries]

Given less than 1% of the movie ratings

Goal: Predict missing ratings

Example 2. Positioning

Distance Matrix

Only distances between close-by sensors are measured

Goal: Find the sensor positions up to a rigid motion


Matrix completion

More applications:
- Computer vision: Structure-from-motion
- Molecular biology: Microarray
- Numerical linear algebra: Fast low-rank approximations
- etc.

Outline

1 Background

2 Algorithm and main results

3 Applications in positioning


Background


The model

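Low-rank factorization: $M = U \Sigma V^T$, where $M$ is $\alpha n \times n$, $U$ is $\alpha n \times r$, $\Sigma$ is $r \times r$, and $V^T$ is $r \times n$

Rank-$r$ matrix $M$

Random uniform sample set $E$

Sample matrix $M^E$

$$M^E_{ij} = \begin{cases} M_{ij} & \text{if } (i,j) \in E \\ 0 & \text{otherwise} \end{cases}$$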
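As a minimal sketch of the sampling model above, the following NumPy snippet builds a random rank-$r$ matrix and its sample matrix $M^E$. The function name `sample_matrix`, the Gaussian factors, and the use of independent sampling with probability `p` (a stand-in for a uniformly random set $E$ of fixed size) are assumptions made for illustration and do not come from the talk.

```python
import numpy as np

def sample_matrix(M, p, rng):
    """Return (mask, M_E): mask[i, j] is True when (i, j) is in E,
    and M_E equals M on E and 0 elsewhere."""
    mask = rng.random(M.shape) < p      # each entry revealed independently
    M_E = np.where(mask, M, 0.0)
    return mask, M_E

rng = np.random.default_rng(0)
alpha, n, r = 2, 50, 3                  # M is (alpha * n) x n with rank r
U = rng.standard_normal((alpha * n, r))
V = rng.standard_normal((n, r))
M = U @ V.T
mask, M_E = sample_matrix(M, p=0.2, rng=rng)
```

The mask plays the role of $E$; everything downstream only needs `mask` and `M_E`.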


Which matrices?

Pathological example

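$$M = \begin{bmatrix} 1 & 0 & \cdots & 0 \\ 0 & 0 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & 0 \end{bmatrix} = \begin{bmatrix} 1 \\ 0 \\ \vdots \\ 0 \end{bmatrix} \cdot [1] \cdot \begin{bmatrix} 1 & 0 & \cdots & 0 \end{bmatrix}$$

[Candes, Recht ’08] $M = U \Sigma V^T$ has coherence $\mu$ if

A0. $$\max_{1 \le i \le \alpha n} \sum_{k=1}^{r} U_{ik}^2 \le \mu \frac{r}{n}, \qquad \max_{1 \le j \le n} \sum_{k=1}^{r} V_{jk}^2 \le \mu \frac{r}{n}$$

A1. $$\max_{i,j} \Big| \sum_{k=1}^{r} U_{ik} V_{jk} \Big| \le \mu \frac{\sqrt{r}}{n}$$

Intuition
- $\mu$ is small if singular vectors are well balanced
- We need low-coherence for matrix completion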
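To make the intuition concrete, here is a small sketch that computes the smallest $\mu$ satisfying condition A0 as it is written on the slide. It assumes that `U` and `V` have orthonormal columns; the function name `coherence_a0` is hypothetical. The pathological matrix $e_1 e_1^T$ is compared with a rank-1 matrix whose singular vectors are constant.

```python
import numpy as np

def coherence_a0(U, V):
    """Smallest mu satisfying A0 as written on the slide: every row of
    U and of V has squared norm at most mu * r / n, where n is the
    number of columns of M (the number of rows of V)."""
    n, r = V.shape
    row_norms_u = np.sum(U ** 2, axis=1)
    row_norms_v = np.sum(V ** 2, axis=1)
    return (n / r) * max(row_norms_u.max(), row_norms_v.max())

n = 100

# Pathological example: M = e1 e1^T, so U = V = e1.
e1 = np.zeros((n, 1))
e1[0, 0] = 1.0
mu_bad = coherence_a0(e1, e1)

# Well-balanced example: constant unit-norm singular vectors.
flat = np.full((n, 1), 1.0 / np.sqrt(n))
mu_good = coherence_a0(flat, flat)
```

Under these assumptions `mu_bad` grows linearly with `n`, while `mu_good` stays at 1 regardless of `n`.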


Previous work

Rank minimization (NP-hard)

$$\text{minimize } \operatorname{rank}(X) \quad \text{subject to } X_{ij} = M_{ij}, \ (i,j) \in E$$

Heuristic [Fazel ’02] (convex relaxation)

$$\text{minimize } \|X\|_* \quad \text{subject to } X_{ij} = M_{ij}, \ (i,j) \in E$$

Nuclear norm

$$\|X\|_* = \sum_{i=1}^{n} \sigma_i(X)$$

Can be solved using Semidefinite Programming (SDP)
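The following sketch contrasts the two objectives on a tiny matrix: the rank counts the nonzero singular values, while the nuclear norm sums them. The helper name `nuclear_norm` and the example matrix are illustrative assumptions; NumPy's built-in `np.linalg.norm(X, 'nuc')` computes the same quantity.

```python
import numpy as np

def nuclear_norm(X):
    """Sum of the singular values of X."""
    return np.linalg.svd(X, compute_uv=False).sum()

X = np.diag([3.0, 2.0, 0.0])            # singular values 3, 2, 0
rank_X = np.linalg.matrix_rank(X)       # number of nonzero singular values
norm_X = nuclear_norm(X)                # sum of singular values
```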


Previous work

[Candes, Recht ’08]

- Nuclear norm minimization reconstructs $M$ exactly with high probability, if

$$|E| \ge C \mu r n^{6/5} \log n$$

- Surprise? Degrees of freedom $\simeq (1 + \alpha) r n$
- Open questions
  - Optimality: Do we need $n^{6/5} \log n$ samples?
  - Complexity: SDP is computationally expensive
  - Noise: Cannot deal with noise

A new approach to Matrix Completion: OptSpace

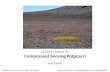

Example: $2000 \times 2000$ rank-8 random matrix

[Figure: four panels showing the low-rank matrix $M$, the sampled matrix $M^E$, the OptSpace output $\hat{M}$, and the squared error $(M - \hat{M})^2$; the panels are repeated for 0.25%, 0.50%, 0.75%, 1.00%, 1.25%, 1.50%, and 1.75% of the entries sampled]

Algorithm


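Naïve approach

Singular Value Decomposition (SVD)

$$M^E = \sum_{i=1}^{n} \sigma_i x_i y_i^T$$

Compute rank-$r$ approximation $M_{\mathrm{SVD}}$

$$M_{\mathrm{SVD}} \triangleq \frac{\alpha n^2}{|E|} \sum_{i=1}^{r} \sigma_i x_i y_i^T$$

[Figure: $M^E$ and $M_{\mathrm{SVD}}$]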
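A minimal sketch of the rescaled rank-$r$ projection defined above, using a dense SVD for simplicity. The function name `rescaled_svd` is hypothetical, and a practical implementation for large sparse inputs would use a sparse partial SVD instead.

```python
import numpy as np

def rescaled_svd(M_E, num_samples, r):
    """Rank-r projection of M_E, rescaled by (number of entries) / |E|."""
    m, n = M_E.shape                    # m plays the role of alpha * n
    X, sigma, Yt = np.linalg.svd(M_E, full_matrices=False)
    scale = m * n / num_samples         # alpha * n^2 / |E|
    return scale * (X[:, :r] * sigma[:r]) @ Yt[:r, :]

# Sanity check: when every entry is observed, the scale factor is 1 and a
# rank-2 matrix is returned unchanged.
rng = np.random.default_rng(0)
M = rng.standard_normal((40, 2)) @ rng.standard_normal((2, 30))
M_svd = rescaled_svd(M, num_samples=M.size, r=2)
```

The rescaling compensates for the zeros that stand in for unobserved entries, which shrink the singular values of $M^E$ roughly in proportion to the sampling rate.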

Naïve approach fails

[Figure: $M^E$ and the rank-$r$ approximation $M_{\mathrm{SVD}}$ computed from it by the rescaled SVD]

Trimming

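$$\widetilde{M}^E_{ij} = \begin{cases} 0 & \text{if } \deg(\mathrm{row}_i) > 2|E|/(\alpha n) \\ 0 & \text{if } \deg(\mathrm{col}_j) > 2|E|/n \\ M^E_{ij} & \text{otherwise} \end{cases}$$

$\deg(\cdot)$ is the number of samples in that row/column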
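The trimming rule can be sketched in a few lines of NumPy. The function name `trim` and the boolean `mask` representation of $E$ are assumptions for illustration. Rows and columns whose sample count exceeds twice the average are set to zero.

```python
import numpy as np

def trim(M_E, mask):
    """Zero out rows and columns whose sample count exceeds twice the average."""
    m, n = mask.shape                   # m plays the role of alpha * n
    num_samples = mask.sum()
    row_deg = mask.sum(axis=1)
    col_deg = mask.sum(axis=0)
    heavy_rows = row_deg > 2 * num_samples / m
    heavy_cols = col_deg > 2 * num_samples / n
    trimmed = M_E.copy()
    trimmed[heavy_rows, :] = 0.0
    trimmed[:, heavy_cols] = 0.0
    return trimmed

# Toy example: row 0 is fully sampled, every other row has a single sample.
mask = np.zeros((4, 4), dtype=bool)
mask[0, :] = True
mask[1, 1] = mask[2, 2] = mask[3, 3] = True
M_E = np.where(mask, 1.0, 0.0)
trimmed = trim(M_E, mask)
```

Here $|E| = 7$, so the row threshold is $2 \cdot 7 / 4 = 3.5$; only row 0 (degree 4) is trimmed, and no column exceeds the threshold.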


Algorithm

OptSpace

Input: sample indices $E$, sample values $M^E$, rank $r$

Output: estimate $\hat{M}$

1: Trimming
2: Compute $M_{\mathrm{SVD}}$ using SVD
3: Greedy minimization of the residual error

$M_{\mathrm{SVD}}$ can be computed efficiently for sparse matrices


Main results

Theorem

For any $|E|$, $M_{\mathrm{SVD}}$ achieves, with high probability,

$$\mathrm{RMSE} \le C M_{\max} \sqrt{\frac{n r}{|E|}}$$

where

$$\mathrm{RMSE} = \Big( \frac{1}{\alpha n^2} \sum_{i,j} (M - M_{\mathrm{SVD}})_{ij}^2 \Big)^{1/2}, \qquad M_{\max} \triangleq \max_{i,j} |M_{ij}|$$

[Achlioptas, McSherry ’07]

If $|E| \ge n (8 \log n)^4$, with high probability,

$$\mathrm{RMSE} \le 4 M_{\max} \sqrt{\frac{n r}{|E|}}$$

For $n = 10^5$, $(8 \log n)^4 \simeq 7.2 \cdot 10^7$

Netflix dataset
- A single user rated 17,000 movies.
- “Miss Congeniality”: 200,000 ratings.

Keshavan, Montanari, Oh, IEEE Trans. Information Theory, 2010
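The arithmetic behind the figure $7.2 \cdot 10^7$ can be checked directly. This sketch assumes the natural logarithm (which reproduces the quoted value) and, for the comparison with the total number of entries, a square $n \times n$ matrix; the function name is hypothetical.

```python
import math

def achlioptas_mcsherry_factor(n):
    """The factor (8 log n)^4 in the condition |E| >= n (8 log n)^4."""
    return (8 * math.log(n)) ** 4

n = 10 ** 5
factor = achlioptas_mcsherry_factor(n)
required_samples = n * factor           # samples the condition asks for
total_entries = n * n                   # entries of a square n x n matrix
```

Under these assumptions `required_samples` exceeds `total_entries`, so at this size the condition cannot be met by sampling a subset of the entries.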

Can we do better?


Greedy minimization of residual error

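Starting from $(X_0, Y_0)$ for $M_{\mathrm{SVD}} = X_0 S_0 Y_0^T$, use gradient descent methods to solve

$$\text{minimize } F(X, Y) \quad \text{subject to } X^T X = I, \ Y^T Y = I$$

$$F(X, Y) \triangleq \min_{S \in \mathbb{R}^{r \times r}} \sum_{(i,j) \in E} \big( M^E_{ij} - (X S Y^T)_{ij} \big)^2$$

[Figure: rank-$r$ factorization $X S Y^T$]

Can be computed efficiently for sparse matrices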
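The inner minimization over $S$ is a linear least-squares problem, because $(X S Y^T)_{ij}$ is linear in the entries of $S$. The sketch below evaluates $F(X, Y)$ this way. It covers only the cost evaluation, not the gradient descent over $(X, Y)$, and the function name `residual_cost` and the index-array representation of $E$ are assumptions for illustration.

```python
import numpy as np

def residual_cost(X, Y, rows, cols, values):
    """F(X, Y): minimum over S of the squared error on the sampled entries.

    (X S Y^T)_ij = sum_{a,b} X[i, a] * S[a, b] * Y[j, b] is linear in S,
    so the minimization is ordinary least squares in the r*r entries of S."""
    r = X.shape[1]
    # Row t of A holds the products X[i_t, a] * Y[j_t, b], flattened over (a, b).
    A = (X[rows][:, :, None] * Y[cols][:, None, :]).reshape(len(rows), r * r)
    s, *_ = np.linalg.lstsq(A, values, rcond=None)
    cost = np.sum((values - A @ s) ** 2)
    return cost, s.reshape(r, r)

# Example: with the true factors, the cost is zero and S is recovered.
rng = np.random.default_rng(0)
X0, _ = np.linalg.qr(rng.standard_normal((30, 2)))   # orthonormal columns
Y0, _ = np.linalg.qr(rng.standard_normal((20, 2)))
S_true = np.array([[3.0, 0.5], [0.0, 1.0]])
M = X0 @ S_true @ Y0.T
mask = rng.random(M.shape) < 0.5
rows, cols = np.nonzero(mask)
cost, S_hat = residual_cost(X0, Y0, rows, cols, M[rows, cols])
```

Only the sampled entries enter the computation, which is why the cost stays cheap when $E$ is sparse.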


Main results

Theorem (Trimming+SVD)

$M_{\mathrm{SVD}}$ achieves, with high probability,

$$\mathrm{RMSE} \le C M_{\max} \sqrt{\frac{n r}{|E|}}$$

Theorem (Trimming+SVD+Greedy minimization)

OptSpace reconstructs $M$ exactly, with high probability, if

$$|E| \ge C \mu r n \max\{\mu r, \log n\}$$

Keshavan, Montanari, Oh, IEEE Trans. Information Theory, 2010

OptSpace is order-optimal

Theorem

If $\mu$ and $r$ are bounded, OptSpace reconstructs $M$ exactly, with high probability, if

$$|E| \ge C n \log n$$

Lower bound (coupon collector’s problem): If $|E| \le C' n \log n$, then exact reconstruction is impossible

Nuclear norm minimization: [Candes, Recht ’08, Candes, Tao ’09, Recht ’09, Gross et al. ’09]

If $|E| \ge C'' n (\log n)^2$, then exact reconstruction by SDP
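The coupon-collector lower bound can be illustrated with a short calculation: a row that receives no sample cannot be reconstructed, and the expected number of such rows changes sharply around $n \log n$ samples. The sketch assumes a square $n \times n$ matrix and sampling with replacement, which are simplifications; the function name is hypothetical.

```python
import math

def expected_empty_rows(n, num_samples):
    """Expected number of rows of an n x n matrix that receive no sample
    when num_samples entries are drawn uniformly at random with replacement."""
    return n * (1.0 - 1.0 / n) ** num_samples

n = 10 ** 4
below = expected_empty_rows(n, int(0.5 * n * math.log(n)))   # |E| = 0.5 n log n
above = expected_empty_rows(n, int(2.0 * n * math.log(n)))   # |E| = 2 n log n
```

With $|E| = c \, n \log n$ the expectation is roughly $n^{1-c}$: about $\sqrt{n}$ empty rows for $c = 0.5$, and a vanishing number for $c = 2$.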


Comparison

$1000 \times 1000$ rank-10 matrix $M$

[Figure: success probability $P_{\mathrm{success}}$ (0 to 1) versus sampling rate (0 to 0.14) for the Fundamental Limit, OptSpace, FPCA, SVT, and ADMiRA]

Fundamental Limit [Singer, Cucuringu ’09], FPCA [Ma, Goldfarb, Chen ’09], SVT [Cai, Candes, Shen ’08], ADMiRA [Lee, Bresler ’09]

Story so far

OptSpace reconstructs $M$ from a few sampled entries, when $M$ is exactly low-rank and samples are exact

In reality,
- $M$ is only approximately low-rank
- samples are corrupted by noise

The model with noise

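Low-rank factorization: $M = U \Sigma V^T$, where $M$ is $\alpha n \times n$, $U$ is $\alpha n \times r$, $\Sigma$ is $r \times r$, and $V^T$ is $r \times n$

Rank-$r$ matrix $M$

Random sample set $E$

Sample noise $Z^E$

Sample matrix $N^E = M^E + Z^E$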


Main results

Theorem

For $|E| \ge C \mu r n \max\{\mu r, \log n\}$, OptSpace achieves, with high probability,

$$\mathrm{RMSE} \le C' \frac{n \sqrt{r}}{|E|} \|Z^E\|_2,$$

provided that the RHS is smaller than $\sigma_r(M)/n$.

$\|\cdot\|_2$ is the spectral norm

Keshavan, Montanari, Oh, Journ. Machine Learning Research, 2010

OptSpace is order-optimal when noise is i.i.d. Gaussian

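Theorem

For $|E| \ge C \mu r n \max\{\mu r, \log n\}$, OptSpace achieves, with high probability,

$$\mathrm{RMSE} \le C' \sigma_z \sqrt{\frac{r n}{|E|}}$$

provided that the RHS is smaller than $\sigma_r(M)/n$.

Lower bound: [Candes, Plan ’09]

$$\mathrm{RMSE} \ge \sigma_z \sqrt{\frac{2 r n}{|E|}}$$

Trimming + SVD

$$\mathrm{RMSE} \le \underbrace{C M_{\max} \sqrt{\frac{r n}{|E|}}}_{\text{missing entries}} + \underbrace{C' \sigma_z \sqrt{\frac{r n}{|E|}}}_{\text{sample noise}}$$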
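As a worked example of the lower bound, the sketch below evaluates $\sigma_z \sqrt{2 r n / |E|}$ for a $500 \times 500$ rank-4 matrix with $\sigma_z = 1$, the setting used in the comparison that follows. The function name and the chosen sampling rates are assumptions for illustration.

```python
import math

def noise_lower_bound(sigma_z, r, n, num_samples):
    """Lower bound sigma_z * sqrt(2 r n / |E|) on the RMSE."""
    return sigma_z * math.sqrt(2 * r * n / num_samples)

n, r, sigma_z = 500, 4, 1.0
# |E| = rate * n^2 for a square n x n matrix.
bounds = {
    rate: noise_lower_bound(sigma_z, r, n, rate * n * n)
    for rate in (0.2, 0.6, 1.0)
}
```

For instance, at a sampling rate of 0.2 we have $|E| = 50{,}000$ and the bound is $\sqrt{4000 / 50000} = \sqrt{0.08} \approx 0.283$; with every entry observed it drops to $\sqrt{0.016} \approx 0.126$.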

Comparison

$500 \times 500$ rank-4 matrix $M$, Gaussian noise with $\sigma_z = 1$

Example from [Candes, Plan ’09]

[Figure: RMSE (0 to 1.5) versus sampling rate (0.2 to 1) for the Lower Bound, OptSpace, Trim+SVD, FPCA, and ADMiRA]

FPCA [Ma, Goldfarb, Chen ’09], ADMiRA [Lee, Bresler ’09]

Positioning


The model

[Figure: devices in a region, each with radio range $R$, and the resulting Distance Matrix $D$]

$n$ wireless devices uniformly distributed in a bounded convex region

Distance measurements between devices within radio range $R$

Find the locations up to a rigid motion

The model

How is it related to Matrix Completion?
- Need to find the missing entries
- $\operatorname{rank}(D) = 4$

How is it different?
- Non-uniform sampling
- Rich information not used in Matrix Completion
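The rank claim can be checked numerically under the assumption, not spelled out on the slide, that $D$ holds squared Euclidean distances between points in the plane. In that case $D_{ij} = \|x_i\|^2 + \|x_j\|^2 - 2 x_i^T x_j$, which is a sum of terms of rank 1, 1, and 2.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 50
points = rng.random((n, 2))                       # positions in the unit square
diff = points[:, None, :] - points[None, :, :]
D = np.sum(diff ** 2, axis=2)                     # squared Euclidean distances
rank_D = np.linalg.matrix_rank(D)
```

For points in general position in two dimensions the computed rank is 4, independent of `n`.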

The model

MDS-MAP [Shang et al. ’03]

1. Fill in the missing entries with shortest paths
2. Compute rank-4 approximation
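The two steps listed above can be sketched as follows. This is an illustration under stated assumptions, not the reference MDS-MAP implementation: the rank-4 approximation is applied to squared distances, the measurement graph is assumed to be connected, and all function names are hypothetical. The sketch stops at the distance estimate and does not extract coordinates.

```python
import numpy as np

def shortest_path_fill(dist):
    """Floyd-Warshall: replace missing (inf) distances by shortest-path lengths."""
    d = dist.copy()
    for k in range(d.shape[0]):
        d = np.minimum(d, d[:, k:k + 1] + d[k:k + 1, :])
    return d

def rank_k_approx(A, k):
    """Best rank-k approximation of A via truncated SVD."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return (U[:, :k] * s[:k]) @ Vt[:k, :]

def mds_map_distances(points, R):
    """Estimate squared distances from measurements within radio range R."""
    diff = points[:, None, :] - points[None, :, :]
    true_dist = np.sqrt(np.sum(diff ** 2, axis=2))
    measured = np.where(true_dist <= R, true_dist, np.inf)   # out of range: missing
    filled = shortest_path_fill(measured)                    # step 1
    return rank_k_approx(filled ** 2, 4), true_dist ** 2     # step 2

# Sanity check: with R larger than the diameter of the unit square, every
# distance is measured and the estimate matches the true squared distances.
rng = np.random.default_rng(0)
points = rng.random((30, 2))
D_hat, D_true = mds_map_distances(points, R=2.0)
```

With a smaller `R`, shortest-path lengths overestimate the true distances, which is the source of the error the analysis has to control; if the graph is disconnected, the filled matrix still contains infinite entries and the SVD step is not meaningful.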

Main results

Theorem

For $R > C \sqrt{\frac{\log n}{n}}$, with high probability,

$$\mathrm{RMSE} \le \frac{C}{R} \sqrt{\frac{\log n}{n}} + o(1).$$

$$\mathrm{RMSE} = \Big( \frac{1}{n^2} \sum_{i,j} (D - \hat{D})_{ij}^2 \Big)^{1/2}$$

Lower Bound:

If $R < \sqrt{\frac{\log n}{\pi n}}$, then the graph is disconnected

Generalized to quantized measurements and distributed algorithms

We can add Greedy Minimization step

Oh, Karbasi, Montanari, Information Theory Workshop, 2010
Karbasi, Oh, ACM SIGMETRICS, 2010

Numerical simulation

[Figure: two panels plotting RMSE (0 to 0.25) against normalized radio range for n = 500, n = 1,000, and n = 2,000. The first panel has horizontal axis $R / \sqrt{\log n / (\pi n)}$ (1 to 4.5); the second has horizontal axis $R / \sqrt{1 / (\pi n)}$ (2 to 12)]

Conclusion

Matrix Completion is an important problem with many practical applications

OptSpace is an efficient algorithm for Matrix Completion

OptSpace achieves performance close to the fundamental limit


Special thanks to:

Officemates: Morteza, Yash, Jose, Raghu, Satish

Friends: Mohsen, Adel, Farshid, Fernando, Arian, Haim, Sachin, Ivana, Ahn, Cha, Choi, Rhee, Kang, Kim, Lee, Na, Park, Ra, Seok, Song

My family and Kyung Eun

Thank you!
