Learning Local Affine Representations for Texture and Object Recognition

Svetlana Lazebnik
Beckman Institute, University of Illinois at Urbana-Champaign
(joint work with Cordelia Schmid, Jean Ponce)


Jan 20, 2016



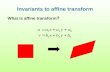

Overview

• Goal:
  – Recognition of 3D textured surfaces, object classes
• Our contribution:
  – Texture and object representations based on local affine regions
• Advantages of proposed approach:
  – Distinctive, repeatable primitives
  – Robustness to clutter and occlusion
  – Ability to approximate 3D geometric transformations

The Scope

1. Recognition of single-texture images (CVPR 2003)

2. Recognition of individual texture regions in multi-texture images (ICCV 2003)

3. Recognition of object classes (BMVC 2004, work in progress)

1. Recognition of Single-Texture Images

Affine Region Detectors

Harris detector (H), Laplacian detector (L)

Mikolajczyk & Schmid (2002), Gårding & Lindeberg (1996)

Affine Rectification Process

Patch 1, Patch 2

Rectified patches (rotational ambiguity)
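A minimal sketch of the rectification step, assuming each detected region is given as an ellipse {x : xᵀMx = 1} with a symmetric positive-definite 2×2 matrix M. This parametrization and the name `rectifying_transform` are illustrative assumptions, not taken from the slides. The matrix square root of M maps the ellipse onto the unit circle, and any rotation applied afterwards does so equally well, which is the rotational ambiguity noted above.

```python
import numpy as np

def rectifying_transform(M):
    """Return A = M^(1/2), which maps the ellipse x^T M x = 1 to the unit circle."""
    evals, evecs = np.linalg.eigh(M)  # M is assumed symmetric positive definite
    return evecs @ np.diag(np.sqrt(evals)) @ evecs.T

# Hypothetical ellipse: semi-axes 2 and 0.5, rotated by 30 degrees.
theta = np.deg2rad(30.0)
R = np.array([[np.cos(theta), -np.sin(theta)],
              [np.sin(theta),  np.cos(theta)]])
M = R @ np.diag([1 / 2.0**2, 1 / 0.5**2]) @ R.T

A = rectifying_transform(M)

# Sample points on the ellipse boundary and rectify them.
t = np.linspace(0.0, 2.0 * np.pi, 16, endpoint=False)
ellipse_pts = R @ np.vstack([2.0 * np.cos(t), 0.5 * np.sin(t)])
radii = np.linalg.norm(A @ ellipse_pts, axis=0)  # all approximately 1

# Rotational ambiguity: Q @ A rectifies equally well for any rotation Q.
Q = np.array([[0.0, -1.0],
              [1.0,  0.0]])
radii_rotated = np.linalg.norm(Q @ A @ ellipse_pts, axis=0)
```

Because the rectified patch is only defined up to this rotation, the descriptors computed on it need to be rotation-invariant.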

Rotation-Invariant Descriptors 1: Spin Images

• Based on range spin images (Johnson & Hebert 1998)
• Two-dimensional histogram: distance from center × intensity value
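The histogram described above can be sketched as follows. This is a simplified, hard-binned version under stated assumptions: intensities are scaled to [0, 1], the bin counts are arbitrary defaults, and the function name `spin_image` is illustrative. It is not the authors' implementation.

```python
import numpy as np

def spin_image(patch, n_dist_bins=10, n_int_bins=10):
    """2D histogram of (distance from center, intensity) over a square patch.

    Intensities are assumed to lie in [0, 1]. Pixels outside the inscribed
    circle are ignored so the descriptor has a circular support.
    """
    h, w = patch.shape
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    ys, xs = np.mgrid[0:h, 0:w]
    radius = min(cy, cx)
    # Distance from the patch center, normalized to [0, 1] inside the circle.
    dist = np.sqrt((ys - cy) ** 2 + (xs - cx) ** 2) / radius
    inside = dist <= 1.0
    hist, _, _ = np.histogram2d(dist[inside], patch[inside],
                                bins=[n_dist_bins, n_int_bins],
                                range=[[0.0, 1.0], [0.0, 1.0]])
    return hist / hist.sum()

rng = np.random.default_rng(0)
patch = rng.random((33, 33))
d0 = spin_image(patch)
d90 = spin_image(np.rot90(patch))  # rotated copy of the same patch
```

Since each pixel contributes only through its distance from the center and its intensity, rotating the patch about its center leaves the histogram unchanged.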

Rotation-Invariant Descriptors 2: RIFT

• Based on SIFT (Lowe 1999)
• Two-dimensional histogram: distance from center × gradient orientation
• Gradient orientation is measured relative to the direction pointing outward from the center of the patch
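A rough sketch of a RIFT-style histogram, assuming hard binning, gradient-magnitude weighting, and default bin counts chosen for illustration. None of these details are given on the slide, and the function name `rift` is hypothetical.

```python
import numpy as np

def rift(patch, n_rings=4, n_orient_bins=8):
    """2D histogram of (distance from center, relative gradient orientation)."""
    h, w = patch.shape
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    ys, xs = np.mgrid[0:h, 0:w]
    dy, dx = ys - cy, xs - cx
    dist = np.sqrt(dy ** 2 + dx ** 2) / min(cy, cx)
    gy, gx = np.gradient(patch.astype(float))  # derivatives along rows, columns
    magnitude = np.hypot(gy, gx)
    # Gradient angle minus the angle of the outward direction at each pixel.
    rel_angle = (np.arctan2(gy, gx) - np.arctan2(dy, dx)) % (2.0 * np.pi)
    inside = (dist <= 1.0) & (dist > 0.0)  # skip the center, where "outward" is undefined
    hist, _, _ = np.histogram2d(dist[inside], rel_angle[inside],
                                bins=[n_rings, n_orient_bins],
                                range=[[0.0, 1.0], [0.0, 2.0 * np.pi]],
                                weights=magnitude[inside])
    total = hist.sum()
    return hist / total if total > 0 else hist

# Toy patch whose intensity grows with distance from the center, so every
# gradient points outward and the relative orientation is close to zero.
ys, xs = np.mgrid[0:33, 0:33]
radial_patch = np.sqrt((ys - 16.0) ** 2 + (xs - 16.0) ** 2)
descriptor = rift(radial_patch)
```

Measuring orientation against the outward direction, rather than against the image axes, is what removes the dependence on patch rotation.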

Signatures and EMD

• Signatures: S = {(m_1, w_1), …, (m_k, w_k)}
  – m_i: cluster center
  – w_i: relative weight
• Earth Mover’s Distance (Rubner et al. 1998)
  – Computed from ground distances d(m_i, m'_j)
  – Can compare signatures of different sizes
  – Insensitive to the number of clusters
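One way to compute the Earth Mover's Distance between two signatures is as a small transportation linear program. The sketch below assumes both signatures have weights summing to 1 and uses Euclidean ground distance; the function name `emd` and the use of `scipy.optimize.linprog` are choices made for this example, not details from the slides.

```python
import numpy as np
from scipy.optimize import linprog

def emd(sig_a, sig_b):
    """Earth Mover's Distance between two signatures of equal total weight.

    Each signature is (centers, weights): centers has shape (k, d) and
    weights has shape (k,) and sums to 1. Ground distance is Euclidean.
    """
    (ma, wa), (mb, wb) = sig_a, sig_b
    ka, kb = len(wa), len(wb)
    # Ground distances d(m_i, m'_j), flattened row by row to match the flow vector.
    ground = np.linalg.norm(ma[:, None, :] - mb[None, :, :], axis=2).ravel()
    # Flow leaving cluster i must equal w_i; flow entering cluster j must equal w'_j.
    A_eq = np.zeros((ka + kb, ka * kb))
    for i in range(ka):
        A_eq[i, i * kb:(i + 1) * kb] = 1.0
    for j in range(kb):
        A_eq[ka + j, j::kb] = 1.0
    b_eq = np.concatenate([wa, wb])
    result = linprog(ground, A_eq=A_eq, b_eq=b_eq, bounds=(0, None))
    return result.fun

# Two toy signatures with different numbers of clusters.
sig_a = (np.array([[0.0, 0.0], [1.0, 0.0]]), np.array([0.5, 0.5]))
sig_b = (np.array([[0.0, 0.0], [1.0, 0.0], [1.0, 1.0]]), np.array([0.5, 0.25, 0.25]))
distance = emd(sig_a, sig_b)  # a quarter of the mass moves a distance of 1
```

The example compares a two-cluster signature with a three-cluster one, which a bin-by-bin histogram distance could not do directly.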

Database: Textured Surfaces

25 textures, 40 sample images each (640x480)

Evaluation

• Channels: HS, HR, LS, LR
  – Combined through addition of EMD matrices
• Classification results
  – 10 training images per class, rates averaged over 200 random training subsets
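Combining channels by adding their EMD matrices can be sketched as below. The nearest-neighbor decision rule, the toy distance values, and the function names are assumptions made for illustration; the slide states only that the matrices are added.

```python
import numpy as np

def combine_channels(emd_matrices):
    """Sum per-channel distance matrices (e.g. HS, HR, LS, LR) into one."""
    return np.sum(np.stack(emd_matrices), axis=0)

def nearest_neighbor_labels(dist_test_to_train, train_labels):
    """Assign each test image the label of its closest training image."""
    return np.asarray(train_labels)[np.argmin(dist_test_to_train, axis=1)]

# Toy data: 2 test images (rows), 3 training images (columns), 2 channels.
channel_1 = np.array([[0.2, 0.9, 0.8],
                      [0.7, 0.3, 0.6]])
channel_2 = np.array([[0.3, 0.8, 0.1],
                      [0.9, 0.2, 0.5]])
combined = combine_channels([channel_1, channel_2])
predicted = nearest_neighbor_labels(combined, ["brick", "carpet", "marble"])
```

In this toy case the second channel alone would match the first test image to "marble", while the summed distances match it to "brick".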

Comparative Evaluation

| | Our method | Varma & Zisserman (2003) |
|---|---|---|
| Spatial selection | Harris and Laplacian detectors | None (every pixel location is used) |
| Neighborhood shape selection | Affine adaptation | None (support of descriptors is fixed) |
| Descriptors | Spin images, RIFT | Raw pixel values |
| Textons | Separate set of textons for each image | Universal texton dictionary |
| Representing/comparing texton distributions | Signatures/EMD | Histograms/chi-squared distance |

Results of Evaluation: Classification rate vs. number of training samples

Plot legend: (H+L)(S+R), VZ-Joint, VZ-MRF

• Conclusion: an intrinsically invariant representation is necessary to deal with intra-class variations when they are not adequately represented in the training set

Summary

• A sparse texture representation based on local affine regions
• Two novel descriptors (spin images, RIFT)
• Successful recognition in the presence of viewpoint changes, non-rigidity, non-homogeneity
• A flexible approach to invariance

2. Recognition of Individual Regions in Multi-Texture Images

• A two-layer architecture:
  – Local appearance + neighborhood relations
• Learning:
  – Represent the local appearance of each texture class using a mixture-of-Gaussians model
  – Compute co-occurrence statistics of sub-class labels over affinely adapted neighborhoods
• Recognition:
  – Obtain initial class membership probabilities from the generative model
  – Use relaxation to refine these probabilities

Two Learning Scenarios

• Fully supervised: every region in the training image is labeled with its texture class
• Weakly supervised: each training image is labeled with the classes occurring in it

Example image labels: "brick"; "brick, marble, carpet"

Neighborhood Statistics

Neighborhood definition

Estimate:
• probability p(c,c')
• correlation r(c,c')
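The two statistics can be estimated from counts of neighboring label pairs. The sketch below assumes r(c,c') is the standard correlation coefficient between the indicator variables "region has label c" and "neighbor has label c'"; the slides do not give the formula, and the function name is hypothetical.

```python
import numpy as np

def cooccurrence_stats(pairs, n_labels):
    """Estimate p(c, c') and r(c, c') from (label, neighbor label) pairs."""
    counts = np.zeros((n_labels, n_labels))
    for c, c_prime in pairs:
        counts[c, c_prime] += 1.0
    p_joint = counts / counts.sum()
    p_first = p_joint.sum(axis=1)   # marginal of the region's own label
    p_second = p_joint.sum(axis=0)  # marginal of the neighbor's label
    cov = p_joint - np.outer(p_first, p_second)
    # Variance of a 0/1 indicator with mean p is p - p^2.
    denom = np.sqrt(np.outer(p_first - p_first ** 2, p_second - p_second ** 2))
    r = np.divide(cov, denom, out=np.zeros_like(cov), where=denom > 0)
    return p_joint, r

# Toy neighbor pairs over two sub-class labels: same-label pairs dominate.
pairs = [(0, 0), (0, 0), (1, 1), (1, 1), (0, 1), (1, 0)]
p_joint, r = cooccurrence_stats(pairs, n_labels=2)
```

With these toy counts, same-label pairs get a positive correlation and mixed pairs a negative one.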

Relaxation (Rosenfeld et al. 1976)

• Iterative process:
  – Initialized with posterior probabilities p(c|x_i) obtained from the generative model
  – For each region i and each sub-class label c, update the probability p_i(c) based on neighbor probabilities p_j(c') and correlations r(c,c')
• Shortcomings:
  – No formal guarantee of convergence
  – After the initialization, the updates to the probability values do not depend on the image data
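The update can be sketched with the classic nonlinear relaxation-labeling rule, p_i(c) ← p_i(c)(1 + q_i(c)) / Σ_c' p_i(c')(1 + q_i(c')), where q_i(c) averages Σ_c' r(c,c') p_j(c') over the neighbors j of region i. Uniform neighbor weights, the fixed iteration count, and the function names are assumptions of this example rather than details from the slides.

```python
import numpy as np

def relaxation_step(p, neighbors, r):
    """One relaxation update.

    p: (n_regions, n_labels) current probabilities p_i(c)
    neighbors: neighbors[i] is the list of regions adjacent to region i
    r: (n_labels, n_labels) correlations r(c, c') in [-1, 1]
    """
    p_new = np.empty_like(p)
    for i, nbrs in enumerate(neighbors):
        if len(nbrs) == 0:
            p_new[i] = p[i]
            continue
        # Support q_i(c): mean over neighbors j of sum_c' r(c, c') p_j(c').
        q = (p[nbrs] @ r.T).mean(axis=0)
        updated = p[i] * (1.0 + q)
        total = updated.sum()
        p_new[i] = updated / total if total > 0 else p[i]
    return p_new

def relax(p, neighbors, r, n_iters=10):
    """Run a fixed number of updates (there is no convergence test)."""
    for _ in range(n_iters):
        p = relaxation_step(p, neighbors, r)
    return p

# Toy chain of three regions; the middle one starts out weakly mislabeled.
p0 = np.array([[0.9, 0.1],
               [0.4, 0.6],
               [0.8, 0.2]])
neighbors = [[1], [0, 2], [1]]
r = np.array([[1.0, -1.0],
              [-1.0, 1.0]])
p_final = relax(p0, neighbors, r)
```

Note that `relax` never looks at the image again after `p0` is set, which is the second shortcoming listed above.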

Experiment 1: 3D Textured Surfaces

Single-texture images

Multi-texture images

T1 (brick), T2 (carpet), T3 (chair), T4 (floor 1), T5 (floor 2), T6 (marble), T7 (wood)

10 single-texture training images per class, 13 two-texture training images, 45 multi-texture test images

Effect of Relaxation on Labeling

Original image

Top: before relaxation, bottom: after relaxation

T1 (brick), T2 (carpet), T3 (chair), T4 (floor 1), T5 (floor 2), T6 (marble), T7 (wood)

(single-texture training images)

Retrieval

Successful Segmentation Examples

Unsuccessful Segmentation Examples

Experiment 2: Animals

• No manual segmentation
• Training data: 10 sample images per class
• Test data: 20 samples per class + 20 negative images

Image labels: "cheetah, background"; "zebra, background"; "giraffe, background"

Cheetah Results

Zebra Results

Giraffe Results

Future Work

• Design an improved representation using a random field framework, e.g., conditional random fields (Lafferty 2001, Kumar & Hebert 2003)
• Develop a procedure for weakly supervised learning of random field parameters
• Apply method to recognition of natural texture categories

Summary

• A two-level representation (local appearance + neighborhood relations)
• Weakly supervised learning of texture models

3. Recognition of Object Classes

The approach:
• Represent objects using multiple composite semi-local affine parts
  – More expressive than individual regions
  – Not globally rigid
• Correspondence search is key to learning and detection

Correspondence Search

• Basic operation: a two-image matching procedure for finding collections of affine regions that can be mapped onto each other using a single affine transformation
• Implementation: greedy search based on geometric and photometric consistency constraints
  – Returns multiple correspondence hypotheses
  – Automatically determines number of regions in correspondence
  – Works on unsegmented, cluttered images (weakly supervised learning)
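The geometric-consistency ingredient of such a search can be illustrated by fitting one affine transformation to a few matched region centers and testing whether further matches agree with it. This sketch covers only that check, under the assumption that regions are reduced to their center points; the greedy search itself and the photometric constraints are not reproduced, and the function names and tolerance are hypothetical.

```python
import numpy as np

def fit_affine(src, dst):
    """Least-squares affine map (A, t) with dst ≈ src @ A.T + t; needs >= 3 pairs."""
    n = len(src)
    X = np.hstack([src, np.ones((n, 1))])              # homogeneous source coordinates
    params, *_ = np.linalg.lstsq(X, dst, rcond=None)   # shape (3, 2): A.T stacked on t
    return params[:2].T, params[2]

def residuals(src, dst, A, t):
    """Distance between each mapped source point and its claimed match."""
    return np.linalg.norm(src @ A.T + t - dst, axis=1)

# Toy region centers in image 1 and their matches in image 2.
src = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 1.0]])
A_true = np.array([[1.2, 0.3],
                   [-0.2, 0.9]])
t_true = np.array([5.0, -3.0])
dst = src @ A_true.T + t_true
dst[4] += np.array([4.0, -2.5])  # simulate one spurious match

# Fit on a seed of three matches, then test all candidates against the fit.
A, t = fit_affine(src[:3], dst[:3])
consistent = residuals(src, dst, A, t) < 1e-6
```

A candidate match is kept only if it agrees with the transformation estimated from the matches already accepted, so the spurious fifth match is rejected here.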


Matching: 3D Objects

Matching: 3D Objects (closeup)

Matching: Faces

spurious match ???

Finding Symmetries

Finding Repeated Patterns and Symmetries

Learning Object Models for Recognition

• Match multiple pairs of training images to produce a set of candidate parts

• Use additional validation images to evaluate repeatability of parts and individual regions

• Retain a fixed number of parts having the best repeatability score

Recognition Experiment: Butterflies

• 16 training images (8 pairs) per class
• 10 validation images per class
• 437 test images
• 619 images total

Classes: Admiral, Swallowtail, Machaon, Monarch 1, Monarch 2, Peacock, Zebra

Butterfly Parts

Recognition

• Top 10 parts per class used for recognition
• Relative repeatability score: (total number of regions detected) / (total part size)
• Classification results (table column: total part size, smallest/largest)

Classification Rate vs. Number of Parts

Detection Results (ROC Curves)

Circles: reference relative repeatability rates. Red square: ROC equal error rate (in parentheses)

Successful Detection Examples

Training images

Test images (blue: occluded regions)

All ellipses found in the test images

Unsuccessful Detection Examples

Training images

Test images (blue: occluded regions)

All ellipses found in the test image

Summary

• Semi-local affine parts for describing structure of 3D objects
• Finding a part vocabulary:
  – Correspondence search between pairs of images
  – Validation
• Additional application:
  – Finding symmetry and repetition

Future Work

• Find a better affine region detector
• Represent, learn inter-part relations
• Evaluation: CalTech database, harder classes, etc.

Birds

Egret, Snowy Owl, Mandarin Duck, Wood Duck, Puffin

Birds: Candidate Parts

Mandarin Duck, Puffin

Objects without Characteristic Texture

(LeCun’04)

Summary of Talk

1. Recognition of single-texture images
   • Distribution of local appearance descriptors
2. Recognition of individual regions in multi-texture images
   • Local appearance + loose statistical neighborhood relations
3. Recognition of object categories
   • Local appearance + strong geometric relations

For more information: http://www-cvr.ai.uiuc.edu/ponce_grp

Issues, Extensions

• Weakly supervised learning
  – Evaluation methods?
  – Learning from contaminated data?
• Probabilistic vs. geometric approaches to invariance
• EM vs. direct correspondence search
• Training set size
• Background modeling
• Strengthening the representation
  – Heterogeneous local features
  – Automatic feature selection
  – Inter-part relations
