Identifying Rater Types in TestDaF Writing Performance Assessments 2nd Annual Conference of EALTA Voss, Norway, 02-05 June 2005 Thomas Eckes TestDaF Institute, Hagen, Germany [email protected] Overview 1. TestDaF - Writing Section 2. Rater Variability 3. Identification of Rater Types 3.1 Rater type hypothesis 3.2 Questionnaire study 3.3 Rasch analysis of criterion ratings 3.4 Two-mode clustering analysis 4. Clustering Results 5. Conclusions


Identifying Rater Types in TestDaF Writing Performance Assessments

2nd Annual Conference of EALTA

Voss, Norway, 02-05 June 2005

Thomas Eckes, TestDaF Institute, Hagen, Germany

Overview

1. TestDaF - Writing Section
2. Rater Variability
3. Identification of Rater Types
   3.1 Rater type hypothesis
   3.2 Questionnaire study
   3.3 Rasch analysis of criterion ratings
   3.4 Two-mode clustering analysis
4. Clustering Results
5. Conclusions


TestDaF - Writing Section

TestDaF: Aim and Scope

- Designed for foreign students applying for entry to an institution of higher education in Germany
- Measures German language proficiency at an intermediate to high level
- Continuously developed and evaluated at the TestDaF Institute, Hagen, Germany


All four language skills are examined in separate sections:

- Reading (60 min)
- Listening (40 min)
- Writing (60 min)
- Speaking (30 min)

In each section, task and item content are closely related to the academic context


Writing Section

- Measures the ability to produce a coherent and well-structured text
- Consists of a single complex task related to the academic context
- Raters score examinee performance according to 9 criteria
- Four-point TDN rating scale (below TDN 3, TDN 3, TDN 4, TDN 5)


Scoring Criteria

Global impression: 1. Fluency, 2. Line of thought, 3. Structure

Treatment of the task: 4. Completeness, 5. Description, 6. Argumentation

Linguistic realization: 7. Syntax, 8. Vocabulary, 9. Correctness


Rater Variability

The Rater Variability Issue

- Do raters differ in the severity with which they rate examinees?
- How interchangeable are the raters?
- Can rater training enable raters to function interchangeably?

Answers from many-facet Rasch measurement studies . . .


Rater Variability Studies – Part I: Writing Ability

| Study | Assessment | N Raters | Separation Reliability |
|---|---|---|---|
| Eckes (in press) | TestDaF Writing Section | 29 | .98 |
| Weigle (1998) | ESLPE Composition | 16 | .94 |
| Engelhard (1994) | Eighth Grade Writing Test | 15 | .87 |
| Engelhard & Myford (2003) | Advanced Placement English Literature and Composition | 605 | .71 |
| Kondo-Brown (2002) | Japanese L2 Writing | 3 | .98 |
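The separation reliability column reports how reliably raters are distinguished by severity: the share of observed variance in rater measures that is not due to measurement error. A minimal Python sketch of the usual Rasch formula, assuming each rater's logit measure and standard error are available. The function name and the example values are hypothetical, not data from any study in the table, and FACETS may differ in computational detail (e.g. population vs. sample variance).

```python
from statistics import mean, pvariance


def separation_reliability(measures, std_errors):
    """Rasch separation reliability of a facet (e.g. raters).

    R = (observed variance - mean error variance) / observed variance,
    i.e. the share of observed measure variance not due to measurement error.
    """
    observed_var = pvariance(measures)              # spread of the logit measures
    error_var = mean(se ** 2 for se in std_errors)  # average squared standard error
    if observed_var == 0:
        return 0.0                                  # no spread: nothing to separate
    true_var = max(observed_var - error_var, 0.0)   # error-corrected ("true") variance
    return true_var / observed_var


# Hypothetical severity measures (logits) and standard errors for five raters
severities = [-0.8, -0.3, 0.1, 0.4, 0.9]
errors = [0.15, 0.14, 0.15, 0.16, 0.15]
print(round(separation_reliability(severities, errors), 2))  # prints 0.93
```

Values close to 1 (as in most rows of the table) mean raters differ in severity far more than measurement error alone would explain.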


Rater Variability Studies – Part II: Speaking Ability

| Study | Assessment | N Raters | Separation Reliability |
|---|---|---|---|
| Eckes (in press) | TestDaF Speaking Section | 31 | .98 |
| Bachman, Lynch, & Mason (1995) | Spanish (LAAS) | 15 | .92 |
| Lumley & McNamara (1995) | OET Speaking Section | 13 | .89 |
| Lynch & McNamara (1998) | Speaking Skills Module (access:) | 4 | 1.00 |


Rater Variability Studies – Part III: Other

| Study | Assessment | N Raters | Separation Reliability |
|---|---|---|---|
| Eckes (2005) | Physical attractiveness | 20 | .71 |
| Lunz, Wright, & Linacre (1990) | Histology certification exam | 18 | .95 |
| Lunz & Stahl (1993) | Medical oral exam | 56 | .85 |
| Lunz & Linacre (1998) | Product quality | 43 | .89 |
| Lunz & Linacre (1998) | Job performance | 31 | .92 |


Lessons Learned So Far

- In language as well as in non-language domains, rater variability is substantial
- Rater training can raise within-rater consistency, but is unlikely to significantly reduce between-rater severity differences
- Research needs to address possible sources of rater variability


Potential Sources of Rater Variability

- Professional background
- Interpretation of scoring criteria
- Scoring styles (cognitive, reading/listening, decision-making)
- Personality traits
- Motivation
- Attitudes
- etc.


Prior Research

- Expertise effect (expert vs. lay raters, professional background, scoring proficiency)
- Differences between raters found in:
  - the weighting of performance features (Chalhoub-Deville, 1995)
  - the perception as well as the application of assessment criteria (Brown, 1995; Meiron & Schick, 2000; Schoonen, Vergeer & Eiting, 1997)
  - the use of general vs. specific performance features (Wolfe, Kao & Ranney, 1998)


These findings suggest that

a) Raters differ in their interpretation of scoring criteria, even when they have been trained in the appropriate use of these criteria

b) Raters may form groups or types that are clearly distinguishable from one another, i.e., groups that are both internally homogeneous and externally separated


Identification of Rater Types

Rater Type Hypothesis

Well-trained, operational TestDaF raters fall into types that are characterized by distinctive patterns of attaching importance to routinely used scoring criteria


Questionnaire Study

Raters
- Total sample: 83 TestDaF raters
- Writing section sample: 64 raters (13 men, 51 women)
- Mean age: 45 years (SD = 9.3)
- 81% with 10 or more years as DaF (Deutsch als Fremdsprache, German as a foreign language) teacher
- 45% with 10 or more years as DaF examiner


Ratings
- Nine scoring criteria rated according to their general importance on a 4-point scale:
  1 = less important
  2 = important
  3 = very important
  4 = extremely important


Preliminary Analysis: Many-Facet Rasch Modeling

Issues
- Did raters differ significantly in the importance attached to criteria?
- Did criteria differ significantly in perceived importance?
- Was the 4-point rating scale functioning as intended?


Facets involved
- Raters (64)
- Scoring criteria (9)

Program: FACETS (Version 3.57; Linacre, 2005)
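With only two facets (raters and criteria) and four ordered categories, the many-facet Rasch model takes the form of a rating scale model. The sketch below states that form under assumed sign conventions (not taken from the FACETS specification used in the study): θ_n is rater n's tendency to attach importance, δ_i is the perceived importance of criterion i, τ_k is the threshold between categories k−1 and k, and P_nik is the probability that rater n assigns category k to criterion i.

```latex
\ln\frac{P_{nik}}{P_{ni(k-1)}} = \theta_n + \delta_i - \tau_k, \qquad k = 2, 3, 4
```

Under this orientation, higher rater measures mean attaching more importance overall, and higher criterion measures mean a criterion is rated as more important across raters.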

Variable Map: Importance Ratings

Separation reliability: (a) raters, .82; (b) criteria, .94

[Figure: FACETS variable map with columns Logit, Rater, Criterion, and Scale, spanning about -3 to +3 logits. Top: attaching high importance, highly important (4); bottom: attaching low importance, less important (1). Criteria from highest to lowest measure: line of thought; argumentation; fluency; completeness, vocabulary, description; syntax, structure; correctness.]


Model Fit – Scoring Criteria

| Criterion | Infit (Mean-Square) |
|---|---|
| Structure | 1.12 |
| Argumentation | 1.28 |
| Fluency | 0.99 |
| Line of thought | 0.98 |
| Completeness | 1.21 |
| Description | 0.94 |
| Correctness | 0.93 |
| Syntax | 0.70 |
| Vocabulary | 0.70 |
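Infit is an information-weighted mean-square: the sum of squared residuals (observed minus model-expected rating) divided by the sum of model variances. Values near 1.0 indicate ratings as variable as the model expects; values well below 1 (such as 0.70 for syntax and vocabulary) indicate more predictable ratings; values above 1 indicate noisier ratings. A minimal Python sketch, assuming the rating scale form in which the log-odds of category k over k−1 equal η − τ_k, with η the sum of the rater and criterion measures. The thresholds are those of the four-point importance scale reported for this study; the function names and observations are hypothetical.

```python
import math

# Thresholds tau_2..tau_4 for categories 1-4, as reported for the importance scale
THRESHOLDS = [-0.99, -0.22, 1.21]


def category_probs(eta, thresholds=THRESHOLDS):
    """Rating scale model: P(category k), k = 1..K, for combined measure eta."""
    log_num = [0.0]                                # category 1 is the reference
    for tau in thresholds:
        log_num.append(log_num[-1] + (eta - tau))  # one step per higher category
    top = max(log_num)                             # subtract max for numerical stability
    weights = [math.exp(v - top) for v in log_num]
    total = sum(weights)
    return [w / total for w in weights]


def expected_and_variance(eta, thresholds=THRESHOLDS):
    """Model-expected rating and its variance (the statistical information)."""
    probs = category_probs(eta, thresholds)
    cats = range(1, len(probs) + 1)
    expected = sum(k * p for k, p in zip(cats, probs))
    variance = sum((k - expected) ** 2 * p for k, p in zip(cats, probs))
    return expected, variance


def infit_mean_square(observations, thresholds=THRESHOLDS):
    """Sum of squared residuals divided by sum of model variances.

    observations: (observed rating, eta) pairs, eta = rater measure + criterion measure.
    """
    squared_residuals = 0.0
    information = 0.0
    for rating, eta in observations:
        expected, variance = expected_and_variance(eta, thresholds)
        squared_residuals += (rating - expected) ** 2
        information += variance
    return squared_residuals / information


# Hypothetical ratings of one criterion by four raters: (rating, eta)
ratings = [(4, 1.5), (3, 0.4), (2, -0.3), (3, -0.9)]
print(round(infit_mean_square(ratings), 2))
```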


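Rating Scale

| Category | Outfit | Freq. % | Av. Meas. | Threshold |
|---|---|---|---|---|
| Extremely important (4) | 0.9 | 21% | 1.36 | 1.21 |
| Very important (3) | 1.0 | 31% | 0.34 | -0.22 |
| Important (2) | 1.5 | 26% | -0.34 | -0.99 |
| Less important (1) | 0.9 | 22% | -1.27 | --- |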

Note. Av. Meas. = Average criterion importance measure per rating category. Outfit is a mean-square statistic.
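The claim that the scale "functioned as a four-point scale" rests on diagnostics such as those in the rating scale table. A minimal Python sketch of three common checks, assuming these criteria and a cut-off of 2.0 for category outfit (both are assumptions, not the study's stated procedure): average measures rise with the category, thresholds are ordered, and no category is very noisy. The values are read from the table with the lowest category first; the function name is hypothetical.

```python
# (label, outfit mean-square, frequency %, average measure, threshold),
# lowest category first; the lowest category has no threshold
CATEGORIES = [
    ("Less important (1)", 0.9, 22, -1.27, None),
    ("Important (2)", 1.5, 26, -0.34, -0.99),
    ("Very important (3)", 1.0, 31, 0.34, -0.22),
    ("Extremely important (4)", 0.9, 21, 1.36, 1.21),
]


def check_rating_scale(categories, max_outfit=2.0):
    """Three common rating-scale checks (criteria and cut-off are assumptions)."""
    averages = [c[3] for c in categories]
    thresholds = [c[4] for c in categories if c[4] is not None]
    return {
        # higher categories should go with higher measures
        "average_measures_increase": all(a < b for a, b in zip(averages, averages[1:])),
        # thresholds should advance with the categories (no disordering)
        "thresholds_ordered": all(a < b for a, b in zip(thresholds, thresholds[1:])),
        # no category should show very noisy use
        "outfit_below_cutoff": all(c[1] < max_outfit for c in categories),
    }


print(check_rating_scale(CATEGORIES))
# {'average_measures_increase': True, 'thresholds_ordered': True, 'outfit_below_cutoff': True}
```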


Summary of Rasch Analysis Findings

- Raters showed significant differences in the importance attached to criteria
- Criteria differed significantly in their perceived importance
- The rating scale functioned as a four-point scale, and the scale categories were clearly distinguishable


Main Analysis: Two-Mode Clustering

Research Questions
- Did raters show distinctive patterns of attaching importance to particular criteria?
- Which raters and which criteria combined to form a rater type?
- How many rater types could be identified, and how strongly were they separated from one another?


Clustering Method

- "Error-variance approach" (Eckes, 1996; Eckes & Orlik, 1993)
- Main objective: joint hierarchical classification of two different sets of elements, i.e., raters and criteria
- Two-mode clusters with minimum internal heterogeneity (Delta index)
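To make the idea of a joint (two-mode) hierarchy concrete, here is a simplified Python sketch. It is not a reimplementation of the Eckes and Orlik (1993) algorithm. Assumptions: a cluster's internal heterogeneity is the mean squared deviation (MSD) of its cells (rater × criterion entries) from the largest value in the data matrix; at each step the two clusters whose union has the smallest MSD are merged; merges that would yield a cluster with only raters or only criteria are skipped; and the merged cluster's MSD is recorded as a stand-in for the Delta index. The function names are hypothetical.

```python
from itertools import combinations


def msd(matrix, rows, cols, ideal):
    """Mean squared deviation of a two-mode cluster's cells from the ideal value."""
    cells = [(matrix[i][j] - ideal) ** 2 for i in rows for j in cols]
    return sum(cells) / len(cells) if cells else 0.0


def two_mode_hierarchy(matrix):
    """Greedy agglomerative two-mode clustering (simplified sketch).

    Rows (raters) and columns (criteria) start as singleton clusters. Returns the
    fusions as (sorted rows, sorted cols, MSD of the merged cluster).
    """
    ideal = max(max(row) for row in matrix)  # assumed ideal: the matrix maximum
    clusters = [({i}, set()) for i in range(len(matrix))]
    clusters += [(set(), {j}) for j in range(len(matrix[0]))]
    history = []
    while len(clusters) > 1:
        best = None
        for a, b in combinations(range(len(clusters)), 2):
            rows = clusters[a][0] | clusters[b][0]
            cols = clusters[a][1] | clusters[b][1]
            if not rows or not cols:
                continue  # skip merges that would leave a single-mode cluster
            value = msd(matrix, rows, cols, ideal)
            if best is None or value < best[0]:
                best = (value, a, b, rows, cols)
        value, a, b, rows, cols = best
        clusters = [c for k, c in enumerate(clusters) if k not in (a, b)]
        clusters.append((rows, cols))
        history.append((sorted(rows), sorted(cols), value))
    return history


# Hypothetical 2 raters x 2 criteria: rater 0 values criterion 0, rater 1 criterion 1
for fusion in two_mode_hierarchy([[4, 1], [1, 4]]):
    print(fusion)
```

Because every fusion involves both raters and criteria, each cluster can be read as a set of raters together with the criteria that characterize them.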


- Preprocessing of the input data (i.e., columnwise duplication and reflection) to cluster important criteria separately from less important ones
- Additionally: overlapping clustering solution
- Useful reference: Everitt, B. S., Landau, S., & Leese, M. (2001). Cluster analysis (4th ed., pp. 154–161). London: Arnold.
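One way to read "columnwise duplication and reflection" is sketched below: every criterion column is copied and mirrored on the 1 to 4 importance scale, so that a low importance rating becomes a high value in the mirrored column. Clustering on high values can then group raters with criteria they consider less important; the minus-prefixed labels in the cluster solution (e.g. -struct., -syntax) would correspond to such mirrored columns. The function name and the mirroring formula low + high − x are assumptions.

```python
def duplicate_and_reflect(matrix, labels, low=1, high=4):
    """Append a mirrored copy of every column of a raters x criteria matrix.

    A mirrored value is low + high - x, so on the 1-4 importance scale a rating of 1
    becomes 4 and vice versa. Mirrored columns get a leading '-' in their label.
    """
    out = [list(row) + [low + high - x for x in row] for row in matrix]
    out_labels = list(labels) + ["-" + name for name in labels]
    return out, out_labels


# Hypothetical ratings of two criteria by three raters
ratings = [[1, 4], [3, 2], [4, 4]]
data, names = duplicate_and_reflect(ratings, ["syntax", "fluency"])
print(names)  # ['syntax', 'fluency', '-syntax', '-fluency']
print(data)   # [[1, 4, 4, 1], [3, 2, 2, 3], [4, 4, 1, 1]]
```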


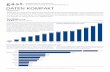

Determining the Number of Two-Mode Clusters

[Figure: Delta and rpbis (y-axis, 0 to 5) plotted against fusion step (x-axis, 1 to 81); the six-cluster solution is marked.]
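The six-cluster solution was chosen by inspecting how Delta (and rpbis) change across fusion steps. A common heuristic for such plots is to cut the hierarchy just before the largest jump in the fusion criterion. The Python sketch below implements only that heuristic; it is not necessarily the rule used in the study, and the function name and fusion values are hypothetical. In a two-mode analysis, the number of elements is the number of raters plus the number of (possibly duplicated) criterion columns.

```python
def clusters_before_largest_jump(fusion_values, n_elements):
    """Number of clusters left just before the largest increase in the fusion criterion.

    fusion_values[t - 1] is the criterion value of fusion t (t = 1, 2, ...);
    after t fusions, n_elements - t clusters remain.
    """
    if len(fusion_values) < 2:
        raise ValueError("need at least two fusion values")
    jumps = [b - a for a, b in zip(fusion_values, fusion_values[1:])]
    biggest = max(range(len(jumps)), key=jumps.__getitem__)  # jump into fusion biggest + 2
    fusions_done = biggest + 1                                # stop before that fusion
    return n_elements - fusions_done


# Hypothetical fusion values for 7 elements (6 fusions)
print(clusters_before_largest_jump([0.1, 0.2, 0.25, 0.3, 1.5, 1.7], n_elements=7))  # 3
```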

Two-Mode Clustering Solution

Overlapping solution added (a leading minus sign marks a reflected criterion, i.e., one to which raters attached less importance)

A B C D E F

45, 40, 04, 11, 21, 29, 32, 34, 35, 48, 12, 14, 38, 62, 60, 23, 24 vocab. argum. syntax compl. -struct.

41 correct.

49, 31, 37, 01, 02, 03, 06, 16, 18, 25, 36, 56 descript. struct.

47, 64, 05, 09, 26, 27, 39, 53, 50, 08, 17, 61, 59, 15, 28, 33, 52, 58, 07, 22, 44, 46, 55 fluency line of t. -correct. -descrip. -vocab. -compl.

63, 13, 54, 51, 43 -syntax -fluency

57, 19, 20, 30, 42, 10 -argum. -line of t.

29, 32 argum. line of t.

07, 10, 15, 17, 21, 24, 28, 30, 42, 44, 58, 61, 33, 48 -correct. -struct.

-struct. -syntax

01, 06, 29, 32 compl. descript. argum.

41, 31 line of t.


Cluster A: Focus on treatment of the task and linguistic realization (N = 19)

Cluster B: Focus on treatment of the task and correctness (N = 5)

Cluster C: Partial focus on global impression and treatment of the task (N = 14)

Cluster D: Focus on global impression, clearly less focus on treatment of the task and linguistic realization (N = 23)


Cluster E: Less focus on global impression and linguistic realization, particularly less focus on syntax and fluency (N = 19)

Cluster F: Less focus on a mixture of all kinds of criteria, particularly less focus on argumentation and line of thought (N = 6)


Conclusions

- Trained raters differed significantly in the importance they attached to routinely used scoring criteria
- Scoring criteria differed significantly in perceived importance

- Six rater types were identified, showing distinct patterns of scoring criteria interpretation
- Each type was characterized by a focus on a subset of scoring criteria
- Some types even evidenced complementary ways of focusing on criteria


- Ideally, all criteria carry equal weight in the scoring process; raters, however, deviated clearly from this ideal
- Rater training needs to take differential focusing types into account:
  - Some raters need to direct special attention to global impression criteria
  - Others need to attend more to criteria referring to the treatment of the task and to linguistic realization


References

Bachman, L. F., Lynch, B. K., & Mason, M. (1995). Investigating variability in tasks and rater judgements in a performance test of foreign language speaking. Language Testing, 12, 238–257.

Brown, A. (1995). The effect of rater variables in the development of an occupation-specific language performance test. Language Testing, 12, 1–15.

Chalhoub-Deville, M. (1995). Deriving oral assessment scales across different tests and rater groups. Language Testing, 12, 17–33.

Eckes, T. (1996). Recent developments in multimode clustering. In W. Gaul & D. Pfeifer (Eds.), From data to knowledge: Theoretical and practical aspects of classification, data analysis, and knowledge organization (pp. 151–158). Berlin: Springer-Verlag.

Eckes, T. (2005). Multifacetten-Rasch-Analyse von Personenbeurteilungen [Many-facet Rasch analysis of person judgments]. Manuscript submitted for publication.

Eckes, T. (in press). Examining rater effects in TestDaF writing and speaking performance assessments: A many-facet Rasch analysis. Language Assessment Quarterly.


Eckes, T., & Orlik, P. (1993). An error variance approach to two-mode hierarchical clustering. Journal of Classification, 10, 51–74.

Engelhard, G., Jr. (1994). Examining rater errors in the assessment of written composition with a many-faceted Rasch model. Journal of Educational Measurement, 31, 93–112.

Engelhard, G., Jr., & Myford, C. M. (2003). Monitoring faculty consultant performance in the Advanced Placement English Literature and Composition Program with a many-faceted Rasch model (College Board Research Report No. 2003-1). New York: College Entrance Examination Board.

Everitt, B. S., Landau, S., & Leese, M. (2001). Cluster analysis (4th ed.). London: Arnold.

Kondo-Brown, K. (2002). A FACETS analysis of rater bias in measuring Japanese second language writing performance. Language Testing, 19, 3–31.

Linacre, J. M. (1989). Many-facet Rasch measurement. Chicago: MESA Press.

Linacre, J. M. (2005). A user's guide to FACETS: Rasch-model computer programs [Software manual]. Chicago: Winsteps.com.

Linacre, J. M., & Wright, B. D. (2002). Construction of measures from many-facet data. Journal of Applied Measurement, 3, 484–509.


Lumley, T., & McNamara, T. F. (1995). Rater characteristics and rater bias: Implications for training. Language Testing, 12, 54–71.

Lunz, M. E., & Linacre, J. M. (1998). Measurement designs using multifacet Rasch modeling. In G. A. Marcoulides (Ed.), Modern methods for business research (pp. 47–77). Mahwah, NJ: Erlbaum.

Lunz, M. E., & Stahl, J. A. (1993). The effect of rater severity on person ability measure: A Rasch model analysis. American Journal of Occupational Therapy, 47, 311–317.

Lunz, M. E., Wright, B. D., & Linacre, J. M. (1990). Measuring the impact of judge severity on examination scores. Applied Measurement in Education, 3, 331–345.

Lynch, B. K., & McNamara, T. F. (1998). Using G-theory and many-facet Rasch measurement in the development of performance assessments of the ESL speaking skills of immigrants. Language Testing, 15, 158–180.

McNamara, T. F. (1996). Measuring second language performance. London: Longman.


Meiron, B. E., & Schick, L. S. (2000). Ratings, raters and test performance: An exploratory study. In A. J. Kunnan (Ed.), Fairness and validation in language assessment (pp. 153–174). Cambridge: Cambridge University Press.

Schoonen, R., Vergeer, M., & Eiting, M. (1997). The assessment of writing ability: Expert readers versus lay readers. Language Testing, 14, 157–184.

Stahl, J. A., & Lunz, M. E. (1996). Judge performance reports: Media and message. In G. Engelhard & M. Wilson (Eds.), Objective measurement: Theory into practice (Vol. 3, pp. 113–125). Norwood, NJ: Ablex.

Weigle, S. C. (1998). Using FACETS to model rater training effects. Language Testing, 15, 263–287.

Wolfe, E. W., Kao, C.-W., & Ranney, M. (1998). Cognitive differences in proficient and nonproficient essay scorers. Written Communication, 15, 465–492.

Dr. Thomas Eckes, TestDaF Institute, University of Hagen, Feithstr. 188, 58084 Hagen.

e-mail: [email protected], web: www.testdaf.de
