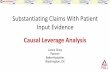

On making causal claims: A review and recommendations

John Antonakis ⁎, Samuel Bendahan, Philippe Jacquart, Rafael Lalive
Faculty of Business and Economics, University of Lausanne, Switzerland

Abstract

Social scientists often estimate models from correlational data, where the independent variable has not been exogenously manipulated; they also make implicit or explicit causal claims based on these models. When can these claims be made? We answer this question by first discussing design and estimation conditions under which model estimates can be interpreted, using the randomized experiment as the gold standard. We show how endogeneity – which includes omitted variables, omitted selection, simultaneity, common-method variance, and measurement error – renders estimates causally uninterpretable. Second, we present methods that allow researchers to test causal claims in situations where randomization is not possible or when causal interpretation could be confounded; these methods include fixed-effects panel, sample selection, instrumental variable, regression discontinuity, and difference-in-differences models. Third, we take stock of the methodological rigor with which causal claims are being made in a social sciences discipline by reviewing a representative sample of 110 articles on leadership published in the previous 10 years in top-tier journals. Our key finding is that researchers fail to address at least 66% and up to 90% of design and estimation conditions that make causal claims invalid. We conclude by offering 10 suggestions on how to improve non-experimental research. © 2010 Elsevier Inc. All rights reserved.

Keywords: Causality; Quasi-experimentation; Instrumental variables; Common-method bias; Difference-in-differences; Simultaneous equations; Monte Carlo simulations; Regression discontinuity; Mediation

Social scientists make causal claims. Some come out and say it straight, using statements like “x causes, predicts, affects, influences, explains, or is an antecedent of y” or that “y depends on x.” Others shy away from using such explicit language, choosing instead to couch their claims in suggestive language, stating that “y is associated or related to x.” Researchers must not hide from making causal claims (cf. Pearl, 2000; Shipley, 2000). Causal claims are important for society, and it is crucial to know when scientists can make them. The failsafe way to generate causal evidence is to use randomized experiments. Unfortunately, randomization is often infeasible in social science settings, and, depending on the phenomenon under investigation, results might not generalize from the laboratory to the real world. However, many recent methodological advances allow social scientists to have their causal cake and eat it (in the field!). These methods, though, have been slow to reach social science disciplines. Unfortunately, methods are still being used to estimate explicit (or implicit) causal models in design situations where the assumptions of the methods are violated, thus yielding uninformative results. Given the importance of understanding causality in non-experimental settings, the purpose of our paper is threefold: to (a) demonstrate the design and estimation conditions under which estimates can and cannot be causally interpreted (or indeed interpreted at all, even as associations), (b) review methods that will allow researchers to test causal claims in the field, particularly in situations where randomization is not possible, and (c) take stock of the methodological rigor with which causal claims are being made in leadership, which straddles the disciplines of management and applied psychology. What we care to show in this review are the necessary design and estimation conditions for causal interpretation.
Our central focus will be on the consistency of parameter estimates; by consistent we mean that the estimate regarding the presumed causal relationship converges to the correct population parameter as the sample size increases. We are concerned about the regression coefficient, $\beta$, of a particular independent variable x and whether $\beta$ accurately reflects the true treatment effect in predicting y. After model estimation, the result might seem to look good, particularly if an advanced statistical modeling program was used: the p-value of the parameter estimate is below .0001, and the model fits well because of high r-squares and, in the case of simultaneous equation models, because tests of model fit cannot reject the model. However, if certain essential design and methodological conditions are not present, the coefficient cannot be interpreted, not even in terms of an association or relation in the correlational sense. That is, the coefficient may have an allure of authenticity, but it is specious.

⁎ Corresponding author. Faculty of Business and Economics, University of Lausanne, Internef #618, CH-1015 Lausanne-Dorigny, Switzerland. Tel.: +41 21 692 3438; fax: +41 21 692 3305.

The Leadership Quarterly 21 (2010) 1086–1120. doi:10.1016/j.leaqua.2010.10.010

As we will demonstrate, correlation can mean causation in non-experimental settings if some essential design conditions are present and the appropriate statistical methods are used. Knowing the conditions under which causal claims can be made, and the practical and policy recommendations that follow from them, is one of the most important tasks entrusted to scientists. Apart from the obvious importance and implications of understanding causality in the hard sciences, correctly modeling the causal relations that explain phenomena is also crucial in the social sciences.

Calls have been made before to pay attention to the correct estimation of non-experimental causal models; the major culprit is endogeneity, where the effect of x on y cannot be interpreted because it includes omitted causes. This problem of endogeneity has been noted both in psychology (Foster & McLanahan, 1996) and management (Shaver, 1998), and these calls are being repeated (Bascle, 2008; Gennetian, Magnuson, & Morris, 2008; Larcker & Rusticus, 2010). Unfortunately, these calls have mostly fallen on deaf ears. The results of our review are similar to a recent review that found that more than 90% of papers published in the premier strategy journal (and one of the top journals in management), Strategic Management Journal (SMJ), were not correctly estimated (Hamilton & Nickerson, 2003)! Hamilton and Nickerson (2003, pp. 53–54) went on to say, “We believe that the low number of papers in SMJ that account for endogeneity may indicate a failure of empirical research in strategic management. Yet, ignoring endogeneity is perilous; the resulting parameter estimates are likely to be biased and may therefore yield erroneous results and incorrect conclusions about the veracity of theory.” Economics went through the same difficult period a couple of decades ago, and economists have improved many of their practices regarding causal inference. Nowadays in economics it is virtually impossible to publish a non-experimental study in a top general or field journal (e.g., American Economic Review, Quarterly Journal of Economics, Review of Economic Studies, Econometrica, Journal of Econometrics, and Journal of Labor Economics) without providing convincing evidence and arguments that endogeneity is not present.

Our paper is structured in three major sections, as follows: we first explain what causality is; we then introduce the counterfactual argument and explain why it is important to have a control group so that causal conclusions can be made. We look at the randomized experiment as a point of departure, showing precisely why it allows for causal claims. Although the randomized experiment is a very useful tool, sometimes experiments are impossible to do (see Cook, Shadish, & Wong, 2008; Rubin, 2008). At other times, researchers may come across a natural experiment of sorts, whose data they can exploit. We review these designs and methods and show that, when correctly implemented, they allow for causal inference in real-world settings. Unfortunately, many of these methods are rarely utilized in management and applied psychology research (cf. Grant & Wall, 2009). In our review, we borrow mostly from econometrics, which has made great strides in teasing out causal relations in non-experimental settings (try randomly assigning an economy or a company to a treatment condition!), though the natural experiment revolution has debts to pay to psychology, given the contributions of Donald T. Campbell to quasi-experimentation (see Campbell & Stanley, 1963, 1966; Cook & Campbell, 1979). Also, some of the approaches we discuss (e.g., regression discontinuity) that are popular in econometrics nowadays were originally developed by psychologists (Thistlethwaite & Campbell, 1960).

Next, we discuss the intuition and provide a step-by-step explanation behind the non-experimental causal methods; we keep statistical notation to a minimum to make our review accessible to a large audience. Although the context of our review is management and applied psychology research, the issues we present and the recommendations and conclusions we make are very general and apply to any social science, and even to the hard sciences.

Finally, similar to the recent Leadership Quarterly review of Yammarino, Dionne, Uk Chun and Dansereau (2005), who examined the state of research with respect to levels-of-analysis issues (i.e., failure to correctly theorize and model multilevel phenomena), we examined a subset of the literature published in top management and applied psychology journals making explicit or implicit causal claims about a hot social-sciences topic, leadership. The journals we included in the review were top-tier (in terms of 5-year impact factor), including: Academy of Management Journal, Journal of Applied Psychology, Journal of Management, Journal of Organizational Behavior, The Leadership Quarterly, Organizational Behavior & Human Decision Processes, and Personnel Psychology. We coded studies from these journals to determine whether the method used allowed the researchers to draw causal claims from their data. Our results indicate that the statistical procedures used are far from satisfactory. Most studies had several problems that rendered estimates suspect. We complete our review with best-practice recommendations.

1. What is causality?

We take a simple, pragmatic, and widely-shared view of causality; we are not concerned about the nature of causes or the philosophical foundations of causality (cf. Pearl, 2000), but more specifically about how to measure the effect of a cause. To measure causal effects, we need an effect (y) and a presumed cause (x). Three classic conditions must exist so as to measure this effect (Kenny, 1979):

1. x must precede y temporally;
2. x must be reliably correlated with y (beyond chance);
3. the relation between x and y must not be explained by other causes.

The first condition is rather straightforward; however, in the case of simultaneity (which we discuss later), a cause and an effect could have feedback loops. Also, simply modeling variable x as a cause merely because it is a temporal antecedent of y does not mean that it caused y (i.e., x must be exogenous too, as we discuss in detail later); thus, temporal ordering is a necessary but not a sufficient condition. The second condition requires a statistically reliable relationship (and thus quantitative data). The third condition is the one that poses the most difficulties and has to do with the exogeneity of x (i.e., that x varies randomly and is not correlated with omitted causes). Our review is essentially concerned with the first and third conditions; these conditions, particularly the third one, have less to do with theoretical arguments and more to do with design and analysis issues (see also James, Mulaik, & Brett, 1982; Mulaik & James, 1995).

If the relation between x and y is due, in part, to other reasons, then x is endogenous, and the coefficient of x cannot be interpreted, not even as a simple correlation (i.e., the magnitude of the effect could be wrong, as could its sign). The limitations often invoked in non-experimental research (that the relation between x and y might be due to y causing x, i.e., that reverse causality may be at play; that common-method variance may explain the strong relationship; or that the relationship is only an association given the non-experimental data) are moot points. If x is endogenous, the coefficient of x simply has no meaning. The true coefficient could be higher, lower, or even of a different sign.

1.1. The counterfactual argument

Suppose that we have conducted an experiment, where individuals were assigned by some method to an experimental and a control condition (x). The manipulation came before the outcome (y), and it correlates reliably with the outcome. How do we rule out other causes? There could be an infinite number of potential explanations as to why the cause correlates with the effect. To test whether a causal relation is real, the model's predictions must be examined from the counterfactual model (Morgan & Winship, 2007; Rubin, 1974; Winship & Morgan, 1999). The counterfactual asks the following questions: (a) if the individuals who received the treatment had in fact not received it, what would we observe on y for those individuals? Or, (b) if the individuals who did not receive the treatment had in fact received it, what would we have observed on y?

As will become evident later, if the experimenter uses random assignment, the individuals in the control and treatment groups are roughly equivalent at the start of the experiment; the two groups are theoretically interchangeable. So, the counterfactual for those receiving the treatment is those who did not receive it (and vice versa). The treatment effect is simply the difference in y between the treatment and control groups. In a randomized experiment, the treatment effect is correctly estimated when using a regression (or ANOVA) model.
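A minimal simulation sketch of this counterfactual logic, under hypothetical values (a true treatment effect of 2 and an unobserved "ability" trait that also drives y; neither comes from the paper). Each simulated individual carries two potential outcomes, y0 and y1, but only one is ever observed. Because assignment is a coin flip that ignores ability, the control group stands in for the treated group's missing counterfactual, and the difference in means (numerically the same as the OLS slope on a 0/1 treatment dummy) should land close to 2.

```python
import random
import statistics


def simulate_experiment(n: int, true_effect: float = 2.0, seed: int = 1) -> float:
    """Treated-minus-control difference in mean y under coin-flip assignment (hypothetical values)."""
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n):
        ability = rng.gauss(0.0, 1.0)                    # unobserved trait that also drives y
        y0 = 10.0 + 3.0 * ability + rng.gauss(0.0, 1.0)  # potential outcome if untreated
        y1 = y0 + true_effect                            # potential outcome if treated
        if rng.random() < 0.5:   # assignment is independent of ability
            treated.append(y1)   # for treated units, only y1 is observed
        else:
            control.append(y0)   # for control units, only y0 is observed
    return statistics.mean(treated) - statistics.mean(control)


print(round(simulate_experiment(200_000), 2))
```

The estimate wobbles around 2 from seed to seed, and the wobble shrinks as n grows: this is what consistency looks like when the selection process is known to be random.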

However, when the two groups of individuals are not the same on observable (or unobservable) characteristics, and one group has received a treatment, we cannot observe the counterfactuals: the groups are not interchangeable. What would the treated group's y have been had they not received the treatment, and what would the untreated group's y be had they received the treatment? The counterfactual cannot be observed because the two groups are systematically different in some ways, which obscures the effect of the treatment. To obtain consistent estimates, therefore, this selection (to treatment and control group) must be modeled. Modeling this selection correctly is what causal analysis in non-experimental settings is all about.
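The same kind of sketch, now with a hypothetical selection rule, shows what goes wrong when the groups are not interchangeable: individuals with higher (unobserved) extraversion are more likely to end up in the treatment group, and extraversion also raises y. The true effect is still 2 by construction, but under these assumed values the naive difference in means settles near 5.4, and a larger n only makes the wrong answer more precise.

```python
import random
import statistics


def naive_difference(n: int, true_effect: float = 2.0, seed: int = 2) -> float:
    """Difference in mean y when units self-select into treatment (hypothetical selection rule)."""
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n):
        extraversion = rng.gauss(0.0, 1.0)   # never observed by the analyst
        y0 = 10.0 + 3.0 * extraversion + rng.gauss(0.0, 1.0)
        y1 = y0 + true_effect
        # Assumed selection: extraverts are more likely to be treated.
        if extraversion + rng.gauss(0.0, 1.0) > 0.0:
            treated.append(y1)
        else:
            control.append(y0)
    return statistics.mean(treated) - statistics.mean(control)


for n in (1_000, 100_000):
    print(n, round(naive_difference(n), 2))
```

The gap of roughly 3.4 is the extraversion difference between the self-selected groups times its effect on y; only modeling the selection (or randomizing it away) removes it.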

Also, in this review, we focus exclusively on quantitative research because, when done correctly, it is only through this mode of inquiry that counterfactuals, and hence causality, can be reliably established. Proponents of qualitative methods have suggested that causality can also be studied using rigorous case studies and the like (J. A. Maxwell, 1996; Yin, 1994). Yin (1994), for example, compares case study research to a single experiment; although what Yin states might be intuitively appealing, a case study of a social-science phenomenon is nothing like a chemistry experiment. In the latter, the experimenters have complete control of the system of variables that are studied and can add or remove molecules or perform interventions at will (experimenters have complete experimental control). If the experiment works and can be reliably repeated (and, ideally, this reliability is analyzed statistically), then causal inference can be made.

However, in the post-hoc case study, or even one where observation is real-time, there are myriad variables, both observed and unobserved, that cannot be controlled for and thus confound results. These latter problems are the same ones that plague quantitative research; however, quantitative researchers can control for these problems if the model is correctly specified and thus accounts for the bias. Qualitative research can be useful when quantified (cf. Diamond & Robinson, 2010); however, matching patterns in observations (i.e., finding qualitative correlations) cannot lead to reliable inference if sources of bias in the apparent pattern are not controlled for and the reliability of the relation is not tested statistically (and we will not get into another limitation: observer, particularly participant-observer, confirmation bias; Nickerson, 1998).

We begin our methodological voyage with the mainstay of psychology: the randomized field experiment. A thorough understanding of the mechanics of the randomized field experiment is essential because it will be a stepping stone to exploring quasi-experimental methods that allow for causal deductions.

2. The gold standard: the randomized field experiment

This design ensures that the correlation between an outcome and a treatment is causal; more specifically, the change in the dependent variable stems from no cause other than the manipulated variable (Rubin, 2008; Shadish, Cook, & Campbell, 2002). What does random assignment actually do, and why does it allow one to draw causal conclusions?

We first draw attention to how the Ordinary Least Squares (OLS) estimator (i.e., the estimator used in regression or ANOVA-type models that minimizes the sum of squared residuals between the observations and the regression line) derives estimates for a model. For simplicity, we will only discuss a treatment and a control condition; however, the methods we discuss can be expanded to more than two conditions (e.g., we could add an alternative/bogus treatment group) and to interactions among conditions.

Assume a model where we have a dummy (binary) independent variable x reflecting a randomly assigned treatment (a manipulation such as leadership training, which is 1 if the subject received the treatment and 0 otherwise) and a continuous independent variable z, which is a covariate (e.g., IQ of leaders). This model is the typical ANCOVA model for psychologists; in an ANCOVA model, including a covariate that is strongly related to y (e.g., leadership effectiveness) reduces unexplained variance. Thus, it is desirable to include such a covariate because the power to detect a significant treatment effect increases (Keppel & Wickens, 2004; S. E. Maxwell, Cole, Arvey, & Salas, 1991). The covariate is also useful to adjust for any observed initial differences, albeit small, between the intervention and control groups that may have occurred by chance (Shadish et al., 2002). Let:

$$y_i = \beta_0 + \beta_1 x_i + \beta_2 z_i + e_i \tag{1}$$

where y is the dependent variable; i runs from 1 to n observations; $\beta_0$ is a constant (the intercept: the point where x = 0 and z = 0 and where the line, here a two-dimensional plane because the equation has two independent variables, cuts the y axis); $\beta_1$ and $\beta_2$ are the unstandardized regression coefficients of the independent variables x and z and refer to how much a one-unit change in x and z, respectively, affects y (i.e., $\beta_1 = \Delta y / \Delta x$ and $\beta_2 = \Delta y / \Delta z$); and e is a disturbance term (also known as the error term) reflecting unobserved causes of y as well as other sources of error (e.g., measurement error). The error term, which is an unobserved latent variable, must not be confused with the residual term, which is the difference between the predicted and the observed value of y. The residual term is orthogonal to the regressors regardless of whether the error term is or not.

Let us focus for the time being on x, which is the manipulated variable. When estimating the slopes (coefficients) of the independent variables, OLS makes an important assumption: that e is uncorrelated with x. This assumption is usually referred to as the orthogonality of the error term with the regressor. In other words, x is assumed to be exogenous. Exogenous means that x does not correlate with the error term (i.e., it does not correlate with omitted causes). When x is not exogenous, that is, when it is endogenous (hence the problem of endogeneity), it will correlate with the error term, and this can happen for a variety of reasons. We discuss some of these reasons in the next section.

To better understand the problem of endogeneity, suppose that extraversion is an important factor for leadership effectiveness. Now, if we assign the treatment randomly, the treatment and control conditions will, in expectation, contain equal proportions of extraverts. If we find that the treatment group is higher than the control group on effectiveness, this difference cannot be accounted for by an unmodeled potential cause (e.g., extraversion). Thus, random assignment ensures that the groups are equal, in expectation, on all observed and unobserved factors, because every individual has the same probability of being assigned to the treatment and to the control group. In this condition, the effect of x on y can be cleanly interpreted.

When x correlates with e (i.e., x is endogenous), the modeler has a serious problem, and what happens next is very undesirable: in the process of satisfying the orthogonality assumption, the estimator (whether OLS or maximum likelihood) adjusts the slope of x, $\beta_1$, accordingly. The estimate thus becomes inaccurate (because it has been changed to the extent that x correlates with e). In this case, suppose that selection to treatment was not random and that the treatment group had more extraverts; thus, x will correlate with extraversion in the sense that the level of extraversion is higher in the treatment group, and this level is correlated with y too because extraverts are usually more effective as leaders. Now, because extraversion has not been measured, x will correlate with e (i.e., with all omitted causes of y that are not expressly modeled). The higher the correlation of x with e, the more inaccurate (inconsistent) the estimate will be. In such conditions, finding a significant relation between x and y is completely useless; the estimate is not accurate because it includes the effects of unmeasured causes, and having a sample size approaching infinity will not help to correct this bias. The estimate includes not only the effect of x on y but also all other unobserved effects that correlate with x and predict y (and thus the coefficient could be biased upwards or downwards)!

We cannot stress enough how important it is to satisfy the orthogonality assumption, because not only will the coefficient of the problematic variable be inconsistent; any variables correlating with the problematic variable will also be affected (because their estimates will also be adjusted by the regression procedure to the extent that they correlate with the problematic variable). Refer to Fig. 1, which demonstrates these points graphically as path models (we explain this problem in more detail later using some basic algebra).

In a randomized field experiment, causal inference is assured (Shadish et al., 2002); that is, it is very unlikely that there could be any confounds. The correlation of the treatment with the outcome variable must be due to the treatment and nothing else. Because subjects were randomly assigned to conditions, the characteristics of subjects are (on average) approximately equal across conditions, whether they are measured or unmeasured characteristics; any differences that might be observed will be due to chance (and large differences are hence very unlikely). Having subjects that are approximately the same in the treatment and control groups allows for solid conclusions and counterfactuals. If there is a change in y, and this change is reliably (statistically) associated with the manipulated variable x, then nothing else could possibly have provoked the change in y but the treatment. Thus, in a randomized field experiment, the selection process to treatment groups is correctly modeled (it is random), and the model is estimated in accordance with the assumptions of the OLS estimator (i.e., given the random assignment, the correlation of x with e is truly zero). In other words, the assumption that OLS makes about selection is met by random assignment to treatment.

As we discuss later, if there has been systematic selection to treatment, or if any other reason may affect consistency, estimates could still be consistent if the appropriate methodological safeguards are taken. Note that there is one situation in experimental work where causality can be confounded: when the modeler attempts to link the manipulation (x) to a mediator (m) in predicting y as follows: x → m → y. In this case, the mediator is endogenous (the mediator is not randomly assigned and it depends on the manipulation; thus m cannot be modeled as exogenous). This model can only be correctly estimated using the two-stage least squares procedure we describe later; the widely used procedure recommended by Baron and Kenny (1986), which models the causal mechanism by OLS, will actually give biased estimates because it models the mediator as exogenous. We discuss this problem in depth later.
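The bias-passing point made above (and drawn as panels C and D of Fig. 1) can be checked with a minimal simulation sketch. The setup is hypothetical: the true model is y = x + z + e, x is drawn independently of e (exogenous), and z is built to correlate with e (endogenous), z = a·x + 0.8·e + noise. OLS is computed by hand from centered sums so the block needs only the standard library. With a = 0.7 (x and z correlate, panel C) the slope on x should come out near 0.66 instead of 1; with a = 0 (panel D) it should come out near 1, while the slope on z is off (near 1.49) in both cases.

```python
import random


def ols_two_regressors(x, z, y):
    """OLS slopes (b1, b2) of y on x and z, with an intercept, via centered normal equations."""
    n = len(y)
    mx, mz, my = sum(x) / n, sum(z) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    szz = sum((b - mz) ** 2 for b in z)
    sxz = sum((a - mx) * (b - mz) for a, b in zip(x, z))
    sxy = sum((a - mx) * (c - my) for a, c in zip(x, y))
    szy = sum((b - mz) * (c - my) for b, c in zip(z, y))
    det = sxx * szz - sxz ** 2
    return (szz * sxy - sxz * szy) / det, (sxx * szy - sxz * sxy) / det


def simulate_fig1(link_xz, n=100_000, seed=3):
    """Hypothetical true model y = 1*x + 1*z + e, with x exogenous and z endogenous."""
    rng = random.Random(seed)
    x, z, y = [], [], []
    for _ in range(n):
        xi = rng.gauss(0.0, 1.0)   # drawn independently of e: x is exogenous
        ei = rng.gauss(0.0, 1.0)   # error term (omitted causes of y)
        zi = link_xz * xi + 0.8 * ei + rng.gauss(0.0, 1.0)  # z correlates with e
        x.append(xi)
        z.append(zi)
        y.append(1.0 * xi + 1.0 * zi + ei)
    return ols_two_regressors(x, z, y)


b1_c, b2_c = simulate_fig1(link_xz=0.7)  # panel C: x and z correlate
b1_d, b2_d = simulate_fig1(link_xz=0.0)  # panel D: x and z do not correlate
print(f"Panel C: b1={b1_c:.2f}, b2={b2_c:.2f}")
print(f"Panel D: b1={b1_d:.2f}, b2={b2_d:.2f}")
```

Although x itself is exogenous in both runs, it inherits bias only when it is correlated with the endogenous z, which is why every regressor, not just the one of interest, has to be exogenous or corrected.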

3. Why could estimates become inconsistent?

There are many reasons why x might be endogenous (i.e., correlate with e), thus rendering estimates inconsistent. We present five threats to what Shadish et al. (2002) referred to as internal validity (i.e., threats to estimate consistency). We introduce these five threats below (for a more exhaustive list of examples, see Meyer, 1995); we then discuss the basic remedial action that can be taken. In the following section, we discuss techniques to obtain consistent estimates for more complicated models. We also address threats to inference (validity of standard errors) and model misspecification in simultaneous equation models. For a summary of these threats, refer to Table 1.

3.1. Omitted variables

Omitted variable bias comes in various forms, including omitted regressors, omitted interaction terms, and omitted polynomial terms. We discuss the simplest case first and more advanced cases later.

3.1.1. Omitting a regressor

Suppose that the correctly specified regression model is the following and includes two exogenous variables (traits); y is leader effectiveness measured on some objective scale:

$$y_i = \beta_0 + \beta_1 x_i + \beta_2 z_i + e_i \tag{2}$$
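A minimal numerical sketch of the omitted-regressor problem worked through in this subsection, under hypothetical values: $\beta_1 = 0.5$, $\beta_2 = 1$, the intercept set to 0, and z generated as z = 0.6x + u (so the slope of z on x is $\gamma_1 = 0.6$), with x, u, and e standard normal. Data are generated from Eq. (2); y is then regressed on x with and without z. With z included, the slope on x should sit near 0.5; with z dropped, it should drift toward $\beta_1 + \beta_2\gamma_1 = 1.1$, and more data does not pull it back.

```python
import random


def short_and_long_slopes(x, z, y):
    """Slope on x when z is omitted (short) and when z is included (long); both fits use an intercept."""
    n = len(y)
    mx, mz, my = sum(x) / n, sum(z) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    szz = sum((b - mz) ** 2 for b in z)
    sxz = sum((a - mx) * (b - mz) for a, b in zip(x, z))
    sxy = sum((a - mx) * (c - my) for a, c in zip(x, y))
    szy = sum((b - mz) * (c - my) for b, c in zip(z, y))
    short_slope = sxy / sxx                                        # z left out
    long_slope = (szz * sxy - sxz * szy) / (sxx * szz - sxz ** 2)  # z controlled for
    return short_slope, long_slope


def simulate_omitted_z(n=100_000, beta1=0.5, beta2=1.0, gamma1=0.6, seed=4):
    """Generate data from the correct two-regressor model (hypothetical parameter values)."""
    rng = random.Random(seed)
    x, z, y = [], [], []
    for _ in range(n):
        xi = rng.gauss(0.0, 1.0)                # x, e.g., emotional intelligence
        zi = gamma1 * xi + rng.gauss(0.0, 1.0)  # z, e.g., IQ, shares variance with x
        x.append(xi)
        z.append(zi)
        y.append(beta1 * xi + beta2 * zi + rng.gauss(0.0, 1.0))
    return short_and_long_slopes(x, z, y)


short_slope, long_slope = simulate_omitted_z()
print(f"z omitted:  slope on x = {short_slope:.2f} (beta1 + beta2*gamma1 = 1.10)")
print(f"z included: slope on x = {long_slope:.2f} (beta1 = 0.50)")
```

Setting `gamma1=0.0` or `beta2=0.0` in the call makes the two slopes agree, matching the conditions under which omitting z does no harm.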

Fig. 1. How endogeneity affects consistency. A: $\beta_1$ is consistent because x does not correlate with e. B: $\beta_2$ is inconsistent because z correlates with e. C: Although x is exogenous, $\beta_1$ is inconsistent because z, which correlates with e, correlates with x too and thus passes on the bias to x. D: $\beta_1$ is consistent even if $\beta_2$ is not, because x and z do not correlate (though this is still not an ideal situation because $\beta_2$ is not interpretable; all independent variables should be exogenous or corrected for endogeneity bias).

Assume that a researcher wants to examine whether a new construct x (e.g., emotional intelligence measured as an ability) predicts leadership effectiveness. However, this construct might not be unique; suppose it shares common variance with IQ. Thus, the researcher should control for z (i.e., IQ) too, because x and z are correlated and, of course, because z predicts y, as implied in the above model. Although one should also control for personality in the above equation, to keep things simple for the time being, assume that Eq. (2) is the correct model. Now suppose that the researcher omits z and instead estimates:

$$y_i = \phi_0 + \phi_1 x_i + v_i \tag{3}$$

Instead of obtaining the unbiased estimate $\beta_1$, one obtains $\phi_1$; these two estimates may differ significantly, as could be established by what is referred to as a Hausman (1978) test (see formula in Section 3.1.3). To see why these two estimates might not be the same, we use some basic algebra and express z as a function of x and its unique cause u. Note that the next equation does not necessarily have to be causal with respect to the relation between x and z; also, we omit the intercept for simplicity:

$$z_i = \gamma_1 x_i + u_i \tag{4}$$

Omitting z from Eq. (2) means that we have introduced endogeneity, in the sense that x correlates with a new combined error term $v_i$. The endogeneity is evident when substituting Eq. (4) into Eq. (2):

$$y_i = \beta_0 + \beta_1 x_i + \beta_2 (\gamma_1 x_i + u_i) + e_i \tag{5a}$$

Multiplying out gives:

$$y_i = \beta_0 + \beta_1 x_i + \underbrace{\beta_2 \gamma_1 x_i + \beta_2 u_i + e_i}_{v_i} \tag{5b}$$

Or, rearranging as a function of x gives:

$$y_i = \beta_0 + (\beta_1 + \beta_2 \gamma_1) x_i + \beta_2 u_i + e_i \tag{5c}$$

Whichever way we look at it, whereas the slope of x was correctly estimated in Eq. (2), it cannot be correctly estimated in Eq. (3) because, as shown in Eq. (5c), the slope will include the relation of x with z (i.e., $\gamma_1$). Thus, x correlates with the error term (as per Eq. (5b)) and is inconsistent. In the presence of omitted variable bias, one does not estimate $\beta_1$ as per Eq. (3), but something else ($\phi_1 = \beta_1 + \beta_2 \gamma_1$). Whether the estimate would go up or down when including z will depend on the signs of $\beta_2$ and $\gamma_1$. It is also clear that if $\beta_2 = 0$ or if $\gamma_1 = 0$, then $v_i$ is uncorrelated with x (when $\beta_2 = 0$ it reduces to $e_i$), and there is no omitted variable bias if z is excluded from the model.

Also, bear in mind that all other regressors that correlate with z and x will be inconsistent too when estimating the wrong model. What effect the regression coefficients capture is thus not clear when there are omitted variables, and this bias can increase or decrease the remaining coefficients or change their signs!

Thus, it is important that all possible sources of variance in y that correlate with the regressor are included in the regression model. For instance, the construct of emotional intelligence has not been adequately tested in leadership or general work

yi = 0 + 1xi + i 3

This model now omits z; because x and z correlate and z also predicts y, xwill correlate with i. In this case, instead of obtainingthe unassume that both x and z are orthogonal to personality. Now, assume that instead of the previous model one estimated thecomparing estimates to a limited information estimator (e.g., two stage-least squares).

Note: The 14 threats to validity mentioned are the criteria we used for coding the studies we reviewed.del misspecication (a) Not correlating disturbances of potentially endogenous regressors in mediation models (and nottesting for endogeneity using a Hausman test or augmented regression),

(b) Using a full information estimator (e.g., maximum likelihood, three-stage least squares) without6. Inconsistent inference (a) Using normal standard errors without examining for heteroscedasticity(b) Not using cluster-robust standard errors in panel data (i.e., multilevel hierarchical or longitudinal)

7. Mo(c) Sample (participants or survey responses) suffers from self-selection or is non-representativeultaneity (a) Reverse causality (i.e., an independent variable is potential caused by the dependent variable)asurement error (a) Including imperfectly measured variables as independent variables and notmodellingmeasurement errormon-method variance (a) Independent and dependent variables are gathered from the same rating sourcecomparing men and women leaders on leadership effectiveness where the selection process toleadership is not equivalent)itted selection (a) Comparing a treatment group to other non-equivalent groups (i.e., where the treatment group is notthe same as the other groups)

(b) Comparing entities that are grouped nominally where selection to group is endogenous (e.g.,Table 1The 14 threats to validity.

Validity threat Explanation

1. Omitted variables (a) Omitting a regressor, that is, failing to include important control variables when testing thepredictive validity of dispositional or behavioral variables (e.g., testing predictive validity ofemotional intelligence without including IQ or personality; not controlling for competingleadership styles)

(b) Omitting xed effects(c) Using random-effects without statistical justication (i.e., Hausman test)(d) In all other cases, independent variables not exogenous (if it is not clear what the controls should be)

2. Om

-

usingwouldconsis

1092 J. Antonakis et al. / The Leadership Quarterly 21 (2010) 10861120will be inconsistent to the extent that they correlate with the xed effects (which is likely). What is useful is to conceptualize theerror term eij in a xed effects model as having two components: uj, the Level 2 invariant component (that is explicitly modeledwith xed-effects), and eij, the idiosyncratic error component. To maintain a distinction between the xed-effects model and therandom-effects model, we will simply refer to the error term as eij in the xed-effect model (given that the error uj is consideredxed and not random and is explicitly modeled using dummy variables, as we show later).

Obtaining consistent estimates by including xed-effects comes at the expense of not allowing any Level 2 (rm-level)predictors because they will be perfectly collinear with the xed effects (Wooldridge, 2002). If one wants to add Level 2 variablesto themodel (and remove the xed effects) then onemust ensure that the estimator is consistent by comparing estimates from theconsistent estimator to with the more efcient one, as we discuss in the next section.

Assume we estimate a model where we have data from 50 rms and we have 10 leaders from each rm (thus we have 500observations at the leader level). Assume that leaders completed an IQ test (x) and were rated on their objective performance(adherence to budget), y. Thus, we estimate the following model for leader i in rm j:

yij = 0 + 1xij + 50

k=2kDkj + eij 6

The xed effects are captured by 49 (k1) dummy or indicator variables, D identifying the rms. Not including these dummyvariables would be a big risk to take because it is possible, indeed likely, that the xed effects are correlatedwith x (e.g., some rmsmay select leaders on their IQ) and they will most certainly predict variance in y (e.g., xed effects would capture things like rmsize, which may predict y). Thus, even though x is exogenous with respect to eij the coefcient of x will be consistent only if thedummies are included; if the dummies are not included then the eij term will include uj and thus biases estimates of x. If thedummies are not included then the modeler faces the same problem as in the previous example: omitted-variable bias. Note, if xcorrelates with eij, the remedy comes using another procedure, which we discuss later when introducing two-stage least squaresestimation.

Fixed-effects could be present for a number of reasons including group, microeconomic, macroeconomic, country-level, or timeeffects and researchers should paymore attention to these contextual effects because they can affect estimate consistency (Liden &Antonakis, 2009). Finally, when observations are nested (clustered), standard errors should not be estimated the conventionalway (refer to the section later regarding consistency of inference).

3.1.3. Using random effects without meeting assumptions of the estimatorIf the modeler wants to determine whether Level 2 variables predict y, the model could be estimated using the random-effects

estimator. The random effects estimator allows for a randomly varying intercept between rms this model is referred to as theintercepts as outcomes in multilevel modeling vernacular (Hofmann, 1997). Instead of explicitly estimating this heterogeneityvia xed effects, this estimator treats the leader level differences in y (i.e., the intercepts) as random effects between rms that aredrawn from a population of rms and assumed to be uncorrelatedwith the regressors and the disturbances; the random effects arealso assumed to be constant over rms and independently distributed. Failure to meet these assumptions will lead to inconsistentestimates and is tantamount to having omitted variable bias.OLS, any possible unobserved heterogeneity in the level (intercept) of y common to leaders in a particular rm whichotherwise have been pooled in e thus creating omitted variable bias is explicitly captured. As such, the estimator istent if the regressors are exogenous. If the xed effects correlating with Level 1 variables are not modeled, Level 1 estimatessituations; one of the reasons is that researchers fail to include important control variables like IQ, personality, sex, age, and thelike (Antonakis, 2009; Antonakis, Ashkanasy, & Dasborough, 2009; Antonakis & Dietz, 2010, in press a,b).

What if irrelevant regressors are included? It is always safer to err on the side of caution by including more than fewer controlvariables (Cameron & Trivedi, 2005). The regressors that should be included are ones that are theoretically important; the cost ofincluding them is reduced efciency (i.e., higher standard errors), but that is a cheap price to pay when consistency is at stake.Note, there are tests akin to Ramsey's (1969) regression-error-specication (RESET) test, which can be useful for testing whetherthere are unmodeled linearities present in the residuals by regressing y on the predicted value of polynomials of y (the 2nd, 3rd,and 4th powers) and the independent variables. This test is often incorrectly used as a test of omitted variables or functional formmisspecication (Wooldridge, 2002); however, the test actually looks at whether the predicted value of y is linear given thepredictors.

3.1.2. Omitting xed effectsOftentimes, researchers have panel data (repeated observations nested under an entity). Panel data can be hierarchical (e.g.,

leaders nested in rms; followers nested in leaders) or longitudinal (e.g., observations of leaders over time). Our discussion later isrelevant to both types of panels, though wewill discuss the rst form, hierarchical panels (or otherwise known as pseudo-panels).If there are xed-effects as in the case of having repeated observations of leaders (Level 1) nested in rms (Level 2), theestimates of the other regressors included in the model would be inconsistent if these xed effects are not explicitly modeled(Cameron & Trivedi, 2005; Wooldridge, 2002). By xed-effects, we mean the unobserved rm-invariant (Level 2) constant effects(or in the case of a longitudinal panel, the time-invariant panel effects) common to those leaders nested under a rm (we refer tothese effects as uj later, see Eq. (7)).

We discuss an example regarding the modeling of rm xed-effects. By explicitly modeling the xed effects (i.e., intercepts)

-

coefcassum2008)

Novariabi in r

Basof thexed-eestimaThe m

clusteSkrondeither

1093J. Antonakis et al. / The Leadership Quarterly 21 (2010) 10861120yij = 0 + 1xij + 2xj + q

k=1kzkj + eij + uj 8

yij = 0 + 1xijxj + q

k=1kzkj + wij + gj 9

In the previous two equations, the interpretation of the coefcient of the cluster mean differs; that is, in Eq. (8) it refers to thedifference in the between andwithin effects whereas in Eq. (9) it refers to the between effect (Rabe-Hesketh & Skrondal, 2008). Inboth cases, however, the estimate of 1 or 1 is consistent (and equals that of the xed-effects estimator, though the intercept willbe different in the case of Eq. (9)). Note that if Level 2 variables are endogenous, the cluster-mean trick cannot help; however,there are ways to obtain consistent estimates by exploiting the exogenous variation in Level 2 covariates (see Hausman & Taylor,1981).rs) and allow for consistent estimation of Level 1 parameters just as if xed-effects had been included (see Rabe-Hesketh &al, 2008). Thus, if the Hausman test is signicant, we could still obtain consistent estimates of the Level 1 parameters withone of the following specications (given that the cluster mean will be correlated with the covariate but not with uj):examines whether the estimate of of the efcient (RE) estimator differ signicantly from that of the consistent (FE) estimator,using the following t test (which has an asymptotic standard normal distribution):

z =FE RE

SEFE2SERE2q

This test can be extended for an array of parameters. In comparing the xed-effects to the random-effects estimator, analternative to the Hausman test is the SarganHansen test (Schaffer & Stillman, 2006), which can be used with robust or cluster-robust standard errors. Both these tests are easily implemented in Stata (see StataCorp, 2009), our software of choice. Again,because observations are nested (clustered), standard errors should not be estimated the conventional way (refer to the sectionlater regarding consistency of inference).

One way to get around the problem of omitted xed effects and to still include Level 2 variables is to include the cluster meansof all Level 1 covariates in the estimated model (Mundlak, 1978). The cluster means can be included as regressors or subtracted(i.e., cluster-mean centering) from the Level 1 covariate. The cluster means are invariant within cluster (and vary betweenically, what the Hausman test does is to compare the Level 1 estimates from the consistent (xed-effects) estimator to thoseefcient (random-effects estimator). If the estimates differ signicantly, then the efcient estimator is inconsistent and theffects estimator must be retained; the inconsistency must have come from uj correlating with the regressor. In this casetes from the random-effects estimator cannot be trusted; our leitmotif in this case is consistency always trumps efciency.ost basic Hausman test is that for one parameter, where is the element of being tested (Wooldrige, 2002). Thus, the testyij = 0 + 1xij + q

k=1kzkj + eij + uj 7

In Eq. 7, we include regressors 1 to q (e.g., rm size, type, etc.) and omit the xed-effects, but include a rm-specic errorcomponent, uj. The random effects estimator is more efcient than the xed-effects estimator because it is designed to minimizethe variance of the estimated parameters (loosely speaking it has fewer independent variables because it does not include all thedummies). But you guessed it; it comes with a hefty price in that it may not be consistent vis--vis the xed-effects estimator(Wooldridge, 2002). That is, ujmight correlate with the Level 1 regressors. To test whether the estimator is consistent, one can usewhat is commonly called a Hausman Test (see Hausman, 1978) this test, which is crucial to ensuring that the random-effectsmodel is tenable does not seem to be routinely used by researchers outside of econometrics, and not even in sociology, a domainthat is close to economics (Halaby, 2004).ients models versus the random-effects model (using a likelihood-ratio test); only if the test is signicant (i.e., theption that the slopes are xed is rejected) should the random-coefcients estimator by used (Rabe-Hesketh & Skrondal,.w, the advantage of the random-effects estimator (which could simultaneously be its Achilles heel) is that then Level 2les can be included as predictors (e.g., rm size, public vs. private organization, etc.), in the following specication for leaderm j:Also, prior to using this estimator, the modeler should test for the presence of random effects using a Breusch and PaganLagrangianmultiplier test for random effects if themodel has been estimated by GLS (Breusch & Pagan, 1980), or a likelihood-ratiotest for random effects if the model has been estimated with maximum likelihood estimation (see Rabe-Hesketh & Skrondal,2008); this is a chi-square with 1 degree of freedom and if signicant, rules in favor of the random-effects model. 
We do notdiscuss the random-coefcients model, which is direct extension of the of the random-effects model and allows varying slopesacross groups. Important to note is that before one uses such a model, one must test whether it is justied by testing the random-
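The fixed-effects logic of Eq. (6) and the cluster-mean device of Eqs. (8)–(9) can be illustrated with simulated data. The Python sketch below is a minimal illustration under assumptions chosen for the example: 50 firms with 10 leaders each (as in Section 3.1.2), a firm effect u_j that loads on x with an arbitrary weight of 0.7, and a true β1 of 0.5. It compares pooled OLS that ignores u_j, the within (firm-demeaned) estimator, which gives the same slope as including the 49 firm dummies, and pooled OLS with the firm mean of x added as a regressor, as in Eq. (8) but estimated by OLS rather than by random effects to keep the sketch short.

```python
import random
from statistics import fmean


def slope(y, x):
    """OLS slope of y on x with an intercept."""
    mx, my = fmean(x), fmean(y)
    return (sum((a - mx) * (b - my) for a, b in zip(x, y))
            / sum((a - mx) ** 2 for a in x))


def two_slopes(y, x, z):
    """OLS slopes of y on x and z with an intercept (2x2 centred normal equations)."""
    mx, mz, my = fmean(x), fmean(z), fmean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    szz = sum((c - mz) ** 2 for c in z)
    sxz = sum((a - mx) * (c - mz) for a, c in zip(x, z))
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    szy = sum((c - mz) * (b - my) for c, b in zip(z, y))
    det = sxx * szz - sxz ** 2
    return (szz * sxy - sxz * szy) / det, (sxx * szy - sxz * sxy) / det


def simulate_fixed_effects(n_firms=50, n_per_firm=10, beta1=0.5, load=0.7, seed=7):
    rng = random.Random(seed)
    firm, x, y = [], [], []
    for j in range(n_firms):
        u_j = rng.gauss(0, 1)                     # unobserved firm effect
        for _ in range(n_per_firm):
            x_ij = load * u_j + rng.gauss(0, 1)   # x correlates with u_j
            firm.append(j)
            x.append(x_ij)
            y.append(1.0 + beta1 * x_ij + u_j + rng.gauss(0, 1))
    # Firm (cluster) means of x and y, repeated for every leader in the firm
    sums = {}
    for j, xi, yi in zip(firm, x, y):
        sx, sy, c = sums.get(j, (0.0, 0.0, 0))
        sums[j] = (sx + xi, sy + yi, c + 1)
    x_bar = [sums[j][0] / sums[j][2] for j in firm]
    y_bar = [sums[j][1] / sums[j][2] for j in firm]
    pooled = slope(y, x)                                  # u_j left in the error
    within = slope([a - b for a, b in zip(y, y_bar)],
                   [a - b for a, b in zip(x, x_bar)])     # equals the dummy-variable slope
    mundlak, _ = two_slopes(y, x, x_bar)                  # x plus its firm mean
    return {"pooled": pooled, "within": within, "mundlak": mundlak}


if __name__ == "__main__":
    print(simulate_fixed_effects())
```

Under these assumptions the pooled slope should converge to about 0.5 + 0.7/1.49 ≈ 0.97, while the within and cluster-mean slopes coincide (up to rounding) and scatter around 0.5.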

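The one-parameter Hausman statistic from Section 3.1.3 can be computed directly from fixed-effects and random-effects output. The Python sketch below assumes the two point estimates and their standard errors are already available; the input values in the usage lines are hypothetical, not results from any study. It returns the statistic and a two-sided p value from the standard normal distribution, and it refuses to proceed when SE_FE² − SE_RE² is not positive, because this simple form of the test is then undefined.

```python
import math
from statistics import NormalDist


def hausman_z(b_fe, se_fe, b_re, se_re):
    """One-parameter Hausman test comparing the fixed-effects (consistent) and
    random-effects (efficient) estimates of the same Level 1 coefficient.

    Returns (z, two-sided p value); z is asymptotically standard normal under
    the null hypothesis that the random-effects estimator is consistent.
    """
    var_diff = se_fe ** 2 - se_re ** 2
    if var_diff <= 0:
        # FE should be less efficient than RE; otherwise this simple form fails
        raise ValueError("SE_FE^2 - SE_RE^2 must be positive")
    z = (b_fe - b_re) / math.sqrt(var_diff)
    p = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return z, p


if __name__ == "__main__":
    # Hypothetical FE and RE output for the coefficient of x
    z, p = hausman_z(b_fe=0.50, se_fe=0.05, b_re=0.62, se_re=0.03)
    print(f"z = {z:.2f}, p = {p:.4f}")  # prints: z = -3.00, p = 0.0027
```

With these hypothetical inputs the test is significant, so, following the rule stated above, the random-effects estimates would not be trusted and the fixed-effects estimates would be retained.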

3.1.4. Omitting selection

Selection refers to the general problem of treatment not being assigned randomly to individuals. That is, the treatment is endogenous. Assume:

$$y_i = \beta_0 + \beta_1 x_i + \beta_2 z_i + e_i \tag{10}$$

Here, x takes the value of 1 if the individual receives a treatment (e.g., attends a leadership-training program), else x is 0 (the individual has not received the treatment). Assume that y is how charismatic the individual is rated. However, assume that individuals have been selected (either self-selected or otherwise) to receive the training. That is, x, the binary variable, has not been randomly assigned, which means that the groups might not be the same at the outset on observed or unobserved factors, and these factors could be correlated with y and, of course, with x. Thus, the problem arises because x is explained by other factors (i.e., the selection can be predicted) that are not observed in Eq. (10) and are therefore subsumed in e. That is, assume that the latent propensity to receive the treatment, x*, is modeled in the following probit (or logistic) equation (Cong & Drukker, 2001):

$$x_i^{*} = \gamma_0 + \sum_{k=1}^{q} \gamma_k z_{ki} + u_i \tag{11}$$

where γ_k refers to the coefficients of regressors 1 to q and u to a disturbance term. We observe x = 1 when x* > 0 (i.e., treatment has been received), else x = 0. The problem arises because u will be correlated with e (this correlation is called ρ_e,u) and thus x will be correlated with e.

As an example, suppose that individuals who have a higher IQ (as well as some other individual differences that correlate with leadership) are more likely to attend the training; it is also likely, however, that these individuals are more charismatic. Thus, there are unmodeled sources of variance (omitted variables) subsumed in e that correlate with x. Suppose that one is highly motivated, so u_i is high, and attends training. If motivation is also correlated with charisma, then e_i will be higher too; hence the two disturbances correlate. As is evident, this problem is similar to omitted variable bias in the sense that there are excluded variables, pooled into the error term, that correlate with the endogenous choice variable and the outcome (Kennedy, 2003). If the selection is not explicitly (and correctly) modeled, then using untreated individuals to estimate the counterfactual is misleading: they differ from treated individuals with respect to things we do not know; that is, the counterfactuals are missing (and the effect of the treatment will be incorrectly estimated).

Although this problem might seem unsolvable, it is not; this model can be estimated correctly if the selection process is explicitly modeled (Cong & Drukker, 2001; Maddala, 1983). In fact, for a related type of model where y is only observed for those who received treatment (see Heckman, 1979), James Heckman won the Nobel Prize in Economics! We discuss how this model is estimated later.

Another problem that is somewhat related to selection (but has nothing to do with selection to treatment) is having non-representative selection to participation in a study or censored samples (a kind of missing-data problem). We briefly discuss the problem and suggested remedies here, given that the focus of our paper is geared more towards selection problems. Non-representativeness restricts the observed variability, which attenuates estimates. Range restriction would be an example of this problem; for example, estimating the effect of IQ on leadership in a sample that is high on IQ will bias the estimate of IQ downwards (thus, the researcher must either obtain a representative sample or correct for range restriction). Another example would be using self-selected participants for leadership training (where participants are then randomly assigned to treatment); in this case, it is possible that the participants are not representative of the population (e.g., only those who are interested in leadership volunteered to participate). Thus, the researcher should check whether the sample is representative of the population. Also, consider the case where managers participate in a survey and they select the subordinates who will rate them (the managers will probably select subordinates who like them). Thus, ideally, samples must be representative and random (for all types of studies, whether correlational or testing for group differences); if they are not, the selection process must be modeled. Other examples of this problem include censored observations above or below a certain threshold (which creates a missing-data problem on the dependent variable). Various remedies are available in such cases, for example, censored regression models (Tobin, 1958) or other kinds of truncated regression models (Long & Freese, 2006), depending on the nature of the problem at hand.

3.2. Simultaneity

This problem is a tricky one that has given many economists and other social scientists a headache. Suppose that x causes y and this relation should be negative; you regress y on x but, to your surprise, you find a non-significant relation (or even a positive effect). How can this be? If y also causes x, it is quite possible that their covariation is not negative. Simultaneity has to do with two variables simultaneously causing each other. Note, this problem is not necessarily the supposed simplistic backward causality problem often evoked by researchers (i.e., that the positive regression coefficient of x on y could be due to y causing x); it has to do with simultaneous causation, which is a different sort of problem.

Here is a simple example to demonstrate the simultaneity problem: Hiring more police officers (x) should reduce crime (y), right? However, it is also possible that when crime goes up, cities hire more police officers. Thus, x is not exogenous and will necessarily correlate with e in the y equation (see Levitt, 1997; Levitt, 2002). To make this problem more explicit, assume that x is a particular leadership style (use of sanctions) and y is follower performance (and we expect the relation, as estimated in β1, to be negative):

$$y_i = \beta_0 + \beta_1 x_i + e_i \tag{12}$$

Because leader style is not randomly assigned, it will correlate with e_i, making β1 inconsistent. Why? For one, leaders could also change their style as a function of followers' performance, leading to Eq. (13):

$$x_i = \gamma_1 y_i + u_i \tag{13}$$

We expect γ1 to be positive. Now, because we do not explain y perfectly, y varies as a function of e too; y could randomly increase (e.g., higher satisfaction of followers because of a company pay raise) or decrease (e.g., an unusually hot summer). Suppose y increases due to e; as a consequence x will vary; thus, e affects x via y in Eq. (13). In simple terms, e correlates with x, rendering β1 inconsistent. Instrumental-variable estimation can solve this problem, as we discuss later.

3.3. Measurement error (errors-in-variables)

Suppose we intend to estimate our basic specification; however, this time what we intend to observe is a latent variable, x*:

$$y_i = \beta_0 + \beta_1 x_i^{*} + e_i \tag{14}$$

However, instead of observing x*, which is exogenous and a theoretically pure or latent construct, we observe instead a not-so-perfect indicator or proxy of x*, which we call x (assume that x* is the IQ of leader i). This indicator consists of the true component (x*) in addition to an error term (u) as follows (see Cameron & Trivedi, 2005; Maddala, 1977):

$$x_i = x_i^{*} + u_i \tag{15a}$$

$$x_i^{*} = x_i - u_i \tag{15b}$$

Now substituting Eq. (15b) into Eq. (14) gives:

$$y_i = \beta_0 + \beta_1 (x_i - u_i) + e_i \tag{16}$$

Expanding and rearranging the terms gives:

$$y_i = \beta_0 + \beta_1 x_i + (e_i - \beta_1 u_i) \tag{17}$$

As is evident, the coefficient of x will be inconsistent given that the full error term, which now includes measurement error too, is correlated with x. Note that measurement error in the y variable does not bias coefficients and is not an issue because it is absorbed in the error term of the regression model.

The above discussion concerns a special kind of omitted variable bias because, by estimating the model only with x, we omit u from the model; given that u is a cause of x, this creates endogeneity of the sort that x correlates with the combined error term in Eq. (17). This bias attenuates the coefficient of x, particularly in the presence of further covariates (Angrist & Krueger, 1999); the bias will also taint the coefficients of other independent variables that are correlated with x (Bollen, 1989; Kennedy, 2003). Refer to Antonakis (2009) for an example in leadership research, where he showed that emotional intelligence was more strongly related to IQ and the big five than some have suggested (which means that failure to include these controls and failure to model measurement error will severely bias model estimates; e.g., see Antonakis & Dietz, in press-b; Fiori & Antonakis, in press). In fact, using errors-in-variables (with maximum likelihood estimation, given that he reanalyzed summary data), Antonakis (2009) showed that emotional intelligence measures were linearly dependent on the big five and intelligence, with multiple rs ranging between .48 and .76 depending on the measures used. However, this relation was vastly underestimated when ignoring multivariate effects and measurement error, leading to incorrect inference.

The effect of measurement error can be eliminated with a very simple fix: constraining the residual variance of x to (1 − reliability of x) × (variance of x) (Bollen, 1989); if reliability is unknown, the degree of validity of the indicator can be assumed from theory and the residual constrained accordingly (Hayduk, 1996; Onyskiw & Hayduk, 2001). What the modeler needs is a reasonably good estimate of the reliability (or validity) of the measure. If x were a test of IQ, for example, and we have good reason to think that IQ is exogenous, as we discuss later (see Antonakis, in press), a reasonable estimate could be the test–retest reliability or the Cronbach alpha internal consistency estimate of the scale. Otherwise, theory is the best guide to how reliable the measure is. Using this technique is very simple in the context of a regression model with a program like Stata and its eivreg (errors-in-variables) routine, or with most structural equation modeling programs using maximum likelihood estimation (e.g., Mplus). The advantage of using a program like Stata is that the eivreg least-squares estimator does not have the computational difficulties, restrictive assumptions, and sample size requirements inherent to maximum likelihood estimation, so it is useful with single-indicator (or index) measures (e.g., see Bollen, 1996; Draper & Smith, 1998; Kmenta, 1986); for multi-item measures of a latent variable, a structural equation modeling program must be used. Later, we also discuss a second way to fix the problem of measurement error, particularly if the independent variable correlates with e for other reasons beyond measurement error: two-stage least squares regression.
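For a single regressor, constraining the error variance of x to (1 − reliability) × (variance of x) amounts to dividing the naive OLS slope by the reliability. The Python sketch below illustrates this under stated assumptions: simulated data with Var(x*) = 1, a measurement-error variance of 0.5 (so the reliability is 2/3), β1 = 0.6, and a reliability that is treated as known. It is a one-regressor illustration of the errors-in-variables idea described above, not a reimplementation of Stata's eivreg, which handles several regressors.

```python
import random
from statistics import fmean


def cov(a, b):
    """Sample covariance of two equal-length sequences."""
    ma, mb = fmean(a), fmean(b)
    return sum((p - ma) * (q - mb) for p, q in zip(a, b)) / (len(a) - 1)


def simulate_errors_in_variables(n=100_000, beta1=0.6, error_var=0.5, seed=3):
    rng = random.Random(seed)
    sd_u = error_var ** 0.5
    x_star = [rng.gauss(0, 1) for _ in range(n)]               # latent x*, Var(x*) = 1
    x = [xs + rng.gauss(0, sd_u) for xs in x_star]             # Eq. (15a): x = x* + u
    y = [1.0 + beta1 * xs + rng.gauss(0, 1) for xs in x_star]  # Eq. (14)
    reliability = 1.0 / (1.0 + error_var)                      # Var(x*) / Var(x), assumed known
    var_x = cov(x, x)
    naive = cov(x, y) / var_x                                  # Eq. (17): attenuated slope
    # Fix the error variance of x at (1 - reliability) * Var(x) and remove it
    corrected = cov(x, y) / (var_x - (1.0 - reliability) * var_x)
    return {"reliability": reliability, "naive": naive, "corrected": corrected}


if __name__ == "__main__":
    print(simulate_errors_in_variables())
```

Under these assumptions the naive slope should be close to 0.6 × 2/3 = 0.4, and the corrected slope close to the true 0.6.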

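The simultaneity problem of Section 3.2 can also be checked numerically. The Python sketch below solves Eqs. (12) and (13) jointly for assumed values β0 = 1, β1 = −0.5, and γ1 = 0.8, with standard normal e and u. To show how instrumental-variable estimation (discussed later in the paper) would help, it also adds a hypothetical variable w that shifts leader style in Eq. (13) but is unrelated to e; w and its weight δ = 1 are assumptions made only for this illustration. OLS of y on x should miss the true negative effect, whereas the just-identified IV ratio cov(w, y)/cov(w, x) should recover it.

```python
import random
from statistics import fmean


def cov(a, b):
    """Sample covariance of two equal-length sequences."""
    ma, mb = fmean(a), fmean(b)
    return sum((p - ma) * (q - mb) for p, q in zip(a, b)) / (len(a) - 1)


def simulate_simultaneity(n=100_000, beta0=1.0, beta1=-0.5, gamma1=0.8,
                          delta=1.0, seed=11):
    """y depends on x (Eq. 12) and x depends on y (Eq. 13).

    w is a hypothetical instrument: it enters the x equation with weight delta,
    is independent of e, and is excluded from the y equation.
    """
    rng = random.Random(seed)
    denom = 1.0 - gamma1 * beta1      # the system is solvable only if this is non-zero
    x, y, w = [], [], []
    for _ in range(n):
        e, u, w_i = rng.gauss(0, 1), rng.gauss(0, 1), rng.gauss(0, 1)
        # Reduced form for x after substituting Eq. (12) into Eq. (13)
        x_i = (gamma1 * beta0 + gamma1 * e + delta * w_i + u) / denom
        x.append(x_i)
        y.append(beta0 + beta1 * x_i + e)  # Eq. (12)
        w.append(w_i)
    ols = cov(x, y) / cov(x, x)       # inconsistent: e reaches x through y
    iv = cov(w, y) / cov(w, x)        # just-identified IV estimator
    return {"ols": ols, "iv": iv}


if __name__ == "__main__":
    print(simulate_simultaneity())
```

Under these assumptions the OLS slope converges to about −0.08 (an essentially null relation) and the IV estimate to −0.5. Calling `simulate_simultaneity(delta=0.0)` removes the instrument's influence on x, and the OLS slope then turns positive (about 0.18), mirroring the "surprise" described in Section 3.2.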

3.4. Co

Re

1096 J. Antonakis et al. / The Leadership Quarterly 21 (2010) 10861120y could be because they both depend on q. For example, suppose raters rate their leaders on their leader style (x) and ratersare simultaneously asked to provide ratings on the leaders' effectiveness (y); given that a common source is being used it isquite likely that the source (i.e., rater) will strive to maintain consistency between the two types of ratings (Podsakoff,MacKenzie, Lee, & Podsakoff, 2003; Podsakoff & Organ, 1986) suppose due to q, which could reect causes including haloeffects from the common source (note, a source could also be a method of data gathering). Important to note is that thecommon source/method problem does not only inate estimates as most researchers believe; it could bias them upwards aswell as downwards as we will show later. As will be evident from our demonstrations, common-method variance is a veryserious problem and we disagree in the strongest possible terms with Spector (2006) that effects associated with common-method variance is simply an urban legend.

Although Podsakoff et al. (2003) suggested that the common-method variance problem biases coefcients, they did notspecically explain why the coefcient of x predicting y can be biased upwards or downwards. To our knowledge, we makethis demonstration explicit for the rst time (at least as far as the management and applied psychology literature isconcerned). We also provide an alternative solution to deal with common-method variance (i.e., two-stage least squares, asdiscussed later), particularly in situations where the common cause cannot be identied. An often-used remedy for common-methods variance problem is to obtain independent and dependent variables from different sources or different times, aremedial action which we nd satisfactory as long as the independent variables are exogenous. In the case of split-sampledesigns where half the raters rate the leader's style and the other half the leader's effectiveness (e.g., Koh, Steers, & Terborg,1995) precision of inference (i.e., standard errors) will be reduced particularly if the full sample is not large. Also, splittingmeasurement occasions across different time periods still does not fully address the problem because the common-methodvariance problem could still affect the independent variables that have been measured from the common source (refer to theend of this section).

One proposed way to deal with this problem is to include a latent common factor in the model to account for the common(omitted) variance (Loehlin, 1992; Podsakoff et al., 2003, see Figure 3A in Table 5; Widaman, 1985). Although Podsakoff, et al.suggested this method as a possible remedy and cited research that has used it as evidence of its utility, they noted that thismethod is limited in its applicability. We will go a step further and suggest that this procedure should never be used. As we willshow, one cannot remove the common bias with a latent method factor because the modeler does not know how the unmeasuredcause affects the variables (Richardson, Simmering, & Sturman, 2009). It is impossible to estimate the exact effect of the commonsource/method variance without directly measuring the common source variable and including it in the model in the correctcausal specication.

Suppose that an unmeasured common cause, degree of organizational safety and risk, affects two latent variables, as depictedin Fig. 2; this context of leadership is onewhere teammembers are exposed to danger (e.g., oil rig). We generated data for a modelwhere 1 and 2 measure subordinate ratings of a leader's style (task and person-oriented leadership respectively). The effect ofthe cause on 1 is positive (.57), that is, in a high-risk situation the leader is very task-oriented because in these situations,violation of standards could cost lives; however, for 2 the effect of the common cause is negative ( .57), that is, in high-risksituations, leaders pay less attention to being nice to subordinates. Thus, leadership style is endogenous; this explanation shouldmake it clear why leader style can never be modeled as an independent variable. When controlling for the common cause theresidual correlation between 1 and 2 is zero. The data are such that the indicators of each respective factor are tau equivalent(i.e., they have the same loadings on their respective factors) and with strong loadings (i.e., all s are .96 and are equal on theirrespective factors). We made the models tau equivalent to increase the likelihood that the model is identied when introducing alatent common method/source factor. The sample size is 10,000, and the model ts the data perfectly, according to theoveridentication test: 2(31)=32.51, pN .05 (as well as to adjunctive measures of t CFI=1.00, RMSEA=.00 which we donot care much for as we discuss later). Estimating the model without the common cause gives a biased structural estimate (acorrelation of .32 between the two latent variables), although the model ts perfectly: 2(25)=28.06, pN .05 (CFI=1.00,RMSEA=.00); hence, it is important of theoretically establishing if modeled variables are exogenous or not because amisspeciedmodel (with endogeneity) could still pass a test of t. 
Finally, when a latent common factor is included to account for the supposed common-cause effects, the model still fits well: χ²(17) = 20.32, p > .05 (CFI = 1.00, RMSEA = .00). However, the loadings and the structural parameter are severely biased. This method, which is very popular with modelers, is obviously not useful; moreover, as is evident, the misspecification is not picked up by the test of model fit. The correct model estimates could have been recovered using instrumental variables (we present this solution later for the simple case of a path model and then extend the procedure to a full structural-equation model).
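The core of the demonstration above, namely that a common cause can manufacture a correlation between two constructs that have no direct link, and that conditioning on the measured cause removes it, can be reproduced in a few lines. This is a sketch, not the authors' simulation: it skips the latent measurement model and uses hypothetical standardized effects a = 0.6 and b = −0.5 of the common cause q on two observed scores. The raw correlation should then be close to a·b = −0.30, and the partial correlation controlling for q close to zero.

```python
import math
import random


def pearson(u, v):
    """Sample Pearson correlation of two equal-length lists."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    suv = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    suu = sum((a - mu) ** 2 for a in u)
    svv = sum((b - mv) ** 2 for b in v)
    return suv / math.sqrt(suu * svv)


def partial_corr(x1, x2, q):
    """Correlation of x1 and x2 after partialling q out of both."""
    r12, r1q, r2q = pearson(x1, x2), pearson(x1, q), pearson(x2, q)
    return (r12 - r1q * r2q) / math.sqrt((1 - r1q ** 2) * (1 - r2q ** 2))


def simulate(a=0.6, b=-0.5, n=10_000, seed=1):
    """Two scores with no direct link, both driven by a common cause q."""
    rng = random.Random(seed)
    q = [rng.gauss(0, 1) for _ in range(n)]
    # Residual SDs chosen so that each score has unit variance
    x1 = [a * qi + rng.gauss(0, math.sqrt(1 - a ** 2)) for qi in q]
    x2 = [b * qi + rng.gauss(0, math.sqrt(1 - b ** 2)) for qi in q]
    return x1, x2, q


x1, x2, q = simulate()
raw = pearson(x1, x2)
resid = partial_corr(x1, x2, q)
print(f"raw r = {raw:.3f} (a*b = -0.30); partial r given q = {resid:.3f}")
```

The adjustment works here only because q is in the data set; when q is unmeasured there is nothing to condition on.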

Fig. 2. Correcting for common-source variance: the common method factor fallacy (estimates are standardized). A: this model is correctly specified. B: failing to include the common cause estimates the correlation between latent variables 1 and 2 incorrectly (0.32). C: including an unmeasured common factor estimates the loadings (which are also not significant for latent variable 1) and the correlation between latent variables 1 and 2 (0.19, not significant) incorrectly.

We first broaden Podsakoff et al.'s (2003) work to show the exact workings of common-method bias, and then present a solution to the common-method problem. We start with our basic specification, where rater i has rated leader j (n = 50 leaders) on leader style x and leader effectiveness y, and where we control for the fixed effects of firm (note that the estimator should be robust to clustering, as discussed later; also assume in what follows that we do not have random effects):

$$y^*_{ij} = \beta_0 + \beta_1 x^*_{ij} + \sum_{k=2}^{50} \beta_k D_{jk} + e_{ij} \tag{18}$$

Similar to the case of measurement error, we cannot directly observe y* or x*; what we do observe is y and x in the following respective equations (where q_ij is the common bias):

$$y_{ij} = y^*_{ij} + \gamma_y q_{ij} \tag{19}$$

$$x_{ij} = x^*_{ij} + \gamma_x q_{ij} \tag{20}$$

Rearranging the equations gives:

$$y^*_{ij} = y_{ij} - \gamma_y q_{ij} \tag{21}$$

$$x^*_{ij} = x_{ij} - \gamma_x q_{ij} \tag{22}$$

Substituting Eqs. (21) and (22) into Eq. (18) shows the following:

$$y_{ij} - \gamma_y q_{ij} = \beta_0 + \beta_1 \left( x_{ij} - \gamma_x q_{ij} \right) + \sum_{k=2}^{50} \beta_k D_{jk} + e_{ij} \tag{23}$$

Rearranging the equation gives:

$$y_{ij} = \beta_0 + \beta_1 x_{ij} + \sum_{k=2}^{50} \beta_k D_{jk} + e_{ij} - \beta_1 \gamma_x q_{ij} + \gamma_y q_{ij} \tag{24}$$

As with measurement error, common-method variance introduces a correlation between x and the error term, which now consists of three components (and cannot be eliminated by estimating the fixed effects). Unlike before with measurement error, however, the bias can attenuate or accentuate the coefficient of x. Furthermore, it is now clear that this bias cannot be eliminated unless q is directly measured (or instruments are used to purge the bias using two-stage least-squares estimation).

Thus, as we alluded to previously, the problem is not one of inflation of the variance of coefficients; it is one of consistency of coefficients. The coefficient β1 is uninterpretable because it includes the effect of q on x and y. Assuming that the researcher has no option but to gather data in a common-source way, and apart from measuring and including q directly in the model (which may be difficult to do, because q could reflect a number of causes), there is actually a rather straightforward solution to this problem, and one that, to our knowledge, will be presented for the first time to leadership, management, and applied-psychology researchers. This solution has been available to econometricians for quite some time, and we will discuss it in the section on two-stage least squares estimation.

Note: assume the case where only the independent variables (e.g., x1 and x2) suffer from common-method variance. In this case, the estimates of the two independent variables will be biased towards zero and inconsistent (though their relative contribution, β1/β2, is consistent), which can be shown as follows. Suppose:

$$y = \beta_0 + \beta_1 x^*_1 + \beta_2 x^*_2 + e \tag{25}$$

Instead of observing the latent variables x1* and x2*, we observe x1 and x2, which are assumed to have approximately the same variance and to be equally dependent on a common variable q. Thus, by substitution, it can be shown that both estimates will be biased downwards, but equally so, suggesting that their relative contribution will remain consistent:

$$y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + e - \beta_1 \gamma_x q - \beta_2 \gamma_x q \tag{26}$$

3.5. Consistency of inference

We finish this section by bringing up another threat to validity, which has to do with inference. From a statistical point of view, we mean whether the standard errors are consistent. There has been quite a bit of research in this area, particularly after the papers by Huber (1967) and White (1980); this work is extremely technical, so we will just provide a short overview of its importance and of the remedial action that can be taken to ensure correct standard errors.

In a simple experimental setting, regression residuals will usually be i.i.d. (independently and identically distributed). By identically distributed we mean that residuals are homoscedastic, that is, they have been drawn from the same population and have a uniform variance. By independently distributed we mean that they are not clustered or serially correlated (as when observations are nested under a Level 2 entity). It is always a good idea, however, to check whether residuals are homoscedastic. Whether they are clustered is usually evident from the data-gathering design. Programs like Stata have convenient routines for checking for heteroscedasticity, including White's test, and for the presence of clustering.

If residuals are heteroscedastic, coefficient estimates will be consistent; however, standard errors will not be. In this context, the variance of the parameters has to be estimated differently, as the usual assumptions do not hold. The variance estimator is based on the work of Huber and White, and the standard errors are usually referred to as Huber–White standard errors, sandwich standard errors, or just robust standard errors. We cannot stress enough the importance of estimating the standard errors correctly (either with a robust variance estimator or by bootstrapping), and this concern is really not on the radar screen of researchers in our field. Consistent standard errors are just as important as consistent estimates. If standard errors are not correctly estimated, p-values will be over- or understated, which means that results could change from significant to non-significant (and vice versa); refer to Antonakis and Dietz (in press-a) for an example.
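A small Monte Carlo sketch shows why consistent standard errors matter. It assumes a hypothetical design in which the error standard deviation equals |x|. Across repeated samples, the classical OLS standard error understates the actual sampling variability of the slope, whereas the Huber–White sandwich estimator tracks it. The HC1 variant used here (the HC0 sandwich rescaled by n/(n − k)) is, to our understanding, the one Stata reports for `regress` with `vce(robust)`. The function names are our own.

```python
import math
import random


def slope_and_ses(x, y):
    """OLS slope of y on x with classical and HC1 (Huber-White) standard errors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    dx = [xi - mx for xi in x]
    sxx = sum(d * d for d in dx)
    b = sum(d * (yi - my) for d, yi in zip(dx, y)) / sxx
    a = my - b * mx
    e = [yi - a - b * xi for xi, yi in zip(x, y)]
    # Classical: assumes a single common error variance s^2
    s2 = sum(r * r for r in e) / (n - 2)
    se_classical = math.sqrt(s2 / sxx)
    # Sandwich: each squared residual is weighted by that observation's
    # squared deviation of x, so no common variance is assumed
    meat = sum(d * d * r * r for d, r in zip(dx, e))
    se_hc1 = math.sqrt(meat / sxx ** 2 * n / (n - 2))  # HC1 = HC0 * n/(n-k), k = 2
    return b, se_classical, se_hc1


def draw(n, rng, beta=0.5):
    """Hypothetical heteroscedastic data: the error SD equals |x|."""
    x = [rng.gauss(0, 1) for _ in range(n)]
    y = [1.0 + beta * xi + abs(xi) * rng.gauss(0, 1) for xi in x]
    return x, y


rng = random.Random(11)
reps, n = 300, 500
slopes, classical, robust = [], [], []
for _ in range(reps):
    b, se_c, se_r = slope_and_ses(*draw(n, rng))
    slopes.append(b)
    classical.append(se_c)
    robust.append(se_r)

mb = sum(slopes) / reps
empirical_sd = math.sqrt(sum((b - mb) ** 2 for b in slopes) / (reps - 1))
mean_classical = sum(classical) / reps
mean_robust = sum(robust) / reps
print(f"sampling SD of slope: {empirical_sd:.4f}")
print(f"mean classical SE:    {mean_classical:.4f}")
print(f"mean HC1 SE:          {mean_robust:.4f}")
```

For standard-normal x under this design, the true sampling variance of the slope is about three times the variance the classical formula implies, because E[x⁴] = 3. The classical standard error is therefore roughly √3 times too small, and its p-values are too small as well.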

Similar to the previous problem of heteroscedasticity is the problem of standard errors from clustered observations. A recent paper published in a top economics journal blasted economists for failing to correctly estimate the variance of the parameters and suggested that many of the published results from clustered data that had not been corrected for the clustering were dubious (see Bertrand, Duflo, & Mullainathan, 2004), and this in a domain that is known for its methodological rigor! The variance estimator for clustered data is similar in form to the robust one but relaxes the assumption that residuals are independent. Note that at times researchers have to correct standard errors for multiple dimensions of clustering; that is, we are not discussing the case of hierarchically clustered dimensions but of truly independently clustered dimensions (see Cameron, Gelbach, & Miller, in press). Again, these corrections are easily achieved with Stata or equivalent programs.
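The same Monte Carlo logic illustrates the clustering problem. It assumes a hypothetical design with 50 clusters of 20 observations each, in which both x and the error share a cluster-level component. The classical standard error is then far too small, while a cluster-robust (Liang–Zeger) sandwich, which sums score contributions within each cluster before squaring, comes close to the actual sampling SD. The small-sample factor G/(G − 1) × (n − 1)/(n − k) is, to our understanding, the one Stata applies for `regress` with `vce(cluster)`. The sketch handles a single clustering dimension only, not the multiway case discussed above.

```python
import math
import random


def slope_and_ses(x, y, g):
    """OLS slope with classical and cluster-robust SEs; g holds cluster ids."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    dx = [xi - mx for xi in x]
    sxx = sum(d * d for d in dx)
    b = sum(d * (yi - my) for d, yi in zip(dx, y)) / sxx
    a = my - b * mx
    e = [yi - a - b * xi for xi, yi in zip(x, y)]
    se_classical = math.sqrt(sum(r * r for r in e) / (n - 2) / sxx)
    # Sum dx*e within each cluster first, then square: residuals may be
    # correlated inside a cluster but are assumed independent across clusters
    score = {}
    for gi, d, r in zip(g, dx, e):
        score[gi] = score.get(gi, 0.0) + d * r
    G, k = len(score), 2
    meat = sum(s * s for s in score.values())
    factor = G / (G - 1) * (n - 1) / (n - k)  # small-sample adjustment
    se_cluster = math.sqrt(factor * meat / sxx ** 2)
    return b, se_classical, se_cluster


def draw(rng, n_groups=50, size=20, beta=0.5):
    """Hypothetical nested data: x and the error share a group-level component."""
    x, y, g = [], [], []
    for j in range(n_groups):
        u, a = rng.gauss(0, 1), rng.gauss(0, 1)  # group shocks to x and to the error
        for _ in range(size):
            xi = u + rng.gauss(0, 1)
            x.append(xi)
            y.append(1.0 + beta * xi + a + rng.gauss(0, 1))
            g.append(j)
    return x, y, g


rng = random.Random(5)
reps = 200
results = [slope_and_ses(*draw(rng)) for _ in range(reps)]
slopes = [r[0] for r in results]
mb = sum(slopes) / reps
empirical_sd = math.sqrt(sum((b - mb) ** 2 for b in slopes) / (reps - 1))
mean_classical = sum(r[1] for r in results) / reps
mean_cluster = sum(r[2] for r in results) / reps
print(f"sampling SD: {empirical_sd:.4f}  classical SE: {mean_classical:.4f}  "
      f"cluster SE: {mean_cluster:.4f}")
```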

4. Methods for inferring causality

To extend our discussion of how estimates can become inconsistent, we now review methods that are useful for recovering causal parameters in field settings where randomization is not possible. We introduce two broad methods of ensuring consistent estimates. The first is what we refer to as statistical adjustment, which is only possible when all sources of variation in y are known and observable. The second we refer to as quasi-experimentation; here, we include simultaneous-equation models (with extensive discussion of two-stage least squares), regression discontinuity models, difference-in-differences models, selection models (with unobserved endogeneity), and single-group designs. These methods have many interesting and broad applications in real-world situations, where external validity (i.e., generalizability) is assured but internal validity (i.e., experimental control) is not easily assured. Given space constraints, our presentation of these methods is cursory; our goal is to introduce readers to the intuition and the assumptions behind the methods and to explain how they can recover the causal parameter of interest. We include a summary of these methods in Table 2.

4.1. Statistical adjustment

The simplest way to ensure that estimates are consistent is to measure and include all possible sources of variance of y in the regression model (cf. Angrist & Krueger, 1999); of course, we must also control for measurement error and selection effects if relevant. Controlling for all sources of variance in the context of social science, though, is not feasible, because the researcher has to identify everything that causes variation in y (so as to remove this variance from e). At times, there is unobserved selection at hand, or there are other causes unbeknown to the researcher; from a practical point of view, this method is therefore not very useful per se. We are not suggesting that researchers should not use controls; on the contrary, all known theoretical controls must be included. However, researchers might unknowingly (or even knowingly) omit important causes, so they must also use other methods to ensure consistency in the face of possible endogeneity.

4.1.1. Propensity score analysis (PSA)

Readers should refer back to Eqs. (10) and (11) to understand why PSA could recover the causal parameter of interest and thus approximate a randomized field experiment (Rubin, 2008; Rubin & Thomas, 1996). PSA can only provide consistent estimates to the extent that (a) the researcher knows the variables that predict whether an individual would have received treatment or not, and (b) e and u in Eqs. (10) and (11) do not correlate. If e and u correlate, which may often be the case, a Heckman treatment-effects model must be used to derive consistent estimates (discussed later).

The idea behind PSA is quite simple and has to do with comparing treated individuals to similar control individuals (i.e., recreating the counterfactual). Going back to the randomized experiment: what is the probability, or propensity (to introduce the term), that a particular individual is in the treatment versus the control group? If the treatment is assigned randomly, it is .50 (i.e., 1 out of 2). However, this probability is not .50 if the treatment was not assigned randomly. Thus, the essence of PSA is to determine the probability (propensity) that an individual would have received treatment (as a function of measured covariates). Then, the researcher attempts to compare (match) individuals from the treatment and control groups who

Table 2. Six methods for inferring causality in non-experimental settings.

| Method | Brief description |
|---|---|
| 1. Statistical adjustment | Measure and control for all causes of y (impractical and not recommended). |
| 2. Propensity score analysis | Compare individuals who were selected to treatment to statistically similar controls using a matching algorithm. |
| 3. Simultaneous-equation models | Use instruments (exogenous sources of variance that do not correlate with the error term) to purge the endogenous x variable of bias. |
| 4. Regression discontinuity | Select individuals to treatment using a modelled cut-off. |
| 5. Difference-in-differences models | Compare a group who received an exogenous treatment to a similar control group over time. |
| 6. Heckman selection models | Predict selection to treatment (where treatment is endogenous) and then control for unmodeled selection to treatment in predicting y. |
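The two PSA steps described above, estimating each person's propensity to be treated from measured covariates and then matching treated to control individuals on that propensity, can be sketched as follows. The data are hypothetical: a single covariate z drives both self-selection into leadership training and the outcome y, and the true training effect is 2.0. The logistic first stage is fitted by Newton–Raphson, and each trained person is matched (with replacement) to the control with the nearest propensity score. This estimates the average effect of treatment on the treated and is only one of several possible matching algorithms; all names are our own.

```python
import math
import random
from bisect import bisect_left


def sigmoid(s):
    return 1.0 / (1.0 + math.exp(-s))


def fit_logit(z, t, iters=25):
    """Newton-Raphson fit of P(t = 1 | z) = sigmoid(g0 + g1 * z)."""
    g0 = g1 = 0.0
    for _ in range(iters):
        s0 = s1 = h00 = h01 = h11 = 0.0
        for zi, ti in zip(z, t):
            p = sigmoid(g0 + g1 * zi)
            w = p * (1.0 - p)
            s0 += ti - p            # score for the intercept
            s1 += (ti - p) * zi     # score for the slope
            h00 += w
            h01 += w * zi
            h11 += w * zi * zi
        det = h00 * h11 - h01 * h01
        # Newton step: add (X'WX)^(-1) times the score vector
        g0 += (h11 * s0 - h01 * s1) / det
        g1 += (h00 * s1 - h01 * s0) / det
    return g0, g1


def simulate(n=2000, effect=2.0, seed=3):
    """Hypothetical self-selection into training driven by covariate z."""
    rng = random.Random(seed)
    z = [rng.gauss(0, 1) for _ in range(n)]
    t = [1 if rng.random() < sigmoid(zi) else 0 for zi in z]
    y = [effect * ti + 1.5 * zi + rng.gauss(0, 1) for zi, ti in zip(z, t)]
    return z, t, y


def matched_att(z, t, y):
    """1-nearest-neighbour propensity matching with replacement; returns the ATT."""
    g0, g1 = fit_logit(z, t)
    ps = [sigmoid(g0 + g1 * zi) for zi in z]
    controls = sorted((p, yi) for p, ti, yi in zip(ps, t, y) if ti == 0)
    cps = [p for p, _ in controls]
    diffs = []
    for p, ti, yi in zip(ps, t, y):
        if ti == 1:
            k = bisect_left(cps, p)
            # nearest control is just below or just above p in sorted order
            cands = [j for j in (k - 1, k) if 0 <= j < len(cps)]
            j = min(cands, key=lambda c: abs(cps[c] - p))
            diffs.append(yi - controls[j][1])
    return sum(diffs) / len(diffs)


z, t, y = simulate()
treated = [yi for yi, ti in zip(y, t) if ti == 1]
untreated = [yi for yi, ti in zip(y, t) if ti == 0]
naive = sum(treated) / len(treated) - sum(untreated) / len(untreated)
att = matched_att(z, t, y)
print(f"naive difference: {naive:.2f}  matched ATT: {att:.2f}  true effect: 2.00")
```

The naive difference in means is biased upward because trainees have higher z on average. Matching on the estimated propensity removes that imbalance only because z is measured, which is condition (a) above.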

Suppose in our example that we want to compare individuals who undertook leadership training (and were self-selected) with a control group. In the first instance, we estimate a probit (or logistic) model to predict the probability that an individual receives the treatment:

$$x_i = \gamma_0 + \sum_{k=1}^{q} \gamma_k z_{ki} + u_i \tag{27}$$

Turning back to the issue at hand, let us assume we have a common-method variance problem, where x (leader behavior) and y (perceptions of leader effectiveness) have been gathered from a common source: boss i rating leader i (n = 50 leaders; with q representing unobserved common-source variance, and c control variables). Here, following Eq. (24), we estimate:

$$y_i = \beta_0 + \beta_1 x_i + \sum_{k=1}^{c} \beta_k f_{ik} + e_i - \beta_1 \gamma_x q_i + \gamma_y q_i \tag{28}$$

…the 2SLS estimation procedure must employ a probit model in the first-stage equation, that is, in Eq. (29) (Greene, 2008). Other estimators are available too for this class of model (e.g., where the y variable is a probit but the endogenous variable is continuous). The Stata cmp command (Roodman, 2008) can estimate a broad class of such single-indicator mixed models by maximum likelihood, similar to the Mplus structural-equation modeling program (L. K. Muthén & Muthén, 2007).