An Alternative Storage Solution for MapReduce

Eric Lomascolo, Director, Solutions Marketing


MapReduce Breaks the Problem Down: Data Analysis

• Distributes processing work (Map) across compute nodes and accumulates results (Reduce)

• Hadoop is a popular open source MapReduce software framework

• Processes unstructured and semi-structured data

• HDFS™ uses location info to replicate information between nodes

–By default, 3 copies

*Hadoop Demystified, Rare Mile Technologies
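The Map and Reduce phases described above can be illustrated with a word count, the usual introductory MapReduce example. This is a minimal single-process sketch, not Hadoop code: the function names (`map_chunk`, `shuffle`, `reduce_counts`, `word_count`) are made up for illustration, and in a real cluster each `map_chunk` call would run on a different compute node.

```python
from collections import defaultdict


def map_chunk(chunk):
    """Map: turn one chunk of text into (word, 1) pairs."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle(mapped_outputs):
    """Group the values emitted by all map tasks by key."""
    groups = defaultdict(list)
    for pairs in mapped_outputs:
        for key, value in pairs:
            groups[key].append(value)
    return groups


def reduce_counts(groups):
    """Reduce: accumulate the grouped values into one result per key."""
    return {key: sum(values) for key, values in groups.items()}


def word_count(chunks):
    # Each chunk stands in for the data handled by one compute node.
    mapped = [map_chunk(chunk) for chunk in chunks]
    return reduce_counts(shuffle(mapped))


print(word_count(["big data big", "data analysis"]))
```

The map step needs no coordination between chunks, which is what lets the work be spread across nodes; only the grouping and reduce steps bring results together.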

About the Hadoop File System (HDFS)

• Write-once, read-many (WORM) access model

• Uses commodity hardware with the expectation that failures will occur

• Reads data in large, contiguous data blocks and processes very large files

• Is hardware agnostic

• Assumes that moving computation is cheaper than moving data

HDFS™ Performance is Limited

• HDFS Premise

–“Moving Computation is Cheaper Than Moving Data”

• The data ALWAYS has to be moved

–Either from local disk

–Or from the network

–Includes

• Replication operations for availability

• Results data movement

• And with a good network: the network wins

–Hadoop performance is gated by file system performance

Hadoop File System (HDFS) Challenges

• Performance

– Lack of caching under random loads

– Slow file modifications due to WORM and synchronous replication

– HTTP used for data transfer – cannot use DMA

• Scalability

– Large block sizes limit the number of files

– Limits full use of resources when data is not local to the CPU

– HDFS RAID can eliminate the need for replication but impacts CPU

• Storage

– Not POSIX compliant and not suited to general-purpose access

– Data transfer into and out of the Hadoop environment is required

– Data replication storage costs
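The replication storage cost can be made concrete with a little arithmetic. The sketch below assumes only the default of 3 copies mentioned earlier in the deck; the function names and the 100 TB figures are illustrative, not taken from the slides.

```python
def raw_capacity_needed_tb(data_tb, replication_factor=3):
    """Raw disk capacity required to hold data_tb of user data."""
    if replication_factor < 1:
        raise ValueError("replication_factor must be at least 1")
    return data_tb * replication_factor


def usable_capacity_tb(raw_tb, replication_factor=3):
    """User data that fits in raw_tb of disk once every block is replicated."""
    if replication_factor < 1:
        raise ValueError("replication_factor must be at least 1")
    return raw_tb / replication_factor


# Illustrative numbers only: 100 TB of data, or 100 TB of raw disk.
print(raw_capacity_needed_tb(100))        # 300
print(round(usable_capacity_tb(100), 1))  # 33.3
```

With three copies, two thirds of the raw capacity holds replicas rather than unique data.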


Lustre® – High Performance File System Alternative

[Diagram: Lustre architecture]

• Lustre Clients: 1-100,000

• Supports multiple network types (Gemini, Myrinet, IB, GigE), connected through a router

• Metadata Servers (MDS) with Metadata Target (MDT)

• Object Storage Servers (OSS): 1-1,000s, with Object Storage Targets (OST) on disk arrays and SAN fabric

• Gateway for CIFS and NFS clients

Comparing HDFS to Lustre: Cluster Setup Scenario

• 100 clients, 100 disks, InfiniBand

• Disks: 1 TB high-capacity SAS drives (Seagate Barracuda)

–80 MB/sec bandwidth with cache off

• Network: 4x SDR InfiniBand

–1GB/s

• HDFS: 1 drive per client

• Lustre: 10 OSSs with 10 OSTs each

Comparing HDFS to Lustre: Theoretical Part I

• 100 clients, 100 disks, SDR InfiniBand

• HDFS: 1 drive per client

–Local client bandwidth is 80MB/s

• Lustre: each OSS has

–Lustre bandwidth of 800MB/s aggregate (80MB/s * 10)

• Assuming bus bandwidth to access all drives simultaneously

–Net bandwidth of 1GB/s (IB is point to point)

• With 10 OSSs, we have the same capacity and bandwidth as the HDFS setup

• Network is not the limiting factor!
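The arithmetic behind this comparison can be written out as follows. The numbers come from the scenario slides (80 MB/s per drive, 1 GB/s per InfiniBand link, 100 clients, 10 OSSs with 10 OSTs each); the constant and function names are illustrative, and 1 GB/s is taken as 1000 MB/s.

```python
DISK_MB_S = 80        # per-drive bandwidth with cache off
NETWORK_MB_S = 1000   # 4x SDR InfiniBand, about 1 GB/s per point-to-point link
NUM_CLIENTS = 100     # HDFS: one local drive per client
NUM_OSS = 10          # Lustre: 10 object storage servers
OSTS_PER_OSS = 10     # one drive per OST, 10 OSTs per OSS


def hdfs_aggregate_mb_s():
    """Every client reads its own local drive."""
    return NUM_CLIENTS * DISK_MB_S


def lustre_oss_mb_s():
    """One OSS delivers the lesser of its disk and network bandwidth."""
    disk_mb_s = OSTS_PER_OSS * DISK_MB_S
    return min(disk_mb_s, NETWORK_MB_S)


def lustre_aggregate_mb_s():
    return NUM_OSS * lustre_oss_mb_s()


print(hdfs_aggregate_mb_s())    # 8000
print(lustre_oss_mb_s())        # 800
print(lustre_aggregate_mb_s())  # 8000
```

Because the 800 MB/s of disk bandwidth behind each OSS is below the 1 GB/s link, the `min` always picks the disk figure, which is the sense in which the network is not the limiting factor here.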

Comparing HDFS to Lustre: Theoretical Part II - Striping

• In terms of raw bandwidth, network does not limit data access rate

• Striping the data for each Hadoop data block, we can focus our bandwidth on delivering a single block

• HDFS limit, for any 1 node: 80MB/s

• Lustre limit, for any 1 node: 800MB/s

–Assuming striping across 10 OSTs

–Can deliver that to 10 nodes simultaneously

• Typical MR workload is not simultaneous access (after initial job kickoff)
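The per-node limits above follow from the stripe count. The sketch below uses the 80 MB/s drive and 1 GB/s link figures from the scenario; the 64 MB block size is an assumption made for illustration (the slides do not state one), and the function names are hypothetical.

```python
def single_node_limit_mb_s(stripe_count, disk_mb_s=80, network_mb_s=1000):
    """Peak read rate for one node: striped disk bandwidth, capped by its link."""
    return min(stripe_count * disk_mb_s, network_mb_s)


def block_read_seconds(block_mb, bandwidth_mb_s):
    """Time to deliver one data block at the given bandwidth."""
    return block_mb / bandwidth_mb_s


BLOCK_MB = 64  # assumed block size, not from the slides

hdfs_limit = single_node_limit_mb_s(stripe_count=1)     # one local drive
lustre_limit = single_node_limit_mb_s(stripe_count=10)  # striped across 10 OSTs

print(hdfs_limit, block_read_seconds(BLOCK_MB, hdfs_limit))
print(lustre_limit, block_read_seconds(BLOCK_MB, lustre_limit))

# 100 drives at 80 MB/s give 8000 MB/s in total, enough to feed
# 10 nodes at the striped rate at the same time.
print((100 * 80) // lustre_limit)
```

Striping beyond about 12 OSTs would not help a single node in this scenario, since the 1 GB/s link becomes the cap.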


MapReduce I/O Benchmark

• 8 nodes, QDR IB, 8 drives (80MB/s)

• HDFS

–8 nodes

–1 disk each

• Lustre

–2 OSS

–4 OST disks

Lustre Advantages for Hadoop

• Performance

– Caching file system with complete cache coherence

– High performance file modifications – replication not required

– Uses high speed DMA for data transfers

• Scalability

– Support for billions of files – 2.5 Billion

– All compute clients have access to data

– Can leverage standard data and system availability techniques

• Storage

– POSIX compliant

– No data transfer required for pre- and post-processing

– Reduces need to manage multiple copies between analytic systems

20

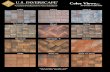

ClusterStor™ 6000: A Big Data Scale-Out Solution

Delivering the Ultimate in HPC Data Storage with:

• Optimized time to productivity

–Efficiency, application availability, results

• Unmatched file system performance – Delivered!

–Industry’s fastest just got two times faster

• Highest reliability, availability and serviceability

–Enterprise level resiliency

ClusterStor Solutions

An integrated and scalable HPC data storage solution designed to be easy to deploy, use, and manage, delivering efficiency, application availability, and massive results

Lustre® Community and Xyratex

Roles in the Lustre® Community

• OpenSFS & EOFS Board Member

–Direct funding of Lustre tree & roadmap development

• Active Contributor to Lustre Source & Roadmap

–World class Lustre development team on staff

• Integration of Lustre into ClusterStor™

–Industry leading HPC storage solutions

• Lustre Support Services

–ClusterStor, Lustre & 3rd party hardware

ClusterStor 6000: Optimized time to productivity

• Uses Xyratex exclusive parallel scale-out file system processing and I/O architecture

• Leverages latest in Xyratex application platform technologies and Lustre® integration

• Results in increased file system throughput and capacity efficiencies on a per rack unit volume basis

• Fully integrated

• Optimized HW/SW

• Factory tested

• Shipped ready to go

ClusterStor Delivers Scale-Out Lustre: Scalable Storage Unit (SSU) Building Block

[Diagram: the Lustre architecture diagram from the earlier slide, annotated with ClusterStor SSU and ClusterStor HA-MDS]

ClusterStor 6000 – Scale-Out Building Blocks: Unmatched file system performance – Delivered!

• Industry’s fastest just got two times faster

• Linear processing scalability supports installations up to 1 TB/s file system throughput and tens of PBs of storage capacity

• Each ClusterStor 6000 Scalable Storage Unit (SSU) produces 6 GB/sec of file system performance

ClusterStor 6000

• ClusterStor 6000 SSU

–Produces 6.0 GB/sec IOR

–Doubles SSU performance

• ClusterStor Embedded Server Module

–Two modules per SSU for high availability

• Increased performance: 42GB/sec per rack

• Latest processor technology

• 2X memory

• FDR InfiniBand
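The per-SSU and per-rack figures lend themselves to simple sizing arithmetic. The sketch below is a rough estimate: it assumes throughput scales linearly with SSU count (as the deck claims), treats 1 TB/s as 1000 GB/s, and ignores metadata servers and any other rack contents. The function names are hypothetical.

```python
import math

SSU_GB_S = 6.0    # IOR throughput per ClusterStor 6000 SSU
RACK_GB_S = 42.0  # quoted throughput per rack


def ssus_per_rack():
    """SSUs implied by the per-rack and per-SSU throughput figures."""
    return round(RACK_GB_S / SSU_GB_S)


def ssus_for_throughput(target_gb_s):
    """SSUs needed to reach a target throughput under linear scaling."""
    return math.ceil(target_gb_s / SSU_GB_S)


def racks_for_throughput(target_gb_s):
    return math.ceil(ssus_for_throughput(target_gb_s) / ssus_per_rack())


print(ssus_per_rack())             # 7
print(ssus_for_throughput(1000))   # 167
print(racks_for_throughput(1000))  # 24
```

This is a lower bound on hardware for a given throughput target; a real installation may be sized for capacity, redundancy, or sustained rather than peak rates.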

ClusterStor Family Performance and Capacity: More Performance and Storage Capacity in Less Space

[Chart: performance in GigaBytes (user level sustained IOR Lustre® file system performance; axis ticks 90, 180, 270, 360) and user level storage capacity in PetaBytes (axis ticks 5.76, 11.52, 17.28, 23.04, 28.80) versus number of SSUs (30, 60, 90, 120, 150), for ClusterStor 3000 and ClusterStor 6000. Callout: ClusterStor 6000 doubles SSU performance.]

ClusterStor 6000: Highest reliability, availability and serviceability

• Fully resilient software-hardware integration with low level diagnostics, embedded monitoring, enterprise level data protection architecture, proactive alerts

• Easy to manage

• Real time monitoring

Xyratex Confidential

ClusterStor – Powering the Fastest Storage System in the World (Q3 2012)

Exponentially less cost, space, cooling and power than the competition!

• >1TB/second aggregate bandwidth

Xyratex CS-6000 System

• Number of Racks: 36

• Square Footage: 644 ft²

• Hard Drives: 17,280

• Power: ~0.443MW

• Heat Dissipation (BTUs): 1,165,600

Links

• Xyratex

–http://www.xyratex.com/

• NCSA

–http://www.ncsa.illinois.edu/

• Hadoop Demystified

–http://blog.raremile.com/2012/06/hadoop-demystified/

• Wikibon on Big Data

–http://wikibon.org/wiki/v/Big_Data

–http://wikibon.org/blog/taming-big-data/
