A Measurement & Analysis Training Solution Supporting CMMI & Six Sigma Transition

Dave Hallowell
Jeannine M. Siviy

Carnegie Mellon Software Engineering Institute
Pittsburgh, PA 15213-3890

Sponsored by the U.S. Department of Defense
© 2004 by Carnegie Mellon University
Version 1.0


Report Documentation Page (Form Approved, OMB No. 0704-0188)

Public reporting burden for the collection of information is estimated to average 1 hour per response, including the time for reviewing instructions, searching existing data sources, gathering and maintaining the data needed, and completing and reviewing the collection of information. Send comments regarding this burden estimate or any other aspect of this collection of information, including suggestions for reducing this burden, to Washington Headquarters Services, Directorate for Information Operations and Reports, 1215 Jefferson Davis Highway, Suite 1204, Arlington VA 22202-4302. Respondents should be aware that notwithstanding any other provision of law, no person shall be subject to a penalty for failing to comply with a collection of information if it does not display a currently valid OMB control number.

1. REPORT DATE: OCT 2004
2. REPORT TYPE:
3. DATES COVERED: 00-00-2004 to 00-00-2004
4. TITLE AND SUBTITLE: A Measurement & Analysis Training Solution Supporting CMMI & Six Sigma Transition
5a. CONTRACT NUMBER:
5b. GRANT NUMBER:
5c. PROGRAM ELEMENT NUMBER:
5d. PROJECT NUMBER:
5e. TASK NUMBER:
5f. WORK UNIT NUMBER:
6. AUTHOR(S):
7. PERFORMING ORGANIZATION NAME(S) AND ADDRESS(ES): Carnegie Mellon University, Software Engineering Institute, Pittsburgh, PA, 15213
8. PERFORMING ORGANIZATION REPORT NUMBER:
9. SPONSORING/MONITORING AGENCY NAME(S) AND ADDRESS(ES):
10. SPONSOR/MONITOR’S ACRONYM(S):
11. SPONSOR/MONITOR’S REPORT NUMBER(S):
12. DISTRIBUTION/AVAILABILITY STATEMENT: Approved for public release; distribution unlimited
13. SUPPLEMENTARY NOTES:
14. ABSTRACT:
15. SUBJECT TERMS:
16. SECURITY CLASSIFICATION OF: a. REPORT unclassified; b. ABSTRACT unclassified; c. THIS PAGE unclassified
17. LIMITATION OF ABSTRACT: Same as Report (SAR)
18. NUMBER OF PAGES: 55
19a. NAME OF RESPONSIBLE PERSON:

Standard Form 298 (Rev. 8-98), Prescribed by ANSI Std Z39-18


Trademarks and Service Marks

® Capability Maturity Model, Capability Maturity Modeling, Carnegie Mellon, CERT, CERT Coordination Center, CMM, and CMMI are registered in the U.S. Patent and Trademark Office by Carnegie Mellon University.

SM Architecture Tradeoff Analysis Method; ATAM; CMM Integration; CURE; IDEAL; Interim Profile; OCTAVE; Operationally Critical Threat, Asset, and Vulnerability Evaluation; Personal Software Process; PSP; SCAMPI; SCAMPI Lead Assessor; SCAMPI Lead Appraiser; SCE; SEI; SEPG; Team Software Process; and TSP are service marks of Carnegie Mellon University.


Objectives

Primary
• Trace the design and development of a measurement & analysis course that integrates CMMI and Six Sigma
• Show why such integration is important

Secondary
• Highlight the complexities of process improvement in a “multi-technology world”
• Share issues related to technology transition
• Describe instructional design choices
• Illustrate a course case study in Six Sigma project form


Outline

Motivations for process improvement

Process improvement solutions
• Roots, evolutions, and integrations
• Transitioning your solution

Training as part of your “whole product”
• Designing an integrated training solution
• Illustration(s)


What Drives “Process Improvement”?

Performance issues: product, project
• And, eventually, process issues

Regulations and mandates
• Sarbanes-Oxley
• “Level 3” requirements to win contracts

Business issues and “burning platforms”
• Lost market share or contracts
• Continuous cost and cycle time improvement
• Capitalizing on new opportunities

There is compliance-driven improvement. And there is performance-driven improvement.


Solutions

CMMI®, EIA 731, TSP(SM), ISO 12207, Scorecard, EIA 632, ISO 9000, ITIL, COBIT, PSM, GQIM


More Solutions: SPC Frameworks Quagmire

[Figure: frameworks quagmire diagram. Copyright 2001, Software Productivity Consortium. Included here with permission.]


And Yet More Solutions

[CIO 04]

CIO Magazine: “Quality Model Mania”


And There Are Still More…

In addition to the traditional list of “process improvement” models, methods, and standards, there are life-cycle, business-sector-specific, and other types of relevant technologies.

For instance:
• Rational Unified Process (RUP)
• Agile
• Architecture Tradeoff Analysis Method (ATAM)
• TL9000
• People CMM


Do You Have a Headache Yet?


Observations

Organizations are implementing one or more of these solutions simultaneously.

Economies of scale are needed in training.

A holistic, “connected” approach is needed in training.
• Leaving students to their own devices to make connections can be risky and/or time-consuming.

Before we discuss our project, let’s unfold the story…


Evolutions (examples)

Six Sigma
• Evolved at Motorola in the 1980s from TQM
• First focused on reducing process defects and cycle time in manufacturing
• Later expanded to address design (DFSS)
• Spread to services and is in early stages in software

CMMI
• Released in 2000
• Evolved from several Capability Maturity Models, reflects Crosby’s 5 maturity levels
• Focuses on infrastructure and process maturity
• Intended for software and systems engineering

Common roots and common improvement intent [BPD]


A Technology Adoption Process

[Figure: technology adoption process, shown in three lanes.]

SEI (or other institution)
• Develop technology
• Transition technology

Organization’s Process Improvement Groups: SEPGs, Six Sigma Practitioners, et al.
• Establish Business Drivers
• Select Technology
• Implement Solution
• Measure impact: Level Rating, Business Results

Project Team
• Implement/Integrate tech.
• Execute project life cycle phases, steps
• Measure results: Proj Results


Designing Your Approach

Selection and development considerations include:
• What is the goal?
• What model(s) or references should be used?
• Should they be implemented in parallel or sequentially?
• Can they be used “off the shelf” or is tailoring needed?
• What needs to be created internally?

Integrated process solutions that are seamless and transparent to the engineer in the field significantly contribute to an organization’s success.

Your Six Sigma skills can play a role in the design.


Transitioning Your Solution

Technology transition is the process of creating or maturing a technology, introducing it to its intended adopters, and facilitating its acceptance and use.

Technology is
• “Any tool, technique, physical equipment or method of doing or making, by which human capability is extended.”
• “The means or capacity to perform a particular activity.”

Do you use the words maturation, introduction, adoption, implementation, dissemination, rollout, deployment, or fielding in your improvement approach? Each indicates transition.

[Forrester], [Schon], [Gruber]


Effective Transition Planning

Features include:
• Precision about the problem, clarity about the solution
• Transition goals & a strategy to achieve them
• Definition of all adopters and stakeholders and deliberate design of interactions among them
• Complete set of transition mechanisms (a whole product)
• Risk management
• Either a documented plan or extraordinary leadership throughout transition

[Forrester]


The “Whole Product” Concept*

[Figure: whole product diagram. The core product or technology sits at the center, surrounded by: Standards and Policies, Training, Installation and Debugging, Additional Software, Introduction, Support, etc.]

[Moore]


Outline

Motivations for process improvement

Process improvement solutions
• Roots, evolutions, and integrations
• Transitioning your solution

Training as part of your “whole product”
• Designing an integrated training solution
• Illustration(s)


Training Challenges

Many technologies have their own training.
• It’s not practical to send everyone to all training courses.
• Yet it’s also not practical to custom build all training.

Cross training (e.g., CMMI & Six Sigma)
• At a strategic level: how to increase awareness so that experts in one technology can make judicious decisions about adoption and implementation of another technology
• At a tactical level: how to balance the expertise

Who and how many should be trained? For instance,
• Train whole organization in internal process standards and possibly basic Six Sigma concepts
• Train fewer in Six Sigma BB, CMMI, measurement and analysis, other specialty areas


Benchmarking

Integrated training solutions underway (ordered from strategic emphasis to tactical emphasis):
• DFSS training that includes awareness sessions of relevant technologies
  - SEI’s Product Line Practices, ATAM, CMMI engineering PAs
• DFSS training that leverages ATAM
• DMAIC training that references PSP-based instrumented processes

Our approach uses measurement & analysis as an integrator.

Highlights of our course design and content follow.


Scope of New Analysis Courses

Our task is to build new courses that
• Focus on analysis
  - But more than just SPC
• Focus on skills-building
• Support CMMI
• Appeal to many roles
  - process improvement personnel
  - measurement personnel
  - project team members
  - CMMI appraisers (maybe)
  - Six Sigma practitioners
  - and so on
• Resonate with organizations at any maturity level


Approach

• Leverage other technologies and initiatives.
  - Reuse demonstrated frameworks and toolkits
  - Build explicit connections to models
  - Define “certification” boundaries and options
  - Return to common roots but don’t reinvent the wheel
• Assemble a cross-organizational, cross-functional development team
• Use Gagne’s Model for Instructional Design
• Use Kirkpatrick’s Four-Level Evaluation Model
• Design for extensibility: case study approach
  - Allows easy swap-in of other domains, technologies
  - Allows easy updates as core technologies evolve


Considerations

• Integrate product, process, project analysis
  - Focus on process
  - Reuse SEI “Analysis Dynamics”
• Use aspects of Six Sigma
  - DMAIC problem solving framework
  - Toolkit
  - Training philosophy (hands on, practitioner focus)
• Make CMMI links explicit
• Determine fit with existing measurement courses
• Couple with an annual Measurement Practices Workshop (future)


A Base Architecture - Connecting all the Improvement Models

[Figure: base architecture linking language data, numeric data, and models.]

Fuzzy Sense of a Problem or Opportunity

Type of Data
• Language: Statements, Observations
• Numbers: Actual Capability, Variation Over Time, Sample Data
• Models: Language + Numbers

Teams move back and forth between:
• Experience: Gathering & Discovering (Plan to Gather)
• Distilling & Understanding (Distill)

Focus on key aspects
Solution & Standards
Monitored Results
Product/Process Improvement Progress

[Kawakita], [Shiba]


Design & Development Process

• Identify needs, requirements
• Update overall measurement curriculum design
  - Integrate with “certificate programs”
• Establish learning objectives
• High-level design of measurement & analysis courses
  - Course themes and storyboards
  - Desired student capability level in specific methods
• Detailed design of measurement & analysis courses
  - Including case study storylines
• Module development, including reviews
• Pilot


Curriculum


Certificates and Certifications

SEI Certificate Programs
• Analyst (future)

Six Sigma Practitioner Certification
• SEI Partners who provide Six Sigma training and certification can leverage courses
  - Adjunct, domain-specific, Black Belt training
  - Domain-specific Yellow Belt training


Learning Objectives

Students should be able to
• Describe the methodology (DMAIC + thoughtmap), including
  - improved process behavior for business benefit
  - how this methodology “plays” in the CMMI world
• Decide how, when and why to use selected key tools and interpret their outputs.
• Demonstrate how effective measurement & analysis contributes to a paradigm shift from a compliance-driven to performance-driven improvement.
• Analyze, interpret, and report data using “x” tools.
• Explain statistical thinking, central tendency, uncertainty and risk.


Design Highlights: Course Themes

Process Measurement & Analysis I
• Improvement orientation: Reduce defects, waste (effort, resources, etc.), and cycle time by correcting special cause variation, repairing, and/or improving processes.
• CMMI relationship: Maps to GP2.8 and GP3.2 at any maturity level. Maps to Level 4 thinking.

Process Measurement & Analysis II
• Improvement orientation: Prevent defects, ensure cost/schedule performance by using real-time data and optimizing front-end planning, requirements, and design processes.
• CMMI relationship: Maps to GP3.2 and GP4.2 at any maturity level. Maps to Level 5 thinking.


Design Highlights: Course Outline

Process Measurement & Analysis I
• Introduce DMAIC flowchart
• Call Center Case: DMAIC Process
• Defect Containment Case: Data Stratification
• Cost & Schedule Case: Variance Reduction

Process Measurement & Analysis II
• Recap DMAIC
• Project Simulation: Organization and Project Baseline
• Defect Containment Case:
  - Optimize inspections and improve design
• Cost & Schedule Case:
  - Optimize estimating and improve requirements

illustration


Skills Capability Matrix (excerpt)

Analytical Methods | desired student capability | addressed in course
7+ basic tools (histogram, scatterplot, pareto, etc.) | skill | 1
process mapping / SIPOC | skill | 1
systems thinking - current/future reality trees, causal loop diagrams | tbd | tbd
sampling, grouping, alpha/beta risk | understand |
FMEA | skill | 1
Orthogonal decomposition | knowledge | tbd
multivariate analysis (principal components) | awareness | tbd
Confidence and prediction intervals | understand | 2
hypothesis tests, Means comparison tests | skill | 1
Variance comparison tests | knowledge | tbd
design of experiments (DOE) | understand | 2
analysis of variance, incl analysis of error | knowledge | 2
modeling - regression, residual analysis, response surface | knowledge | 2
Rayleigh distributions | understand | 2
chi square distributions | understand | tbd
capability analysis | understand | 2
KJ | skill | 2
VOC | skill | 2
QFD | awareness | 2
Pugh’s concept selection | skill | 2
process simulation | awareness | 2

Awareness = on the radar
Knowledge = knows basic information
Skill = can do it
Understand = can do, interpret, explain


Case 1 Storyline 1

Define
• organization project portfolio includes both new development and maintenance
• project size and complexity varies significantly
• project schedules vary from <1 month to >18 months
• Primary focus: customer satisfaction as proxied by field defects and effort & schedule variance
• Organization is transitioning from CMM to CMMI, working toward high maturity
• Organization is not a Six Sigma adopter (yet)

Measure
• Earned value data
• Defect data
• Customer satisfaction survey (new)


Case 1 Storyline 2

Analyze
• Iteration 1: baseline and problem/goal identification
  - Reduce cost and schedule variance (in process and closed project)
  - Improve data quality (presence, accuracy, etc.)
• Subsequent iterations:
  - baseline updates
  - problem/goal refinement
  - process understanding
• Tools used: boxplots, distributions, time series, pareto charts, capability analysis, basic descriptive statistics, indicator templates, survey analysis, SMART* goals, root cause analysis

*Specific, Measurable, Attainable, Realistic, Timely


Case 1 Storyline 3

Improve
• Measurement infrastructure
• Cost and schedule variance cause code taxonomy
• Estimating (training, minor process adjustments)
• Adoption of “management by fact” (MBF) format
• Homogeneous samples for in-process charts

Control
• Organization: dashboards with charts for cost, schedule, defects, data quality, customer satisfaction
• Projects: Earned Value (EV) prediction model


Case 1 Sample Artifacts

Sample artifacts on following slides include
• Baseline charts: boxplots, capability analysis
• Co-optimized pareto analysis
• SMART goals and root cause analysis
• Homogeneous sampling
• Earned Value prediction model
• Management by Fact

The full case storyline demonstrates the usage of an improvement process
• consistent with DMAIC, incl gates
• meeting CMMI specific practices
• leveraging measurement best practices


Case 1 Artifacts: Goal Structure

[Figure: goal tree, consonant with GQIM and Six Sigma CTQs.]

Customer Satisfaction (Success Indicators, Management Indicators)
• Deliver high quality product
  - Track/chart field defects (Analysis Indicators, Progress Indicators)
    Plot, plot, plot: trends, distributions, control charts (c-charts), scatter plots
  - Track/chart cost & schedule deviation (Analysis Indicators, Progress Indicators)
    Plot, plot, plot: trends, distributions, control charts (x-bar, r; x, mr), scatter plots
  - other factors
• other factors

SPI Task Plans
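The goal structure pairs field defects with c-charts. As a minimal sketch of that tool, the conventional c-chart limits for count data are the mean count plus or minus three times its square root, with the lower limit floored at zero. The function names and the monthly defect counts below are hypothetical illustration data, not values from the case study, and the sketch assumes a constant area of opportunity from period to period.

```python
import math


def c_chart_limits(counts):
    """Return (center, lcl, ucl) for a c-chart of defect counts per period."""
    if not counts:
        raise ValueError("need at least one count")
    center = sum(counts) / len(counts)
    spread = 3 * math.sqrt(center)
    lcl = max(0.0, center - spread)  # a count cannot go below zero
    ucl = center + spread
    return center, lcl, ucl


def out_of_control(counts):
    """Return the indexes of periods whose count falls outside the limits."""
    _, lcl, ucl = c_chart_limits(counts)
    return [i for i, c in enumerate(counts) if c < lcl or c > ucl]


# Hypothetical monthly field-defect counts (illustration only).
monthly_defects = [4, 6, 3, 5, 4, 18, 5, 3]
center, lcl, ucl = c_chart_limits(monthly_defects)
print(f"center={center:.2f} LCL={lcl:.2f} UCL={ucl:.2f}")
print("signals at periods:", out_of_control(monthly_defects))
```

A point outside the limits is a candidate special cause to investigate, not proof of one.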


In-Process Schedule Variance Boxplot

[Figure: boxplots of % schedule variance (axis from -100 to 100) by month label (1: oct-01, 2: nov-01, 3: dec-01, 4: jan-02, 5: feb-02, 6: mar-02). Each box marks the 10th, 25th, 50th (median), 75th, and 90th percentiles; x marks the mean.]

Data reported monthly for all projects, cycle phases

Conclusion: need to address variability

This and following slides consonant with Six Sigma toolkit, CMMI QPM, OPP, CAR
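The boxplot summarizes each month with five percentiles and the mean. A minimal sketch of computing those summary values per reporting month follows. The data are hypothetical, and the "inclusive" interpolation method is an assumption: statistical packages differ in how they interpolate percentiles, and the slide does not say which method was used.

```python
from statistics import mean, quantiles

PERCENTILES = (10, 25, 50, 75, 90)


def boxplot_summary(values):
    """Return the percentiles and mean that the boxplot displays."""
    # n=100 yields 99 cut points; cut point p-1 is the p-th percentile.
    cuts = quantiles(values, n=100, method="inclusive")
    summary = {f"p{p}": cuts[p - 1] for p in PERCENTILES}
    summary["mean"] = mean(values)
    return summary


# Hypothetical % schedule variance per project, keyed by reporting month.
by_month = {
    "oct-01": [-40, -20, -10, 0, 10, 30, 60],
    "nov-01": [-35, -15, -5, 0, 5, 20, 45],
}
for month, values in by_month.items():
    print(month, boxplot_summary(values))
```

Comparing the spread between the 10th and 90th percentiles month over month is one way to see the variability the slide concludes must be addressed.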


Completed Project Data Baseline

This represents (initial plan - final actual)
• negative numbers are overruns
• schedule is in terms of calendar days

It is the total cumulative variance
• customer-requested/approved changes are included
• one way or another, this is what the customer sees

Statistic | % effort variance | % sched variance
average | -66.1% | -15.0%
standard deviation | 415.9% | 38.3%
median | 0.9% | -8.1%
min to max | -2689.9% to 50.1% | -99.8% to 128.0%
n | 42 | 42
capability notes (spec = +/- 20%) | 45.2% outside spec | 40.4% outside spec

[Figures: two capability plots, each marked with LSL, USL, and Target.]
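A baseline table like the one on this slide can be produced from closed-project records with a few lines of code. The sketch below is illustrative only: the project data are made up, and dividing by the initial plan to obtain a percentage is an assumption, since the slide gives only the numerator (initial plan minus final actual).

```python
from statistics import mean, median, stdev

SPEC_LIMIT = 20.0  # percent; the slide's spec is +/- 20%


def percent_variance(plan, actual):
    """Return 100 * (plan - actual) / plan; negative values are overruns.

    Dividing by the plan is an assumption made for this sketch.
    """
    return 100.0 * (plan - actual) / plan


def baseline(variances, spec=SPEC_LIMIT):
    """Summarize percent variances the way the baseline table does."""
    outside = sum(1 for v in variances if abs(v) > spec)
    return {
        "average": mean(variances),
        "stdev": stdev(variances),
        "median": median(variances),
        "min": min(variances),
        "max": max(variances),
        "n": len(variances),
        "pct_outside_spec": 100.0 * outside / len(variances),
    }


# Hypothetical (planned, actual) calendar days for closed projects.
projects = [(100, 110), (60, 90), (200, 190), (30, 45), (120, 118)]
variances = [percent_variance(p, a) for p, a in projects]
print(baseline(variances))
```

Reporting the median next to the average matters here because, as in the slide's effort column, a few extreme overruns can pull the average far from the typical project.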


Cause Code Taxonomy

Transformed original brainstorm list
• initial experiential assessment of frequency, impact of each cause code
• refined “operational definitions” and regrouped brainstorm list
• tagged causes to historical data
• refined again

Final list included such things as
• Missed requirements
• Underestimated task
• Over commitment of personnel
• Skills mismatch
• Tools unavailable
• EV Method problem
• Planned work not performed
• External

Direct Cause vs. Root Cause

Causes resolved in-process vs. causes that affect final performance


Co-Optimized Pareto Analysis

Impact # (from Pareto) | Schedule | Effort | Organization Slice 1 Schedule | Organization Slice 1 Effort | Organization Slice 2 Schedule | Organization Slice 2 Effort
1 | Underestimated task | Tools | Underestimated task | Underestimated task | Tools | Tools
2 | Tools | Assets not available | EV problems | Under planned rework | Skills mismatch | Underestimated task
3 | EV problems | Under planned rework | Missed requirements | Missed requirements | Underestimated task | Missed requirements
4 | Missed requirements | Planned work not performed | Under planned rework | EV problems | Missed requirements | Unexpected departure of personnel
5 | Skills mismatch | Underestimated task | Asset availability | Planned work not performed | Unexpected departure | EV problems
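The slide does not define "co-optimized". One plausible reading, sketched here under that assumption, is to rank cause codes by impact within each view (schedule, effort, organization slices) and then favor the causes that reach the top ranks in several views at once. All names and impact figures below are hypothetical.

```python
from collections import Counter


def pareto_rank(impact_by_cause, top=5):
    """Return the `top` cause codes ordered by descending impact."""
    ranked = sorted(impact_by_cause.items(), key=lambda kv: kv[1], reverse=True)
    return [cause for cause, _ in ranked[:top]]


def co_optimized(views, top=5):
    """Count in how many views each cause reaches the top ranks."""
    tally = Counter()
    for impact_by_cause in views.values():
        tally.update(pareto_rank(impact_by_cause, top))
    return tally.most_common()


# Hypothetical variance impact attributed to each cause code, per view.
views = {
    "schedule": {"Underestimated task": 120, "Tools": 80,
                 "EV problems": 60, "Missed requirements": 40},
    "effort": {"Tools": 300, "Missed requirements": 150,
               "Underestimated task": 90, "Skills mismatch": 20},
}
print(pareto_rank(views["schedule"], top=3))
print(co_optimized(views, top=3))
```

Causes with the highest tally are candidates for improvement goals because addressing them should pay off in more than one view.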


SMART Schedule Variance Goal

Reduce the total variance by decreasing the variance of the top 3 internal causes by 50% in 1 year.

Reduce the impact of external causes by 50%.

Indicators:
• Trend for each cause independently
• Trend for total variance

Will focusing on these causes give us bottom-line results?
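One way to approach the closing question is simple arithmetic: if each cause's contribution to total variance is known, halving the targeted causes removes half of their combined share. The sketch below assumes contributions are additive and uses made-up figures; the cause names echo the case study, but the numbers do not come from it.

```python
def projected_reduction(days_by_cause, targeted, cut=0.5):
    """Return the fraction of total variance removed if each targeted cause is cut."""
    total = sum(days_by_cause.values())
    removed = sum(days_by_cause[cause] * cut for cause in targeted)
    return removed / total


# Hypothetical schedule variance (calendar days) attributed to each cause code.
days_by_cause = {
    "Underestimated task": 40,
    "EV method problem": 25,
    "Missed requirements": 15,
    "External": 10,
    "Other": 10,
}
top3 = ["Underestimated task", "EV method problem", "Missed requirements"]
print(f"{projected_reduction(days_by_cause, top3):.0%} of total variance removed")
```

If the targeted causes account for only a small share of the total, even a 50% cut in each will barely move the bottom line, which is why the slide tracks the total trend alongside the per-cause trends.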


Schedule Variance Root Cause 1

Cause Code: Underestimated tasks
Process: Project Management
Subprocesses:
• Planning
  - Establish requirements
  - Define project process
  - Perform detailed planning
• Requirements Management

As subprocesses are explored, process mapping may be used with (or based on) ETVX diagrams

CMMI Friendly
Six Sigma Friendly


Schedule Variance Root Cause 2

Root causes of common cause variation
• Inexperience in estimation process
• Flawed resource allocation
• Estimator inexperience in product (system)
• Requirements not understood

Root causes of special cause variation
• Too much multitasking
• Budget issues


Improving Sampling Homogeneity

Overall rollup:
• group data by project milestones

Within project:
• identify control limits for each development phase
• compare each project’s phase against the history of similar projects in that same phase
• robust sample for limit calculations is critical

[Figure: chart “ALQ184 project cost ind”, project cost index (-10 to 10) by month (1 to 15), with wider limits for projects in planning phase and narrower limits for projects in execution phase.]
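Computing separate limits for each phase can be sketched with an individuals (XmR) chart, one of the control chart types the course names. The phase names and history values below are hypothetical; 2.66 is the standard XmR constant (3 divided by d2 = 1.128 for moving ranges of two points).

```python
from statistics import mean


def xmr_limits(values):
    """Return (lcl, center, ucl) for an individuals chart."""
    if len(values) < 2:
        raise ValueError("need at least two observations")
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    center = mean(values)
    mr_bar = mean(moving_ranges)
    # 2.66 = 3 / d2, with d2 = 1.128 for moving ranges of size 2.
    return center - 2.66 * mr_bar, center, center + 2.66 * mr_bar


def limits_by_phase(history):
    """Compute separate limits for each development phase."""
    return {phase: xmr_limits(values) for phase, values in history.items()}


# Hypothetical cost index values from similar past projects, by phase.
history = {
    "planning": [-6.0, 4.0, -3.0, 5.0, -4.0, 6.0],
    "execution": [-1.0, 0.5, -0.5, 1.0, 0.0, -1.0],
}
for phase, (lcl, center, ucl) in limits_by_phase(history).items():
    print(f"{phase}: LCL={lcl:.2f} center={center:.2f} UCL={ucl:.2f}")
```

With these illustrative inputs the planning-phase limits come out wider than the execution-phase limits, mirroring the chart on the slide. With only a handful of points per phase the limits are unstable, which is the slide's point about needing a robust sample.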


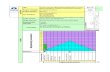

EV Estimate-At-Completion Model

Earned Value Chart

0

2000

4000

6000

8000

10000

12000

14000

16000

18000

10/1

/200

1

11/1

/200

1

12/1

/200

1

1/1/

2002

2/1/

2002

3/1/

2002

4/1/

2002

5/1/

2002

6/1/

2002

7/1/

2002

8/1/

2002

9/1/

2002

10/1

/200

2

11/1

/200

2

12/1

/200

2

1/1/

2003

2/1/

2003

3/1/

2003

4/1/

2003

5/1/

2003

6/1/

2003

7/1/

2003

8/1/

2003

9/1/

2003

10/1

/200

3

11/1

/200

3

12/1

/200

3

1/1/

2004

2/1/

2004

3/1/

2004

4/1/

2004

Month

Ho

urs

EAC

BCWS

BCWP

ACWP

EAC Line

10% Upper Bounds

10% Lower Bounds

20% Upper Bounds

20% Lower Bounds

UCL

LCL

Process UCL, Effort and Schedule

Process LCL, Effort and Schedule

Internal Spec Limits. 20%

and 10%

Calculated Estimate at Completion

Baseline Estimate at Completion
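The chart compares a calculated estimate at completion (EAC) against a baseline and against 10% and 20% internal spec bands. The slide does not state how the authors computed EAC; the sketch below uses one common earned value convention, EAC = BAC / CPI with CPI = BCWP / ACWP, and all numbers and function names are hypothetical.

```python
def estimate_at_completion(bac, bcwp, acwp):
    """Return a CPI-based EAC: budget at completion divided by CPI."""
    if bcwp <= 0 or acwp <= 0:
        raise ValueError("BCWP and ACWP must be positive")
    cpi = bcwp / acwp  # cost performance index
    return bac / cpi


def spec_band(baseline_eac, pct):
    """Return (lower, upper) bounds at +/- pct percent of the baseline EAC."""
    delta = baseline_eac * pct / 100.0
    return baseline_eac - delta, baseline_eac + delta


def within_band(eac, baseline_eac, pct):
    """Return True if the calculated EAC lies inside the +/- pct band."""
    low, high = spec_band(baseline_eac, pct)
    return low <= eac <= high


# Hypothetical project status, in hours.
baseline_eac = 16000
eac = estimate_at_completion(bac=16000, bcwp=4000, acwp=5000)
print(f"calculated EAC: {eac:.0f} hours")
for pct in (10, 20):
    print(f"within {pct}% band: {within_band(eac, baseline_eac, pct)}")
```

Recomputing the EAC each reporting period and plotting it against the bands gives a project the kind of early warning the prediction model is meant to provide.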


Management by Fact (see handout)

Reduce the total schedule variance by decreasing the variance of the top 3 internal causes by 50% in 1 year.

Total variance w/mean comparison

Variance for top 3 causes:
• Underestimated Tasks
• EV Method Problem
• Missed Requirements

Prioritization & Root Cause
• Inexperience
• Resource Allocation
• Requirements not understood
• ….

Counter Measures
First: Gather real-time data and verify “data archaeology”
Then:
• ….
• …

Impact, Capability
In total, these countermeasures will remove 15% of typical variance. (as possible, list impact of each countermeasure)

[Figures: two charts of percent schedule variance by month (months 1 to 5): one scaled 0 to 30, one scaled 0 to 9 with series Cause 1, Cause 2, Cause 3.]


Case Study 1: The Connections

CMMI
• Process Areas* used: MA, OPP, QPM, OID, CAR
• Process Areas touched: PP, PMC, RD, REQM
• Terms addressed: Baseline, process performance model

Measurement Best Practices
• Indicator template key component of measurement plan

Six Sigma
• Problem-solving approach influenced design and definition of measurement & analysis processes
• Used MBF as an organizational innovation
• Indicator templates added as a domain-specific tool to the Six Sigma toolkit

*See Addenda for list of CMMI Process Areas


Case Study: Skills-Building

In-class practice: statistical skills-building
• Boxplots
• Tukey-Kramer
• Adapted FMEA

In-class discussions and other exercises
• Risks of using historical data
• Small sample sizes and homogeneous sampling
• Corrective action guidance (as part of indicator template, esp. for SPC charts)
• Evaluate and rewrite goals for SMARTness


Key Points

Effective training is a critical part of your process improvement approach.

Training can and should be “integrated” (as appropriate).

Measurement & Analysis is an effective platform for integration.
• It is a common root!

Integrated approaches to training are win-win propositions.
• If you are a Six Sigma adopter, you get exposure to domain-specific technologies that will help solve your problems.
• If you are a CMMI adopter, you learn to better leverage the most current body of knowledge for problem-solving.
• If you are adopting both, you spend less time making the connections and more time making progress!


Contact Information

Dave Hallowell
Six Sigma Advantage
Email: [email protected]

Jeannine Siviy
Software Engineering Institute
Measurement & Analysis Initiative
Email: [email protected]


References

[BPD] Process Maturity / Capability Maturity, http://www.betterproductdesign.net/maturity.htm (a resource site for the Good Design Practice program, a joint initiative between the Institute for Manufacturing and the Engineering Design Centre at the University of Cambridge, and the Department of Industrial Design Engineering at the Royal College of Art (RCA) in London)

[CIO 04] Anthes, Gary H., Quality Model Mania, CIO Magazine, 8 March 2004, http://www.computerworld.com/developmenttopics/development/story/0,10801,90797,00.html

[Forrester] Forrester, Eileen, Transition Basics (reference information)

[Gruber] Gruber, William H., and Marquis, Donald G., Eds., Factors in the Transfer of Technology, 1965.

[Kawakita] Kawakita, Jiro, The Original KJ Method, Kawakita Research Institute

[Moore] Moore, Geoffrey, Crossing the Chasm: Marketing and Selling Technology Products to Mainstream Customers, Harper Business, 1991.

[Schon] Schon, Donald A., Technology and Change: The New Heraclitus, 1967.

[Shiba] Shiba, Shoji, et al., New American TQM: Four Practical Revolutions in Management, Productivity Press, 1993.

All URLs subject to change


Addenda

CMMI Process Areas and Structure


CMMI Process Areas

Category: Process Areas

Project Management
• Project Planning (PP)
• Project Monitoring and Control (PMC)
• Supplier Agreement Management (SAM)
• Integrated Project Management
• Risk Management
• Quantitative Project Management (QPM)

Engineering
• Requirements Management
• Requirements Development
• Technical Solution
• Product Integration
• Verification
• Validation

Support
• Configuration Management
• Process and Product Quality Assurance
• Measurement and Analysis (MA)
• Causal Analysis and Resolution
• Decision Analysis and Resolution

Process Management
• Organizational Process Focus
• Organizational Process Definition
• Organizational Training
• Organizational Process Performance
• Organizational Innovation and Deployment


CMMI Structure

CMMI-SE/SW Staged
• Front Matter: Introduction; Structure of the Model; Model Terminology; Maturity Levels, Common Features, and Generic Practices; Understanding the Model; Using the Model
• Maturity Level 2: REQM, PP, PMC, SAM, MA, PPQA, CM
• Maturity Level 3: REQD, TS, PI, VER, VAL, OPF, OPD, OT, IPM, RSKM, DAR
• Maturity Level 4: OPP, QPM
• Maturity Level 5: OID, CAR
• Appendixes

CMMI-SE/SW Continuous
• Front Matter: Introduction; Structure of the Model; Model Terminology; Capability Levels and Generic Model Components; Understanding the Model; Using the Model
• Process Management: OPF, OPD, OT, OPP, OID
• Project Management: PP, PMC, SAM, IPM, RSKM, QPM
• Engineering: REQM, REQD, TS, PI, VER, VAL
• Support: CM, PPQA, MA, CAR, DAR
• Appendixes

Process Management PAs - Goals - Practices


CMMI-SE/SW/IPPD/A - Continuous

Process Management
• Organizational Process Focus
• Organizational Process Definition
• Organizational Training
• Organizational Process Performance
• Organizational Innovation and Deployment

Project Management
• Project Planning
• Project Monitoring and Control
• Supplier Agreement Mgmt.
• Integrated Project Mgmt.
• Risk Management
• Quantitative Project Mgmt.

Engineering
• Requirements Management
• Requirements Development
• Technical Solution
• Product Integration
• Verification
• Validation

Support
• Configuration Mgmt.
• Process and Product Quality Assurance
• Measurement & Analysis
• Decision Analysis and Resolution
• Causal Analysis and Resolution

IPPD
• Organizational Environment for Integration
• Integrated Team

Acquisition
• Supplier Selection and Monitoring
• Integrated Supplier Management
• Quantitative Supplier Management
