1 A Fast Deterministic Parser for Chinese Mengqiu Wang, Kenji Sagae and Teruko Mitamura Language Technologies Institute School of Computer Science Carnegie Mellon University

Welcome message from author

This document is posted to help you gain knowledge. Please leave a comment to let me know what you think about it! Share it to your friends and learn new things together.

Transcript

1

A Fast Deterministic Parser for Chinese

Mengqiu Wang, Kenji Sagae and Teruko MitamuraLanguage Technologies Institute

School of Computer Science

Carnegie Mellon University

2

Outline of the talk

Background Deterministic parsing model Classifier and feature selection POS tagging Experiment and results Discussion and future work Conclusion

3

Background

Constituency parsing is one of the most fundamental tasks in NLP.

State-of-the-art accuracy previously reported in Chinese constituency parsing achieves precision and recall in the lower 80% using automatically generated POS.

Most literature in parsing only reports accuracy, efficiency is typically ignored

But in reality, parsers are deemed too slow for many NLP applications (e.g. IR, QA, web-based IX)

4

Deterministic Parsing Model

Originally developed in [Sagae and Lavie 2005] for English Input

Convention in deterministic parsing assumes input sentences (Chinese in our case) are already segmented and POS tagged1.

Main Data Structure A queue, to store input word-POS pairs A stack, holds partial parse trees

Trees are lexicalized. We used the same head-finding rules as [Bikel 2004]

The Parser performs binary Shift-Reduce actions based on classifier decisions.

Example …

1. We perform our own POS tagging based on SVM

5

Deterministic Parsing Model Cont.

Input sentence:

布朗 /NR (Brown/Proper Noun) 访问 /VV (Visits/Verb) 上海 /NR (Shanghai/Proper Noun)

Initial parser state:

Stack: ΘQueue: NR

布朗

VV

访问

NR

上海(Brown) (Visits) (Shanghai)

6

Deterministic Parsing Model Cont.

Classifier output 1: Shift Action Parser State:

Stack:

Queue:

NR

布朗

VV

访问

NR

上海

(Brown)

(Visits) (Shanghai)

7

Deterministic Parsing Model Cont.

Action 2: Reduce the first item on stack to a NP node, with node (NR 布朗 ) as the head

Parser State:

Stack:

Queue: VV

访问NR

上海

NR

布朗

NP (NR 布朗 )

(Brown)

(Visits) (Shanghai)

8

Deterministic Parsing Model Cont.

Action 3: Shift Parser State:

Stack:

Queue:

VV

访问

NR

上海

NR

布朗

NP (NR 布朗 )

(Brown)

(Visits)

(Shanghai)

9

Deterministic Parsing Model Cont.

Action 4: Shift Parser State:

Stack:

Queue: Θ

VV

访问

NR

上海NR

布朗

NP (NR 布朗 )

(Brown)

(Visits) (Shanghai)

10

Deterministic Parsing Model Cont.

Action 5: Reduce the top item on stack to a NP node, with node (NR 上海 ) as the head

Parser State:

Stack:

Queue: Θ

VV

访问NR

布朗

NP (NR 布朗 )

NR

上海

NP (NR 上海 )

(Brown)

(Visits)

(Shanghai)

11

Deterministic Parsing Model Cont.

Action 6: Reduce the top two items on stack to a VP node, with node (VV 访问 ) as the head

Parser State:

Stack:

Queue: Θ

NR

布朗

NP (NR 布朗 )

VV

访问 NR

上海

NP (NR 上海 )

VP (VV 访问 )

(Brown)(Visits)

(Shanghai)

12

Deterministic Parsing Model Cont.

Action 7: Reduce the top two items on stack to an IP node, take the head node of the VP subtree as the head -- (VV 访问 ).

Parser State:

Stack:

Queue: Θ

NR

布朗

NP (NR 布朗 )

VV

访问 NR

上海

NP (NR 上海 )

VP (VV 访问 )

VP (VV 访问 )

(Brown)(Visits)

(Shanghai)

13

Deterministic Parsing Model Cont.

Parsing terminates when queue is empty and stack only contains one item

Final parse tree:

NP (NR 上海 )

NR

布朗

NP (NR 布朗 )

VV

访问 NR

上海

VP (VV 访问 )

VP (VV 访问 )

(Brown)(Visits)

(Shanghai)

14

Classifiers

Classification is the most important part of deterministic parsing. It determines constituency label of each tree node in the final parse tree.

We experimented with four different classifiers: SVM classifier

-- finds a hyper-plane that gives the maximum soft margin that minimizes the expected risk.

Maximum Entropy Classifier -- estimates a set of parameters that would maximize the entropy over distributions that satisfy certain constraints which force the model to best account for the training data.

Decision Tree Classifier -- We used C4.5 [Quinlan 1993]

Memory-based Learning -- kNN classifier, Lazy learner, short training time

15

Features

The features we used are distributionally derived or linguistically motivated.

Each feature carries information about the context of a particular parse state.

We denote the top item on the stack as S(1), and second item (from the top) on the stack as S(2), and so on. Similarly, we denote the first item on the queue as Q(1), the second as Q(2), and so on.

16

Features

Boolean features indicating presence of punctuations, queue emptiness, last parser action, number of words in constituents, headwords and POS, root nonterminal symbol, dependency among tree nodes, tree path information, relative position.

Rhythmic features [Sun and Jurafsky 2004].

17

POS tagging

In our model, POS tagging is treated as a separate problem and is done prior to parsing.

But we care about the performance of the parser in realistic situations with automatically generated POS tags.

We implemented a simple 2-pass POS tagging model based on SVM, achieved 92.5% accuracy.

18

Experiments

Standard Chinese Treebank data collection Training set: section 1-270 of CTB 2.0 (3484 sentences,

84873 words). Development set: section 301-326 of CTB 2.0 Testing set: section 271-300 of CTB 2.0 Total: 99629 words, about 1/10 of the size of English Penn

Treebank. Standard corpus preparation

Empty nodes were removed Functional label of nonterminal nodes removed.

Eg. NP-Subj -> NP For scoring we used the evalb1 program. Labeled

recall, labeled precision and F1 (harmonic mean) measures are reported.

1. http://nlp.cs.nyu.edu/evalb

19

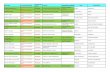

Results

Comparison of classifiers on development set using gold-standard POS

classification Parsing Accuracy

Model Accuracy LR LP F1 Fail Time

SVM 94.3% 86.9% 87.9% 87.4% 0 3m 19s

Maxent 92.6% 84.1% 85.2% 84.6% 5 0m 21s

DTree1 92.0% 78.8% 80.3% 79.5% 42 0m 12s

DTree2 - 81.6% 83.6% 82.6% 30 0m 18s

MBL 90.6% 74.3% 75.2% 74.7% 2 16m 11s

20

Classifier Ensemble

Using stacked-classifier techniques, we improved the performance on the dev set from 86.9% and 87.9 for LR and LP, to 90.3% and 90.5%. a 3.4% improvement in LR and a 2.6%

improvement in LP over the SVM model.

21

Comparison with related work

Results on test set using automatically generated POS.

<= 40 words <= 100 words

LR LP F1 POS LR LP F1 POS

Traditional probabilistic parsers for Chinese

Bikel & Chiang 2000 76.8% 77.8% 77.3% - 73.3% 74.6% 74.0% -

Levy & Manning 2003 79.2% 78.4% 78.8% - - - - -

Xiong et al. 2005 78.7% 80.1% 79.4% - - - - -

Bikel’s Thesis 2004 78.0% 81.2% 79.6% - 74.4% 78.5% 76.4% -

Chiang & Bikel 2002 78.8% 81.1% 79.9% - 75.2% 78.0% 76.6% -

Jiang 2004 80.1% 82.0% 81.1% 92.4% - - - -

Sun & Jurafsky 2004 85.5% 86.4% 85.9% - 83.3% 82.2% 82.7% -

Deterministic parser in this work

DTree model 70.0% 74.6% 72.2% 92.5% 69.2% 74.5% 71.9% 92.2%

SVM model 78.1% 81.1% 79.6% 92.5% 75.5% 78.5% 77.0% 92.2%

Stacked Classifier 79.2% 81.1% 80.1% 92.5% 76.7% 78.4% 77.5% 92.2%

22

Comparison with related work cont.

Comparison of parsing speed

Model Runtime

Bikel 54m 6s

Levy & Manning 8m 12s

DTree 0m 14s

Maxent 0m 24s

SVM 3m 50s

23

Discussion and future work

Deterministic parsing framework opens up lots of opportunities for continuous improvement in applying machine learning techniques

Eg. Experiment with other classifiers and classifier ensemble techniques.

Experiment with degree-2 features for Maxent model, which may give close performance to the SVM model with a faster speed

24

Conclusion

We presented a first work on deterministic approach to Chinese constituency parsing.

We achieved comparable results to the state-of-the-art in Chinese probabilistic parsing.

We demonstrated deterministic parsing is a viable approach to fast and accurate Chinese parsing.

Very fast parsing is made possible for applications that are speed-critical with some tradeoff in accuracy.

Advances in machine learning techniques can be directly applied to parsing problem, opens up lots of opportunities for further improvement

25

Reference

Daniel M. Bikel and David Chiang. 2000. Two statistical parsing models applied to the Chinese Treebank. In Proceedings of the Second Chinese Language Processing Workshop.

Daniel M. Bikel. 2004. On the Parameter Space of Generative Lexicalized Statistical Parsing Models. Ph.D. thesis, University of Pennsylvania.

David Chiang and Daniel M. Bikel. 2002. Recovering latent information in treebanks. In Proceedings of the 19th International Conference on Computational Linguistics.

Michael John Collins. 1999. Head-driven Statistical Models for Natural Langauge Parsing. Ph.D. thesis, University of Pennsylvania.

Walter Daelemans, Jakub Zavrel, Ko van der Sloot, and Antal van den Bosch. 2004. Timbl: Tilburgmemory based learner, version 5.1, reference guide. Technical Report 04-02, ILK Research Group, Tilburg University.

Pascale Fung, Grace Ngai, Yongsheng Yang, and Benfeng Chen. 2004. A maximum-entropy Chinese parser augmented by transformation-based learning. ACM Transactions on Asian Language Information Processing, 3(2):159–168.

Mary Hearne and Andy Way. 2004. Data-oriented parsing and the Penn Chinese Treebank. In Proceedings of the First International Joint Conference on Natural Language Processing.

Zhengping Jiang. 2004. Statistical Chinese parsing. Honours thesis, National University of Singapore. Zhang Le, 2004. Maximum Entropy Modeling Toolkit for Python and C++. Reference Manual. Roger Levy and Christopher D. Manning. 2003. Is it harder to parse Chinese, or the Chinese Treebank? In

Proceedings of the 41st Annual Meeting of the Association for Computational Linguistics. Xiaoqiang Luo. 2003. A maximum entropy Chinese character-based parser. In Proceedings of the 2003

Conference on Empirical Methods in Natural Language Processing. David M. Magerman. 1994. Natural Language Parsing as Statistical Pattern Recognition. Ph.D. thesis,

Stanford University. Quinlan,J.R.: C4.5: Programs for Machine Learning Morgan Kauffman, 1993 Kenji Sagae and Alon Lavie. 2005. A classifier-based parser with linear run-time complexity. In Proceedings

of the Ninth International Workshop on Parsing Technology. Deyi Xiong, Shuanglong Li, Qun Liu, Shouxun Lin, and Yueliang Qian. 2005. Parsing the Penn Chinese

Treebank with semantic knowledge. In International Joint Conference on Natural Language Processing 2005.

Related Documents