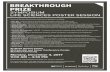

Poster Session - Day 1

L4DC 2021

June 7, 2021

Session 1.A

Watch Poster Previews on YouTube

Situation Plan

List of Posters

2: A. Sonar, V. Pacelli, A. Majumdar, "Invariant Policy Optimization: Towards Stronger Generalization in Reinforcement Learning"

4: T. T. Doan, "Nonlinear Two-Time-Scale Stochastic Approximation: Convergence and Finite-Time Performance"

7: K. Akuzawa, Y. Iwasawa, Y. Matsuo, "Estimating Disentangled Belief about Hidden State and Hidden Task for Meta-Reinforcement Learning"

8: L. Ferrarotti, V. Breschi, A. Bemporad, "The benefits of sharing: a cloud-aided performance-driven framework to learn optimal feedback policies"

11: M. Booker, A. Majumdar, "Learning to Actively Reduce Memory Requirements for Robot Control Tasks"

23: L. P. Fröhlich, M. N. Zeilinger, E. D. Klenske, "Cautious Bayesian Optimization for Efficient and Scalable Policy Search"

27: B. Legat, R. M. Jungers, J. Bouchat, "Abstraction-based branch and bound approach to Q-learning for hybrid optimal control"

30: L. Dörschel, D. Stenger, D. Abel, "Safe Bayesian Optimisation for Controller Design by Utilising the Parameter Space Approach"

37: L. Zheng, Y. Shi, L. J. Ratliff, B. Zhang, "Safe Reinforcement Learning of Control-Affine Systems with Vertex Networks"

51: A. A. Ahmadi, A. Chaudhry, V. Sindhwani, S. Tu, "Safely Learning Dynamical Systems from Short Trajectories"

59: C. Ebenbauer, F. Pfitz, S. Yu, "Control of Unknown (Linear) Systems with Receding Horizon Learning"

62: J. Zhang, Z. Yang, Z. Zhou, Z. Wang, "Provably Sample Efficient Reinforcement Learning in Competitive Linear Quadratic Systems"

77: N. Zhang, N. Capel, "LEOC: A Principled Method in Integrating Reinforcement Learning and Classical Control Theory"

78: F. Zhao, K. You, "Primal-dual Learning for the Model-free Risk-constrained Linear Quadratic Regulator"

91: A. Mete, R. Singh, X. Liu, P. R. Kumar, "Reward Biased Maximum Likelihood Estimation for Reinforcement Learning"

95: D. E. Ochoa, J. I. Poveda, A. Subbaraman, G. S. Schmidt, F. R. Pour-Safaei, "Accelerated Concurrent Learning Algorithms via Data-Driven Hybrid Dynamics and Nonsmooth ODEs"

100: P. Massiani, S. Heim, S. Trimpe, "On exploration requirements for learning safety constraints"

104: J. Xu, B. Lee, N. Matni, D. Jayaraman, "How Are Learned Perception-Based Controllers Impacted by the Limits of Robust Control?"

112: A. Gahlawat, A. Lakshmanan, L. Song, A. Patterson, Z. Wu, N. Hovakimyan, E. A. Theodorou, "Contraction L1-Adaptive Control using Gaussian Processes"

113: N. Csomay-Shanklin, R. K. Cosner, M. Dai, A. J. Taylor, A. D. Ames, "Episodic Learning for Safe Bipedal Locomotion with Control Barrier Functions and Projection-to-State Safety"

114: S. Ainsworth, K. Lowrey, J. Thickstun, Z. Harchaoui, S. Srinivasa, "Faster Policy Learning with Continuous-Time Gradients"

117: Y. Li, N. Li, H. E. Tseng, A. Girard, D. Filev, I. Kolmanovsky, "Safe Reinforcement Learning Using Robust Action Governor"

119: S. Totaro, A. Jonsson, "Fast Stochastic Kalman Gradient Descent for Reinforcement Learning"

135: J. Yu, C. Gehring, F. Schäfer, A. Anandkumar, "Robust Reinforcement Learning: A Constrained Game-theoretic Approach"

Session 1.B

Watch Poster Previews on YouTube

Situation Plan

List of Posters

13: L. Xu, M. S. Turan, B. Guo, G. Ferrari-Trecate, "Non-conservative Design of Robust Tracking Controllers Based on Input-output Data"

16: A. Alanwar, A. Koch, F. Allgöwer, K. H. Johansson, "Data-Driven Reachability Analysis Using Matrix Zonotopes"

19: A. Xue, N. Matni, "Data-Driven System Level Synthesis"

26: F. Bünning, A. Schalbetter, A. Aboudonia, M. Hudoba de Badyn, P. Heer, J. Lygeros, "Input Convex Neural Networks for Building MPC"

29: N. Wieler, J. Berberich, A. Koch, F. Allgöwer, "Data-Driven Controller Design via Finite-Horizon Dissipativity"

36: A. von Rohr, M. Neumann-Brosig, S. Trimpe, "Probabilistic robust linear quadratic regulators with Gaussian processes"

50: J. Liang, A. Boularias, "Self-Supervised Learning of Long-Horizon Manipulation Tasks with Finite-State Task Machines"

52: Z. Wang, O. So, K. Lee, E. A. Theodorou, "Adaptive Risk Sensitive Model Predictive Control with Stochastic Search"

55: I. Proimadis, Y. Broens, R. Tóth, H. Butler, "Learning-based feedforward augmentation for steady state rejection of residual dynamics on a nanometer-accurate planar actuator system"

64: G. Pizzuto, M. Mistry, "Physics-penalised Regularisation for Learning Dynamics Models with Contact"

65: A. Lederer, A. Capone, T. Beckers, J. Umlauft, S. Hirche, "The Impact of Data on the Stability of Learning-Based Control"

82: D. Sun, M. J. Khojasteh, S. Shekhar, C. Fan, "Uncertain-aware Safe Exploratory Planning using Gaussian Process and Neural Control Contraction Metric"

84: J. Smith, M. Mistry, "ARDL - A Library for Adaptive Robotic Dynamics Learning"

92: M. Abu-Khalaf, S. Karaman, D. Rus, "Feedback from Pixels: Output Regulation via Learning-based Scene View Synthesis"

110: E. T. Maddalena, P. Scharnhorst, Y. Jiang, C. N. Jones, "KPC: Learning-Based Model Predictive Control with Deterministic Guarantees"

115: H. Lee, M. Bujarbaruah, F. Borrelli, "Learning How to Solve “Bubble Ball”"

122: S. J. Wang, A. M. Johnson, "Domain Adaptation Using System Invariant Dynamics Models"

123: A. Havens, G. Chowdhary, "Forced Variational Integrator Networks for Prediction and Control of Mechanical Systems"

130: A. Jain, L. Chan, D. S. Brown, A. D. Dragan, "Optimal Cost Design for Model Predictive Control"

133: S. Karamcheti, A. J. Zhai, D. P. Losey, D. Sadigh, "Learning Visually Guided Latent Actions for Assistive Teleoperation"

136: Y. Nemmour, B. Schölkopf, J. Zhu, "Approximate Distributionally Robust Nonlinear Optimization with Application to Model Predictive Control: A Functional Approach"
